AI supremacy

Incorporating technological singularities, hard AI take-offs, game-over high scores, the technium, deus-ex-machina, deus-ex-nube, nerd raptures and so forth

December 2, 2016 — March 8, 2024

Small notes on the Rapture of the Nerds. If AI keeps on improving, will explosive intelligence eventually cut humans out of the loop and go on without us? Also, crucially, would we be pensioned in that case?

The internet has opinions about this.

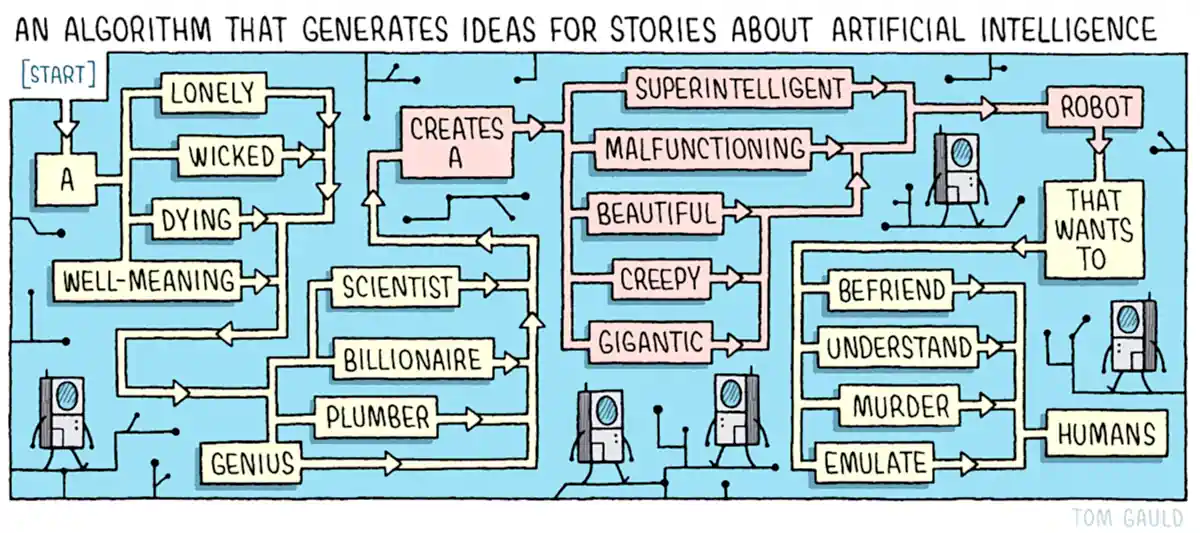

A fruitful application of these ideas is in producing interesting science fiction and contemporary horror.

1 x-risk

Incorporating other badness risks.

It is a shibboleth for the rationalist community to express the opinion that the risks of a possible AI explosion are under-managed compared to the risks of more literal explosions. Also, to wonder whether an AI singularity has already happened and we are merely being simulated by it.

It is possible that managing, e.g., the climate crisis is on the critical path to AI takeoff, and that we are not managing that risk well; in particular, I think we are not managing its tail risks well at all.

I would like to write some wicked tail risk theory at some point.

- Katja Grace, Counterarguments to the basic AI x-risk case

- AI Impacts

2 In historical context

- Ian Morris on whether deep history says we’re heading for an intelligence explosion

- The Singularity in Our Past Light-Cone

- Economies and Empires are Artificial General Intelligences

More filed under big history.

2.1 Most-important century model

3 Technium

4 Models of AGI

Hutter’s models


AIXI [’ai̯k͡siː] is a theoretical mathematical formalism for artificial general intelligence. It combines Solomonoff induction with sequential decision theory. AIXI was first proposed by Marcus Hutter in 2000[1] and several results regarding AIXI are proved in Hutter’s 2005 book Universal Artificial Intelligence.[2]
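To make "Solomonoff induction plus sequential decision theory" concrete, here is the AIXI action-selection rule in the form it is usually quoted (I am reproducing the standard statement, not Hutter's exact notation). At step $t$ the agent picks the action that maximises expected total reward up to a horizon $m$. The alternating $\max$/$\sum$ is an expectimax over future actions $a$, observations $o$ and rewards $r$: that is the decision-theory part. The innermost sum weights every program $q$ that reproduces the interaction history on a universal Turing machine $U$ by $2^{-\ell(q)}$, where $\ell(q)$ is the program's length: that is the Solomonoff prior.

$$
a_t := \arg\max_{a_t} \sum_{o_t r_t} \cdots \max_{a_m} \sum_{o_m r_m} \left[ r_t + \cdots + r_m \right] \sum_{q \,:\, U(q,\, a_1 \ldots a_m) \,=\, o_1 r_1 \ldots o_m r_m} 2^{-\ell(q)}
$$

The inner sum ranges over all programs, so the rule is incomputable. That is why AIXI serves as a theoretical yardstick rather than something one can run.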

ecologies of minds considers evolutionary rather than optimising minds.

5 Technium stuff

6 Aligning AI

Let us consider general alignment, because I have little AI-specific to say.

7 Constraints

7.1 Compute methods

We are getting very good at efficiently using hardware (Grace 2013). AI and efficiency (Hernandez and Brown 2020) makes this clear:

We’re releasing an analysis showing that since 2012 the amount of compute needed to train a neural net to the same performance on ImageNet classification has been decreasing by a factor of 2 every 16 months. Compared to 2012, it now takes 44 times less compute to train a neural network to the level of AlexNet (by contrast, Moore’s Law would yield an 11x cost improvement over this period). Our results suggest that for AI tasks with high levels of recent investment, algorithmic progress has yielded more gains than classical hardware efficiency.
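The arithmetic behind these numbers is ordinary exponential growth: if efficiency doubles every $T$ months, then after $t$ months the gain is $2^{t/T}$. Below is a minimal sketch of that calculation. It assumes a roughly seven-year window (2012 to 2019) and takes Moore's law as a doubling every 24 months. Both figures are my assumptions rather than numbers stated in the quote, and the function names are hypothetical.

```python
import math

# Assumption (not stated in the quote): the comparison window is
# roughly seven years, i.e. 2012 to 2019.
MONTHS_ELAPSED = 7 * 12


def improvement_factor(months_elapsed: float, doubling_months: float) -> float:
    """Efficiency gain after months_elapsed if efficiency doubles every doubling_months."""
    return 2 ** (months_elapsed / doubling_months)


def implied_doubling_time(months_elapsed: float, factor: float) -> float:
    """Doubling time (in months) consistent with an overall measured gain of `factor`."""
    return months_elapsed / math.log2(factor)


# Moore's law, taken here (assumption) as a doubling every 24 months.
moore_gain = improvement_factor(MONTHS_ELAPSED, 24)
# The quoted algorithmic trend: compute needed halves every 16 months.
trend_gain = improvement_factor(MONTHS_ELAPSED, 16)
# Doubling time implied by the quoted 44x headline figure.
implied_months = implied_doubling_time(MONTHS_ELAPSED, 44)

print(f"Moore's law over {MONTHS_ELAPSED} months: {moore_gain:.1f}x")
print(f"16-month halving over {MONTHS_ELAPSED} months: {trend_gain:.1f}x")
print(f"Doubling time implied by 44x: {implied_months:.1f} months")
```

Under these assumptions, a 24-month doubling gives about 11x, which matches the quoted Moore's-law figure. The 44x headline implies a doubling time of roughly 15 months. That is in the same ballpark as the quoted 16-month trend, which is a fitted rate rather than the endpoint ratio.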

7.2 Compute hardware

TBD

8 Incoming

Henry Farrell and Cosma Shalizi, Shoggoths amongst us, connect AIs, via cosmic horror, to institutions.

Black Holes and the Intelligence Explosion | Sequoia Capital

Artificial Consciousness and New Language Models: The changing fabric of our society - DeepFest 2023

Douglas Hofstadter changes his mind on Deep Learning & AI risk (June 2023)?

Matthew Hutson, Computers ace IQ tests but still make dumb mistakes. Can different tests help?

François Chollet, The implausibility of intelligence explosion

Ground zero of the idea in fiction, perhaps, Vernor Vinge’s The Coming Technological Singularity

Stuart Russell on Making Artificial Intelligence Compatible with Humans, and an interview on various themes from his book (Russell 2019)

Attempted Gears Analysis of AGI Intervention Discussion With Eliezer

Superintelligence: The Idea That Eats Smart People (I thought that effective algorithm meta criticism was the idea that ate smart people?)

Kevin Scott argues for seeking a unifying notion of knowledge work, one that covers what both humans and machines can do (Scott 2022).

Hildebrandt (2020) argues for talking about smart tech instead of AI tech.

Everyone loves Bart Selman’s AAAI Presidential Address: The State of AI

Smart technologies | Internet Policy Review

Speaking of ‘smart’ technologies we may avoid the mysticism of terms like ‘artificial intelligence’ (AI). To situate ‘smartness’ I nevertheless explore the origins of smart technologies in the research domains of AI and cybernetics. Based in postphenomenological philosophy of technology and embodied cognition rather than media studies and science and technology studies (STS), the article entails a relational and ecological understanding of the constitutive relationship between humans and technologies, requiring us to take seriously their affordances as well as the research domain of computer science. To this end I distinguish three levels of smartness, depending on the extent to which they can respond to their environment without human intervention: logic-based, grounded in machine learning or in multi-agent systems. I discuss these levels of smartness in terms of machine agency to distinguish the nature of their behaviour from both human agency and from technologies considered dumb. Finally, I discuss the political economy of smart technologies in light of the manipulation they enable when those targeted cannot foresee how they are being profiled.

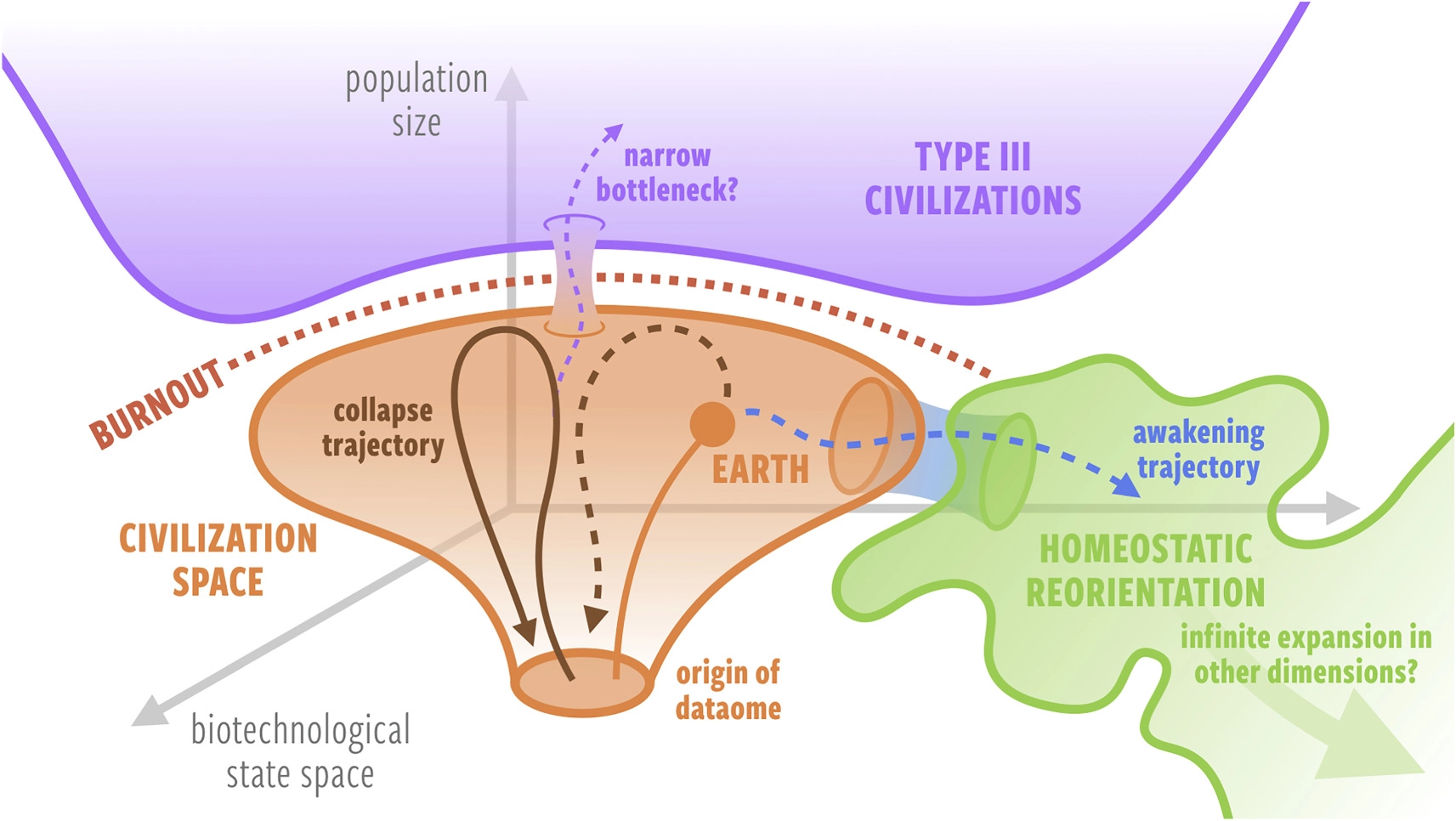

Asymptotic burnout and homeostatic awakening: a possible solution to the Fermi paradox?

TESCREALism: The Acronym Behind Our Wildest AI Dreams and Nightmares

An article about some flavours of longtermism leading to unpalatable conclusions. It goes on to frame several online communities that have entertained longtermist ideas as a “bundle”, which I think is intended to imply that these groups form a political bloc that encourages or enables accelerationist hypercapitalism.

I am not a fan of this kind of argument. If I disagree with longtermism, why not just say that? Disagreeing-with-longtermism-plus-feeling-bad-vibes-about-various-other-movements-and-philosophies-that-have-a-diverse-range-of-sometimes-tenuous-relationships-with-longtermism doesn’t feel like it is doing useful work.