Knowledge collapse and the epistemic commons

What happens to collective knowledge when individual learning becomes optional

2023-03-23 — 2026-04-21

Wherein Welfare Is Shown to Be Non-Monotone in the Precision of Agentic AI Recommendations, an Interior Optimum Being Identified Past Which Aggregate Wellbeing Is Diminished by Further Improvements in Accuracy.

tl;dr Agentic AI substitutes for individual learning effort but the general knowledge it depends on is a public good generated as a by-product of that same effort. Acemoglu, Kong, and Ozdaglar (2026) formalize this and show welfare is non-monotone in AI accuracy — there is an interior optimum, past which we are worse off. Their model admits a knowledge-collapse steady state in which the human-generated stock of general knowledge vanishes. Whether overall knowledge (human + AI-generated) also collapses depends on whether synthetic data can substitute for human learning — which we might suppose it can in some domains but not others.

Well, it’s really terribly simple, […] it works any way you want it to. You see, the computer that runs it is a rather advanced one. In fact, it is more powerful than the sum total of all the computers on this planet including—and this is the tricky part—including itself.

— Douglas Adams, Dirk Gently’s Holistic Detective Agency

How do foundation models change the economics of knowledge production? To a first-order approximation, LLMs provide a way of massively compressing collective knowledge and synthesizing the bits I need on demand. They do seem pretty good at being “nearly as smart as everyone on the internet combined.” As someone whose career has been built on interdisciplinary work, and who has often been asked to “synthesize” knowledge from different domains, I’m not sure the distinction between “synthesizing” and “generating” is clear-cut; certainly much of my publication track record is “merely” synthesizing, and it was bitter, hard work.

Using these models will test how much collective knowledge depends on our participation in boring, boilerplate grunt work, and which incentives are necessary to encourage us to produce and share our individual contributions.

1 Complement or substitute?

Is AI a bicycle or a snowmobile? The question looks different at the individual and societal scales.

Consider a doctor diagnosing a patient. She needs two kinds of knowledge: broad medical understanding (how diseases work, which treatments exist, what the literature says about drug interactions) and knowledge specific to this patient (their symptoms, history, lifestyle, preferences). Neither kind is much use without the other — knowing everything about the patient is worthless if she doesn’t understand the disease, and vice versa. And the process of learning generates both at once: reading the patient’s chart and examining them sharpens her understanding of their case and deposits a thin layer of general medical insight that could, in principle, help other doctors with other patients. Which is to say there are economies of scope in learning — the private return (context-specific knowledge) and the public by-product (general knowledge) are jointly produced.

Acemoglu, Kong, and Ozdaglar (2026) build a formal model around this structure. The private return is what motivates the effort. The public by-product is an externality that accumulates into a community’s stock of shared knowledge — but nobody internalizes it. When a software engineer diagnoses a rare production bug, the main private payoff comes from restoring their own system. Turning the insight into a maintained public write-up — on Stack Overflow, say — would benefit many future developers, but yields only a small private return.

Now introduce agentic AI. It’s good at the context-specific part: given a patient’s records, it can synthesize a personalised recommendation. This substitutes for the doctor’s own investigative effort — she doesn’t need to work as hard to reach a decent diagnosis. But general medical knowledge complements that effort: the more she understands, the higher the marginal return from examining this particular patient. So we have an opposition: agentic AI improves individual decisions right now, while eroding the learning effort that sustains the long-run stock of human-generated collective knowledge.

2 Knowledge collapse

Acemoglu, Kong, and Ozdaglar (2026) argue that this dynamic can tip into a knowledge-collapse steady state. In their baseline model — which assumes new general knowledge comes only from human effort, not from AI-generated synthetic data — when human effort is sufficiently elastic and agentic recommendations exceed an accuracy threshold, the economy converges to an equilibrium in which the human-generated stock of general knowledge vanishes entirely. No human learning, no human-generated externality, no commons. The AI can still give personalised advice, but it’s drawing on a depleted well.

The mechanism:

- Agentic AI improves → less incentive for costly human learning effort

- Less effort → less general knowledge generated as a by-product

- Less general knowledge → lower marginal return to remaining effort

- Repeat until the high-knowledge equilibrium disappears
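
The loop above can be made concrete with a deliberately crude simulation. To be clear, this is not the model of Acemoglu, Kong, and Ozdaglar (2026): the functional forms and the names `ai_strength`, `aggregation`, and `decay` are assumptions I made up to make the feedback visible. Effort rises with the knowledge stock (complementarity) and falls as the AI improves (substitution); the stock depreciates and is replenished only by effort.

```python
import math


def step(knowledge, ai_strength, aggregation=1.0, decay=0.5):
    """One period of a toy knowledge-stock update (assumed functional form)."""
    # Effort rises with the stock (complementarity) and falls with AI strength
    # (substitution). The S-shaped response is an illustrative assumption and
    # requires ai_strength > 0.
    effort = knowledge**2 / (knowledge**2 + ai_strength)
    # The stock depreciates and is replenished only as a by-product of effort.
    return (1.0 - decay) * knowledge + aggregation * effort


def long_run_knowledge(initial, ai_strength, periods=500, **kwargs):
    """Iterate the toy update and return the stock after `periods` steps."""
    knowledge = initial
    for _ in range(periods):
        knowledge = step(knowledge, ai_strength, **kwargs)
    return knowledge


def high_steady_state(ai_strength, aggregation=1.0, decay=0.5):
    """Largest positive fixed point of `step`, or None if only collapse remains."""
    # Fixed points solve K^2 - (aggregation / decay) * K + ai_strength = 0.
    ratio = aggregation / decay
    discriminant = ratio**2 - 4.0 * ai_strength
    if discriminant < 0:
        return None
    return (ratio + math.sqrt(discriminant)) / 2.0


if __name__ == "__main__":
    for ai_strength in (0.5, 2.0):
        for initial in (0.1, 1.5):
            final = long_run_knowledge(initial, ai_strength)
            print(f"ai_strength={ai_strength} initial={initial} -> {final:.4f}")
```

In this toy, a modest `ai_strength` leaves two attractors, so a community that starts with enough knowledge keeps it and one that starts with too little loses it. Past the point where `high_steady_state` returns `None`, every starting stock decays to zero. That matches the qualitative story only; none of the numbers mean anything.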

In this baseline (humans the sole source of new general knowledge), welfare is non-monotone in the accuracy of agentic AI. In their model, \(\tau_A\) measures the precision of the agentic recommendation (how good the AI’s personalised advice is), and \(\alpha > 1\) governs how elastic learning effort is — higher \(\alpha\) means effort drops off more steeply when the AI gets better. There is a finite welfare-maximising precision \(\tau_A^*\), and going past it makes everyone worse off. As \(\tau_A \to \infty\), welfare converges to zero — because there is nobody left generating the general knowledge that everything depends on.

When effort is very elastic (\(\alpha - 1 < \frac{1}{4}\)), the system admits multiple steady states: a high-knowledge one and a knowledge-collapse trap. Once \(\tau_A\) exceeds a critical threshold \(\tau_A^c\), the high-knowledge steady state vanishes and collapse becomes inevitable regardless of initial conditions.

More effective aggregation of human-generated general knowledge — more effective sharing and pooling — unambiguously raises welfare and increases resilience to collapse. The asymptotic result is a kind of scaling law: both the collapse threshold and the optimal precision grow only logarithmically in aggregation capacity, and the planner optimally stays bounded away from the tipping point (by a factor of \(1/\alpha\)).

3 Empirical signs

For some early empirical indications that AI is already substituting for human effort, see Microsoft Study Finds AI Makes Human Cognition “Atrophied and Unprepared” (Lee 2025). I have many specific qualms about the experimental question the authors are answering there, but it’s a start.

In the context of the coding Q&A platform Stack Overflow, there is now less activity, less human engagement with questions, and arguably less generation of new knowledge as experienced coders grapple with new challenges. The evidence strongly indicates this is in response to people turning to generative AI tools. The pattern seems to be similar for Wikipedia, with less article reading and generation in areas where ChatGPT is an effective substitute.

Kosmyna et al. (2025) find that using ChatGPT for writing assistance reduces users’ ability to memorize and accurately record their own arguments. The resulting output also looks much more similar across writers, raising concerns about the erosion of individual creativity and agency. Or maybe it causes all writers to converge on the truth(tm).

Invective: “Why Quora isn’t useful anymore: A.I. came for the best site on the internet” is a more popular-press version of the same story.

4 Intellectual property and incentive shifts

Historically, there was a strong incentive for open publishing. In a world where LLMs effectively use all openly published knowledge, we might see a shift towards more closed publishing, secret knowledge, and hidden data, and away from reproducible research, open-source software, and open data, since publishing those things will be more likely to erode our competitive advantage.

Generally: will we wish to share truth and science in the future, or will economic incentives push us towards a fragmentation of reality into competing narratives, each with its own private knowledge and secret sauce?

Consider the incentives for humans to opt out of the tedious work of being themselves in favour of AI emulators: The people paid to train AI are outsourcing their work… to AI. This makes models worse (Shumailov et al. 2023).

We might ask: “Which bytes did you contribute to GPT4?”

5 Organizational knowledge dynamics

There is a theory of career moats — unique value propositions only I have, which make me unsackable. I’m quite fond of Cedric Chin’s writing on this theme, which is often about developing valuable skills. But he, and organizational literature generally, acknowledges there are other ways of ensuring unsackability that are less pro-social — for example, gaining power over resources, becoming a gatekeeper, or using opaque decision-making.

Both these strategies co-exist in organizations, but I think it’s likely that LLMs, by automating skills and knowledge, tilt incentives towards the latter. In that scenario, it’s rational for us to worry less about how well we use our skills and command of open (e.g. scientific, technical) knowledge to be effective, and instead to focus on how we can privatise or sequester secret knowledge that we control exclusively if we want to show a value-add to the organization.

How would that shape an organization, especially a scientific employer? Longer term, I’d expect to see a shift (in terms both of who is promoted and how staff personally spend time) from skill development and collaboration, towards resource control, competition, and privatization: less scientific publication, less open documentation of processes, less time doing research and more time doing funding applications, more processes involving service desk tickets to speak to an expert whose knowledge resides in documents we cannot see.

This is the Acemoglu mechanism playing out at the organizational scale. The general knowledge my learning generates is an externality I don’t capture; when agentic AI devalues the private return to that learning, the externality dries up first.

6 Synthetic data, model collapse, and the feedback loop

An obvious objection to the knowledge-collapse model: can’t AI generate its own training data and sidestep the dependence on human effort entirely?

Sometimes, yes. AlphaGo Zero learned to play Go from self-play alone, with no human games at all. More recently, AI systems have discovered faster matrix multiplication algorithms and proved theorems by generating candidates and checking them against formal verification. The common thread is that these domains have an objective correctness signal — we can tell whether a proof is valid or a move wins the game without needing a human to evaluate it. In such domains, synthetic data can substitute for human-generated knowledge. The total stock of knowledge (human + AI-generated) stays positive even if humans stop contributing, because the AI keeps discovering things on its own.

Acemoglu, Kong, and Ozdaglar (2026) formalize this and find that the human contribution still collapses — the incentive problem doesn’t go away just because machines can also learn. But the total knowledge stock bottoms out above zero rather than at zero, so the welfare consequences are less dire. The qualitative picture is the same (agentic AI still crowds out human effort, the trap still exists), but the trap is shallower.

In domains without an objective correctness signal — open-ended text, policy advice, medical judgement, scientific interpretation — synthetic data is a different beast. An AI trained on its own outputs has no external check on whether it’s drifting. Recursive self-training becomes self-referential and degrades the information environment. Shumailov et al. (2023) call this model collapse: each generation of model, trained on the previous generation’s outputs, loses the tails of the distribution and converges on an increasingly bland mean.
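
The tail-losing dynamic is easy to reproduce in miniature. The following is a toy sketch, not a reproduction of the experiments in Shumailov et al. (2023): I assume the "model" is just a one-dimensional Gaussian, refit by maximum likelihood to a small sample drawn from the previous generation's fit. The function name `recursive_refit` and its parameters are mine.

```python
import random
import statistics


def recursive_refit(generations=500, sample_size=20, seed=0):
    """Refit a Gaussian to its own samples repeatedly.

    Returns the fitted standard deviation at each generation, starting with
    the original ("human") distribution at index 0.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the original data distribution
    history = [std]
    for _ in range(generations):
        # Each generation sees only samples from the previous generation's fit.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        # Maximum-likelihood (population) estimate, which is biased downward.
        std = statistics.pstdev(samples)
        history.append(std)
    return history


if __name__ == "__main__":
    history = recursive_refit()
    for generation in (0, 10, 100, 500):
        print(f"generation {generation}: std = {history[generation]:.3g}")
```

Each refit underestimates the spread slightly on average, and nothing outside the loop ever corrects it, so the fitted distribution narrows towards a point. A larger `sample_size` slows the drift but does not remove it.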

The distinction between these two failure modes matters. Model collapse is about training on one’s own outputs. Knowledge collapse is about humans opting out of generating the inputs. They reinforce each other. See also Spamularity and slop for the adversarial dimension of the same problem — the dark forest where automated entities colonise public discourse.

7 Why learn, young humans

An implication of the Acemoglu model that I keep chewing on: it may be welfare-improving to deliberately limit the accuracy of agentic AI recommendations.

Their Proposition 13 characterises an optimal two-phase policy: first a moratorium (fully suppress agentic recommendations, forcing agents to rely on their own learning, rebuilding the stock of general knowledge), then a permanent precision cap at the welfare-maximising level. Garbling — adding noise to the agentic signal — preserves learning incentives. Airlines enforce mandatory hand-flying for pilots for exactly this reason. Flight instructors call it “maintaining stick-and-rudder skills”; the Acemoglu model calls it information design. Same idea.
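
For intuition about what garbling does to precision, here is a minimal sketch under an assumption that may not match the paper's signal structure: the recommendation is the true state plus independent Gaussian noise with variance \(1/\tau_A\). Adding further independent Gaussian noise then adds variances, so the extra noise needed to hit a cap can be written down directly. The function names and the example numbers are hypothetical.

```python
def garbling_variance(tau_raw, tau_cap):
    """Variance of independent Gaussian noise that lowers precision to tau_cap.

    Assumes signal = state + Gaussian noise with variance 1 / tau_raw.
    """
    if not (0 < tau_cap <= tau_raw):
        raise ValueError("need 0 < tau_cap <= tau_raw: noise only lowers precision")
    # Variances of independent noise terms add: 1/tau_cap = 1/tau_raw + extra.
    return 1.0 / tau_cap - 1.0 / tau_raw


def garbled_precision(tau_raw, noise_variance):
    """Precision of the signal after adding independent Gaussian noise."""
    return 1.0 / (1.0 / tau_raw + noise_variance)


if __name__ == "__main__":
    extra = garbling_variance(tau_raw=10.0, tau_cap=4.0)
    print(f"extra noise variance: {extra:.3f}")
    print(f"resulting precision: {garbled_precision(10.0, extra):.3f}")
```

The asymmetry is the point: a planner can always degrade a signal down to a chosen cap, but cannot use noise to raise precision, which is why the check rejects `tau_cap > tau_raw`.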

I don’t know how to operationalise this for AI policy. “Make the AI deliberately worse” is a hard sell. But the mathematical structure says something important: the planner stays bounded away from the collapse threshold for human knowledge. There is no regime in which “as accurate as possible” is optimal, because the human learning externality is always worth preserving.

The Tragedy of the Cognitive Commons: How the Smartest AI Could Produce the Dumbest Society is a long polemical analysis of Acemoglu, Kong, and Ozdaglar (2026).

8 The optimistic channel: aggregation

One unambiguous positive in the model: increasing the effective aggregation capacity \(I\) (that is, more effective sharing and pooling of human-generated general knowledge) always raises welfare. It also shrinks the basin of attraction of the knowledge-collapse steady state, making the high-knowledge outcome more robust.

This suggests we should invest in knowledge-sharing infrastructure: open-access publishing, well-maintained Q&A platforms, Wikipedia-like commons, open data, reproducible research. The model literally says that the complement to human effort is the quality of the general-knowledge commons, not the power of the agentic recommendation.

Which brings us back to public sphere business models.

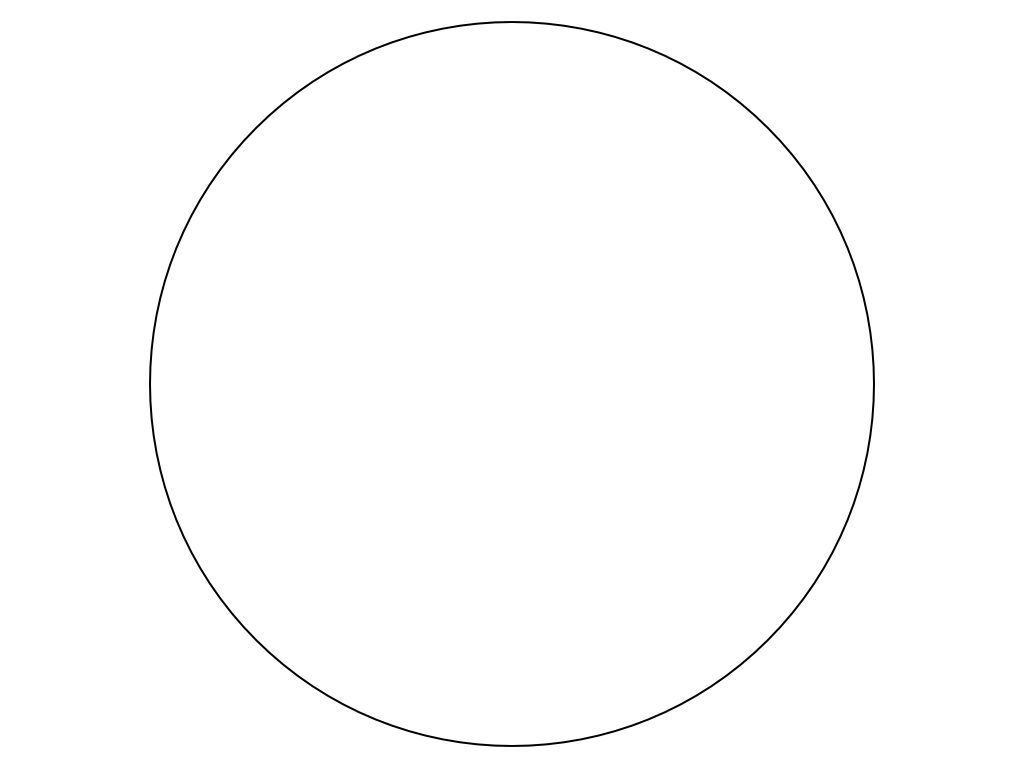

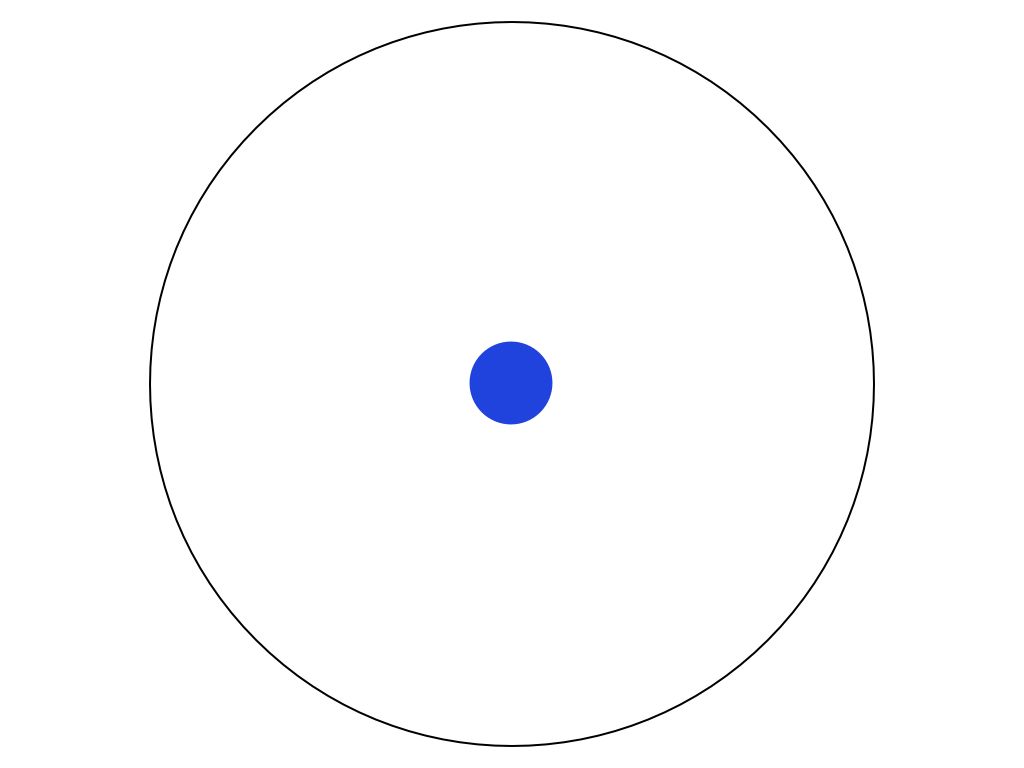

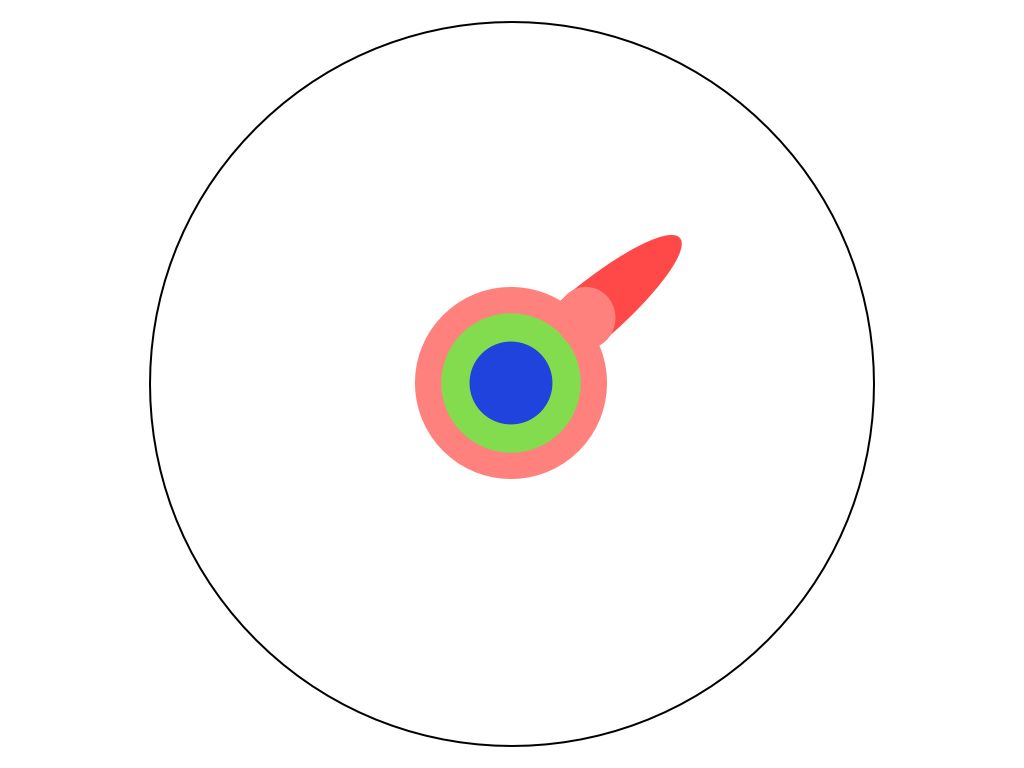

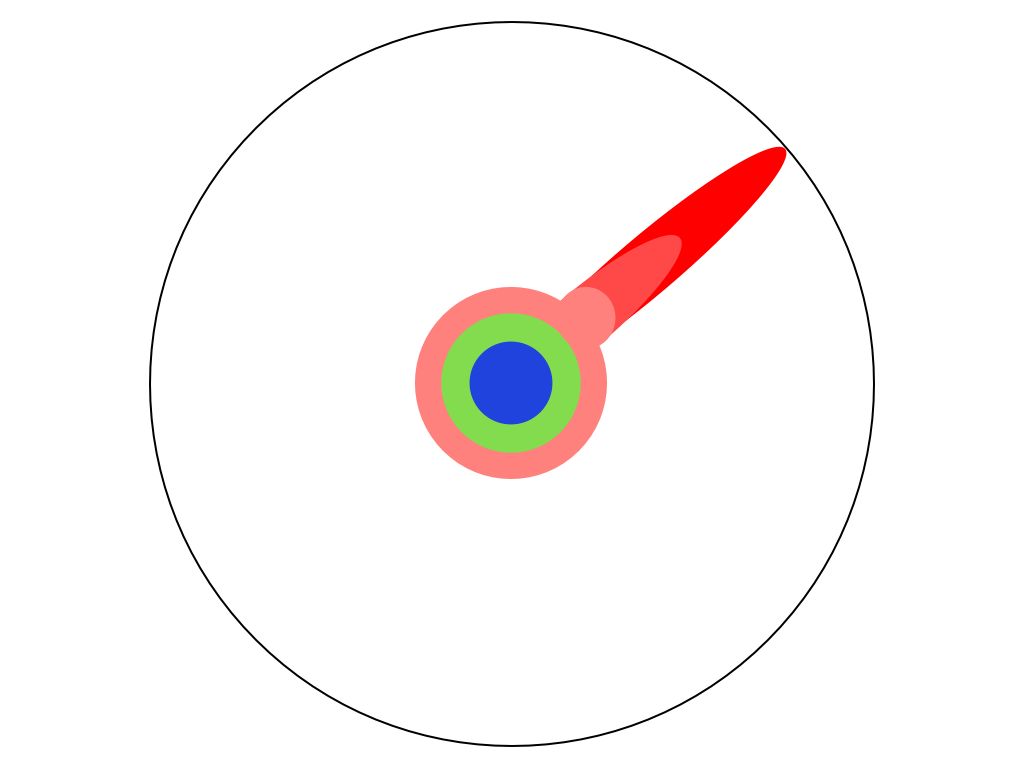

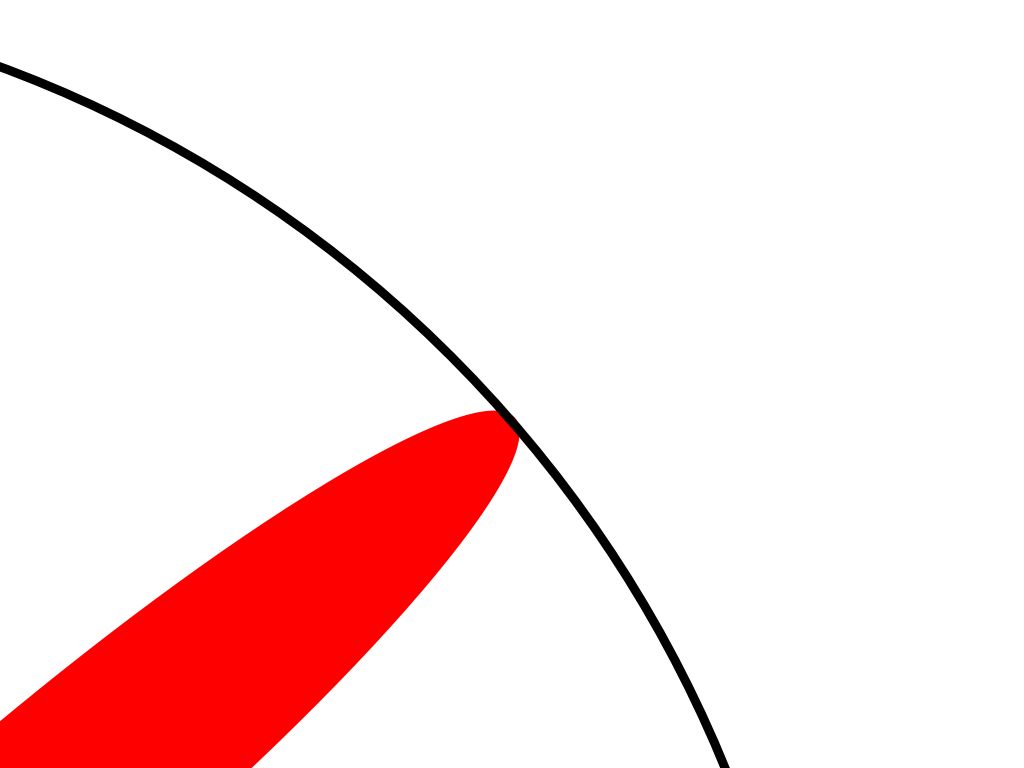

9 Matt Might’s PhD and the LLM

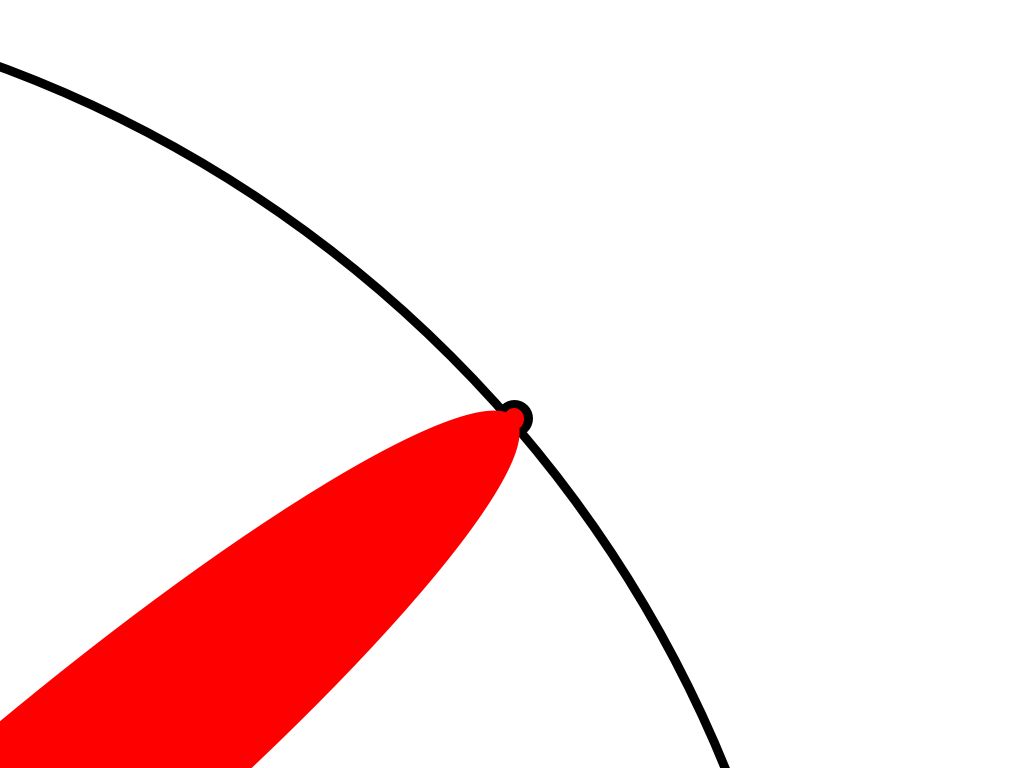

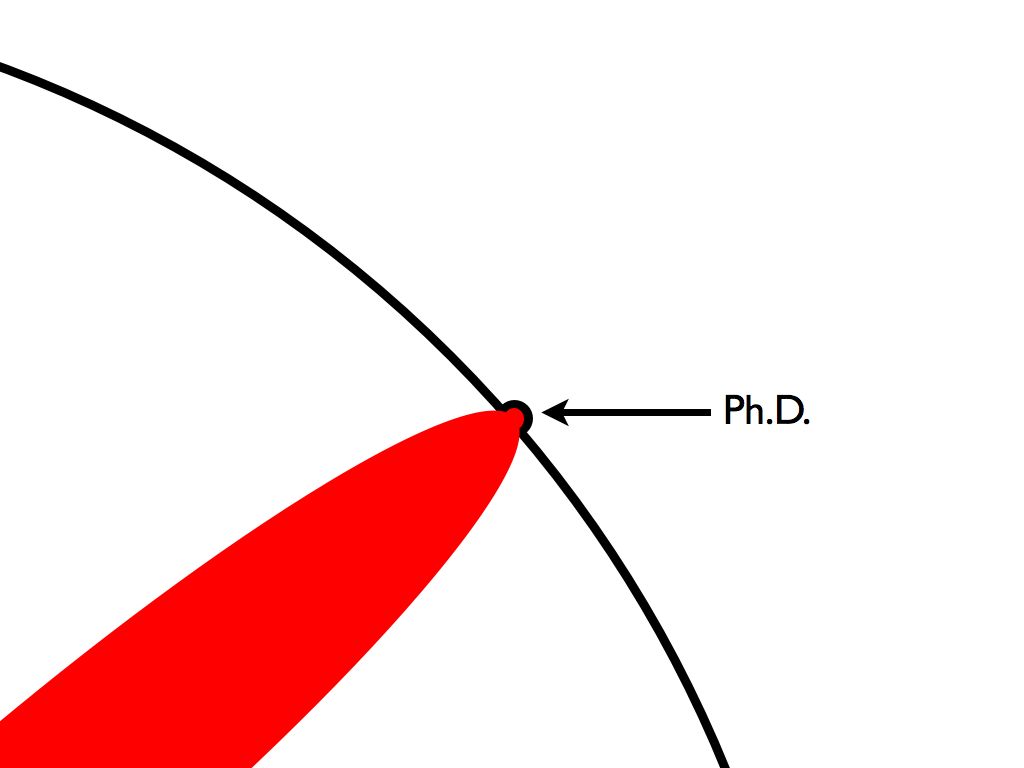

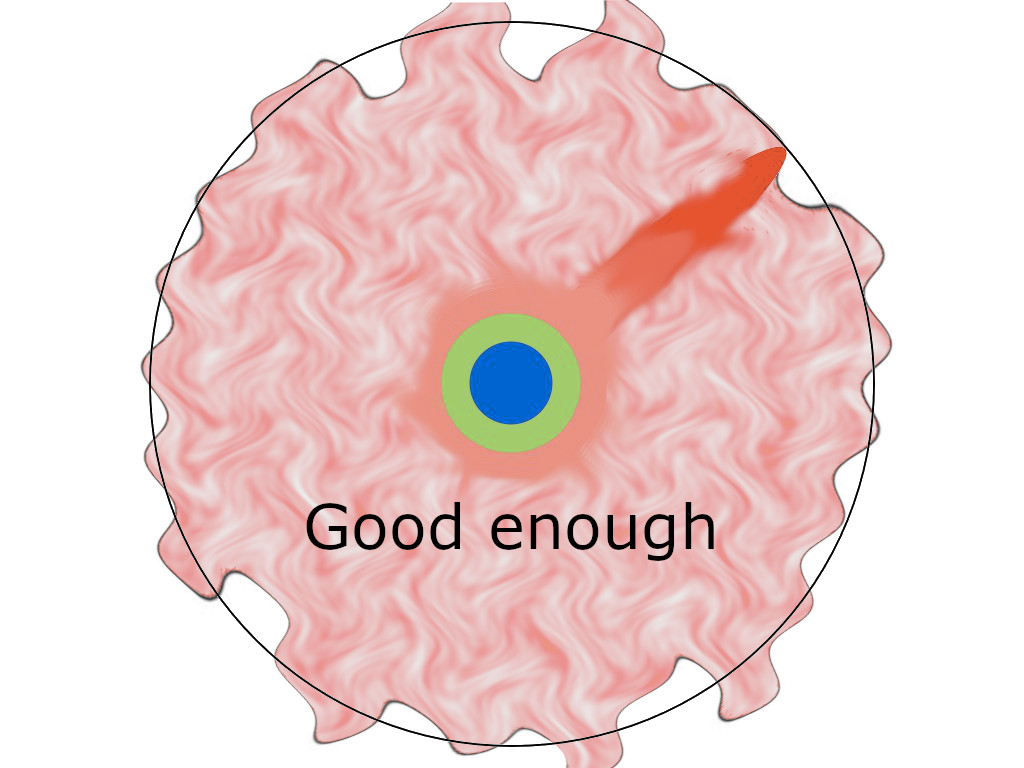

Think of Matt Might’s iconic illustrated guide to a Ph.D.

In the 2020s, does the map look like this?

If the Acemoglu model is right, the circle doesn’t just get a smooth bump outward. The LLM fills in context-specific synthesis — the stuff inside the circle — while the human effort that pushes the boundary outward (novel general knowledge, the externality nobody internalizes) starts to erode. In domains where AI can push the boundary on its own (formal proofs, games), this may not matter. In domains where it can’t (medicine, policy, the social sciences, most of what we mean by “understanding the world”), the dent we make during a PhD might still matter — but the substrate we’re pushing against is thinning, because fewer people are doing the unglamorous work of maintaining it.

10 Incoming

MALUS - Clean Room as a Service | Liberation from Open Source Attribution

Large AI models are cultural and social technologies (Farrell et al. 2025)

Greg Egan, Understudies

In a future where the rich kids are being raised with a digital Cyrano beside them to sweet-talk their way into the best jobs, four friends train together for a battle to prove that other kinds of minds might still have the edge.

I Do Not Think It Means What You Think It Means: Artificial Intelligence, Cognitive Work & Scale