Incoming links and notes

2020-03-05 — 2025-09-07

Wherein a Miscellany of Incoming Links and Notes Is Laid Out, Being Maintained as an Intermittently Updated, Disorderly Repository; Items Are Ranged From AI‑safety Papers and Preprints to Tooling Links and Assorted Curiosities.

Assumed audience:

Me

Things that I think should be noted and filed in an orderly fashion, but which I lack time to address right now. Content will change incessantly.

1 Links

Jakarta’s Remarkable Urban Transit Transformation – In Development

-

Data-Driven DJ is a series of music experiments that combine data, algorithms, and borrowed sounds.

ETHZ’s incredible historical teaching aids: E-Pics Scientific instruments

Bret Beheim

The Most Secure, Modern Computer Might Be A Mac | Hackaday (How to boot a MacBook into Asahi Linux)

Copyright reform is necessary for national security - Anna’s Blog

Reliable Sources: How Wikipedia Admin David Gerard Launders His Grudges Into the Public Record

-

All 2,242 illustrations from James Sowerby’s compendium of knowledge about mineralogy in Great Britain and beyond, drawn 1802–1817 and arranged by color.

I cannot believe how good this is you guys

-

Palisade Research is a nonprofit investigating cyber offensive AI capabilities and the controllability of frontier AI models. […] Palisade’s mission is to help people and institutions build the understanding needed to avoid permanent disempowerment by strategic AI agents.

Are extractive institutions always bad? - Aporia. Interesting literature list on extractive colonialism. The central argument is that extractive institutions are worse than inclusive institutions for the colonised, but also that they might be better than the status quo. Examples are cherry-picked, but the nuance is nice.

The Bureaucracy of Automatons | Conflated Automatons : An introduction to the notes on Confucian Software.

James Butler · Am I perhaps in Italy? Cultures of Homosexuality

Zoneless is a time zone-aware meeting planner. It shows that you cannot have a group spanning east-coast Australia, Europe and the US West Coast if you want to meet synchronously.
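A minimal sketch of the arithmetic behind that claim. The cities, the fixed standard-time UTC offsets (Sydney +10, Berlin +1, San Francisco -8) and the 09:00 to 17:00 working day are all illustrative assumptions, not anything taken from Zoneless itself, and daylight saving is ignored.

```python
# Hypothetical fixed UTC offsets in hours (standard time, no daylight saving).
OFFSETS = {"Sydney": 10, "Berlin": 1, "San Francisco": -8}


def shared_hours(offsets, start=9, end=17):
    """Return the UTC hours at which every location is inside [start, end) local time."""
    return [
        utc_hour
        for utc_hour in range(24)
        if all(start <= (utc_hour + off) % 24 < end for off in offsets.values())
    ]


print(shared_hours(OFFSETS))  # → []
# Dropping Europe leaves a narrow window for the two Pacific-rim cities.
print(shared_hours({"Sydney": 10, "San Francisco": -8}))  # → [0, 23]
```

Under these assumptions the three-way intersection is empty, while any two of the Pacific-side locations still share a couple of hours.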

Ada Palmer: Inventing the Renaissance - by Martin Sustrik on re-running the tape, forecasting, ensembles and chaos, via the papacy

Meditations on Doge - by Martin Sustrik - 250bpm (Institutional reform from a Soviet-bloc perspective)

How “95%” escaped into the world – and why so many believed it

Clavicular and Fuentes - by Aidan Walker “elder zoomers vs. the young ones”

THROUGH THE BLIND HOLE | APOCALYPSE CONFIDENTIAL “The Birth & Death of Autotrepanation”. CW: everything about this is upsetting

Variational Learning Finds Flatter Solutions at the Edge of Stability | OpenReview

Learning Latent Variable Models via Jarzynski-adjusted Langevin Algorithm | OpenReview

Understanding neural networks through sparse circuits | OpenAI

Nebari OSS data science

Olmo 3: Charting a path through the model flow to lead open-source AI | Ai2

Flutracking.net | Tracking respiratory illness across Australia and New Zealand

Gaia Network: An Illustrated Primer - by Roman Leventov/ Gaia Network: a practical, incremental pathway to Open Agency Architecture — LessWrong / GAIA Lab | AI Safety & Collective Intelligence /gaia-os/gaia_network_prototype

-

Today the GDELT Project consists of over a quarter-billion event records in over 300 categories covering the entire world from 1979 to present, along with a massive network diagram connecting every person, organization, location, theme and emotion.

A preprocessed dataset of sentiment analysis and entity recognition over global news databases back to 1979.

What drove the rise of civilizations? A decades-long quest points to warfare

Academia in AI Safety

Interesting preprint and thesis on observational uncertainty and climate sensitivity

Regression, Fire, and Dangerous Things (1/3) | Elements of Evolutionary Anthropology

Exploration hacking: can reasoning models subvert RL? — LessWrong

URL Lengthener – The Absurd Opposite of a URL Shortener. Beautiful. Transforms https://danmackinlay.name/ into https://www.namitjain.com/tools/url-lengthener/cache/that/settings/pro/nested/why/cache/version/are/keeps/turbo/🍝/🤖/thing/logs/very/if/critical/logs/temp/🦄/archive/pro/user/🤖?data=aHR0cHMlM0ElMkYlMkZkYW5tYWNraW5sYXkubmFtZSUyRg%3D%3D&utm_source=infinite&utm_medium=spaghetti&utm_campaign=hyper-elongation&cachebust=1756914577271&meta=loremipsumdolorsitamet&quote1=cogito-ergo-sum&quote2=the-unexamined-life-is-not-worth-living
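The only part of that long URL that seems to carry information is the `data` parameter. Judging from this one example (an inference, not documented behaviour of the tool), it is the percent-encoded original URL wrapped in base64. A minimal sketch that undoes it; the function name is made up and the path in the example is abbreviated for readability:

```python
import base64
from urllib.parse import parse_qs, unquote, urlsplit


def recover_original(long_url: str) -> str:
    """Decode the `data` query parameter back into the original URL.

    Assumes the scheme inferred from the example:
    base64( percent-encoded original URL ).
    """
    query = parse_qs(urlsplit(long_url).query)
    payload = query["data"][0]  # parse_qs has already turned %3D back into "="
    return unquote(base64.b64decode(payload).decode("ascii"))


# Abbreviated version of the lengthened URL; only `data` matters here.
EXAMPLE = (
    "https://www.namitjain.com/tools/url-lengthener/cache/that/settings"
    "?data=aHR0cHMlM0ElMkYlMkZkYW5tYWNraW5sYXkubmFtZSUyRg%3D%3D"
    "&utm_source=infinite&utm_medium=spaghetti"
)

print(recover_original(EXAMPLE))  # → https://danmackinlay.name/
```

Everything else in the URL (the path segments, the `utm_*` parameters) appears to be decoration.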

Replicate— “Run and fine-tune models. Deploy custom models. All with one line of code”

Lovable, the capable AI website builder

Social behavior curves, equilibria, and radicalism – Unexpected Values

glama “All-in-one AI workspace”

[2502.21098] Re-evaluating Theory of Mind evaluation in large language models

Can Transformer Models Generalize Via In-Context Learning Beyond Pretraining Data? | OpenReview

Machine Learning as an Experimental Science | Machine Learning

Preparing for the Intelligence Explosion | Forethought

In fact, there are ways we can prepare for these challenges, today. For example, we can:

- Prevent extreme and hard-to-reverse concentration of power, by establishing institutions and policies now. For instance, we can ensure that data centers and essential components of the semiconductor supply chain are distributed across democratic countries and that access to frontier AI continues to be available to many parties, both within and across countries.

- Empower responsible actors: Increase the chance that those actors who wield the most power over the development of superintelligence (such as politicians and AI company CEOs) are responsible, competent, and accountable.

- Build AI tools to improve collective decision-making. We could begin building, testing, and integrating AI tools which we want to be in wide use by the time the intelligence explosion is underway, across epistemics, deal-making, and decision-making advice.

- Remove obstacles to applying superintelligent AI to downstream challenges, for example by unblocking bureaucracy which makes it unnecessarily difficult to use advanced AI tools in government.

- Get started early on institutional design for new areas of governance, including on the rights of digital beings and the legal framework for property claims on offworld resources.

- Raise awareness and improve our understanding of the intelligence explosion and each of the challenges that follow from it, so that it takes less time to get up to speed at the crucial moments when decisions do need to be made.

From Design doc to code: the Groundhog AI coding assistant (and new Cursor meta)

[2412.01786] Hard Constraint Guided Flow Matching for Gradient-Free Generation of PDE Solutions

-

What does it mean to give a model a capability? And while we’re on the subject, how do you give a model a capability?

I’m talking about AI progress in the past year again. Contrary to the expectations that GPT-4 set in 2023, research labs emphasized the “capabilities” of the models they released this year over their scale.

The Math Academy Way: Using the Power of Science to Supercharge Student Learning

Gordon Brander — The Zombocom Problem

Make better documents. — Anil Dash

Even very smart, capable communicators routinely send important documents that distract from, or even undermine, their goals.

Experiment metascience grant

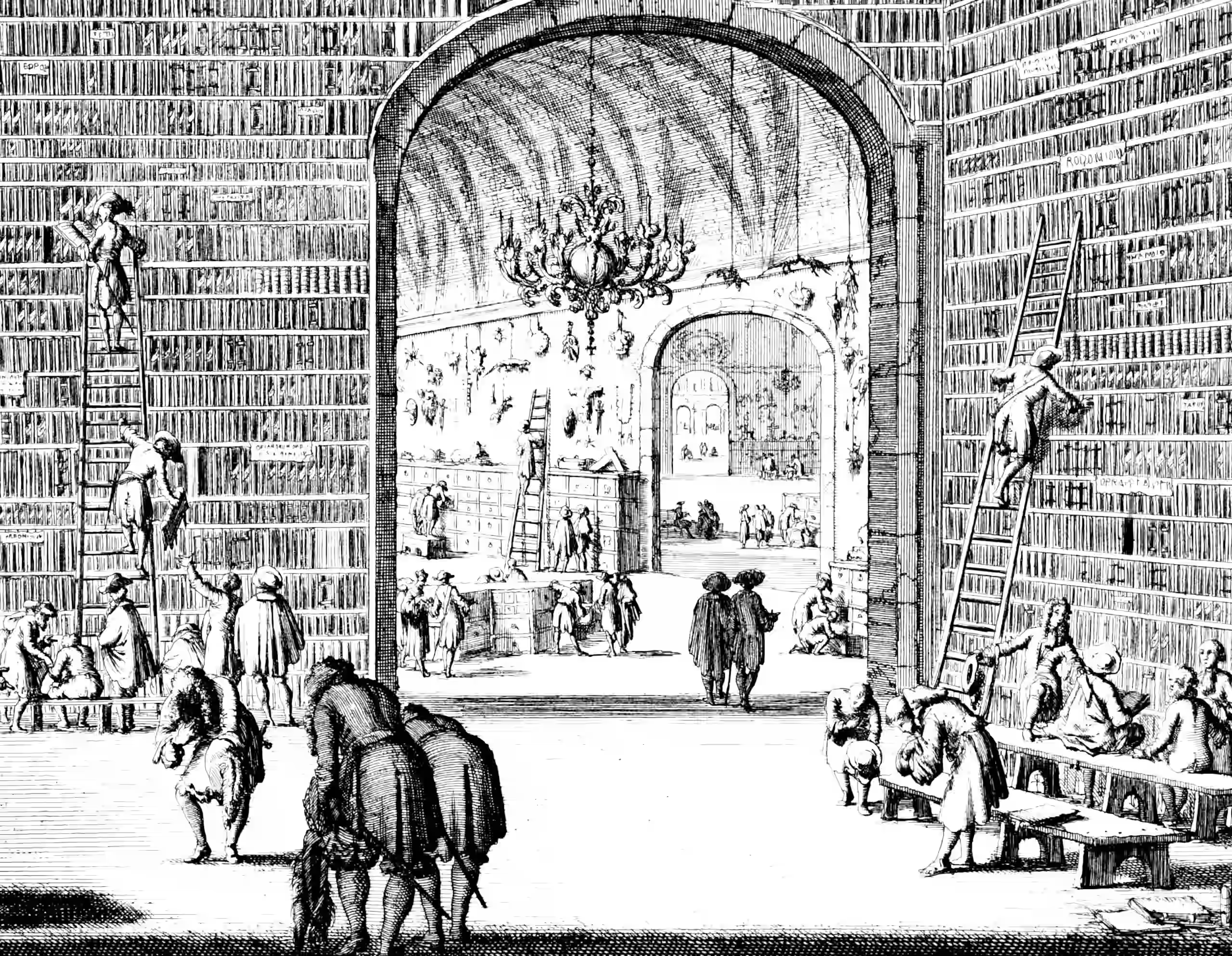

Art in Art: Cabinets of Curiosity and the Rise of the Gallery Painting — The Public Domain Review

PolymathicAI/walrus: “This repo is built for training and evaluating Walrus, a multi-domain foundation model for continuum dynamics trained primarily on fluid-like behaviors. Walrus was trained on 19 different physical scenarios spanning 63 physical variables in both 2 and 3D. Walrus utilizes new tools for adaptive computation and improved stability in order to achieve accurate long-term rollouts while co-adapting sampling and distribution to improve training throughput despite handling varying dimensions, resolutions, and aspect ratios.”

The Perils of Optimizing Learned Reward Functions — LessWrong

Generative AI has access to a small slice of human knowledge | Aeon Essays

Pebbling – a new love language

Not giving actual pebbles but the sharing of memes and gifs as a form of love language. Spotting something and sharing is a form of connection. A ‘look I saw this and thought of you!’ moment.

DDN: Discrete Distribution Networks / Discrete-Distribution-Networks.github.io/blog_en.md / [2401.00036] Discrete Distribution Networks

Subscribe to Dance Around The World

Come with BBC Radio 6 DJ Jamz Supernova on a global voyage via dancefloors and underground scenes around the world. Be transported into alternative clubs, electronic havens, jazz bars, and the unclassified in between. Each album selection tells a story and spotlights bold artists who fuse genres, transcend geography, and celebrate creative freedom.

Renormalization Redux: QFT Techniques for AI Interpretability — LessWrong

tinygrad/tinygrad: You like pytorch? You like micrograd? You love tinygrad! ❤️

Thomas Urquhart:

- Maligned for mathematics: Sir Thomas Urquhart and his Trissotetras: Annals of Science: Vol 76, No 2

- The Trissotetras: Or, A Most Exquisite Table For Resolving all manner of Triangles, whether Plaine or Sphericall, Rectangular or Obliquangular, with greater facility, then ever hitherto hath been practised. Most necessary for all such as would attaine to the exact knowledge of Fortification, Dyaling, Navigation, Surveying, Architecture, the Art of Shadowing, taking of Heights, and Distances, the use of both the Globes, Perspective, the skill of making the Maps, the Theory of the Planets, the calculating of their motions, and of all other Astronomicall computations whatsoever. Now lately invented, and perfected, explained, commented on, and with all possible brevity, and perspicuity, in the hiddest, and most re-searched mysteries, from the very first grounds of the science it selfe, proved, and convincingly demonstrated

- Urquhart’s Inflationary Universe | SpringerLink

- “Verbs, mongrels, participles and hybrids”: Sir Thomas Urquhart of Cromarty’s Universal Language in: “Joyous Sweit Imaginatioun”

Can We Save Our Internet From The Bots, AND Preserve Anonymity?

ChatGPT Hallucinated a Feature, Forcing Human Developers to Add It

Spartacus app: commit to doing things with people

Project War and Peace: Tolstoy’s novel with simplified names

Europe and brain drains

Conflict Transformation | Coursera / Conflict Transformation | Beyond Intractability

Rachel Thomas, PhD - Deep learning gets the glory, deep fact checking gets ignored

Schneier, When AIs Start Hacking

-

The story so far: back in the early two-thousands Christian Bök, famous for accomplishments lesser poets would never even dream of attempting […] started work on the world’s first biologically-self-replicating poem: the Xenotext Experiment, which aspired to encode a poem into the genetic code of a bacterium. Not just a poem, either: a dialog. The DNA encoding one half of that exchange (“Orpheus” by name) was designed to function both as text and as a functional gene. The protein it coded for functioned as the other half (“Eurydice”), a sort of call-and-response between the gene and its product. The protein was also designed to fluoresce red, which might seem a tad gratuitous until you realize that “Eurydice”’s half of the dialog contains the phrase “the faery is rosy/of glow”.

Smoother - A unified spatial dependency framework in PyTorch — Smoother v1.0.0 documentation

duchesneaumathieu/pyperlin: GPU accelerated Perlin Noise in python

shot-scraper: A command-line utility for taking automated screenshots of websites

Susan McKinnon Foundation - Strengthening Australia’s Democracy

Dan Simpson on GPs

Clio: Privacy-preserving insights into real-world AI use | Anthropic

Artificial Analysis: Comparison of AI Models across Quality, Performance, Price

Nvidia unveils $3,000 desktop AI computer for home researchers - Ars Technica

-

Mathematics was designed for the human brain.

Multistakeholder Engagement for Safe and Prosperous AI - Future of Life Institute

Infrastructure alignment problem: How Madrid built its metro cheaply - Works in Progress

Beyond Message Passing: a Physics-Inspired Paradigm for Graph Neural Networks

SSI Club Safe Super Intelligence (SSI) Club

An Overview of “Obvious” Approaches to Training Wise AI Advisors – AI Impacts

Free Anagram Sentence Generator for English, German, French, and Spanish

Reflections on 2020 as an independent researcher | Andy Matuschak

Kate Wagner’s piece Behind F1’s Velvet Curtain was taken down shortly after publication

The Shift from Models to Compound AI Systems – The Berkeley Artificial Intelligence Research Blog

Interesting synth: Vital

Ask a Neoliberal: An Interview with J. Bradford DeLong - Dissent Magazine

GitHub - ml-explore/mlx: MLX: An array framework for Apple silicon

-

Argues that, for a given limited event budget, closed captioning is more inclusive than hiring a signer, since many deaf people do not sign; captions also help autistic and non-native English speakers.

Science communicators need to stop telling everybody the universe is a meaningless void

Accelerating Natural Gradient with Higher-Order Invariance | Yang Song

How to Find the Taylor Series of an Inverse Function - Randorithms

Remix - Build Better Websites — distributed, rather than static, site rendering. Backed by Shopify.

f-DM: A Multi-stage Diffusion Model via Progressive Signal Transformation | OpenReview

[2309.10068] A Unifying Perspective on Non-Stationary Kernels for Deeper Gaussian Processes

Monitoring, Streamlining and Reorganising Work with Digital Technology

[2302.02947] GPS++: Reviving the Art of Message Passing for Molecular Property Prediction

CodaLab Competitions: An Open Source Platform to Organize Scientific Challenges

Pushover: Simple Notifications for Android, iPhone, iPad, and Desktop

“VC qanon” and the radicalization of the tech tycoons - Anil Dash

Smart Countdown Timer is a good timer.

Smart Countdown Timer allows you to use natural language to set, modify and start a countdown on your Mac. Our simple and easy to use UI just requires you to enter your countdown time using plain English, such as ‘1 hour and 35 mins’ or ‘add 25 mins’.

-

Agenda’s unique approach of organising notes into a timeline helps to drive your projects forward. While other apps focus specifically on the past, present, or future, Agenda is the only note-taking app that tracks them all at once, giving you the complete picture.

The first AI model based on Yann LeCun’s vision for more human-like AI / [2301.08243] Self-Supervised Learning from Images with a Joint-Embedding Predictive Architecture

BIMLOGIQ is where Amir Dezfouli went to work.

SPIGM @ ICML: ICML 2023 workshop on Structured Probabilistic Inference & Generative Modeling

Sam Kriss’s All the nerds are dead conflates geeks and nerds, but it’s funny anyway.

The reasonable(?) effectiveness of data analysis

Why is it that we can be thrown into the work of other people, in a field we have zero experience in, and have any expectation of making any useful impact at all? When stated objectively, it sounds utterly ridiculous. But in my experience, a data team can find something to improve, even if the impact is sometimes small.

Mastroianni’s favourite Underrated ideas in psychology

I especially like

Annie Lowrey, We Haven’t Been Measuring How the Economy Really Works

TIL Apophenia vs Pareidolia

Matthew Feeney, Markets in fact-checking

Jason Collins, We don’t have a hundred biases, we have the wrong model

factorization_machine Something something kernels, something regression something interaction effects. 🚧TODO🚧 clarify
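As a placeholder for that TODO, a rough sketch of what a second-order factorization machine computes, assuming the standard formulation: a linear regression plus pairwise interaction effects whose weights are inner products of low-rank latent vectors (which is where the kernel flavour comes from). Function names and the toy numbers are made up for illustration.

```python
def fm_predict_naive(x, w0, w, V):
    """Bias + linear terms + sum over i<j of <V[i], V[j]> * x[i] * x[j]."""
    n = len(x)
    linear = sum(w[i] * x[i] for i in range(n))
    pairwise = sum(
        sum(a * b for a, b in zip(V[i], V[j])) * x[i] * x[j]
        for i in range(n)
        for j in range(i + 1, n)
    )
    return w0 + linear + pairwise


def fm_predict_fast(x, w0, w, V):
    """Same prediction in O(n * k), using
    sum_{i<j} <v_i, v_j> x_i x_j
      = 0.5 * sum_f [ (sum_i v_if x_i)^2 - sum_i (v_if x_i)^2 ].
    """
    n, k = len(x), len(V[0])
    linear = sum(w[i] * x[i] for i in range(n))
    pairwise = 0.0
    for f in range(k):
        s = sum(V[i][f] * x[i] for i in range(n))
        s_sq = sum((V[i][f] * x[i]) ** 2 for i in range(n))
        pairwise += 0.5 * (s * s - s_sq)
    return w0 + linear + pairwise


# Toy example: 3 features, latent dimension k = 2.
x = [1.0, 0.0, 2.0]
w0, w = 0.5, [1.0, -1.0, 0.25]
V = [[1.0, 0.0], [0.0, 1.0], [2.0, 1.0]]
print(fm_predict_naive(x, w0, w, V), fm_predict_fast(x, w0, w, V))
```

The two functions should agree up to floating-point error; the second is the form that makes the model cheap on sparse inputs.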

Making Friends with Machine Learning is Cassie Kozyrkov’s lecture series.

Prof Steve Keen | Creating realistic economics for the post-crash world

Cult Classic ‘Fight Club’ Gets a Very Different Ending in China

In Which Long-Time Netizen & Programmer-at-Arms Dave Winer Records a Podcast for Me, Personally

Nicholas Gruen, Democracy: forking the project

Ethan Epperly, Low-Rank Approximation Toolbox: Nyström Approximation

The Australian academic STEMM workplace post-COVID: a picture of disarray

Merve Emre, Has Academia Ruined Literary Criticism?

Tom Stafford, Microarguments and macrodecisions

Kevin Munger, Why I am (Still) a Conservative (For Now)

Randy Au, in Data science has a tool obsession, talks about Gear Acquisition Syndrome for data scientists.

danah boyd, What if failure is the plan?

Adam Mastroianni, The great myths of political hatred

Big correlations and big interactions ([2105.13445] The piranha problem: Large effects swimming in a small pond)

How to keep cakes moist and cause the greatest tragedies of the 20th century

Microsoft CSR’s Law Enforcement Request Report is disconcertingly transparent

Marc ten Bosch, Let’s remove Quaternions from every 3D Engine (An Interactive Introduction to Rotors from Geometric Algebra)

Ti John’s Publications

Zoomers Co-Working Community (co-working for accountability)

Oshan Jarow, Markets Underinvest In Vitality

Judah, A Speech I would Like To Give Undergrads

Listen to people who tell you to embrace foolhardy ventures the same way you would listen to a newly-minted lottery winner. And look at the ones that say those are the only ways to make a difference with pure skepticism.

Darren Wilkinson’s Bayesian inference for a logistic regression model 1, 2, 3, 4, 5

https://www.patreon.com/posts/taylor-modeling-70131808

Book Review: Public Choice Theory And The Illusion Of Grand Strategy

https://downforeveryoneorjustme.com/

Census is a tool that links all the weird, different data storage systems and CRM stuff.

Nemanja Rakicevic, NeurIPS Conference: Historical Data Analysis

Samuel Moore, Why open science is primarily a labour issue

Machine Learning Trick of the Day (1): Replica Trick — Shakir Mohamed

What’s the difference between a tutorial and how-to guide? - Diátaxis

https://cbergmeir.com/

http://i.giwebb.com/

Francis Bach Going beyond least-squares – II: Self-concordant analysis for logistic regression

On the Generalization Ability of Online Strongly Convex Programming Algorithms

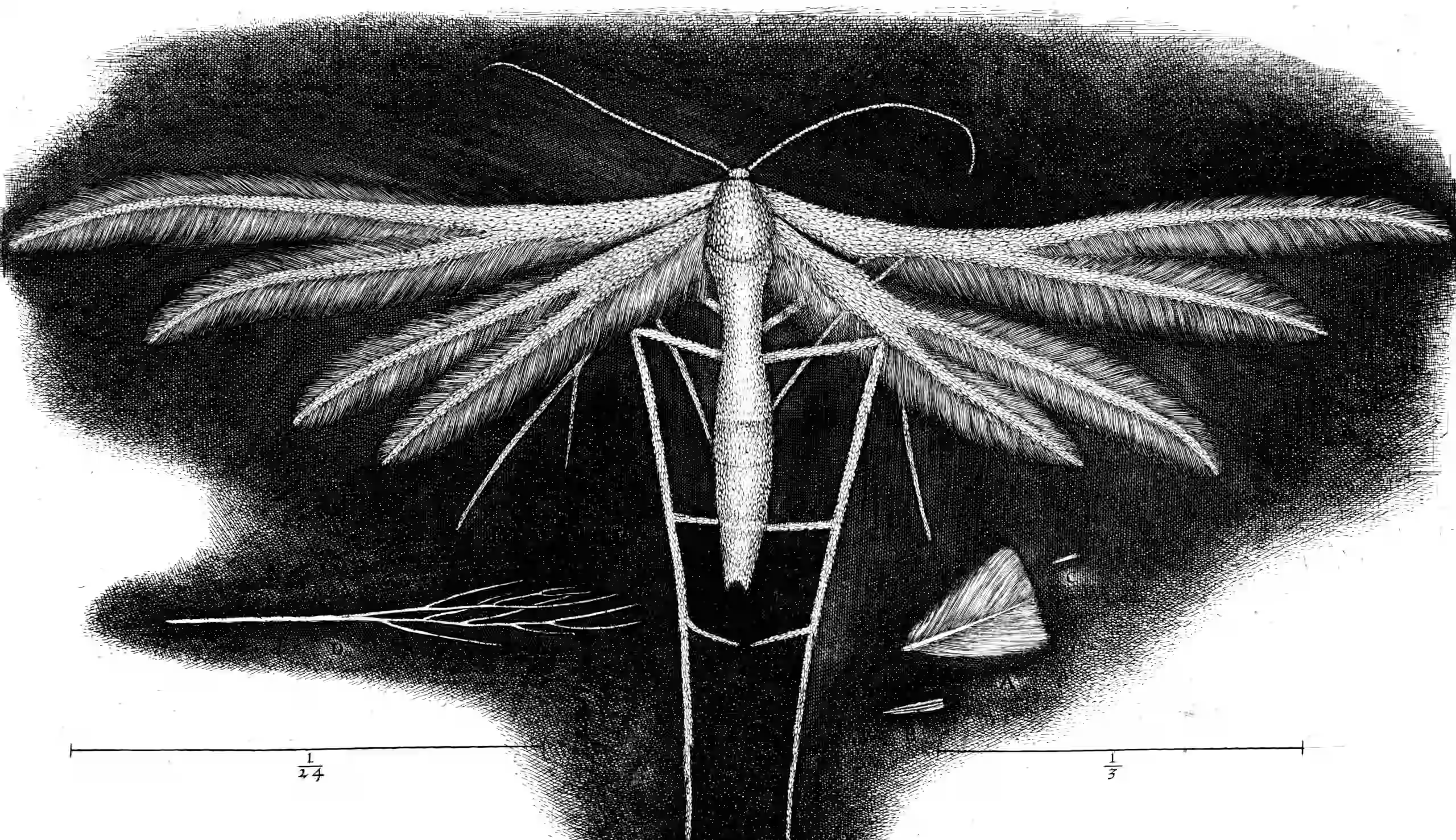

2 Illustrations

3 Homeless links

Bookmarked, but where will they ever go?

Dispel your justification-monkey with a “HWA!” - Malcolm Ocean

Roger’s Bacon, Living and Dying with a Mad God

Washable & breathable flexiOH cast adapts to the patient’s skin

-

Today I took a desk lamp whose Halogen light had burned out, whose crappy transformer always made those bulbs sputter, and whose mildly art-deco appearance I’d always liked, and swapped it out to run an LED bulb off USB power. It took about an hour’s work to replace the light with an LED, the switch with a nice heavy clicky one, and now the whole thing runs off USB-C instead of wall voltage. It emits no appreciable heat, and if these calculations are to be believed, will run for decades for a few cents per year, assuming I leave it on all the time.

I hadn’t really appreciated how big a deal USB-PD voltage negotiation was until I found out that the little chips that handle that negotiation are about the size of the end of a pencil, and that if you include the USB-C port, you can replace basically any low-voltage transformer with something smaller than a quarter.

The magic search string, if you want to try this yourself, is “usb-pd trigger module.”

vscode-paste-image/README.md at master · mushanshitiancai/vscode-paste-image

mhoye/awesome-falsehood: 😱 Falsehoods Programmers Believe in

Communications’ digital initiative and its first digital event

Playable Half Earth Socialism simulator

flatmax/vector-synth: Old 2002 era vector synth code based on XFig

Nick Chater, Would you Stand Up to an Oppressive Regime

Lambda School’s Job Placement Rate May Be Far Worse Than Advertised

State Power and the Power Law, State Power and the Power Law 2

Liquid Information Flow Control, a confidential computing DSL

Kostas Kiriakakis, A Day at the Park

-

pyribs is the official implementation of Covariance Matrix Adaptation MAP-Elites (CMA-ME) and other quality diversity optimisation algorithms.[…]

Quality diversity (QD) optimisation is a subfield of optimisation where solutions generated cover every point in a measure space while simultaneously maximising (or minimising) a single objective. QD algorithms within the MAP-Elites family of QD algorithms produce heatmaps (archives) as output where each cell contains the best discovered representative of a region in measure space.
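To make the archive idea concrete, here is a minimal MAP-Elites-style loop in plain Python. It deliberately does not use the pyribs API; the toy objective, the choice of the solution's own coordinates as measures, the 5×5 grid and all parameter values are assumptions for illustration only.

```python
import random


def objective(x):
    """Toy objective to maximise: negative squared distance from the origin."""
    return -sum(v * v for v in x)


def measure_cell(x, bins=5, low=-1.0, high=1.0):
    """Map a solution to a grid cell; here the measures are just its coordinates."""
    cell = []
    for v in x:
        frac = (min(max(v, low), high) - low) / (high - low)
        cell.append(min(int(frac * bins), bins - 1))
    return tuple(cell)


def map_elites(iterations=2000, bins=5, sigma=0.2, seed=0):
    """Return an archive mapping cell -> (objective value, best solution seen there)."""
    rng = random.Random(seed)
    archive = {}
    for _ in range(iterations):
        if archive:
            # Mutate a randomly chosen elite with Gaussian noise.
            _, parent = rng.choice(list(archive.values()))
            child = [v + rng.gauss(0.0, sigma) for v in parent]
        else:
            # Bootstrap with a uniformly random solution.
            child = [rng.uniform(-1.0, 1.0) for _ in range(2)]
        cell = measure_cell(child, bins)
        score = objective(child)
        # Keep the child only if its cell is empty or it beats the current elite.
        if cell not in archive or score > archive[cell][0]:
            archive[cell] = (score, child)
    return archive


archive = map_elites()
print(f"{len(archive)} of 25 cells filled")
```

The `archive` dictionary is the "heatmap" from the quote: one elite per cell of measure space, each the best solution found so far in its region.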

Is Pandemic Stress to Blame for the Rise in Traffic Deaths? Nope — apparently decreased congestion made drivers go faster on shitty roads.

Black Americans are pessimistic about their position in U.S. society

A study of lights at night suggests dictators lie about economic growth

Paint With Music — Google Arts & Culture turns brushstrokes into melodies.

Chalk is a good calculator for macOS, including useful things like matrices and bitwise ops.

Clive Thompson, The Power of Indulging Your Weird, Offbeat Obsessions

Victoria Falconer is incredible to watch.

-

River is a visual connection engine. Clear your mind and surf laterally through image space. May contain NSFW content. Right-click any image to open it on Are.na for the source, full resolution, and human-curated connections.

SoSha | Social Media Engagement for Communities, Employees, and Partners

Combining embeddable sharing buttons, advanced analytics, and AI-powered content creation to provide the highest-performing organic social media amplification platform.

He Fought for Freedom. Then He Chose Prison. — on Jimmy Lai

Indivisible: A Practical Guide to Democracy on the Brink - Google Docs

The Artist Who Trained Rats to Trade in Foreign-Exchange Markets

Specific Suggestions: Simple Sabotage for the 21st Century (Posting this link is… not advice.)

A ‘Second Tree of Life’ Could Wreak Havoc, Scientists Warn - The New York Times

-

A project called CETI — Cetacean Translation Initiative — was launched to help scientists understand the language of sperm whales.

ClearerThinking.org’s courses, e.g.

- Introduction to Decision Academy: The Science of Better Decisions

- Rhetorical Fallacies: Dodging Argument Traps

- Learning from Mistakes: A Systematic Approach

- Probabilistic Fallacies: Gauging the Strength of Evidence

- Explanation Freeze: Interpreting Uncertain Events

- The Sunk Cost Fallacy: Focusing on the Future

- When to Stop Exploring | The explore-exploit trade-off

2023’s best global tech stories we wish we’d written — Rest of World

Christian Lawson-Perfect’s Interesting Esoterica is a collection of weird math papers.

Office for the Preservation of Normalcy — I feel confident enough to post these now. Cosmic horror warning posters.

How the Kayin BGF’s business interests put Myanmar at risk of COVID-19. Myanmar’s ethnic border militias sound fascinating.

I’d like to read the diaries of Usama ibn Munqidh

-

Janet is a functional and imperative programming language. It runs on Windows, Linux, macOS, BSDs, and should run on other systems with some porting. The entire language (core library, interpreter, compiler, assembler, PEG) is less than 1MB. You can also add Janet scripting to an application by embedding a single C source file and a single header.

Seems to work on WebAssembly, too — e.g. Bauble