Debugging, profiling and accelerating Julia code

2019-11-27 — 2020-06-26

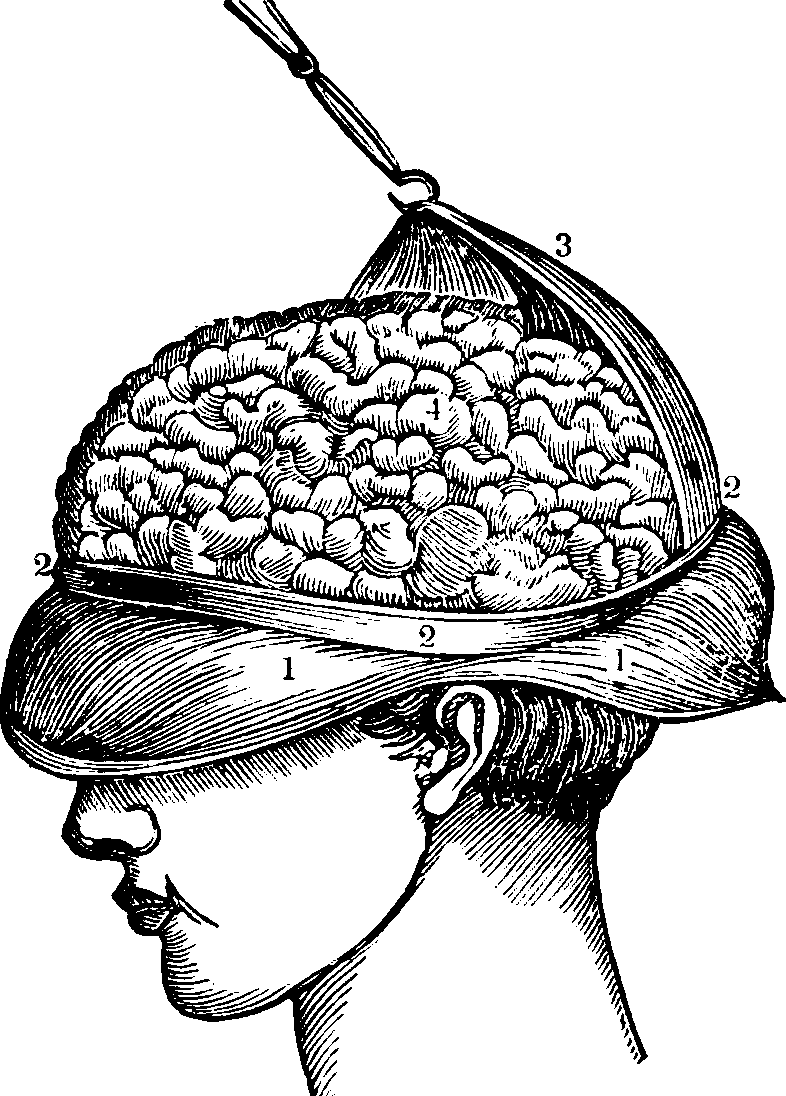

Wherein Julia’s Debugging, Profiling and Speed Tactics Are Surveyed, REPL-only Debuggers and Profile/ProfileView Are Noted, and Explicit LRU-style in-Memory Caching Patterns Are Recommended for Heavy Computations.

Debugging and profiling and performance hacks in Julia.

1 Exceptions

1.1 Stacktraces

🏗

1.2 Don’t be uninterruptible!

If you try to make a script robust against miscellaneous errors by catching and ignoring generic exceptions (e.g. because it has to work with IO or some badly behaved algorithm that can fail in various ways), it is easy to make it uninterruptible, because an interrupt is also an exception. The idiom that lets it grind on through boring errors but exit on user request seems to be to catch everything and then rethrow InterruptException.
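A minimal sketch of that idiom follows. The function `flaky_step` and the loop around it are hypothetical stand-ins for whatever unreliable work the script does.

```julia
# Hypothetical stand-in for an unreliable computation: fails on odd inputs.
flaky_step(i) = isodd(i) ? error("boring failure on item $i") : i^2

function grind_on(items)
    results = Dict{Int,Int}()
    for i in items
        try
            results[i] = flaky_step(i)
        catch err
            # A user interrupt (Ctrl-C) arrives as an exception too; pass it on.
            err isa InterruptException && rethrow()
            # Everything else is logged and skipped.
            @warn "ignoring error and continuing" item = i exception = err
        end
    end
    return results
end

results = grind_on(1:4)
```

Here the odd items fail and are skipped with a warning, while the even items are collected.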

However, this will also continue through other errors that I would probably like to stop on (e.g. TypeError, MethodError, ArgumentError) because they imply I did something foolish. Hmm. Possibly there is no one-size-fits-all solution here.
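One possible variation, sketched here as an assumption rather than a recommendation, is to invert the logic: swallow only an explicit allow-list of error types and let everything else (including InterruptException, TypeError, MethodError and ArgumentError) propagate. The helper name `tolerate` and the choice of tolerated types are hypothetical.

```julia
# Run f(); return `default` if it fails with a tolerated error type,
# otherwise let the exception propagate unchanged.
function tolerate(f, default; tolerated = (SystemError, Base.IOError, EOFError))
    try
        return f()
    catch err
        # Anything not on the allow-list is re-raised.
        any(T -> err isa T, tolerated) || rethrow()
        @warn "tolerated failure" exception = err
        return default
    end
end

ok = tolerate(() -> 42, :skipped)                  # no error: value passes through
skipped = tolerate(() -> throw(EOFError()), :skipped)  # tolerated: default returned
```

The cost is that the allow-list has to be maintained by hand, which is in keeping with there being no one-size-fits-all answer.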

2 Tracing

There is a tracing debug ecosystem based on JuliaInterpreter. This provides such useful commands as @bp to inject breakpoints and @enter to execute a function in a step debugger. (Juno is still, for my taste, unpleasant enough that this is not worth it.) doomphoenix-qxz explains:

There are also two different (main) debuggers that you’ll want to learn about, because each one has different use cases. Debugger.jl is your best bet if you want to step through functions, but it runs Julia code through the interpreter instead of compiling it, and if you need to figure out why your ML model starts diverging after training for 30 epochs on 10 million data points, that’s going to be too slow for you. If you need to debug but also compile your code for speed, you’ll need Infiltrator.jl, which will allow you to observe everything that’s going on at the breakpoints you set, but will only enter debug mode at the breakpoints that you set before running your code. I believe the integrated debugger in Juno is based on Debugger.jl, but I’m not 100% sure.

NB: none of these work from the IJulia/Jupyter notebook interface; they must be run from the REPL.

There are some other ones I have not yet tried:

- Rebugger.jl - an expression-level debugger for Julia with a provocative command-line (REPL) user interface

- MagneticReadHead.jl - a Julia debugger based on Cassette.jl

- rr the Time Traveling Bug Reporter can reconstruct hard-to-identify weird bugs by reconstructing difficult state in a complex project.

The linter Lint.jl can identify code style issues (and also has an Atom linter plugin).

3 Going fast isn’t magical

Don’t believe that wild-eyed evangelical gentleman at the Julia meetup. (So far there has been one at every meetup I have been to.) He doesn’t write actual code; he’s too busy talking about it. You still need to optimize and hint to get the most out of your work.

Active Julia contributor Chris Rackauckas is at pains to point out that type-hinting is rarely required in function definitions, and that often even threaded vectorization annotation is not needed, which is amazing. (The manual is more ambivalent about that latter point, but anything in this realm is a mild bit of magic.)

Not to minimize how excellent that is, but bold coder, still don’t get lazy! This of course doesn’t eliminate the role that performance annotations and careful code structuring typically play, and I also do not regard it as a bug that you occasionally annotate code to inform the computer what you know about what can be done with it. Julia should not be spending CPU cycles proving non-trivial correctness results for you that you already know. That would likely be solving redundant, possibly super-polynomially hard problems to speed up polynomial problems, which is a bad bet. It is a design feature of Julia that it allows you to be clever; it would be a bug if it tried to be clever in a stupid way. You will need to think about array views versus copies.
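To make the views-versus-copies point concrete, here is a small sketch using only Base. The matrix and the helper names `sum_cols_copy` and `sum_cols_view` are made up for illustration.

```julia
A = collect(reshape(1.0:12.0, 3, 4))   # 3×4 matrix, filled column by column

col_copy = A[:, 2]          # slicing allocates an independent copy
col_view = view(A, :, 2)    # same as @view A[:, 2]; shares memory with A

col_copy[1] = -1.0          # leaves A untouched
col_view[2] = 99.0          # writes through to A[2, 2]

# Slicing inside a loop: the @views version avoids one array allocation per column.
sum_cols_copy(A) = sum(sum(A[:, j]) for j in axes(A, 2))
sum_cols_view(A) = @views sum(sum(A[:, j]) for j in axes(A, 2))
```

Views are not automatically faster (a copy can have better memory layout for repeated access), so this is something to measure rather than assume.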

3.1 Analysing

If you want to know how you made some weird mistake in type design, try:

- Traceur.jl - runs your code and tells you about any obvious performance traps

- Cthulhu.jl - descend into Julia type dispatching.

3.2 Profiling

Here is a convenient overview of timer functions that help work out what to optimise. The built-in timing tool is the @time macro. It can even time arbitrary begin/end blocks.
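A short sketch of the built-in timing macros, with a hypothetical workload `work`. `@elapsed` is a sibling of `@time` in Base that returns the elapsed seconds instead of printing them.

```julia
# Hypothetical workload to time.
work(n) = sum(sqrt(i) for i in 1:n)

work(10)                       # run once first so compilation is not what gets timed

@time work(10^6)               # prints elapsed time and allocation counts

# @time also accepts an arbitrary begin/end block.
@time begin
    a = work(10^5)
    b = work(10^6)
end

elapsed = @elapsed work(10^6)  # seconds as a Float64, nothing printed
```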

Going deeper, try Profile, the built-in statistical profiler.
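A minimal sketch of a profiling session, assuming a hypothetical CPU-bound function `busy`:

```julia
using Profile

# Hypothetical CPU-bound function to profile.
function busy(n)
    acc = 0.0
    for i in 1:n
        acc += sin(i) * cos(i)
    end
    return acc
end

busy(10)                       # compile first so compilation does not dominate the profile
Profile.clear()                # discard samples from any earlier run
@profile busy(10^7)            # periodically sample the call stack while this runs
Profile.print(maxdepth = 15)   # text tree of where the samples landed
```

Because the profiler samples, very short runs may collect few or no samples; lengthen the workload if the report comes back empty.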

ProfileView is advisable for making sense of the output. It doesn’t work in JupyterLab, but from JupyterLab (or anywhere) you can invoke the Gtk interface by setting PROFILEVIEW_USEGTK = true in the Main module before using ProfileView.

It’s kind of the opposite of profiling, but you can give the user feedback about what’s happening using a ProgressMeter.

TimerOutputs is a macro system that can accumulate various bits of timing information under tags filed by category.

3.3 Near-magical performance annotations

- @simd

  - equivocally endorsed in the manual, as it may or may not be needed to squeeze best possible performance out of the inner loop. Caution says try it out and see.

- @inbounds

  - disables bounds checking, but be careful with your indexing. Bounds checking is expensive.

- @fastmath

  - violates strict IEEE math setup for performance, in a variety of ways some of which sound benign and some not so much.
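As a sketch of how the first two annotations are applied, here is a plain summation loop next to an annotated one. The function names are made up, and whether the annotated version is actually faster depends on the machine and Julia version, so time both.

```julia
# Plain inner loop: bounds-checked, strict left-to-right floating-point summation.
function sum_plain(x::Vector{Float64})
    s = 0.0
    for i in eachindex(x)
        s += x[i]
    end
    return s
end

# Annotated loop: @inbounds skips bounds checks (safe here because eachindex
# only yields valid indices); @simd permits reordering the additions.
function sum_annotated(x::Vector{Float64})
    s = 0.0
    @inbounds @simd for i in eachindex(x)
        s += x[i]
    end
    return s
end

x = rand(10_000)
plain = sum_plain(x)
annotated = sum_annotated(x)   # may differ from `plain` in the last few bits
```

The reordering allowed by `@simd` is why the two results are only approximately equal for floating-point input.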

3.4 Caching

Doing heavy calculations? Want to save time? Caching notionally helps, but of course is a quagmire of bikeshedding.

The Anamemsis creator(s) have opinions about this:

Julia is a scientific programming language which was designed from the ground up with computational efficiency as one of its goals. As such, even ad hoc and “naive” functions written in Julia tend to be extremely efficient. Furthermore, any memoization implementation is liable to have some performance pain points: in general they contain type unstable code (even if they have type stable output) and they must include a value cache which is accessible from the same scope as the function itself so that global objects are potentially involved. Because of this, memoization comes with a significant overhead, even with Julia’s highly efficient AbstractDict implementations.

My own opinion of caching is that I’m for it. Despite the popularity of the automatic memoization pattern I’m against that as a rule. I usually end up having to think carefully about caching, and magically making functions cache never seems to help with the hard part of that. Caching logic should, for projects I’ve worked on at least, be explicit, opt-in, and that should be an acceptable design overhead because I should only be doing it for a few expensive functions, rather than creating magic cache functions everywhere.

LRUCache is the most commonly recommended cache. It provides a simple, explicit, opt-in, in-memory, typed cache structure, so it should be performant. It promises sensible, if not granular, locking for multi-threaded access: one thread at a time can access any key of the cache. AFAICT this meets 100% of my needs.

Invocations look like
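A stdlib-only sketch of the explicit, opt-in pattern follows. It uses a plain typed `Dict`, which is unbounded and not thread-safe; the assumption is that an `LRU{Int,Int}(maxsize = ...)` from LRUCache.jl could be passed in its place, since that type is an `AbstractDict` and supports the same `get!` form. The functions `slow_square` and `cached_square` are hypothetical.

```julia
# Hypothetical expensive computation, standing in for real work.
function slow_square(x::Int)
    sleep(0.05)
    return x^2
end

# Explicit, typed cache. A plain Dict here; assumed swappable for an LRU.
cache = Dict{Int,Int}()

# Opt-in cached wrapper: the function passed to get! runs only on a cache miss.
cached_square(cache::AbstractDict{Int,Int}, x::Int) =
    get!(() -> slow_square(x), cache, x)

first_call = cached_square(cache, 12)    # miss: computes and stores
second_call = cached_square(cache, 12)   # hit: returned from the cache
```

Passing the cache in explicitly keeps it out of global scope and makes it obvious at the call site which computations are cached.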

Memoize provides an automatic caching macro, but only primitive in-memory caching using plain old dictionaries. AFAICT it is possibly compatible with LRUCache, but weirdly I could not find discussion of that. However, I don’t like memoisation, so that’s the end of that story.

Anamemsis has advanced cache decorators and lots of levers to pull to alter caching behaviour.

Caching is especially full-featured with macros, disk persistence and configurable evictions etc. It wants type annotations to be used in the cached function definitions.

Cleverman Simon Byrne notes that you can roll your own fancy caching using wild Julia introspection stunts via Cassette.jl, but I don’t know how battle-tested this is. I am not smart enough for that much magic.

4 Parallelism

There is a lot of multicore parallelism built into Julia, with occasional glitches in the multithreading.
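For the built-in multithreading, a minimal sketch with a hypothetical function `threaded_squares` looks like this. It only runs in parallel if Julia was started with more than one thread, e.g. by setting the JULIA_NUM_THREADS environment variable.

```julia
using Base.Threads

function threaded_squares(n)
    out = zeros(Int, n)
    # Iterations are divided among the available threads. Each iteration
    # writes to its own slot of `out`, so there is no data race.
    @threads for i in 1:n
        out[i] = i^2
    end
    return out
end

squares = threaded_squares(5)
```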

Handy, apparently, is ParallelProcessingTools.jl:

This Julia package provides some tools to ease multithreaded and distributed programming, especially for more complex use cases and when using multiple processes with multiple threads on each process.

This package follows the SPMD (Single Program Multiple Data) paradigm (like, e.g MPI, Cuda, OpenCL and DistributedArrays.SPMD): Run the same code on every execution unit (process or thread) and make the code responsible for figuring out which part of the data it should process. This differs from the approach of Base.Threads.@threads and Distributed.@distributed. SPMD is more appropriate for complex cases that the latter do not handle well (e.g. because some initial setup is required on each execution unit and/or iteration scheme over the data is more complex, control over SIMD processing is required, etc).
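To illustrate the SPMD idea itself, here is a sketch in plain Julia tasks. It is not the ParallelProcessingTools.jl API; the names `worker_sum` and `spmd_sum` are hypothetical. Every worker runs the same function and works out from its own rank which part of the data it is responsible for.

```julia
# The single program: each worker takes every nworkers-th element, starting at its rank.
function worker_sum(data, rank, nworkers)
    s = zero(eltype(data))
    for i in rank:nworkers:length(data)
        s += data[i]
    end
    return s
end

# Launch one task per worker and combine the partial results.
function spmd_sum(data; nworkers = Threads.nthreads())
    tasks = map(1:nworkers) do rank
        Threads.@spawn worker_sum(data, rank, nworkers)
    end
    return sum(fetch.(tasks))
end

total = spmd_sum(collect(1:100))
```

The partitioning logic lives inside the worker, which is the point of contrast with `@threads`, where the loop macro decides how iterations are split.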