Adversarial learning

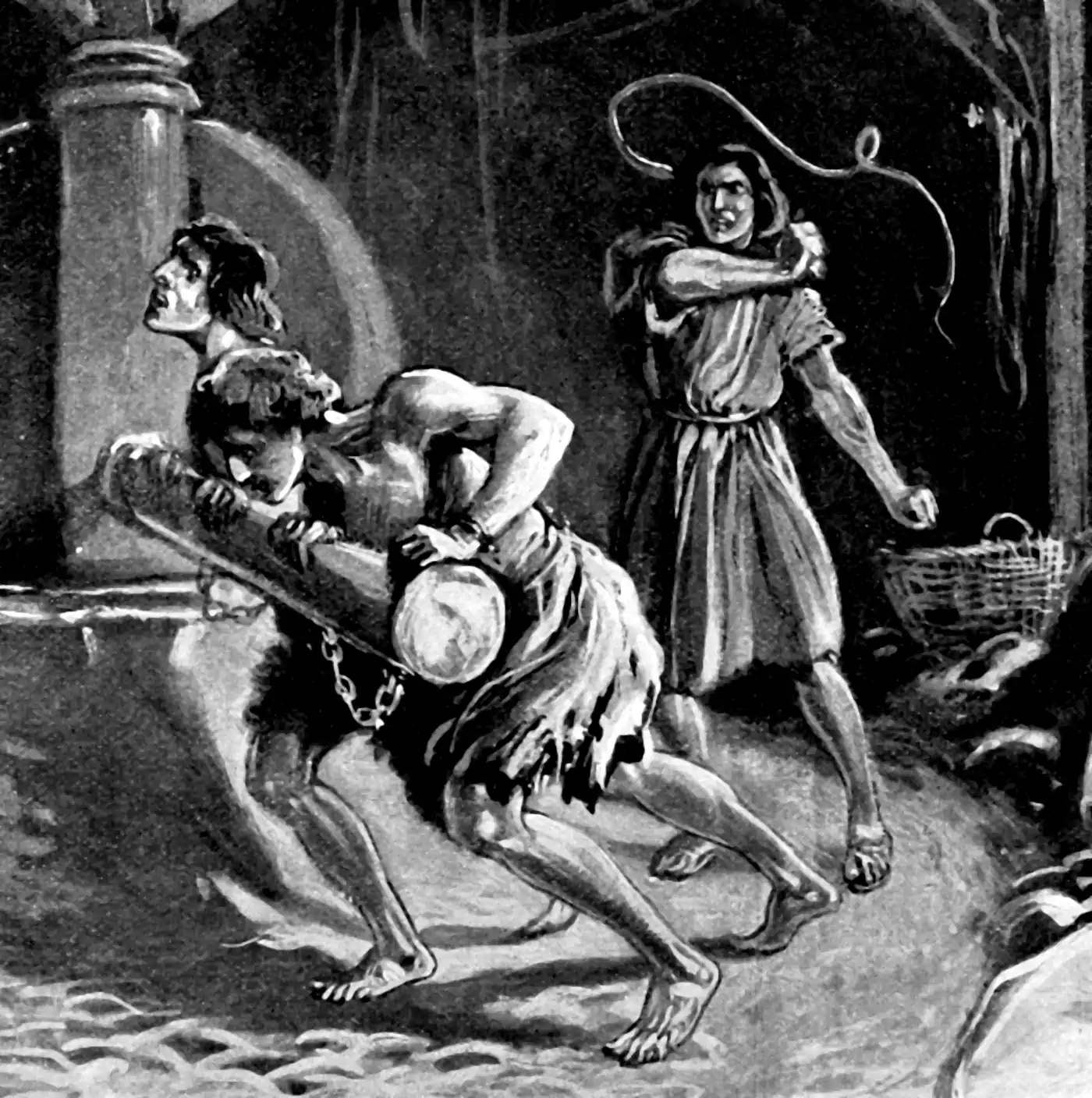

Statistics against Shayṭān

2016-10-06 — 2022-02-20

Wherein the Noise Is Construed as Worst-Case Within Given Constraints, Contrasted With Random Perturbations, and Is Related to Game-Theoretic Tactics and Goodhartian Target Gaming.

In adversarial learning, the noise is not purely random but is chosen to be the worst possible noise for you (subject to some rules of the game). This is in contrast to classic machine learning and statistics, where the noise is purely random; Tyche is not “out to get you.”
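To make the contrast concrete, here is a minimal sketch, assuming a toy setting that is not from any particular paper: a fixed linear model with weights `w`, and "rules of the game" that cap the noise at Euclidean norm `eps`. The names `random_perturbation` and `worst_case_perturbation` are hypothetical helpers made up for illustration. Under those assumptions, the worst-case noise simply points along `w`, while random noise of the same size mostly points in directions the model does not care about.

```python
import numpy as np


def random_perturbation(dim, eps, rng):
    """Noise of norm eps in a uniformly random direction (Tyche's choice)."""
    z = rng.standard_normal(dim)
    return eps * z / np.linalg.norm(z)


def worst_case_perturbation(w, eps):
    """Noise of norm eps that maximises the change in the linear output w @ x.

    By the Cauchy-Schwarz inequality the maximiser is aligned with w.
    """
    return eps * w / np.linalg.norm(w)


rng = np.random.default_rng(0)
dim, eps = 1000, 0.1           # illustrative values, chosen arbitrarily
w = rng.standard_normal(dim)   # hypothetical fixed linear model

delta_random = random_perturbation(dim, eps, rng)
delta_adversarial = worst_case_perturbation(w, eps)

# How far each perturbation moves the model output.
random_shift = abs(w @ delta_random)
adversarial_shift = abs(w @ delta_adversarial)

print(f"random shift:      {random_shift:.4f}")
print(f"adversarial shift: {adversarial_shift:.4f}")
```

Both perturbations have the same norm, so the adversary is playing by the same budget as chance. In this toy model the adversarial shift equals `eps * ||w||` exactly, whereas a random direction in high dimension typically achieves only a small fraction of that.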

The idea has recently regained fame thanks to the related (?) method of generative adversarial networks, although it is much older.

The associated concept in normal human experience is Goodhart’s law, which tells us that “people game the targets you set for them.”

Interesting connection to implicit variational inference.

🏗 discuss politics implied by treating the learning as a battle with a conniving adversary as opposed to an uncaringly random universe. I’m sure someone has done this well in a terribly eloquent blog post, but I haven’t found one I’d want to link to yet.

The toolset of adversarial techniques is broad. Game theory is an important one, but also computational complexity theory (how hard is it to find adversarial inputs, or to learn despite them?) and lots of functional analysis and optimization theory. Surely there is much else that I do not know, because this is not really my field.

Applications are broad too — improving ML but also infosec, risk management, etc.

1 Incoming

Adversarial attacks can be terrorism or freedom-fighting, depending on the pitch, natch: From data strikes to data poisoning, how consumers can take back control from corporations.