Anomaly detection

I don’t define what is abnormal, but I know it when I see it

2015-10-06 — 2022-10-19

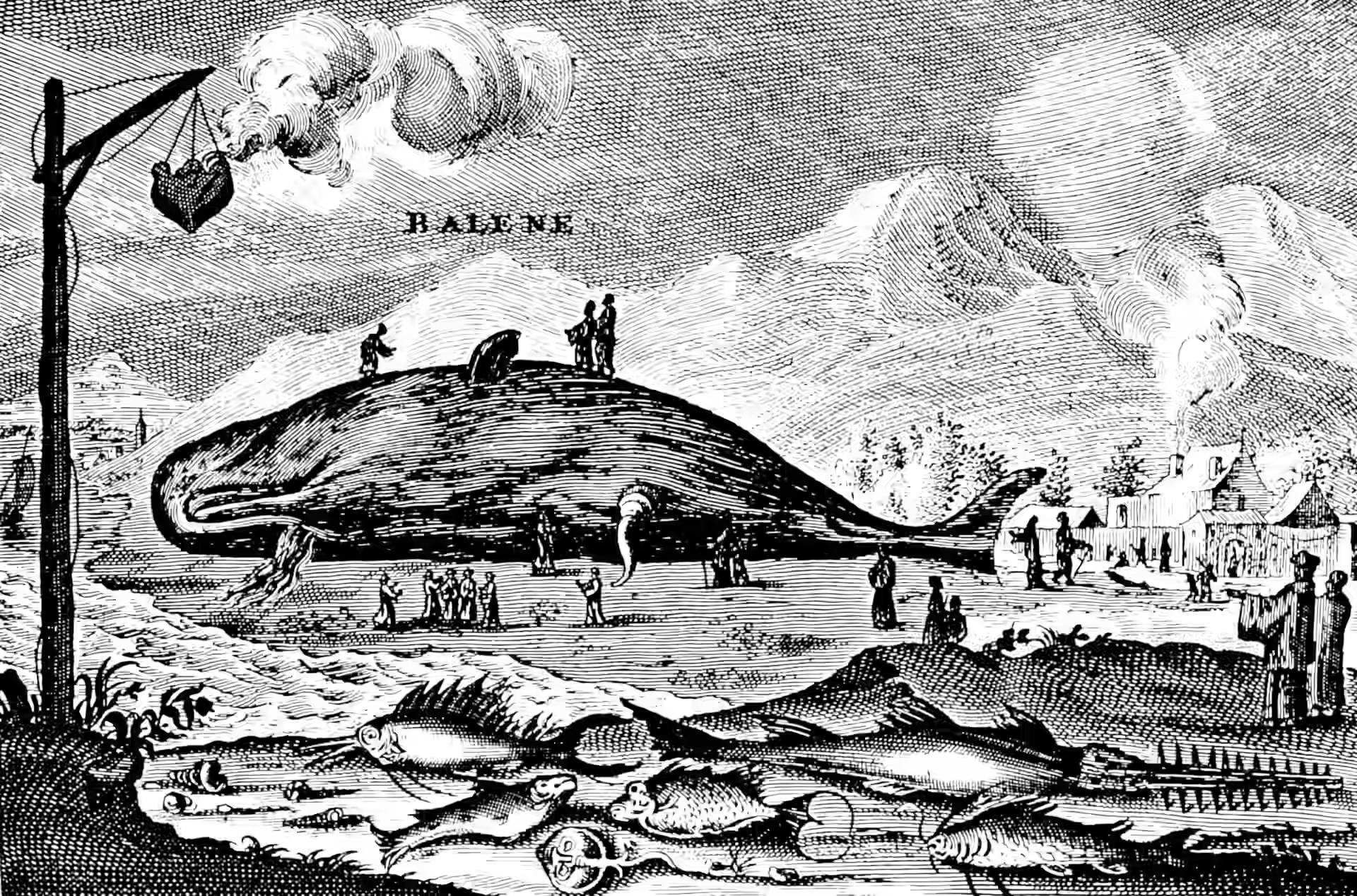

Wherein Anomaly Detection Is Considered, With Emphasis on High-Dimensional and Time-Series Methods, and an Ocean-Hydrology Model Outlier Shaped Like a Distended Whale Is Examined.

Working out when something you see is not what you expect, especially when you’re not quite sure how to quantify your expectations. Detecting black swans, for example.
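One of the simplest ways to quantify expectations for a single variable is to use the median as the expected value and the median absolute deviation (MAD) as the expected spread. Points that sit too many MADs away are then flagged. The sketch below is minimal and assumes one-dimensional data. It uses a cutoff of 3.5, a value often used with this kind of robust score; treat that number as my assumption, not a universal rule.

```python
import numpy as np

def robust_z_scores(x):
    """Score each observation by its distance from the median in MAD units.

    The 1.4826 factor rescales the MAD so that it estimates the standard
    deviation when the bulk of the data is Gaussian.
    """
    x = np.asarray(x, dtype=float)
    med = np.median(x)
    mad = 1.4826 * np.median(np.abs(x - med))
    if mad == 0:
        # More than half the points are identical, so there is no spread to scale by.
        raise ValueError("MAD is zero; cannot compute robust z-scores")
    return (x - med) / mad

def flag_anomalies(x, threshold=3.5):
    """Return a boolean mask of points whose |robust z| exceeds the (assumed) threshold."""
    return np.abs(robust_z_scores(x)) > threshold

# Hypothetical sensor readings with one obvious outlier at the end.
readings = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 25.0]
print(flag_anomalies(readings))
```

Here only the 25.0 reading is flagged. Because the median and MAD hardly move when one wild value is added, the outlier cannot mask itself, which a mean and standard deviation would allow. This baseline works only in one dimension and with a single mode. The high-dimensional and time-series cases are exactly where it breaks down.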

Nuit Blanche rounds up methods for high-dimensional statistics.

1 Trend detection

e.g. Gnip’s trend detection. Nikolov (2012) looks fun and comes with a cute explanation of how you might detect trends nonparametrically.
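Here is a rough sketch of the flavour of the nonparametric idea, as I understand it. It is not a faithful reimplementation of Nikolov (2012). A new series is compared against labelled reference series of “trending” and “non-trending” examples. Each reference votes with weight exp(−γ·d), where d is a distance. The series is called a trend when the trending votes outweigh the non-trending ones. Several choices here are my own assumptions: the sliding-window Euclidean distance, the parameters `gamma` and `threshold`, and the toy references. I also assume the series are already on a comparable scale.

```python
import numpy as np

def shift_distance(s, r):
    """Smallest Euclidean distance between s and any same-length window of r."""
    s = np.asarray(s, dtype=float)
    r = np.asarray(r, dtype=float)
    n = len(s)
    if len(r) < n:
        raise ValueError("each reference series must be at least as long as s")
    # Sliding the window lets a reference match s at any point in its history.
    return min(np.linalg.norm(s - r[i:i + n]) for i in range(len(r) - n + 1))

def log_trend_score(s, positives, negatives, gamma=1.0):
    """log(sum of exp(-gamma*d) over trending refs) minus the same over non-trending refs.

    Summing the votes in log space with logaddexp avoids a 0/0 ratio
    when every exp(-gamma*d) vote underflows.
    """
    pos = np.logaddexp.reduce([-gamma * shift_distance(s, r) for r in positives])
    neg = np.logaddexp.reduce([-gamma * shift_distance(s, r) for r in negatives])
    return pos - neg

def is_trending(s, positives, negatives, gamma=1.0, threshold=1.0):
    """Flag s as trending when the vote ratio exceeds the (assumed) threshold."""
    return log_trend_score(s, positives, negatives, gamma) > np.log(threshold)

# Hypothetical toy references: two rising ramps vs. one flat series.
positives = [0.5 * np.arange(20.0), np.arange(20.0)]
negatives = [np.zeros(20)]

print(is_trending(0.5 * np.arange(10.0), positives, negatives))  # rising observation
print(is_trending(np.zeros(10), positives, negatives))           # flat observation
```

On these toy references the rising observation is classified as trending and the flat one is not. The appeal of the method is that nothing here models what a trend *is*. The labelled examples stand in for a parametric model, which suits the “I know it when I see it” spirit of this page.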

2 Incoming

At the time of writing, Sevvandi Kandanaarachchi was presenting some of her methods in a seminar: (Kandanaarachchi and Hyndman 2021; Kandanaarachchi, Hyndman, and Smith-Miles 2020; Kandanaarachchi 2021; Sevvandi Kandanaarachchi and Hyndman 2021; Talagala et al. 2020).

Related software: