Cosmic decision theories

Newcomb’s boxes etc

2018-10-23 — 2026-05-05

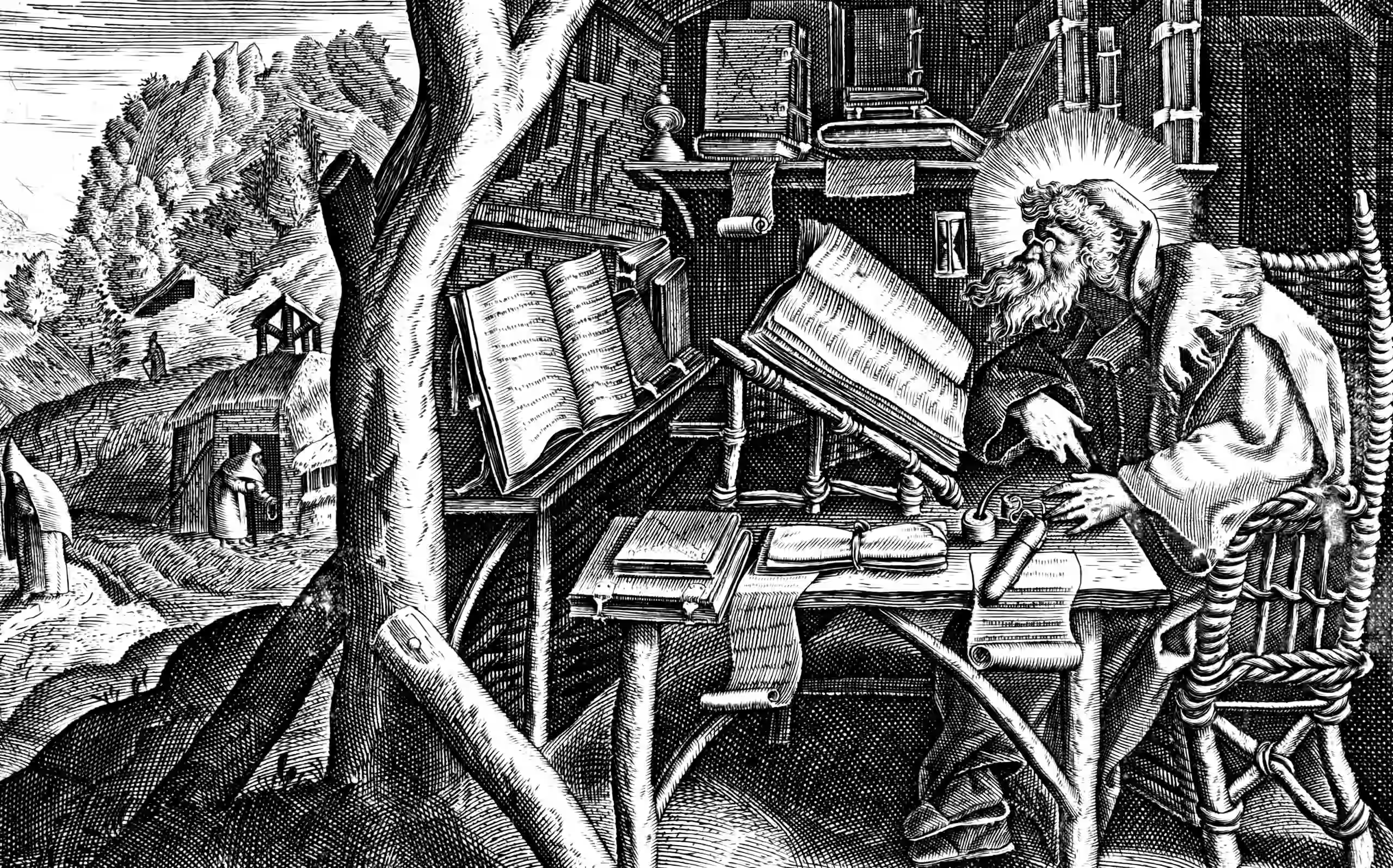

In Which the Distinction Between Causal and Evidential Decision Rules Is Examined Through the Lens of Mutually Predictive Agents, With Newcomb’s Paradox Presented as the Animating Illustrative Case.

Vanilla decision theory is the field that studies the connection of decision to inference. When someone in AI Safety says “decision theory” they typically don’t want that vanilla stuff. They want the primo, uncut cosmic shit.

Cosmic decision theories are concerned with a very particular set of problems that are relevant to superintelligence, especially wrt agent foundations and alignment. Normie vanilla decision theory is, AFAICT, still included in cosmic decision theory, but as kind of a trivial, boring case. Cosmic decision theories worry about intellectual catnip such as what happens when you have agents that are perfect predictors of each other, or whatever. Keyword: Newcomb’s paradox.

Here are some brief notes about cosmic decision theories. Not many though, because I am generally sceptical of the value of these theories; I think they point at something important but lean upon suspect asymptotics. I moreover think it’s bad to lean upon excessively cosmic asymptotics, because the distinctions that usually come up in the cosmic context, such as “causal” versus “evidential” decision theories, are ones we also want to think about in very normie settings. Intuitively, I mean. I want an intuitive, normal version of evidential decision theory for use in my own life. We, or at least I, want to talk about agents with evidential-vs-causal-vs-functional-vs-updateless decision rules, but I rather suspect that those rules, as commonly taught, import more weirdness than they need to.

I might be wrong about that, and in fact maybe we do need all that cosmic shit. For now though, I will be kind to my soft money brain and will try to work it through via the not-very-cosmic, basic tools of mechanised decision theory, and then come back to more nakedly cosmic, asymptotic theories if I run into trouble.

What exactly do I mean by “cosmic asymptotics”? I’m not sure yet, because I’m still trying to work through this material, but whatever weirdness goes off in your brain when someone talks about Newcomb’s paradox, that’s what I am pointing to. I will endeavour to make precise what I mean when I have found the words and intuition to do so.

Those notes follow.

1 Causal vs Evidential decision theory

A reflective twist on game theory looks at decision problems involving smart, predictive agents.
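To make the causal/evidential split concrete, here is a minimal sketch of Newcomb’s problem in Python. Everything in it is an illustrative assumption rather than anything from the references: the conventional payoffs of 1,000 in the transparent box and 1,000,000 in the opaque one, a predictor summarised by a single `accuracy` number, and the hypothetical function names `edt_value`, `cdt_value` and `best`. The evidential rule treats the action as evidence about what the predictor did; the causal rule holds the box contents fixed at some prior probability `p_full`, whatever we choose.

```python
# Toy Newcomb's problem. Payoffs and names are illustrative assumptions.
ACTIONS = ("one-box", "two-box")


def edt_value(action, accuracy, small=1_000, big=1_000_000):
    """Evidential expected payoff: condition on the action itself.

    `accuracy` is P(prediction matches the action), so choosing to
    one-box is evidence that the opaque box is full.
    """
    p_full = accuracy if action == "one-box" else 1 - accuracy
    bonus = small if action == "two-box" else 0
    return p_full * big + bonus


def cdt_value(action, p_full, small=1_000, big=1_000_000):
    """Causal expected payoff: the box was filled before we act.

    `p_full` is a fixed prior that the opaque box is full; the action
    cannot change it, so two-boxing always adds `small` on top.
    """
    bonus = small if action == "two-box" else 0
    return p_full * big + bonus


def best(value_fn, param):
    """Return the action that maximises the given expected payoff."""
    return max(ACTIONS, key=lambda a: value_fn(a, param))


if __name__ == "__main__":
    print("EDT, 99% accurate predictor:", best(edt_value, 0.99))
    print("CDT, any prior (here 0.5):  ", best(cdt_value, 0.5))
```

Under these assumptions the causal rule two-boxes for every value of `p_full`, because two-boxing dominates once the contents are held fixed. The evidential rule one-boxes only when `accuracy * big` exceeds `(1 - accuracy) * big + small`, which with these payoffs means an accuracy above 0.5005. So the disagreement does not need a perfect predictor, only one slightly better than a coin flip.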

I have had the following resources recommended to me:

- Causal decision theory

- Evidential decision theory

- Veritasium: This Paradox Splits Smart People 50/50

We might want to start with MIRI’s introductory reading list:

> Existing methods of counterfactual reasoning turn out to be unsatisfactory both in the short term (in the sense that they systematically achieve poor outcomes on some problems where good outcomes are possible) and in the long term (in the sense that self-modifying agents reasoning using bad counterfactuals would, according to those broken counterfactuals, decide that they should not fix all of their flaws).

I haven’t read any of those, though. I’d probably start with Wolpert and Benford (2013); David Wolpert always seems to have a good Gordian knot cutter on his analytical multitool.