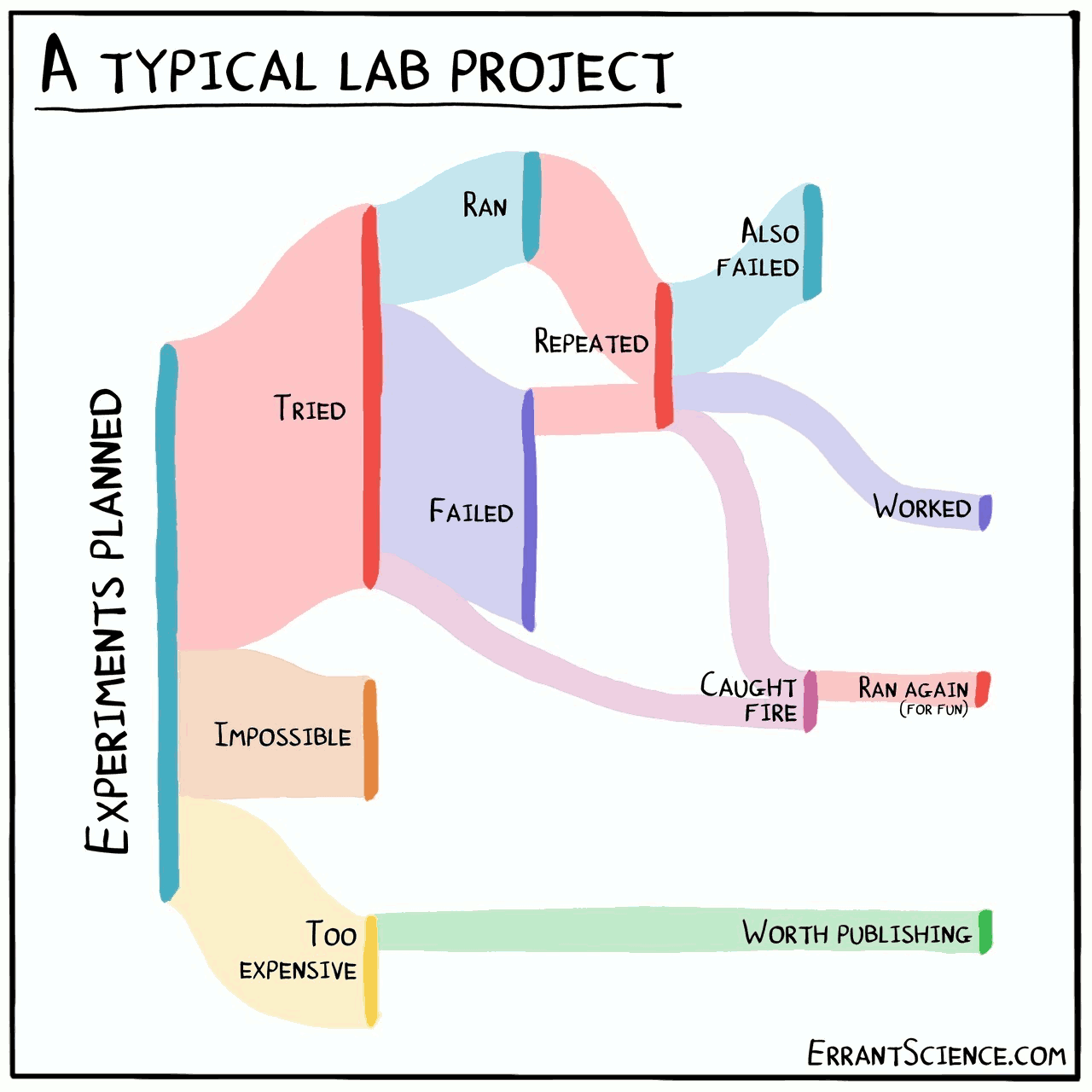

Tracking experiments in data science

2020-10-23 — 2024-02-01

Wherein the Landscape of Experiment Tracking Is Surveyed; Special Attention Is Given to Neural Nets as Ongoing Convergence Monitored, and Tooling From Sacred to MLflow Is Catalogued.

1 For neural nets in particular

Neural nets are a special case because we do not expect to run discrete experiments, but rather to monitor the convergence of an ongoing one. See experiment tracking for neural nets.
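To make "monitoring convergence" concrete, here is a minimal, library-free sketch: per-step metrics are appended to a JSON-lines file while training runs, and a separate check reads them back to decide whether the loss has flattened out. The function names (`log_step`, `read_losses`, `has_plateaued`), the window and tolerance values, and the fake exponential loss are all assumptions for illustration, not part of any tool discussed here.

```python
import json
import math
import tempfile
from pathlib import Path


def log_step(log_path, step, loss):
    """Append one metric record to a JSON-lines file."""
    with open(log_path, "a") as f:
        f.write(json.dumps({"step": step, "loss": loss}) + "\n")


def read_losses(log_path):
    """Read all logged losses back, in the order they were written."""
    with open(log_path) as f:
        return [json.loads(line)["loss"] for line in f if line.strip()]


def has_plateaued(losses, window=5, tol=1e-3):
    """True if the mean loss improved by less than `tol` between the
    previous `window` steps and the most recent `window` steps."""
    if len(losses) < 2 * window:
        return False
    recent = sum(losses[-window:]) / window
    previous = sum(losses[-2 * window:-window]) / window
    return (previous - recent) < tol


log_path = Path(tempfile.mkdtemp()) / "metrics.jsonl"
for step in range(60):
    fake_loss = math.exp(-0.3 * step)  # stand-in for a real training loss
    log_step(log_path, step, fake_loss)

losses = read_losses(log_path)
print("plateaued:", has_plateaued(losses))
```

Because the log is append-only, a second process can poll the file and run the check while the training job is still going, which is the main difference from tracking a finished, discrete experiment.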

2 Sacred

Sacred is a tool to configure, organize, log and reproduce computational experiments. It is designed to introduce only minimal overhead, while encouraging modularity and configurability of experiments.

The ability to conveniently make experiments configurable is at the heart of Sacred. If the parameters of an experiment are exposed in this way, it will help you to:

- keep track of all the parameters of your experiment

- easily run your experiment for different settings

- save configurations for individual runs in files or a database

- reproduce your results

Parallelism is managed via Observers. If we wanted a SLURM executor we would need to write a custom observer. The best-supported storage backend seems to be a MongoDB server process, although a `FileStorageObserver` that writes to a local directory also exists.
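The idea of exposing parameters and saving the configuration of each run to a file can be sketched without any library. This is not Sacred's API, only a stand-in for the pattern it automates; `Config`, `run_experiment` and `tracked_run`, the parameter names, and the directory layout are all hypothetical.

```python
import json
import tempfile
import time
import uuid
from dataclasses import asdict, dataclass, replace
from pathlib import Path


@dataclass(frozen=True)
class Config:
    # Hypothetical parameters, for illustration only.
    learning_rate: float = 0.01
    n_epochs: int = 3
    seed: int = 0


def run_experiment(cfg):
    """Stand-in for real work; returns a deterministic fake metric."""
    return {"score": cfg.learning_rate * cfg.n_epochs + cfg.seed}


def tracked_run(base_dir, cfg=Config(), **overrides):
    """Apply overrides, run, and write config and result to a fresh run directory."""
    cfg = replace(cfg, **overrides)
    stamp = time.strftime("%Y%m%dT%H%M%S")
    run_dir = Path(base_dir) / f"{stamp}-{uuid.uuid4().hex[:8]}"
    run_dir.mkdir(parents=True)
    # Save the full, resolved configuration before running, so that even
    # a crashed run leaves a record of what was attempted.
    (run_dir / "config.json").write_text(json.dumps(asdict(cfg), indent=2))
    result = run_experiment(cfg)
    (run_dir / "result.json").write_text(json.dumps(result, indent=2))
    return run_dir


base = tempfile.mkdtemp()
run_a = tracked_run(base)                                   # default settings
run_b = tracked_run(base, learning_rate=0.1, n_epochs=10)   # a different setting
print(sorted(p.name for p in Path(base).iterdir()))
```

Reproducing a run then amounts to reading its `config.json` back and passing the values to `tracked_run` again.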

3 Aimstack

FS-backed indexing of artefacts.

Storage is RocksDB, which is not multi-process safe: only one process can open a database for writing at a time.

4 Guild AI

Value proposition: stores all information in a local FS-backed system.

TBD

Parallel execution is filed under remotes. They seem to favour synchronous execution on VMs rather than SLURM. It might be possible to hack in submitit via the Python API.

5 MLflow

I ran across it for NNs, but apparently it has affordances for other ML experiments too.

Seems interesting; the main shortcoming seems to be that it only supports multiprocessing-type parallelism. See MLflow.

6 ml-metadata

A data store for use with a configuration system.

ML Metadata (MLMD) is a library for recording and retrieving metadata associated with ML developer and data scientist workflows. MLMD is an integral part of TensorFlow Extended (TFX), but designed so that it can be used independently.

Every run of a production ML pipeline generates metadata containing information about the various pipeline components, their executions (e.g. training runs), and resulting artifacts (e.g. trained models). In the event of unexpected pipeline behaviour or errors, this metadata can be leveraged to analyse the lineage of pipeline components and debug issues. Think of this metadata as the equivalent of logging in software development.

MLMD helps you understand and analyse all the interconnected parts of your ML pipeline instead of analysing them in isolation and can help you answer questions about your ML pipeline such as:

- Which dataset did the model train on?

- What were the hyperparameters used to train the model?

- Which pipeline run created the model?

- Which training run led to this model?

See MLMD guide.
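The lineage questions above come down to queries over a small graph of artifacts, executions, and the input/output events linking them. The following toy in-memory store is a sketch of that idea only; it does not use the MLMD library or its API, and the class, method names and identifiers (`Store`, `record`, `producer`, `inputs_of`, `"train-001"`) are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    artifacts: dict = field(default_factory=dict)   # artifact id -> description
    executions: dict = field(default_factory=dict)  # execution id -> properties
    events: list = field(default_factory=list)      # (execution id, artifact id, kind)

    def record(self, execution_id, properties, inputs, outputs):
        """Register an execution and link it to its input and output artifacts."""
        self.executions[execution_id] = properties
        for artifact_id in inputs:
            self.events.append((execution_id, artifact_id, "INPUT"))
        for artifact_id in outputs:
            self.events.append((execution_id, artifact_id, "OUTPUT"))

    def producer(self, artifact_id):
        """Return the id of the execution that output this artifact, or None."""
        for execution_id, art, kind in self.events:
            if art == artifact_id and kind == "OUTPUT":
                return execution_id
        return None

    def inputs_of(self, execution_id):
        """Return the ids of all artifacts this execution consumed."""
        return [art for ex, art, kind in self.events
                if ex == execution_id and kind == "INPUT"]


store = Store()
store.artifacts.update({"data:v1": "training dataset", "model:v1": "trained model"})
store.record("train-001", {"learning_rate": 0.01, "epochs": 5},
             inputs=["data:v1"], outputs=["model:v1"])

run = store.producer("model:v1")          # which training run led to this model?
trained_on = store.inputs_of(run)         # which dataset did the model train on?
hyperparameters = store.executions[run]   # what were the hyperparameters?
print(run, trained_on, hyperparameters)
```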

7 DrWatson

DrWatson is a scientific project assistant software package. Here is what it can do:

- Project Setup: A universal project structure and functions that allow you to consistently and robustly navigate through your project, no matter where it is located on your hard drive.

- Naming Simulations: A robust and deterministic scheme for naming and handling your containers.

- Saving Tools: Tools for safely saving and loading your data, tagging the Git commit ID to your saved files, safety when tagging with dirty repos, and more.

- Running & Listing Simulations: Tools for producing tables of existing simulations/data, adding new simulation results to the tables, preparing batch parameter containers, and more.

Think of these core aspects of DrWatson as independent islands connected by bridges. If you don’t like the approach of one of the islands, you don’t have to use it to take advantage of DrWatson!

Applications of DrWatson are demonstrated on the Real World Examples page. All of these examples are taken from code of real scientific projects that use DrWatson.

Please note that DrWatson is not a data management system.
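Two of the ideas listed above, deterministic naming from a parameter container and tagging saved results with the Git commit ID, are easy to sketch in Python. DrWatson itself is a Julia package, so the functions below (`deterministic_name`, `git_commit_id`) are hypothetical stand-ins rather than its API, and the `-dirty` suffix is an assumed convention.

```python
import subprocess


def deterministic_name(params, connector="_", digits=3):
    """Build a name from a parameter dict: keys are sorted and floats are
    rounded, so the same parameters always give the same name."""
    parts = []
    for key in sorted(params):
        value = params[key]
        if isinstance(value, float):
            value = round(value, digits)
        parts.append(f"{key}={value}")
    return connector.join(parts)


def git_commit_id(path="."):
    """Return the current commit hash, with '-dirty' appended if there are
    uncommitted changes. Returns None if git or a repository is unavailable."""
    try:
        commit = subprocess.run(
            ["git", "-C", path, "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        ).stdout.strip()
        status = subprocess.run(
            ["git", "-C", path, "status", "--porcelain"],
            capture_output=True, text=True, check=True,
        ).stdout
    except (subprocess.CalledProcessError, FileNotFoundError):
        return None
    return commit + ("-dirty" if status.strip() else "")


params = {"N": 100, "alpha": 0.123456, "method": "euler"}
name = deterministic_name(params)
record = {"params": params, "gitcommit": git_commit_id()}
print(name)
```

Storing `record` next to the output file named by `name` ties each result to both its parameters and the code version that produced it.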

8 Lancet

Lancet comes from neuroscience.

Lancet is designed to help you organize the output of your research tools, store it, and dissect the data you have collected. The output of a single simulation run or analysis rarely contains all the data you need; Lancet helps you generate data from many runs and analyse it using your own Python code.

Its special selling point is that it integrates exploratory parameter sweeps. (Can it do so interactively, I wonder?)

Parameter spaces often need to be explored for the purpose of plotting, tuning, or analysis. Lancet helps you extract the information you care about from potentially enormous volumes of data generated by such parameter exploration.

It natively supports the over-engineered campus job manager Platform LSF, and it has a thoughtful workflow for reproducible notebook computation. It is less focused on the dependency management side.
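For readers unfamiliar with the term, a parameter sweep in its simplest form is a Cartesian product over parameter axes, with one run per point and the results collected for later analysis. The sketch below uses only the standard library and is not Lancet's API; `grid`, `simulate`, and the parameter names are hypothetical.

```python
import itertools


def grid(**axes):
    """Yield one dict per point in the Cartesian product of the given axes."""
    names = list(axes)
    for values in itertools.product(*(axes[name] for name in names)):
        yield dict(zip(names, values))


def simulate(rate, noise):
    """Hypothetical stand-in for a single simulation run."""
    return rate * 10 - noise


# Run every combination and keep the parameters next to the output.
results = [
    {**point, "output": simulate(**point)}
    for point in grid(rate=[0.1, 0.5, 1.0], noise=[0.0, 0.2])
]

# Extract the information we care about from the collected runs.
best = max(results, key=lambda r: r["output"])
print(len(results), best)
```

A tool like Lancet adds what this sketch leaves out: dispatching the runs to a cluster and keeping track of where each output ended up.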

9 CaosDB

Some kind of “researcher-friendly” MySQL frontend for managing experiments. I’m not sure how well this truly integrates into a workflow of solving problems I actually have.

CaosDB - Research Data Management for Complex, Changing, and Automated Research Workflows

Here we present CaosDB, a Research Data Management System (RDMS) designed to ensure seamless integration of inhomogeneous data sources and repositories of legacy data. Its primary purpose is the management of data from biomedical sciences, both from simulations and experiments during the complete research data lifecycle. An RDMS for this domain faces particular challenges: Research data arise in huge amounts, from a wide variety of sources, and traverse a highly branched path of further processing. To be accepted by its users, an RDMS must be built around workflows of the scientists and practices and thus support changes in workflow and data structure. Nevertheless it should encourage and support the development and observation of standards and furthermore facilitate the automation of data acquisition and processing with specialized software. The storage data model of an RDMS must reflect these complexities with appropriate semantics and ontologies while offering simple methods for finding, retrieving, and understanding relevant data. We show how CaosDB responds to these challenges and give an overview of the CaosDB Server, its data model and its easy-to-learn CaosDB Query Language. We briefly discuss the status of the implementation, how we currently use CaosDB, and how we plan to use and extend it.

10 TensorBoard

Oh go on, TensorBoard is kinda OK at this.

11 Forge

TBC. Forge is Adam Kosiorek's attempt to create a custom experiment-oriented build tool.

Forge makes it easier to configure experiments and allows easier model inspection and evaluation due to smart checkpoints. With Forge, you can configure and build your dataset and model in separate files and load them easily in an experiment script or a jupyter notebook. Once the model is trained, it can be easily restored from a snapshot (with the corresponding dataset) without the access to the original config files.

12 Sumatra

Sumatra is about tracking and reproducing simulation or analysis parameters for sciencey types, especially in exploratory data pipelines. It is language-agnostic, but written in Python.