Reproducibility in Machine Learning research

2024-05-05 — 2025-11-12

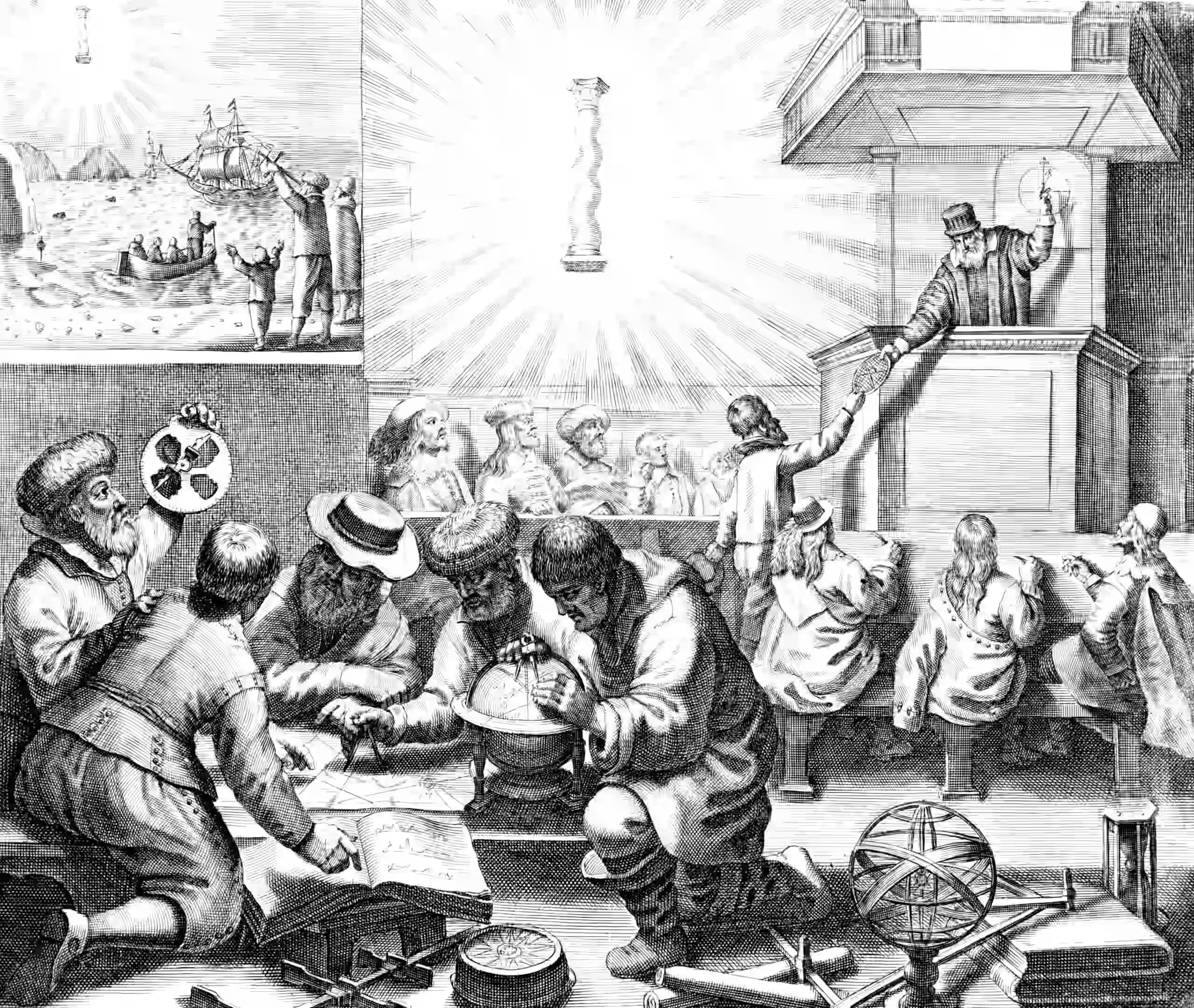

Wherein the Practice of Reproducing ML Findings Is Described, a Case Study Is Provided: Computo’s Policies Are Outlined, With Virtual Environments Tagged and Repositories Archived on Software Heritage.

How does reproducible science actually happen for ML models and algorithms? How can we responsibly communicate how well the method in a sexy new paper is likely to work in practice?

Various syntheses arise from time to time: Albertoni et al. (2023); Pineau et al. (2020).

Implicitly, this ties into the science of benchmarking and what that even means as a “scientific method”.

1 Computo journal

A fascinating case study.

Highlights from Computo’s about page:

Computo has been created in the context of a reproducibility crisis in science, which calls for higher standards in the publication of scientific results. Computo aims at promoting computational/algorithmic contributions in statistics and machine learning (ML) that provide insight into which models or methods are the most appropriate to address a specific scientific question.

The journal welcomes the following types of contributions:

- New methods with original stats/ML developments, or numerical studies that illustrate theoretical results in stats/ML;

- Case studies or surveys on stats/ML methods to address a specific (type of) question in data analysis, neutral comparison studies that provide insight into when, how, and why the compared methods perform well or less well;

- Software/tutorial papers to present implementations of stats/ML algorithms or to feature the use of a package/toolbox. For such papers we expect not only the description of an existing implementation but also the study of a concrete use case. If applicable, a comparison to related works and appropriate benchmarking are also expected.

The reviews and the discussions they sparked between authors and their peers are open, i.e. visible to any reader after acceptance of the contribution. These reviews are published either as issues in the git repository associated with the publication, or directly available for consultation on Open review.

Note that Reviewers may choose to remain anonymous or not.

The issue of reproducibility is at the heart of the Computo project. Therefore, the reproducibility of numerical results is a necessary condition for publication in Computo. To this end, we rely on a combination of notebooks, literate programming, virtual environments, and continuous integration.

Submissions must also include all necessary data (e.g., via Zenodo repositories) and code. For contributions featuring the implementation of methods or algorithms, the quality of the provided code is assessed during the review process.

For interested readers, more details are provided on this page about our expectations regarding reproducibility. […] To ensure that all published information remains accessible and usable over time:

- we tag every published article, including its virtual environment;

- we regularly run the workflow of each published article to check its medium- and long-term reproducibility;

- we archive all git repositories of published articles on Software Heritage.
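As a rough illustration of the "tag the environment, then re-run the workflow" idea in the list above, here is a minimal Python sketch. It is not Computo's actual tooling, which the quoted page describes only in terms of notebooks, virtual environments and continuous integration. Every name here (`environment_snapshot`, `fingerprint`, `toy_experiment`, `check_reproducible`) is hypothetical, and `toy_experiment` is a stand-in for an article's real workflow.

```python
import hashlib
import json
import platform
import random
import sys
from importlib import metadata


def environment_snapshot(packages):
    """Record interpreter, platform, and installed versions of the named packages."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


def fingerprint(obj):
    """Stable SHA-256 digest of a JSON-serialisable result."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def toy_experiment(seed):
    """Hypothetical stand-in for an article's workflow: a seeded computation."""
    rng = random.Random(seed)
    return [round(rng.gauss(0.0, 1.0), 12) for _ in range(5)]


def check_reproducible(run, seed, expected_digest):
    """Re-run the workflow and compare its digest with the recorded one."""
    return fingerprint(run(seed)) == expected_digest


if __name__ == "__main__":
    # At "publication" time: store the environment and the result digest.
    snapshot = environment_snapshot(["pip", "setuptools"])
    recorded = fingerprint(toy_experiment(seed=0))
    print(json.dumps(snapshot, indent=2))
    # Later, e.g. from a scheduled job: re-run and compare.
    print("reproducible:", check_reproducible(toy_experiment, 0, recorded))
```

Comparing exact digests is a deliberately strict design choice. Real numerical workflows often differ in the last few floating-point digits across platforms or library versions, so a practical check would more likely compare results within a tolerance and use the stored environment snapshot to explain any drift.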

2 Difficulties of foundation models in particular

It’s a problem when our model is both too large and too secretive to interrogate. TBD

3 Connection to domain adaptation

How do we know our models generalize to the wild? See Domain adaptation.

4 Benchmarks

See ML benchmarks.

5 Incoming

REFORMS: Reporting standards for ML-based science

The REFORMS checklist consists of 32 items across 8 sections. It is based on an extensive review of the pitfalls and best practices in adopting ML methods. We created an accompanying set of guidelines for each item in the checklist. We include expectations about what it means to address the item sufficiently. To aid researchers new to ML-based science, we identify resources and relevant past literature.

The REFORMS checklist differs from the large body of past work on checklists in two crucial ways. First, we aimed to make our reporting standards field-agnostic, so that they can be used by researchers across fields. To that end, the items in our checklist broadly apply across fields that use ML methods. Second, past checklists for ML methods research focus on reproducibility issues that arise commonly when developing ML methods. But these issues differ from the ones that arise in scientific research. Still, past work on checklists in both scientific research and ML methods research has helped inform our checklist.