Scaling laws for very large neural nets

Theory of trading-off budgets for compute size and data

2021-01-14 — 2025-11-26

Wherein Observational Scaling From Nearly a Hundred Public Models Is Described, a Low‑dimensional Capability Space Is Proposed, and RL Scaling Is Shown to Be Far Weaker Than Inference‑time Compute.

Got good behaviour from a million-parameter model? Want to see whether things get weirder as we push to a billion parameters? Turns out they do! They even seem to do so dependably! There’s something philosophically deep going on here. Why does looking at more stuff — more data or larger models — seem to bring more computationally complex problems within reach? I don’t know, but I’m keen to carve out some time to solve that.

Brief links on the theme of scaling in the extremely large model / large-data limit and what that does to the models’ behaviour. This is a new front in the complexity and/or statistical mechanics of statistics, and in whether neural networks extrapolate.

For how to scale up these models in practice, see distributed gradient descent.

Content on this page hasn’t been updated as fast as the field has been moving; you should follow the references for the latest.

1 Bitter lessons in compute

See optimal cleverness.

2 Big transformers

One fun result came from transformer language models. An interesting observation in 2020 was an unexpected trade-off: we could go faster by training a bigger network. I think this paper was ground zero for modern scaling studies, which try to identify and predict optimal trade-offs and ultimate performance under different scaling (of compute, data, parameters) regimes.

nostalgebraist summarises Henighan et al. (2020); Kaplan et al. (2020):

2.1 L(D): information

OpenAI derives a scaling law called L(D). This law is the best you could possibly do — even with arbitrarily large compute/models — if you are only allowed to train on D data points.

No matter how good your model is, there’s only so much it can learn from a finite sample. L(D) quantifies this intuitive fact (if the model is an autoregressive transformer).

2.2 L(C): budgeting

OpenAI also derives another scaling law called L(C). This is the best you can do with compute C if you spend it optimally.

What does optimal spending look like? Remember, you can spend a unit of compute on * a bigger model (N), or * training the same model for longer (S)

… In the compute regime we’re currently in, making the model bigger is way more effective than taking more steps.

Scaling laws keep getting revised.

- Thoughts on the new scaling laws for large language models

- New Scaling Laws for Large Language Models

2.3 Observational

Ruan, Maddison, and Hashimoto (2025):

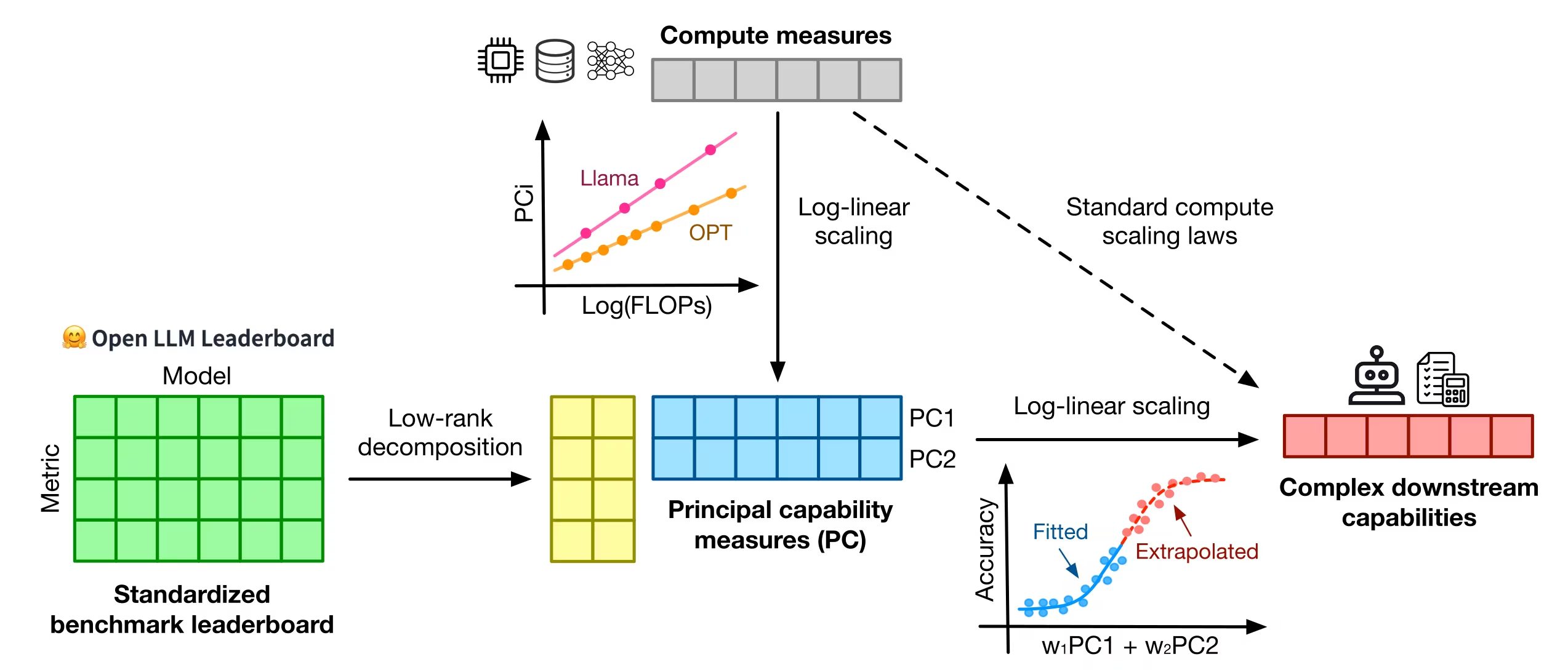

Understanding how language model performance varies with scale is critical to benchmark and algorithm development. Scaling laws are one approach to building this understanding, but the requirement of training models across many different scales has limited their use. We propose an alternative, observational approach that bypasses model training and instead builds scaling laws from ~100 publicly available models. Building a single scaling law from multiple model families is challenging due to large variations in their training compute efficiencies and capabilities. However, we show that these variations are consistent with a simple, generalized scaling law where language model performance is a function of a low-dimensional capability space, and model families only vary in their efficiency in converting training compute to capabilities. Using this approach, we show the surprising predictability of complex scaling phenomena: we show that several emergent phenomena follow a smooth, sigmoidal behaviour and are predictable from small models; we show that the agent performance of models such as GPT-4 can be precisely predicted from simpler non-agentic benchmarks; and we show how to predict the impact of post-training interventions like Chain-of-Thought and Self-Consistency as language model capabilities continue to improve.

Standard compute scaling In compute scaling laws, there is a hypothesized power-law relationship between models’ compute measures \(C_m\) (e.g., training FLOPs) and their errors \(E_m\) (e.g., perplexity). Specifically, for a model \(m\) within a family \(f\) (e.g., Llama-2 7B, 13B, and 70B) we hypothesize

\[ \log \left(E_m\right) \approx \beta_f \log \left(C_m\right)+\alpha_f \]

and if this linear fit is sufficiently accurate, we draw inferences about the performance of a model at future compute scales \(C^{\prime}>C\) by extrapolating this relationship. However, fitting such a scaling law can be tricky, as each model family \(f\) and downstream benchmark has its own scaling coefficients \(\beta_f\) and \(\alpha_f\). This means that scaling experiments, especially for post-training analysis, are often fitted on very few (3-5) models sharing the same model family, and any predictions are valid only for a specific scaling strategy used within a model family. Several studies […] have generalized the functional form to analyse the scaling of LMs’ downstream performance (where \(E_m\) is normalised to \([0,1]\)) with a sigmoidal link function \(\sigma\) :

\[ \sigma^{-1}\left(E_m\right) \approx \beta_f \log \left(C_m\right)+\alpha_f \]

Observational scaling: In our work, we hypothesize the existence of a low-dimensional capability measure for LMs that relate compute to more complex LM capabilities and can be extracted from observable standard LM benchmarks, as illustrated in Figure 2. Specifically, given \(T\) simple benchmarks and \(B_{i, m}\) the error of a model \(m\) on benchmark \(i \in[T]\), we hypothesize that there exists some capability vector \(S_m \in \mathbb{R}^K\) such that,

\[ \begin{aligned} \sigma^{-1}\left(E_m\right) & \approx \beta^{\top} S_m+\alpha \\ S_m & \approx \theta_f \log \left(C_m\right)+\nu_f \\ B_{i, m} & \approx \gamma_i^{\top} S_m \end{aligned} \]

for \(\theta_f, \nu_f, \beta \in \mathbb{R}^K, \alpha \in \mathbb{R}\), and orthonormal vectors \(\gamma_i \in \mathbb{R}^K\).

3 RL Scaling and reasoning

The scaling laws discussed above primarily concern the pre-training phase, where the objective is typically next-token prediction over vast datasets.1 However, the capabilities of modern frontier models are increasingly driven by post-training refinements and by how the models use compute during inference.2 This involves two key paradigms: Reinforcement Learning (RL) and Chain-of-Thought (CoT) reasoning.3

3.1 Chain-of-Thought and Inference Scaling

As models scale, they develop latent abilities for complex reasoning.4 Chain-of-Thought (CoT) reasoning exploits this by prompting the model to generate intermediate steps—a “scratchpad” or “thinking out loud”—before committing to a final answer. This dramatically improves performance on multi-step tasks like maths and coding (Wei et al. 2023).

3.2 Reinforcement Learning and Training Scaling

How do we train models to use CoT effectively and align their behaviour with our goals?

This is typically achieved through Reinforcement Learning (RL). In the RL phase, the model is optimized based on feedback.

This feedback can take the form of human preferences (Reinforcement Learning from Human Feedback, or RLHF) or verifiable rewards (e.g., solving a maths problem correctly).

This introduces another axis: RL scaling. This refers to increasing the amount of compute dedicated to this post-training phase.

Initially, RL scaling seemed highly attractive because the compute used for RL was minuscule compared to the massive investment in pre-training, allowing for significant capability improvements without drastically increasing the total training budget.

3.3 How Well Does RL Scale?

Which factor is driving the recent gains—the RL training, or the inference scaling it enables? And how efficiently does RL training convert compute into capability?

Toby Ord investigates these trade-offs in How Well Does RL Scale?. His findings suggest that RL scales surprisingly poorly compared to inference scaling.8

Ord argues that while RL training does provide a direct performance boost (the “RL boost”), its primary contribution is unlocking the model’s ability to productively use much longer chains of thought.

In analysing several benchmarks, Ord found that the majority of the performance improvement (sometimes over 80–90%) comes from the subsequent inference-scaling boost, rather than the RL training itself.

The efficiency of RL scaling seems to be low. Ord notes that most of the gains come from unlocked inference compute rather than from RL compute.

The chart shows steady improvements for both RL-scaling and inference-scaling, but they are not the same. […] the slope of the RL-scaling graph (on the left) is almost exactly half that of the slope of the inference-scaling graph (on the right).13

Because these graphs use logarithmic x-axes, a slope that’s half as steep means RL scaling requires twice as many orders of magnitude of compute to achieve the same improvement in capabilities.

- A 100x scale-up in inference compute might raise a benchmark score from 20% to 80%.

- Achieving the same improvement via RL scaling would require a 10,000x (100²) increase in RL training compute.

While we’ve seen RL be cost-effective so far because of its low starting point, these poor scaling dynamics suggest the advantage is rapidly eroding. When RL training compute starts to rival pre-training compute, that inefficiency becomes a major economic bottleneck.

I estimate that at the time of writing (Oct 2025), we’ve already seen something like a 1,000,000x scale-up in RL training and it required ≤2x the total training cost. But the next 1,000,000x scale-up would require 1,000,000x the tot16al training cost, which is not possible in the foreseeable future.17

This implies that future capability gains may be increasingly tied to expensive inference-time scaling — making models think longer and harder at runtime — rather than from fundamental improvements to the RL training process.

4 Incoming

The Scaling Paradox — Toby Ord

- AI progress over time is impressive even if progress as a function of resources is not.

- The scaling laws are smooth and long-lasting, but they prove poor yet predictable scaling rather than genuinely powerful scaling.

- Although we know that AI quality metrics scale very poorly with resources, real-world impacts may scale much better.

Zhang et al. (2020) (how do NNs learn from language as n increases?)

DeepSpeed Compression: A composable library for extreme compression and zero-cost quantization (targeting large language models)