Operationalising the bitter lessons in compute and cleverness

Amortizing the cost of being smart

2021-01-14 — 2025-01-26

Wherein it is argued that massive training compute is justified because inference is cheaply amortised, enabling foundation models trained once to serve many tasks, and token-level economics and compute overhangs are examined

What to compute, and when, to make inference about the world with greatest efficiency. Overparameterization and inductive biases. Operationalizing the scaling hypothesis. Compute overhangs. Tokenomics. Amortization of inference. Reduced order modelling. Training time versus inference time. The business model of FLOPS.

Concretely, traditional statistics was greatly upset when it turned out that laboriously proving things was not as effective as training a neural network to do the same thing. Foundation models and LLMs upset the field again by making things even weirder: it turns out to be worthwhile to spend a lot of money training a gigantic model on all the data you can get, and then to use that model for very cheap inference. Essentially, you pay once for a very expensive training run, and thereafter everything is cheap.
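A minimal sketch of the amortisation arithmetic, using made-up costs. The total cost is a one-off training cost plus a per-query inference cost, so the average cost per query falls towards the bare inference cost as the number of queries grows. The function names and all numbers below are hypothetical, not figures from any real model.

```python
def amortised_cost_per_query(train_cost: float, cost_per_query: float, n_queries: float) -> float:
    """Average cost per query when a one-off training cost is spread over n_queries."""
    return train_cost / n_queries + cost_per_query


def break_even_queries(train_a: float, query_a: float, train_b: float, query_b: float) -> float:
    """Query count n at which option A (dearer to train, cheaper per query) matches
    option B in total cost, i.e. solves train_a + n * query_a == train_b + n * query_b."""
    if query_a >= query_b:
        raise ValueError("option A never breaks even unless its queries are cheaper")
    return (train_a - train_b) / (query_b - query_a)


# Hypothetical numbers: a 10-million-dollar training run that then answers queries at
# a tenth of a cent each, versus a process with no up-front cost at one cent per query.
print(amortised_cost_per_query(1e7, 1e-3, 1e10))  # training adds only 1e-3 per query here
print(break_even_queries(1e7, 1e-3, 0.0, 1e-2))   # roughly 1.1e9 queries
```

Past the break-even point, every additional query makes the big up-front spend look better, which is the sense in which the expensive training run is "paid once".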

A lot of ML problems are in hindsight implicitly about this question of when to spend your compute budget. I think it is useful to group the normative question of doing this well under the heading of when to compute.

1 Background

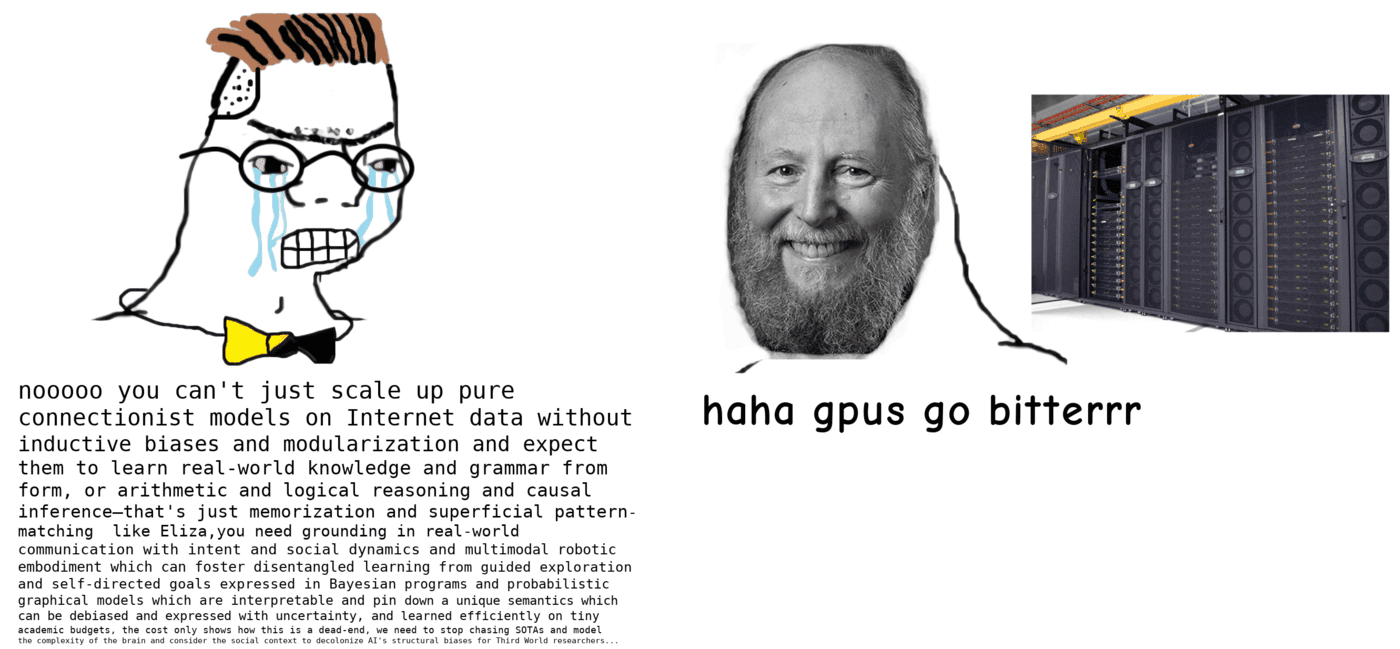

Sutton’s famous bitter lesson set the terminology:

The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin.

Lots of people declaim this one, e.g. Orhan in On the futility of trying to be clever (the bitter lesson redux), who wraps up with some concrete research directions:

Alternative phrasing: Even the best human mind is not very good, so the quickest path to intelligence is the one that prioritizes replacing that bottleneck.

Methods that can scale up win. The practical upshot is that the most important thing is to deploy the compute you have effectively.

I do think that there are many interesting research directions consistent with the philosophy of the bitter lesson that can have more meaningful, longer-term impact than small algorithmic or architectural tweaks. I just want to wrap up this post by giving a couple of examples below:

Probing the limits of model capabilities as a function of training data size: can we get to something close to human-level machine translation by leveraging everything multi-lingual on the web (I think we’ve learned that the answer to this is basically yes)? Can we get to something similar to human-level language understanding by scaling up the GPT-3 approach a couple of orders of magnitude (I think the answer to this is probably we don’t know yet)? Of course, smaller scale, less ambitious versions of these questions are also incredibly interesting and important.

Finding out what we can learn from different kinds of data and how what we learn differs as a function of this: e.g. learning from raw video data vs. learning from multi-modal data received by an embodied agent interacting with the world; learning from pure text vs. learning from text + images or text + video.

Coming up with new model architectures or training methods that can leverage data and compute more efficiently, e.g., more efficient transformers, residual networks, batch normalization, self-supervised learning algorithms that can scale to large (ideally unlimited) data (e.g. likelihood-based generative pre-training, contrastive learning).

I think that Orhan’s statement is easier to engage with than Sutton’s because he provides concrete examples. Plus, his invective is tight.

The examples he gives suggest that when data is plentiful, your main goal should be to work out how to feed all of it into compute-heavy infrastructure that can extract the information it contains.

This does not apply everywhere. In hydrology, for example, a single data point on my last project cost AUD 700,000, because that is what drilling a thousand-metre well costs. Telling me to collect a billion more data points rather than being clever is not useful, since collapsing the entire global economy to collect my data points would not be clever either. There might be ways to get more data, such as pretraining a geospatial foundation model on hydrology-adjacent tasks, but this does not look trivial.
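To put a number on the hyperbole: the billion wells and the per-well cost come from the text above, and the only outside figure is that annual gross world product is of order 10^14 US dollars.

```latex
10^{9}\ \text{wells} \times \text{AUD}\,7 \times 10^{5}\ \text{per well} = \text{AUD}\,7 \times 10^{14}
```

That is several times a year's output of the entire world economy, spent on one aquifer study.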

What we generally want is not a homily about cleverness being a waste of time so much as a trade-off curve quantifying how clever to bother being. That would probably be the best lesson.
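One hedged way to start on such a curve is to assume, purely for illustration, that loss follows a power law in training compute C, with irreducible loss E, reducible-loss coefficient A and exponent α > 0. A clever trick that divides A by some factor k ≥ 1 can then be priced in compute:

```latex
% Assumed power-law form (hypothetical): E irreducible loss, A coefficient, \alpha > 0
L(C) = E + A\,C^{-\alpha}
% A trick that divides A by k \ge 1 at fixed compute C is equivalent to more compute:
E + \frac{A}{k}\,C^{-\alpha} = E + A\,\bigl(k^{1/\alpha} C\bigr)^{-\alpha}
% so the trick is worth a compute multiplier of
m(k) = k^{1/\alpha}, \qquad \text{e.g. } \alpha = 0.05,\ k = 2 \;\Rightarrow\; m = 2^{20} \approx 1.05 \times 10^{6}
```

On this reading, cleverness pays when finding the trick costs less than the extra compute (m(k) - 1)C it substitutes for. Small exponents make even modest constant-factor tricks look very valuable. The sketch ignores tricks that change α itself, and the value α = 0.05 is a hypothetical choice, not a measured one.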

2 General compute efficiency

We are getting very good at using compute efficiently, not just at building faster hardware (Grace 2013). AI and efficiency (Hernandez and Brown 2020) makes this clear:

We’re releasing an analysis showing that since 2012 the amount of compute needed to train a neural net to the same performance on ImageNet classification has been decreasing by a factor of 2 every 16 months. Compared to 2012, it now takes 44 times less compute to train a neural network to the level of AlexNet (by contrast, Moore’s Law would yield an 11x cost improvement over this period). Our results suggest that for AI tasks with high levels of recent investment, algorithmic progress has yielded more gains than classical hardware efficiency.
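As a sanity check on the quoted figures, here is a sketch of the doubling arithmetic. It assumes that the comparison window is the seven years from 2012 to 2019 and that Moore's law means one doubling every 24 months. Both are assumptions for illustration, not numbers taken from the paper's methods.

```python
import math


def implied_doubling_time(total_factor: float, months: float) -> float:
    """Months per doubling implied by a total improvement factor over a window."""
    return months / math.log2(total_factor)


def improvement_factor(months: float, doubling_time: float) -> float:
    """Total improvement from steady doubling every `doubling_time` months."""
    return 2 ** (months / doubling_time)


WINDOW = 7 * 12  # assumed window: 2012 to 2019, in months

algo_doubling = implied_doubling_time(44, WINDOW)  # about 15.4 months per doubling
moore_factor = improvement_factor(WINDOW, 24)      # about 11.3x, assuming 24-month doubling
print(f"{algo_doubling:.1f} months per doubling; Moore's law gives {moore_factor:.1f}x")
```

A 44x gain over that window implies a doubling roughly every 15 to 16 months, consistent with the quoted 16-month figure. A 24-month doubling over the same window gives about 11x, matching the quoted Moore's law comparison.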

3 Amortisation

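What the Bayesians call amortised inference: paying up front to learn an inferential shortcut, such as a map from data straight to (approximate) posteriors, so that each subsequent inference is cheap. TBC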
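A toy illustration of the trade-off. The assumptions are chosen so that the exact answer is known: a linear-Gaussian model with z ~ N(0, 1) and x | z ~ N(z, σ²), where the posterior mean is x / (1 + σ²). Per-datum inference runs an optimiser for every new x. The amortised version pays once to simulate (z, x) pairs and fit a map from x to E[z | x]. Here that map is just a least-squares slope, because the posterior mean is linear in this model. Realistic amortised inference would use a neural network in place of the slope, trained either by regression on simulations as here or by maximising an ELBO, as in a variational autoencoder's encoder. The model, noise level and function names are all hypothetical.

```python
import numpy as np

SIGMA2 = 0.25  # hypothetical observation-noise variance


def posterior_mean_by_optimisation(x: float, lr: float = 0.1, steps: int = 200) -> float:
    """Per-datum inference: gradient ascent on log p(z) + log p(x | z).
    Costs `steps` gradient evaluations for every new observation."""
    z = 0.0
    for _ in range(steps):
        grad = -z + (x - z) / SIGMA2  # d/dz of [-z^2/2 - (x - z)^2 / (2 * SIGMA2)]
        z += lr * grad
    return z


def fit_amortised_encoder(n_sims: int = 100_000, seed: int = 0) -> float:
    """Amortised inference: simulate (z, x) pairs once and regress z on x.
    In this linear-Gaussian model E[z | x] is linear in x, so the slope recovers it."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n_sims)
    x = z + np.sqrt(SIGMA2) * rng.standard_normal(n_sims)
    return float(x @ z / (x @ x))  # least-squares slope through the origin


coef = fit_amortised_encoder()    # expensive step, paid once
x_new = np.array([0.3, -1.2, 2.0])
amortised = coef * x_new          # cheap: one multiplication per new observation
iterative = np.array([posterior_mean_by_optimisation(x) for x in x_new])
exact = x_new / (1 + SIGMA2)
print(amortised, iterative, exact)
```

Both routes land near the exact posterior mean. The difference is where the compute goes: the iterative route pays 200 gradient steps per observation forever, while the amortised route pays for 100,000 simulations once and is then almost free.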

4 Distillation

See distillation.

5 Computing and remembering

TBD. For now see NN memory.

6 LLMs in particular

Partially discussed at economics of LLMs. A general theory is required, though.

7 Bitter lessons in career strategy

The best way of spending the limited compute in my skull is working out how to use the larger compute in my GPU.

Career version:

…a lot of the good ideas that did not require a massive compute budget have already been published by smart people who did not have GPUs, so we need to leverage our technological advantage relative to those ancestors if we want to get cited.