Differentiable learning of automata

2016-10-14 — 2025-06-05

Wherein the training of stack machines and random-access machines is undertaken via differentiable neural formalisms, and Turing-machine–like controllers are induced from data.

We learn stack machines, random access machines, nested hierarchical parsing machines, Turing machines, and whatever other automata-with-memory we want from data. In other words, we’re teaching computers to program themselves via a deep learning formalism.
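To make "differentiable" concrete for the simplest of these machines, here is a minimal sketch of a soft stack update. It is a toy under stated assumptions and not any particular paper's architecture: the name `soft_stack_step`, the fixed stack depth, and the scalar cell values are all hypothetical choices for illustration. The controller (not shown) would emit probabilities for push, pop, and no-op, and the next stack is the probability-weighted blend of the three outcomes, so the result varies smoothly with those probabilities.

```python
def soft_stack_step(stack, value, p_push, p_pop, p_noop):
    """Blend the three possible next stacks by their action probabilities.

    stack  : list of floats with fixed depth; index 0 is the top (assumption).
    value  : the scalar that would be pushed.
    p_*    : action probabilities, assumed non-negative and summing to 1.
    """
    pushed = [value] + stack[:-1]  # every cell moves down one; bottom falls off
    popped = stack[1:] + [0.0]     # every cell moves up one; bottom is zero-filled
    return [
        p_push * pushed[i] + p_pop * popped[i] + p_noop * stack[i]
        for i in range(len(stack))
    ]


# Hard actions recover an ordinary stack.
s = [0.0, 0.0, 0.0]
s = soft_stack_step(s, 1.0, 1.0, 0.0, 0.0)  # push 1
s = soft_stack_step(s, 2.0, 1.0, 0.0, 0.0)  # push 2
print(s)  # [2.0, 1.0, 0.0]

# A half-push, half-pop gives a blend of both outcomes.
print(soft_stack_step(s, 5.0, 0.5, 0.5, 0.0))  # [3.0, 1.0, 0.5]
```

With one-hot action probabilities this behaves like a plain stack; with soft ones, every cell is a polynomial in the probabilities, which is what lets gradient descent tune the controller.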

This is an obvious idea — one of the original ideas from the GOFAI days. How to make it work in NNs isn’t obvious, but there are some charming toy examples. There are also arguably non-toy examples in the form of transformers which learn to parse and generate actual code.

Obviously, a hypothetical superhuman Artificial General Intelligence would be good at handling computer-science problems.

Directly learning automata isn’t the hippest research area right now, though; it’s hard in general and not very saleable. However, it’s simple enough, in some sense, to be analytically tractable, and some progress has been made. I feel that most of the hyped research that looks like differentiable computer learning sits either in the slightly better-contained area of reinforcement learning, where more progress can be made, or in the hot area of LLMs, which are harder to explain but solve the same kind of problems while looking different inside.

Related: grammatical inference, memory machines, computational complexity results in NNs, reasoning with LLMs. Automata are a kind of formalization of reasoning, but I think they’re more easily considered as their own thing. If we call this program synthesis, then these things sound connected. See learnable memory for a survey of the state of the art.

1 Programs as singularities

Murfet and Troiani (2025), citing (Clift and Murfet 2019, 2020; Clift, Murfet, and Wallbridge 2020; Ehrhard and Regnier 2003; Waring, n.d.), use singular learning theory to study the geometry of the space of programs and how to learn them.

2 Differentiable Cellular Automata

3 Incoming

Latent space of small Turing machines

Blazek claims his neural networks implement predicate logic directly and are tractable, which would be interesting to look into (Blazek and Lin 2021, 2020; Blazek, Venkatesh, and Lin 2021).

Google-branded: Differentiable Neural Computers.

Christopher Olah’s characteristically pedagogic intro.

Adrian Colyer’s introduction to neural Turing machines.

Andrej Karpathy’s memory machine list.

Facebook’s GTN:

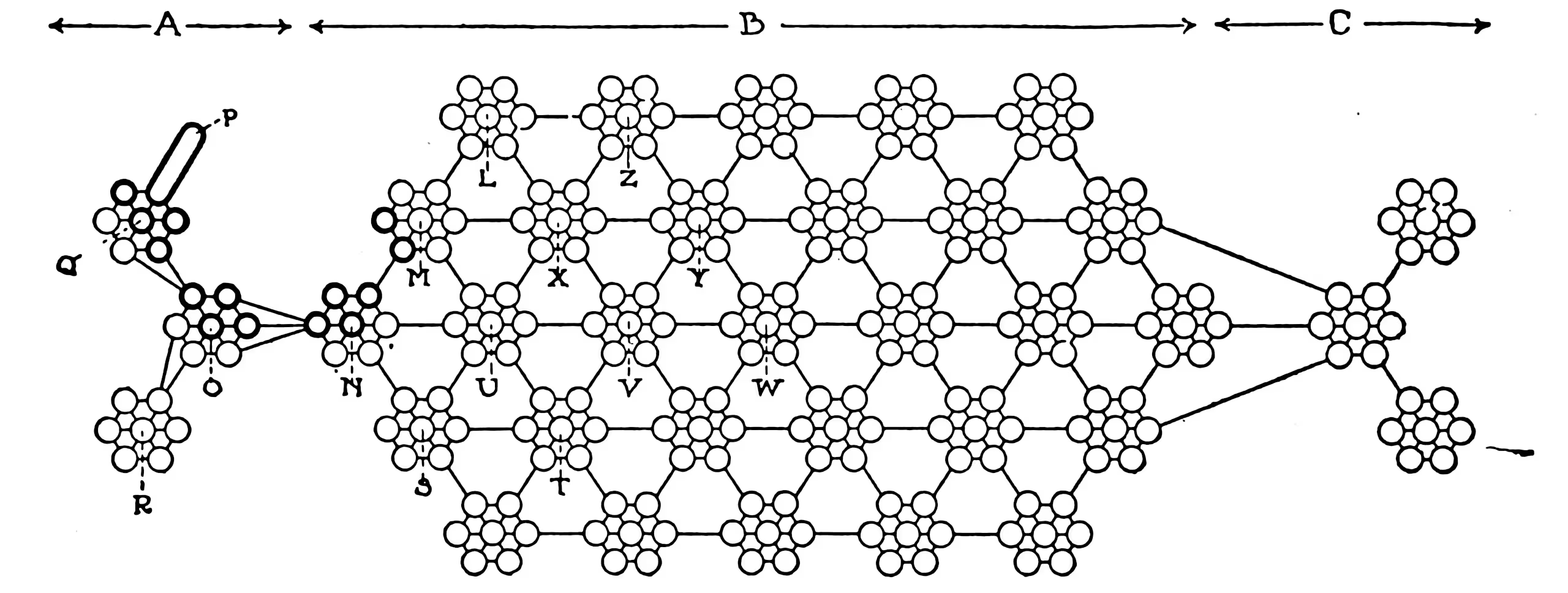

GTN is an open source framework for automatic differentiation with a powerful, expressive type of graph called weighted finite-state transducers (WFSTs). Just as PyTorch provides a framework for automatic differentiation with tensors, GTN provides such a framework for WFSTs. AI researchers and engineers can use GTN to more effectively train graph-based machine learning models.
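To see why weighted automata are amenable to automatic differentiation at all, here is a minimal sketch of the forward score of a weighted finite-state acceptor in the log semiring. This is not GTN's API and it handles acceptors only, not transducers; the names `forward_score` and `logsumexp` and the dictionary encoding of arcs are assumptions made for illustration. The point is that the score is built from sums and log-sum-exps of the arc weights, so it is a smooth function of them, and a framework with autodiff could backpropagate through it.

```python
import math


def logsumexp(xs):
    """Numerically stable log(sum(exp(x) for x in xs))."""
    m = max(xs)
    if m == -math.inf:
        return -math.inf
    return m + math.log(sum(math.exp(x - m) for x in xs))


def forward_score(arcs, start, accept, sequence):
    """Log of the total weight of all accepting paths that read `sequence`.

    arcs   : dict mapping (source_state, symbol) -> list of (dest_state, log_weight)
             (a hypothetical encoding chosen for this sketch).
    start  : the single start state.
    accept : set of accepting states.
    """
    alpha = {start: 0.0}  # log-weight of reaching each state so far
    for symbol in sequence:
        incoming = {}
        for state, score in alpha.items():
            for dest, log_w in arcs.get((state, symbol), []):
                incoming.setdefault(dest, []).append(score + log_w)
        alpha = {dest: logsumexp(scores) for dest, scores in incoming.items()}
    finals = [score for state, score in alpha.items() if state in accept]
    return logsumexp(finals) if finals else -math.inf


# Two parallel arcs on "a" (weights 0.3 and 0.2), then one arc on "b" (weight 0.5).
arcs = {
    (0, "a"): [(1, math.log(0.3)), (1, math.log(0.2))],
    (1, "b"): [(2, math.log(0.5))],
}
score = forward_score(arcs, start=0, accept={2}, sequence="ab")
print(math.exp(score))  # approximately 0.25 = (0.3 + 0.2) * 0.5
```

Swapping `logsumexp` for `max` would give the best-path (Viterbi) score instead, which is only piecewise differentiable; the log semiring is the smooth choice.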