Neural music synthesis

2016-01-15 — 2021-10-13

Wherein neural music synthesis is surveyed and differentiable DSP methods and raw time‑domain models are noted, with pointers to diffusion, Jukebox, and waveform‑domain approaches.

I have a lot of feelings and ideas about this, but no time to write them down. For now, here are some links and ideas by other people.

Sander Dieleman on waveform-domain neural synthesis. Matt Vitelli on music generation from MP3s (source). Alex Graves on RNN predictive synthesis. Parag Mital on RNN style transfer.

1 Models

I’m not massively into spectral-domain synthesis because I think the assumption that audio is stationary within each spectrogram frame is a stretch (heh). Very much into raw audio, me.
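A toy illustration of the stationarity point, assuming only NumPy: a magnitude spectrogram summarises each short windowed frame by a single spectrum, which is faithful when the signal barely changes within the frame and smears when it does not. The helper names (`stft_magnitude`, `peak_concentration`) and the test signals are hypothetical choices for this sketch, not taken from any of the linked models.

```python
import numpy as np

def stft_magnitude(x, frame_len=512, hop=256):
    """Magnitude STFT: Hann-windowed frames, one FFT per frame.

    Each frame's spectrum is only a faithful summary if the signal is
    roughly stationary over those frame_len samples.
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(x) - frame_len) // hop
    frames = np.stack(
        [x[i * hop : i * hop + frame_len] * window for i in range(n_frames)]
    )
    return np.abs(np.fft.rfft(frames, axis=1))

def peak_concentration(mag):
    """Average fraction of each frame's energy that sits in its strongest bin."""
    energy = mag ** 2
    return float(np.mean(energy.max(axis=1) / energy.sum(axis=1)))

sr = 16000
t = np.arange(sr) / sr  # one second of samples
# Stationary 1 kHz tone: each frame really does have one fixed frequency.
tone = np.sin(2 * np.pi * 1000 * t)
# Linear chirp sweeping 500 Hz -> 7500 Hz over one second, so the frequency
# moves by about 224 Hz inside every 512-sample (32 ms) frame.
chirp = np.sin(2 * np.pi * (500 * t + 0.5 * 7000 * t ** 2))

tone_conc = peak_concentration(stft_magnitude(tone))
chirp_conc = peak_concentration(stft_magnitude(chirp))
print(tone_conc, chirp_conc)  # the chirp's energy is spread over more bins
```

Shortening the frame reduces the smearing for the chirp but coarsens the frequency resolution for everything, which is the usual time/frequency trade-off that waveform-domain models sidestep.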

1.1 Diffusion

1.2 Differentiable DSP

This is a really fun idea — do audio processing as normal, but using an NN framework so that the operations are differentiable.

Project site. Github. Twitter intro. Paper. Online supplement. Timbre transfer example. Tutorials.
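A minimal sketch of the idea, assuming only NumPy: a harmonic (additive) oscillator bank whose per-harmonic amplitudes are recovered by gradient descent against a target sound. The DDSP library itself is built on TensorFlow and gets its gradients from autodiff; here the gradient of the mean-squared error is written out by hand so the example has no framework dependency. The function names are hypothetical, and this is not the full DDSP model (no noise synthesizer, no neural network predicting the controls).

```python
import numpy as np

def harmonic_synth(amps, f0, sr, n_samples):
    """Additive synth: a sum of sinusoids at integer multiples of f0.

    The output is linear (hence differentiable) in `amps`.
    Returns the signal and the per-harmonic basis used to build it.
    """
    n = np.arange(n_samples)
    k = np.arange(1, len(amps) + 1)
    # basis[i, j] = sin(2*pi * (j+1) * f0 * i / sr): one column per harmonic
    basis = np.sin(2 * np.pi * np.outer(n, k) * f0 / sr)
    return basis @ amps, basis

def fit_amplitudes(target, f0, sr, n_harmonics, steps=200, lr=0.5):
    """Recover harmonic amplitudes by gradient descent on mean squared error."""
    amps = np.zeros(n_harmonics)
    for _ in range(steps):
        pred, basis = harmonic_synth(amps, f0, sr, len(target))
        residual = pred - target
        # Hand-derived gradient of mean((basis @ amps - target)**2) w.r.t. amps;
        # an autodiff framework would compute this automatically.
        grad = 2.0 / len(target) * basis.T @ residual
        amps -= lr * grad
    return amps

sr, f0 = 16000, 100.0
true_amps = np.array([1.0, 0.5, 0.25, 0.125])
# 1600 samples = 0.1 s = exactly 10 periods of the 100 Hz fundamental
target, _ = harmonic_synth(true_amps, f0, sr, 1600)
est = fit_amplitudes(target, f0, sr, n_harmonics=4)
print(np.round(est, 3))  # close to true_amps
```

Because integer harmonics over a whole number of periods are orthogonal, the loss here is a simple quadratic bowl and descent converges quickly. In DDSP proper the synthesizer controls come from a network and the training loss is a multi-scale spectral loss rather than a raw sample-wise error.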

1.3 PixelRNN

PixelRNN turns out to be good at music. Dadabots have successfully weaponised SampleRNN, and it’s cute.

1.4 Jukebox

OpenAI’s Jukebox is the latest hot generative music thing that I should be across.

1.5 GANSynth

1.6 WaveGAN

1.7 NeuralFunk

1.8 MelNet

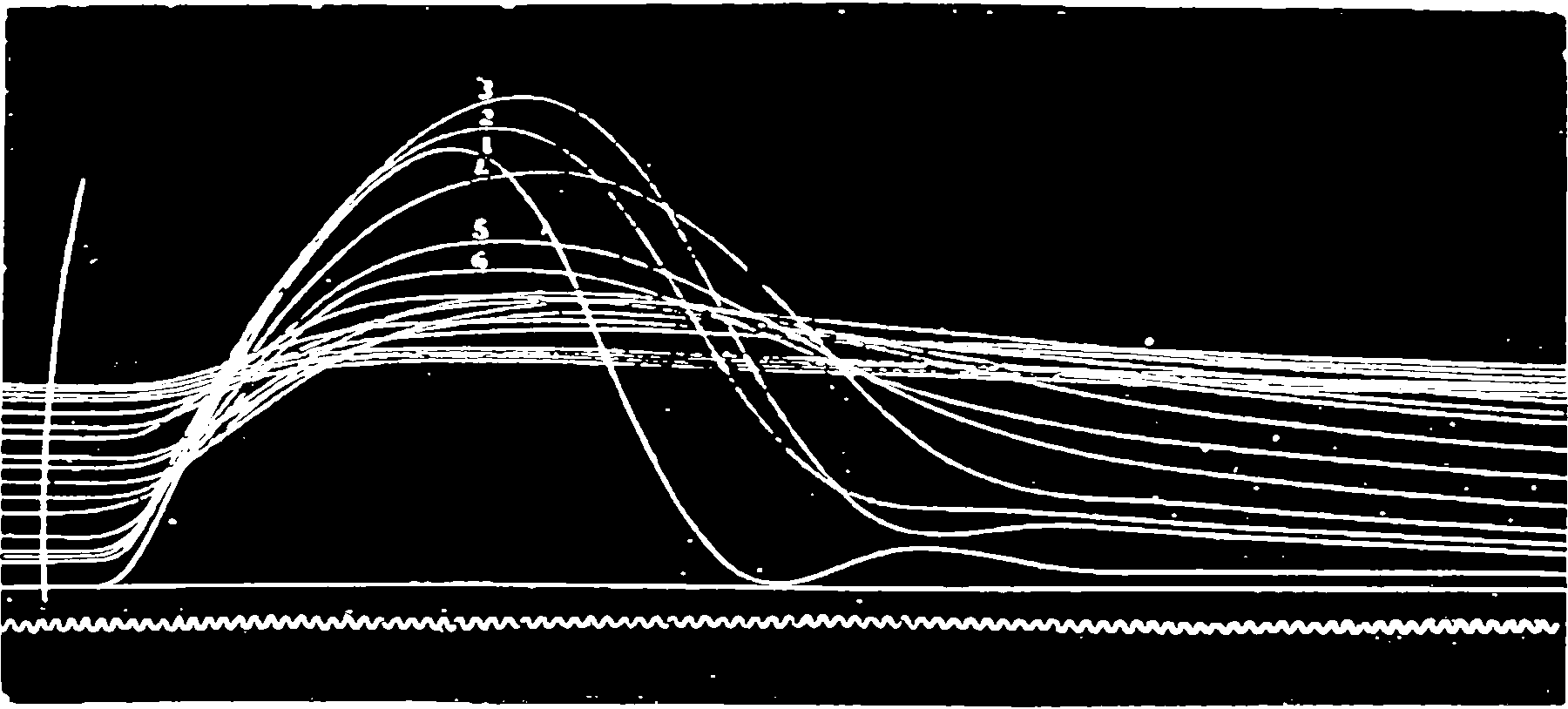

Existing generative models for audio have predominantly aimed to directly model time-domain waveforms. MelNet instead aims to model the frequency content of an audio signal. MelNet can be used to model audio unconditionally, making it capable of tasks such as music generation. It can also be conditioned on text and speaker, making it applicable to tasks such as text-to-speech and voice conversion.
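For orientation on what "frequency content" means here: MelNet works on mel-scaled spectrograms, that is, linear-frequency power spectra projected onto triangular bands spaced evenly on the perceptual mel scale. The sketch below assumes NumPy and the HTK-style mel formula (other conventions, such as Slaney's, differ slightly); it is not MelNet's own preprocessing code, and the function names are hypothetical.

```python
import numpy as np

def hz_to_mel(f):
    # HTK-style mel formula; roughly linear below 1 kHz, logarithmic above.
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_mels, n_fft, sr, fmin=0.0, fmax=None):
    """Triangular filters evenly spaced in mel, shape (n_mels, n_fft // 2 + 1)."""
    fmax = sr / 2 if fmax is None else fmax
    # n_mels + 2 edge frequencies: filter m spans [edge m, edge m+1, edge m+2]
    mel_edges = np.linspace(hz_to_mel(fmin), hz_to_mel(fmax), n_mels + 2)
    hz_edges = mel_to_hz(mel_edges)
    bin_freqs = np.fft.rfftfreq(n_fft, d=1.0 / sr)
    fb = np.zeros((n_mels, len(bin_freqs)))
    for m in range(n_mels):
        left, centre, right = hz_edges[m], hz_edges[m + 1], hz_edges[m + 2]
        rising = (bin_freqs - left) / (centre - left)
        falling = (right - bin_freqs) / (right - centre)
        # Triangle peaking at 1.0 on the centre frequency, zero outside [left, right]
        fb[m] = np.clip(np.minimum(rising, falling), 0.0, None)
    return fb

def mel_spectrogram(power_spec, fb):
    """Project linear-frequency power frames (n_frames, n_bins) onto mel bands."""
    return power_spec @ fb.T

sr = 22050
fb = mel_filterbank(n_mels=80, n_fft=1024, sr=sr)
# Stand-in power spectra: 10 frames of white noise, just to show the shapes.
frames = np.abs(np.fft.rfft(np.random.default_rng(0).standard_normal((10, 1024)), axis=1)) ** 2
mel = mel_spectrogram(frames, fb)
print(fb.shape, mel.shape)  # (80, 513) (10, 80)
```

The projection discards fine frequency detail at high frequencies, which is part of why spectrogram models like MelNet typically need a separate vocoder or phase-reconstruction step to get back to a waveform.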

1.9 State spaces

2 Praxis

Jlin and Holly Herndon show off some artistic uses of messed-up neural nets.

Hung-yi Lee and Yu Tsao, Generative Adversarial nets for DSP.

3 Incoming

What is Loris?

Soundtracking audio from video.

Andy Sarroff, Musical Audio Synthesis Using Autoencoding Neural Nets. (code)

4 Products

https://djtechtools.com/2020/07/14/best-ai-platforms-to-help-you-make-music/

https://www.deepjams.com/

https://evokemusic.ai/

https://www.patreon.com/loudlystudio