Single subject experiments

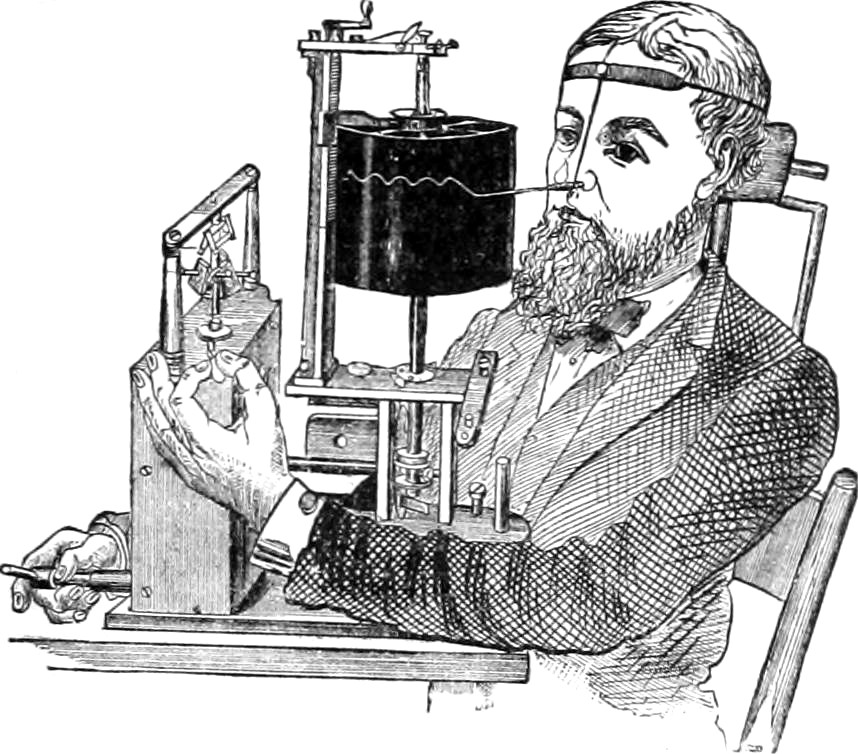

Instrumentation and analytics for body and soul; Quantified self; precision medicine.

2022-01-11 — 2026-04-26

Wherein the Trade Between Internal and External Validity in N-of-1 Trials Is Examined, and Bayesian Methods Are Shown to Dissolve Frequentist Complications Attendant Upon Crossover Designs.

Other people have written much more about principled single-subject experimentation, especially self-experimentation. I have many opinions about this, as a statistician of observational data, which is essentially the tool we use in single-subject experiments.

1 Folk history of the quantified self movement

The Quantified Self movement was named by Gary Wolf and Kevin Kelly in 2007, with the first meetup held the following year at Kelly’s house in Pacifica. It’s a loose constellation of people who self-track in the hope of learning things about themselves that institutional medicine, with its population-scale instruments, cannot tell them. The motivating gripe is statistical: clinical trials estimate average treatment effects across heterogeneous populations, and a guideline that is optimal in expectation can be near-useless for any particular individual whose response differs from the cohort mean (Senn 2004, 2018). (My body is not the average body; nobody’s is.)

IMO the virtuous twin of the supplements grift (same diagnosis, opposite epistemic standards) is the precision-medicine apparatus: pharmacogenomics, biomarker stratification, individual-level dose tuning. That is institutional medicine trying to serve the heterogeneity of treatment effects rather than averaging over it. The grift sells uncontrolled n=1 anecdata as personalised insight; precision medicine and disciplined self-experimentation try to do the same job with enough methodological care to distinguish signal from noise.

Obligatory: The tragic morality fable, Seth Roberts’ Final Column: Butter Makes Me Smarter.

A more methodologically careful single-subject experiment in the wild: Alexey Guzey’s Effects on Cognition of Sleeping 4 Hours per Night for 12–14 Days: a Pre-Registered Self-Experiment.

2 The basic idea

An n-of-1 experiment is a controlled trial where the population under study has size 1: a single subject, repeatedly measured. Rather than averaging across a cohort, we exploit repeated measurements within the individual over time. This trades external validity (we learn nothing about anyone else) for internal validity (we learn about this subject, with no confounding from heterogeneous response or selection across people).

The simplest workable design is the alternating-treatment trial. Suppose I want to know whether morning coffee gives me a headache. I randomize each morning’s drink to caffeinated or decaf — ideally a flatmate prepares the cups so I am blinded — and rate my headache on a 0–10 scale at noon. After enough days, I have paired observations of (caffeine, headache score) and (decaf, headache score), and read off the posterior on the per-day treatment effect under a small Bayesian model.

What makes this estimator work, when it works:

- Randomization breaks confounding with anything that happens to vary day to day — sleep, weather, work stress — on average. Anything systematically correlated with the day-of-experiment — say, if my decaf supply runs low on Mondays — leaks straight into the estimate. The schedule has to be locked in before the experiment starts, ideally implemented by someone or something other than me.

- Blinding breaks the placebo channel. Without it, I am estimating “caffeine plus my belief about caffeine,” and the effects of most interventions I might want to test are dwarfed by the effects of my expectations about them. Self-blinding is fiddly but doable; see Gwern on self-blinding.

- Within-subject contrast absorbs my personal baseline. The estimate is the individual treatment effect directly, not an average treatment effect over a population that may not include anyone resembling me.
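A minimal sketch of a locked-in schedule, assuming two arms and block randomisation in pairs so that neither arm can run ahead of the other. The function name, the seed and the arm labels are mine, purely for illustration; the point is only that the whole schedule is generated once, up front, by something that is not my mood that morning.

```python
import random

def make_schedule(n_days, arms=("caffeine", "decaf"), seed=20220111):
    """Block-randomised schedule: every consecutive block holds each arm once, in random order."""
    rng = random.Random(seed)  # fixed seed, so the schedule can be regenerated and audited
    schedule = []
    while len(schedule) < n_days:
        block = list(arms)
        rng.shuffle(block)
        schedule.extend(block)
    return schedule[:n_days]

# Generate four weeks of assignments before day one and hand them to the flatmate.
schedule = make_schedule(28)
for day, arm in enumerate(schedule[:6], start=1):
    print(f"day {day}: {arm}")
```

Blocks of two keep the arms balanced, at the cost of some predictability if the blinding ever fails: whoever learns the first drink in a block knows the second.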

The assumption that lets this be analysed as a paired comparison is that today’s drink has worn off by tomorrow morning, and that yesterday’s drink has not altered today’s susceptibility. When that fails — carryover, slow biomarkers, autocorrelated outcomes, learning effects — we are no longer doing Stats 101. But hold onto this as an intuition pump.
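Under that no-carryover assumption, the small Bayesian model really can be small. Here is a sketch using a normal approximation, assuming a Normal prior on the effect and plug-in sample variances (so it ignores uncertainty about the noise level). The headache scores, the prior scale and the function name are invented for illustration.

```python
from statistics import NormalDist, mean, variance

def posterior_effect(treated, control, prior_mean=0.0, prior_sd=3.0):
    """Approximate posterior on (mean treated - mean control) under a Normal prior."""
    diff = mean(treated) - mean(control)
    # Plug-in squared standard error of the difference in means.
    se2 = variance(treated) / len(treated) + variance(control) / len(control)
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = 1.0 / se2
    post_prec = prior_prec + data_prec
    post_mean = (prior_prec * prior_mean + data_prec * diff) / post_prec
    return NormalDist(post_mean, post_prec ** -0.5)

# Made-up noon headache scores (0-10) on caffeine days and decaf days.
caffeine = [4, 5, 3, 6, 4, 5, 5]
decaf = [2, 3, 2, 4, 3, 2, 3]

post = posterior_effect(caffeine, decaf)
print(f"posterior mean effect: {post.mean:.2f} points")
print(f"P(caffeine worsens headache): {1 - post.cdf(0):.3f}")
```

The prior pulls the estimate slightly towards zero; with more days of data its influence fades.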

There is nothing too rocket-sciencey going on here. Because we have a long legacy of randomised controlled multi-subject trials for detecting population-level effects, we might be nervous of other designs. However, other designs are possible, and very common in e.g. engineering and state-space modeling. I can understand why we don’t see them often in medicine, though. For one, medicine is a high-stakes domain, so it is understandable that the funding apparatus favours very old, very well-tested methods. For another, n-of-1 designs are almost by definition not profitable (1 customer!) so there is not much corporate effort behind them.

Also, and I could be wrong about this, I get the sense that the n-of-1 literature is a bit thin because of the affordances of frequentist statistics. We can totally do n-of-1 designs in frequentist methods, don’t get me wrong. But the circumlocutions we need to use are so complicated that I, personally, find them hard to reason about. The apparent zoo of complications (corrections for autocorrelation, washout periods, repeated-measures ANOVA, sequential-testing penalties) is mostly a frequentist artefact. The recent rise of Bayesian methods, and the ability to write down generative models that capture the dependencies in the data, seems to make n-of-1 designs much more intuitive and flexible. We just write down the model, condition on the data, and read off the posterior on the treatment effect.
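As a toy instance of that recipe, here is autocorrelation handled by putting it in the model rather than correcting for it afterwards. This is a sketch under loud assumptions: simulated data, AR(1) noise with a known noise scale, flat priors, and a brute-force grid instead of a proper sampler. All names and grid ranges are mine.

```python
import math
import random

def simulate(n_days, effect, rho, baseline=3.0, sigma=1.0, seed=1):
    """Fake daily scores: baseline + effect * treated + AR(1) noise."""
    rng = random.Random(seed)
    treated = [rng.randint(0, 1) for _ in range(n_days)]
    scores, noise = [], 0.0
    for x in treated:
        noise = rho * noise + rng.gauss(0.0, sigma)
        scores.append(baseline + effect * x + noise)
    return treated, scores

def log_lik(treated, scores, baseline, effect, rho, sigma=1.0):
    """AR(1) log-likelihood conditional on day one, up to an additive constant."""
    resid = [y - baseline - effect * x for x, y in zip(treated, scores)]
    return -0.5 * sum(((r - rho * prev) / sigma) ** 2
                      for prev, r in zip(resid, resid[1:]))

def linspace(lo, hi, n):
    return [lo + (hi - lo) * i / (n - 1) for i in range(n)]

def effect_posterior(treated, scores):
    """Grid posterior on the effect, summing out baseline and rho (flat priors on the grid)."""
    effects = linspace(-1.0, 3.0, 41)
    rhos = linspace(-0.9, 0.9, 10)
    baselines = linspace(1.0, 5.0, 21)
    log_post = [[log_lik(treated, scores, b, e, r) for b in baselines for r in rhos]
                for e in effects]
    top = max(max(row) for row in log_post)  # subtract the max for numerical stability
    weights = [sum(math.exp(lp - top) for lp in row) for row in log_post]
    total = sum(weights)
    return effects, [w / total for w in weights]

treated, scores = simulate(n_days=80, effect=1.5, rho=0.5)
effects, probs = effect_posterior(treated, scores)
post_mean = sum(e * p for e, p in zip(effects, probs))
prob_positive = sum(p for e, p in zip(effects, probs) if e > 0)
print(f"posterior mean effect {post_mean:.2f}, P(effect > 0) = {prob_positive:.3f}")
```

In practice I would reach for a probabilistic programming language rather than a grid, and put a prior on the noise scale too; the grid is here only because it makes "condition on the data, marginalise to the treatment effect" visible in a few lines.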

Lots of interesting machinery becomes relevant once we relax the i.i.d.-conditional-on-treatment assumption: state-space models for autocorrelated outcomes; informative priors borrowed from population studies; partial pooling across a series of n-of-1 trials (which recovers some external validity); latent-variable models when the outcome is a noisy proxy for something fuzzier (mood, energy, “feeling crap”); structural causal models for interacting treatments. Each of these is the same recipe — write the joint, condition on the data, marginalise to the posterior on the treatment effect — extended with whichever bit of structure the situation demands. Out of scope for this note, but the design literature below covers a lot of it.

3 Privacy with shared data

As an aside, and without unpacking it very far here, we can imagine doing better than this pure trade of external validity for internal validity.

If many of us run the same n-of-1 protocol on ourselves and feed results back to a shared model, we can recover, in principle, everything an RCT estimates (average treatment effect, heterogeneity across people, individual treatment effects refined by population priors), except we never have to assemble the cohort under one roof. The bottlenecks are privacy, scheduling, and finding a benevolent coordinator (plus we lose some statistical power to the noisy experiments run by amateurs in the wild).

A much simpler protocol asks only that we trust one person. Hand the raw data to a single Bayesian statistician, who computes the posterior on shared parameters and then answers queries from the rest of us by drawing posterior samples rather than releasing the full posterior or any deterministic decision. Under suitable smoothness conditions on the prior and likelihood, this protocol is itself differentially private: the noise that DP requires is provided for free by the Bayesian uncertainty, and informative priors give better privacy because the posterior depends less on any one person’s data (Dimitrakakis et al. 2013). Trust requirement: one statistician. Cryptographic infrastructure: nil.
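A toy version of the mechanism, to fix ideas. I am assuming a conjugate normal model with known observation noise; the data, names and prior scales are hypothetical. It only illustrates the qualitative claim that a tighter prior makes the posterior less sensitive to any one person's data. It does not compute a privacy budget, and unbounded Gaussian data would not satisfy the paper's conditions without further care.

```python
import random
from statistics import NormalDist

def posterior(data, prior_sd, obs_sd=1.0, prior_mean=0.0):
    """Conjugate Normal posterior on a shared mean with known observation noise."""
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = len(data) / obs_sd ** 2
    post_prec = prior_prec + data_prec
    post_mean = (prior_prec * prior_mean + sum(data) / obs_sd ** 2) / post_prec
    return NormalDist(post_mean, post_prec ** -0.5)

def answer_query(data, prior_sd, rng):
    """The statistician answers with one posterior draw, never the posterior itself."""
    post = posterior(data, prior_sd)
    return rng.gauss(post.mean, post.stdev)

def sensitivity(data, replacement, prior_sd):
    """Shift in posterior mean, in posterior sds, when the last person's value is swapped."""
    neighbour = data[:-1] + [replacement]
    a, b = posterior(data, prior_sd), posterior(neighbour, prior_sd)
    return abs(a.mean - b.mean) / a.stdev

data = [1.2, 0.8, 1.5, 0.9, 1.1]  # hypothetical per-person effect estimates
rng = random.Random(0)
print("one released draw:", round(answer_query(data, prior_sd=1.0, rng=rng), 3))
for prior_sd in (0.5, 10.0):
    print(f"prior sd {prior_sd}: shift of {sensitivity(data, 3.0, prior_sd):.2f} posterior sds")
```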

There is some cool cryptographic technology which allows us to handle the privacy and benevolent coordinator problems. IANAC, so what follows is vibes-based — a starting map for further reading rather than rigorous engineering.

Three classes of privacy technology matter here (only the last is cryptography in the strict sense), all of which have matured in the last decade:

- Federated learning. Each participant trains the shared model locally on their own device; only parameter updates leave the device. Google’s Gboard uses this in production for keyboard prediction; the same plumbing applies to a Bayesian hierarchical n-of-1 model with shared hyperparameters and per-individual latents.

- Differential privacy, now layered on top of federated aggregation rather than baked into a single posterior sampler. Calibrated noise is added to gradient or parameter updates before they leave the device, or to the aggregated model before release; the privacy budget \(\varepsilon\) is tunable and composes across releases. We pay some statistical power for it.

- Homomorphic encryption and adjacent secure multi-party computation. The server computes on encrypted inputs and emits an encrypted output that only participants can decrypt. Slow, but adequate for the kind of sufficient-statistic exchanges that hierarchical Bayesian inference actually needs.

Some combination of these might get us democratized private group trials: the marginal participant uploads only what is needed for partial pooling, gets informative priors fed back to their device, and never reveals their raw symptom log to the operator or to other participants.
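To show how two of these pieces could compose, here is a toy sketch: pairwise additive masking (the core trick in secure-aggregation protocols) so that the server only ever sees a sum, followed by Laplace noise on that sum for differential privacy. Everything here is an illustrative assumption on my part: the masks come from one shared random generator instead of pairwise key agreement, nobody drops out, the participant count is treated as public, and the noise is added centrally rather than on-device.

```python
import random

MODULUS = 2**61 - 1  # all shares live in the integers modulo this prime
SCALE = 10**6        # fixed-point scaling so real-valued statistics become integers

def encode(value):
    return round(value * SCALE) % MODULUS

def decode(residue):
    # Residues above MODULUS / 2 represent negative numbers.
    if residue > MODULUS // 2:
        residue -= MODULUS
    return residue / SCALE

def masked_shares(values, rng):
    """Each pair (i, j) shares a random mask; i adds it and j subtracts it."""
    shares = [encode(v) for v in values]
    for i in range(len(shares)):
        for j in range(i + 1, len(shares)):
            mask = rng.randrange(MODULUS)
            shares[i] = (shares[i] + mask) % MODULUS
            shares[j] = (shares[j] - mask) % MODULUS
    return shares

def private_mean(values, lo, hi, epsilon, rng):
    """Masked sum of clipped values, plus Laplace noise calibrated to the clipping range."""
    clipped = [min(max(v, lo), hi) for v in values]
    shares = masked_shares(clipped, rng)       # individually these look like random numbers
    total = decode(sum(shares) % MODULUS)      # the masks cancel in the sum
    scale = (hi - lo) / epsilon                # replacing one person moves the sum by at most hi - lo
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)  # Laplace(0, scale)
    return (total + noise) / len(values)

effects = [1.25, -0.5, 2.0, 0.75]  # hypothetical per-participant effect estimates
rng = random.Random(42)
for epsilon in (0.5, 5.0):
    print(f"epsilon={epsilon}: noisy mean {private_mean(effects, -5.0, 5.0, epsilon, rng):.2f}")
```

With four participants the noise swamps the signal at any reasonable \(\varepsilon\), which is the statistical-power cost mentioned above; the trade improves as the cohort grows.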

Economies of scale, based on shallow reading of the literature:

- Posterior precision on shared parameters scales roughly linearly with \(N\) (under i.i.d.), so ATE and HTE estimates become useful with far fewer subjects than an RCT needs to recruit and retain.

- Heterogeneity of treatment effects becomes estimable directly: the spread of individual treatment effects across participants is a parameter to learn, not a nuisance to average out.

- The marginal participant’s own posterior also tightens, because their personal latent borrows strength from the population prior. The cost of joining is sub-linear in their data; the benefit is super-linear.
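To make the first and third points concrete, here is the textbook normal-normal hierarchy. The symbols are assumptions I am introducing for illustration: \(\theta_i\) is participant \(i\)'s true effect, \(\hat\theta_i\) is the estimate from their own trial with known sampling variance \(s^2\), \(\tau^2\) is the between-person variance (also taken as known), and \(\mu\) is the population mean effect with a flat prior.

\[
\theta_i \sim \mathcal{N}(\mu, \tau^2), \qquad \hat\theta_i \mid \theta_i \sim \mathcal{N}(\theta_i, s^2), \qquad i = 1, \dots, N.
\]

Marginally \(\hat\theta_i \sim \mathcal{N}(\mu, \tau^2 + s^2)\), so

\[
\mu \mid \hat\theta_{1:N} \sim \mathcal{N}\!\left(\frac{1}{N}\sum_{i=1}^{N} \hat\theta_i,\ \frac{\tau^2 + s^2}{N}\right),
\]

and the posterior precision \(N / (\tau^2 + s^2)\) is linear in \(N\). For an individual, conditional on \(\mu\),

\[
\theta_i \mid \hat\theta_i, \mu \sim \mathcal{N}\!\left(w\,\hat\theta_i + (1 - w)\,\mu,\ \left(\frac{1}{s^2} + \frac{1}{\tau^2}\right)^{-1}\right), \qquad w = \frac{\tau^2}{\tau^2 + s^2}.
\]

The individual's posterior variance is \(w s^2 < s^2\): strictly tighter than what their own data gives alone, with the most shrinkage towards \(\mu\) when their trial is noisy relative to how much people differ.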

The hard problem that remains is social coordination. Somebody has to choose the protocol, write the priors, run the analysis, and be trusted not to fudge it. SMTM have written about exactly this gap under the heading Job Posting: Reddit Research Czar — a benevolent-coordinator role for distributed self-experimentation, adjacent to their Community Trial protocols where one organizer takes the lead and participants self-assign to variations on a shared design. The cryptographic primitives turn that role from “trust the czar with your raw symptom logs” into “trust the czar with the protocol, and let the maths handle the data.”

The startup-idea callout under Tools below is the single-app version of this. The federated version is the next layer up: a substrate where the protocol designer is the entry point and the participant gets stronger statistical guarantees in exchange for slightly less computational headroom on their device.

4 Experiment design

- Piccininni et al. (2024)

- Zarbin and Novack (2021)

- Zenner, Böttinger, and Konigorski (2022)

- Gwern on self-blind trials

- White Paper: Design and Implementation of Participant-Led Research in the Quantified Self Community

- Quantified Self How-To: Designing Self-Experiments

- International Collaborative Network for N-of-1 Trials and Single-Case Designs — academic-side hub for the methodology, with a useful analysis resources page.

- SLIME MOLD TIME MOLD on amateur self-experimentation: an introduction, and a follow-up on single-subject research.

- Quantopian-style contest for food intake and weight — LessWrong proposal for a collective tournament of n-of-1-style nutrition experiments.

5 Data collection

5.1 Activity

I mention some activity-monitoring strategies under time management.

5.2 Subjective things

Measuring moods? See the Experience Sampling Method (Verhagen et al. 2016; Hektner, Schmidt, and Csikszentmihalyi 2007) or Swan (2013).

5.3 Biomarkers

See biomarkers.

6 Tools

AFAICT there is a conservation law in this space: app UX and methodological seriousness trade off against each other. The polished symptom-trackers do not run experiments; the things that do run actual experiments are research outputs with research-output UX. StudyU is the only tool I have found that gets the statistics right, and using it assumes a research methodologist within shouting distance.

Startup idea: a tool with the methodological care of StudyU and the UX care of Bearable. Bayesian model under the hood (proper handling of carryover, autocorrelation, and informative priors borrowed from population studies), randomization done by something that is not me, push notifications for today’s intervention, sync with Apple Health / Google Fit for covariates, non-awful charts, impeccable privacy, and an export button that emits a tidy dataset and a model card I could hand to my doctor.

6.1 Designed for n-of-1 trials

StudyU — research platform from HPI (Hasso Plattner Institute, Potsdam). Methodologically the most serious of the bunch (proper Bayesian analysis of crossover trials), with UX shaped by clinical-research workflows rather than self-experimenting laypeople.

StudyMe — open-source mobile companion to StudyU from the same HPI group, aimed at end-user-led trials. More usable than StudyU, less methodologically deep. See Zenner, Böttinger, and Konigorski (2022).

n1.tools — minimal alternating-treatment runner. Frequentist (mean + p-value) and admirably candid about its limits:

> This tool works for simple experiments where the effects of what you’re testing show up and wear off within a day. It doesn’t take into account things like lag times between your action and the outcome, autocorrelation (when data points influence each other), the buildup of substances in your body over time etc.
>
> This can be useful as a first order approximation, and is still more rigourous than many other methods. However, if you’re looking at something more complex, you likely need to consider other factors and use a more sophisticated methodology.

Flaredown — chronic-illness symptom tracker with a light experiment-tracking layer. The associated Kaggle dataset is a decent playground for the analytics side.

6.2 Symptom and mood trackers (no experimental scaffolding)

Well-designed instruments for recording things; the analytics are basic correlations at best, no randomisation, no causal model.

- Bearable — mood and symptom tracking. Probably the best UX in the category, and the obvious shape an n-of-1 app could be cast in.

- Cronometer — nutrition tracking with a thorough food database.

- Exist — quantified-self aggregator that pulls from many sources and surfaces correlations. Correlations, not experiments.

6.3 Passive collection

- ActivityWatch — open-source watcher for computer activity (active windows, AFK status, etc.). Companion to the time-management notes.

- Gyroscope — AI-coach-style aggregator with a narrative interface. Pretty, very pop-health in tone, no experimental design at all.

7 Data export and self-hosting

Tools for prying my data out of vendor silos and putting it somewhere I can analyse with my own scripts.

7.1 Apple Health exports

Apple Health is a reasonable aggregation point on iOS but does not love letting its data out. These help.

- HealthExport — export iPhone Health data to CSV.

- Simple Health Export CSV — App Store alternative.

- Eric Wolter — Apple Health XML-to-CSV Converter.

- Mark Koester — How to Export, Parse and Explore Your Apple Health Data with Python.

- Kieran Healy — Burn Notice, a worked exploration of his own iPhone Health archive.
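If none of those suit, the do-it-yourself route is short. A minimal sketch, assuming the usual shape of the `export.xml` inside Apple Health's export archive: a flat run of `Record` elements carrying `type`, `startDate`, `endDate`, `value` and `unit` attributes. The function name and the in-memory sample are mine; real exports are large and have occasionally shipped with quirks that need cleaning first.

```python
import csv
import io
import xml.etree.ElementTree as ET

FIELDS = ("type", "startDate", "endDate", "value", "unit")

def records_to_csv(xml_source, csv_out, record_type=None):
    """Stream Record elements from an Apple Health export into CSV rows; returns the row count."""
    writer = csv.writer(csv_out)
    writer.writerow(FIELDS)
    count = 0
    # iterparse streams the file, so a multi-gigabyte export is not parsed into one tree first.
    for _, elem in ET.iterparse(xml_source, events=("end",)):
        if elem.tag == "Record" and record_type in (None, elem.get("type")):
            writer.writerow([elem.get(field, "") for field in FIELDS])
            count += 1
        elem.clear()  # drop attributes and children we are finished with
    return count

# Tiny in-memory stand-in for export.xml, for illustration only.
sample = b"""<HealthData>
  <Record type="HKQuantityTypeIdentifierStepCount" unit="count"
          startDate="2024-01-01 08:00:00 +0000" endDate="2024-01-01 08:10:00 +0000" value="512"/>
  <Record type="HKQuantityTypeIdentifierHeartRate" unit="count/min"
          startDate="2024-01-01 08:05:00 +0000" endDate="2024-01-01 08:05:00 +0000" value="61"/>
</HealthData>"""

out = io.StringIO()
n_rows = records_to_csv(io.BytesIO(sample), out, record_type="HKQuantityTypeIdentifierStepCount")
print(n_rows, "rows written")
print(out.getvalue())
```

For a real export, pass the path to `export.xml` and an open text file (opened with `newline=""`) in place of the two in-memory buffers.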

7.2 Self-hosted aggregators

For people who want their personal-metrics database to live on their own kit, with their own analysis stack on top.

- heedy — aggregator for personal metrics with an extensible analysis engine; ships with a Jupyter notebook plugin.

- markwk/qs_ledger — quantified-self personal-data aggregator with analysis notebooks.

- pacogomez/health-records — plain-text health records, Markdown-first.

- onejgordon/flow-dashboard — goal, task and habit tracker plus personal dashboard.

- Flow — hosted goal/dashboard tool in a similar shape.

8 Community and reference

- Show & Tell Projects Archive — Quantified Self — community gallery of self-experiments.

- Bibliography — Quantified Self — academic and grey-literature references curated by the community.

- woop/awesome-quantified-self — link aggregator for apps, devices and platforms.

- Open Humans — platform for donating and sharing personal data for collective research.