Statistics and machine learning

2011-04-15 — 2023-09-13

Wherein a Distinction Between Exploratory and Confirmatory Statistics Is Drawn, the Role of Statistics in Guarding Against Marginally Viable Research Is Invoked, and Harmonisation of Statistics and Machine Learning Is Noted.

Those who ignore statistics are condemned to reinvent it

A methodological distinction that some people make: What’s the difference between analytics and statistics?

- Analytics helps you form hypotheses. It improves the quality of your questions.

- Statistics helps you test hypotheses. It improves the quality of your answers.

I would call these exploratory and confirmatory statistics respectively, but that terminology is not universal.
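Here is a minimal sketch of the split in practice. It assumes the common discipline of using one part of the data to generate a hypothesis and a separate, untouched part to test it. The data generator, the helper names (`make_noise_data`, `group_difference`, `split_rows`, `explore_then_confirm`) and the 50/50 split are all hypothetical choices for illustration.

```python
import random
import statistics

def make_noise_data(n_rows, n_features, seed=0):
    """Hypothetical data: (group label 0/1, features) where every feature is pure noise."""
    rng = random.Random(seed)
    return [
        (rng.randint(0, 1), [rng.gauss(0.0, 1.0) for _ in range(n_features)])
        for _ in range(n_rows)
    ]

def group_difference(rows, j):
    """Mean of feature j in group 1 minus its mean in group 0."""
    ones = [x[j] for g, x in rows if g == 1]
    zeros = [x[j] for g, x in rows if g == 0]
    return statistics.fmean(ones) - statistics.fmean(zeros)

def split_rows(rows, seed=0):
    """Shuffle a copy of the rows and cut it into exploration and confirmation halves."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def explore_then_confirm(rows, n_features, seed=0):
    explore, confirm = split_rows(rows, seed)
    # Exploration ("analytics"): search freely for the most striking feature.
    best = max(range(n_features), key=lambda j: abs(group_difference(explore, j)))
    # Confirmation ("statistics"): re-estimate only that one effect on untouched rows.
    return best, group_difference(explore, best), group_difference(confirm, best)

rows = make_noise_data(n_rows=400, n_features=50)
best, d_explore, d_confirm = explore_then_confirm(rows, n_features=50)
print(f"feature {best}: exploration diff {d_explore:+.3f}, confirmation diff {d_confirm:+.3f}")
```

Because every feature is noise, the exploration difference for the selected feature is inflated by the search itself, and the confirmation estimate will usually sit much closer to zero. That shrinkage is the reason to test a hypothesis on data you did not use to find it.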

It is best not to need statistics at all. If a pattern is so clear it is undeniable, then we can go home early. Although — is our bar for undeniable high enough? Have we really eliminated our own biases and wishful thinking? How would we know?
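One partial answer to “How would we know?” is to ask how often chance alone produces a pattern as striking as the one we saw. A permutation test does this by shuffling group labels. The sketch below assumes two samples and a difference in means as the pattern of interest; `permutation_p_value` is a hypothetical helper rather than a library function, and the `treated`/`control` numbers are made up.

```python
import random
import statistics

def permutation_p_value(a, b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in means between samples a and b."""
    rng = random.Random(seed)
    observed = abs(statistics.fmean(a) - statistics.fmean(b))
    pooled = list(a) + list(b)
    n_a = len(a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        # Under the null hypothesis the group labels are exchangeable, so shuffle them.
        rng.shuffle(pooled)
        diff = abs(statistics.fmean(pooled[:n_a]) - statistics.fmean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    # Count the observed labelling as one of the permutations, so p is never exactly 0.
    return (at_least_as_extreme + 1) / (n_permutations + 1)

# Made-up measurements, for illustration only.
treated = [5.1, 4.9, 6.2, 5.8, 6.0, 5.5]
control = [4.8, 5.0, 4.6, 5.2, 4.9, 4.7]
print(permutation_p_value(treated, control))
```

A small p-value says the pattern would be surprising under pure label-shuffling; it does not, by itself, rule out the biases and wishful thinking that produced the comparison in the first place.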

OK, statistics also plays some other roles, such as making our predictions more accurate, but that doesn’t fit into the aphorism so nicely.

What else to say here? I am not sure. I created this page before I became a professional statistician, and statistics grew to be half this website. For more information on statistics, see… pretty much any page.

1 Role in science

Statistics is an Excellent Servant and a Bad Master:

This means that Galileo, Newton, Kepler, Hooke, Pasteur, Mendel, Lavoisier, Maxwell, von Helmholtz, Mendeleev, etc. did their work without anything that resembled modern statistics, and that Einstein, Curie, Fermi, Bohr, Heisenberg, etc. etc. did their work in an age when statistics was still extremely rudimentary. We don’t need statistics to do good research.

Indeed we do not. What we need statistics for is to ensure that marginally viable research is not 💩 research.

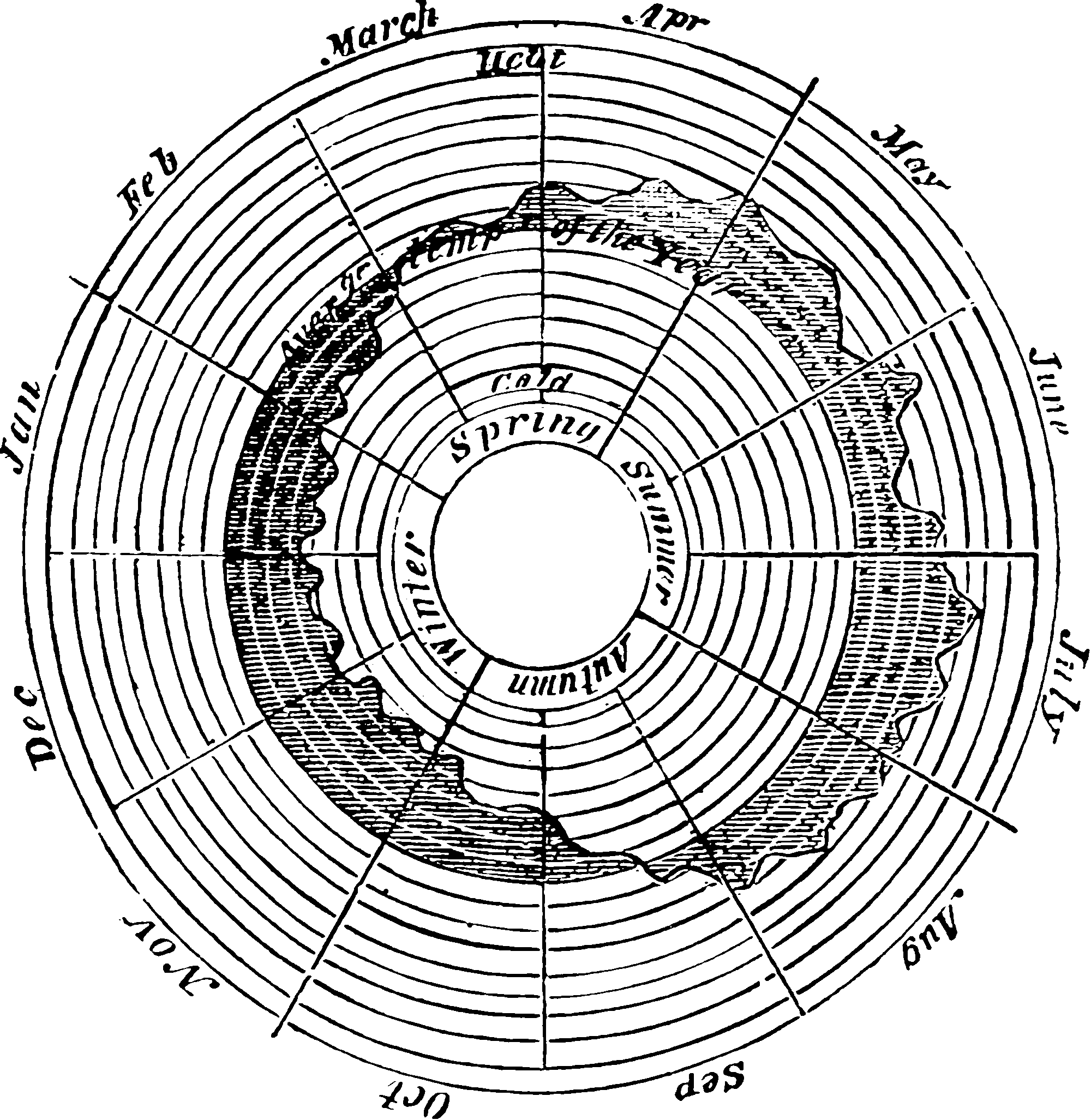

2 Exploratory data analysis

3 Unifying statistics and ML

I’m especially interested in modern fusion methods that harmonise what we would call statistical and machine-learning methods, and in the unnecessary terminological confusion between the two traditions. But I have nothing to say about that right now.

Why not read Kevin Murphy’s probml/pml-book: Probabilistic Machine Learning textbooks? They are free online.

4 Decisions

TODO: Introduce decision theory.

5 Tests

TODO: Introduce tests.

6 Taxonomies

Boaz Barak, in ML Theory with bad drawings, attempts one division of labour:

However, what we actually do is at least thrice-removed from this ideal:

- The model gap: We do not optimise over all possible systems, but rather a small subset of such systems (e.g., ones that belong to a certain family of models).

- The metric gap: In almost all cases, we do not optimise the actual measure of success we care about, but rather another metric that is at best correlated with it.

- The algorithm gap: We don’t even optimise the latter metric since it will almost always be non-convex, and hence the system we end up with depends on our starting point and the particular algorithms we use.
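To make the algorithm gap concrete: on a non-convex loss, plain gradient descent finds whichever local minimum its starting point drains into. The toy one-dimensional loss below is my own stand-in, not anything from Barak’s post; it has two minima, and the step size and step count are arbitrary assumptions.

```python
def loss(x: float) -> float:
    """A toy non-convex loss with two local minima (a stand-in for a real model's loss)."""
    return x**4 - 3 * x**2 + x

def grad(x: float) -> float:
    """Derivative of the toy loss."""
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x0: float, lr: float = 0.01, steps: int = 1000) -> float:
    """Plain fixed-step gradient descent from starting point x0."""
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)
    return x

for x0 in (-2.0, 2.0):
    x_final = gradient_descent(x0)
    print(f"start {x0:+.1f} -> x = {x_final:+.4f}, loss = {loss(x_final):.4f}")
```

Starting from -2 should settle near x ≈ -1.30, the lower of the two minima (loss about -3.51), while starting from +2 settles near x ≈ 1.13 (loss about -1.07). Same loss, same algorithm, different answers, purely because of the starting point.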

The magic of machine learning is that sometimes (though not always!) we can still get good results despite these gaps. Much of the theory of machine learning is about understanding under what conditions we can bridge some of these gaps.

The above discussion explains the “machine learning is just X” takes. The expressivity of our models falls under approximation theory. The gap between the success we want to achieve and the metric we can measure often corresponds to the difference between population and sample performance, which becomes a question of statistics. The study of our algorithms’ performance falls under optimisation.
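One standard way to write these three pieces as a single equation is the excess-risk decomposition associated with Bottou and Bousquet. In this sketch the notation is mine rather than Barak’s: $R(f)$ is the population risk of a predictor $f$ under some loss, $R^*$ is the best risk achievable by any predictor, $\mathcal{F}$ is the model family, $f_{\mathcal{F}}$ is the best member of $\mathcal{F}$, $\hat f_n$ minimises the empirical risk on $n$ samples over $\mathcal{F}$, and $\tilde f_n$ is whatever the optimiser actually returns.

$$
R(\tilde f_n) - R^*
= \underbrace{\big[R(f_{\mathcal{F}}) - R^*\big]}_{\text{approximation}}
+ \underbrace{\big[R(\hat f_n) - R(f_{\mathcal{F}})\big]}_{\text{estimation}}
+ \underbrace{\big[R(\tilde f_n) - R(\hat f_n)\big]}_{\text{optimisation}}
$$

The right-hand side telescopes to the left-hand side. Roughly, the first term is the model gap, the second is the population-versus-sample part of the metric gap, and the third is the algorithm gap. The surrogate-loss part of the metric gap (optimising a loss other than the one we care about) is not captured here and would need a further term.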

7 Textbooks, resources

- Encyclopedia of Machine Learning and Data Science would really like to be a definitive reference

- roboticcam/machine-learning-notes: My continuously updated Machine Learning, Probabilistic Models and Deep Learning notes and demos (2000+ slides) and video links. Richard’s notes are a masterclass in 80/20ing your note-taking. Breathtakingly ambitious, well explained, a little bit scruffy.

- Jean Gallier and Jocelyn Quaintance, Algebra, Topology, Differential Calculus, and Optimization Theory for Computer Science and Machine Learning, 2188 pages as of 2022/10/30, and growing.

- Arne Hallam’s Home Page includes some excellent lectures and tutorials on statistics