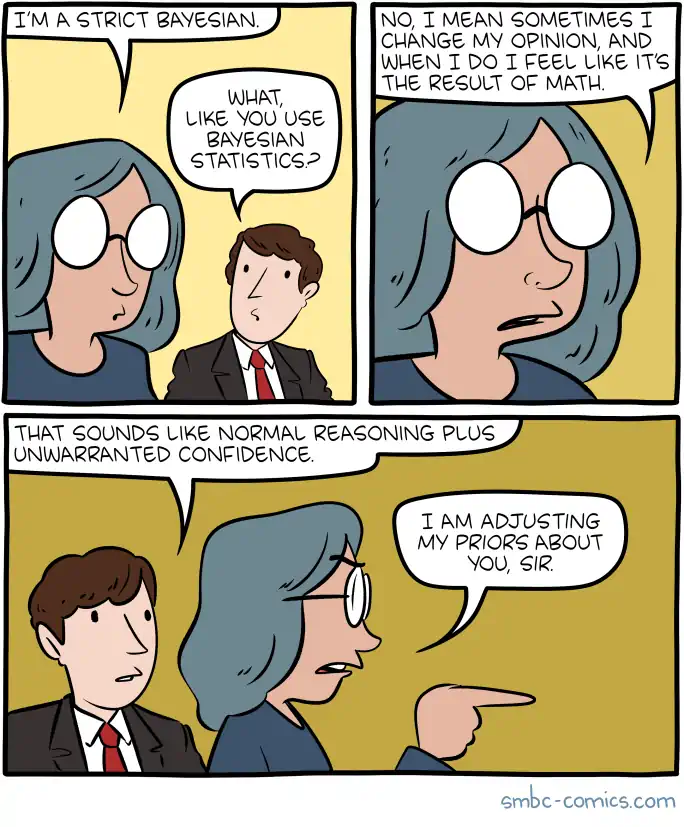

Saying “Bayes” is not enough

The other secret steps to doing Bayesian statistics

2016-05-30 — 2023-05-19

Wherein Bayesian Methods Are Acknowledged but Their Insufficiency Is Asserted Due to Heavy Mathematical and Computational Burdens, and Because Priors and Hypothesis Generation Are Not Provided by Bayes.

Bayesian statistics is not sufficient to describe actually-existing scientific practice. There are various barriers, mathematical, computational, and practical, that prevent us from being fully Bayesian in practice.

If you find yourself saying “\(P(A\mid B) = \frac{P(A)\,P(B\mid A)}{P(B)}\); all the rest is commentary”, then… a naïve reading of this apparent endorsement of Bayesian statistics both underestimates how very much commentary there is and excludes the important stuff that is not usefully a commentary on the measure-theoretic treatment of belief.

Don’t get me wrong, I use Bayesian statistical methods all the time, and these tools are incredibly useful to me. Bayesian statistics is great for getting a handle on reasonable beliefs and even informally it is a great crutch to prevent us from being excessively certain. But.

I am a humble practitioner, so my instinctive first objection is that inference is mathematically challenging: Bayesian reasoning frequently requires difficult manipulations of integral calculus, even for apparently basic problems. Is the model simple but the likelihood outside the exponential family? Is the likelihood in the exponential family but the prior non-conjugate? I know no one who can do these integrals in their head, except with tedious slowness. Even when the mathematics is not too awful, I often find that basic stuff is computationally prohibitive; even simple Bayesian logistic regression can grind my laptop to a halt. In sufficiently nasty cases, exact inference is #P-hard. Further, there are many practical difficulties. For example, if I start from sufficiently opinionated priors, I can fail to find the truth within any sensible budget of data.
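To make the opinionated-prior point concrete, here is a minimal sketch using the Beta-Binomial model, one of the few conjugate cases where the update is closed-form. The prior Beta(1000, 1), the perfectly balanced fair-coin data, and the helper names are all hypothetical choices for illustration.

```python
def beta_binomial_posterior(a, b, heads, tails):
    """Conjugate update: a Beta(a, b) prior plus binomial data gives Beta(a + heads, b + tails)."""
    return a + heads, b + tails


def beta_mean(a, b):
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)


# Hypothetical, very opinionated prior: "this coin almost always lands heads".
prior_a, prior_b = 1000.0, 1.0

# Hypothetical data from a fair coin (true bias 0.5), observed as exactly half heads.
for n in [10, 100, 1_000, 10_000, 100_000]:
    a, b = beta_binomial_posterior(prior_a, prior_b, heads=n // 2, tails=n // 2)
    print(f"n={n:>7}: posterior mean = {beta_mean(a, b):.3f}")
```

With these numbers the posterior mean is still above 0.95 after 100 fair flips, and only gets near 0.5 after tens of thousands. A prior that put zero mass near 0.5 would never get there at all.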

But maybe that is only for doing “real” science and I should not worry so much about these details in casual use of Bayesian inference, where we are simply maintaining betting odds for various possibilities in our heads. Mayyyybe.

Even then, I simply lack the time to be Bayesian about many questions of interest, unless I delude myself into thinking I am smarter than I am. I might claim to be doing all manner of Bayesian arithmetic in my head when I update my opinions on real data, and I can offer you some odds. However, when I get out my notebook and attempt to cross-check my supposed “posterior updates” with pencil and paper, I am highly likely to find that I got my intuitive mental arithmetic wrong, even in apparently trivial cases. Maybe some people are better at this than me, but I hold that even if you are substantially smarter than I, you cannot add much complexity to any given estimate before you need a notepad and a computer, or a computing cluster, to actually do the inference we claim to be doing.

This is not a thing that we can do mid-conversation. It is a thing we can attempt offline with some quiet time, or perhaps get fast at for certain classes of commonly encountered problems. It is not a way that we can afford to handle many novel hypotheses each day, what with the need to shower and eat etc. Which hypotheses do we treat rigorously? That is in itself a difficult Bayesian optimization problem, with a forbiddingly complex mathematical and computational cost attached.
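As an example of an “apparently trivial case” in which intuition goes astray, consider the textbook base-rate problem, with hypothetical numbers: a condition with 1% prevalence, and a test with 99% sensitivity and 95% specificity. A minimal sketch of the pencil-and-paper check:

```python
def posterior_given_positive(prevalence, sensitivity, specificity):
    """P(condition | positive test) via Bayes' rule."""
    p_pos_given_cond = sensitivity
    p_pos_given_no_cond = 1.0 - specificity  # false-positive rate
    # Total probability of a positive result: the P(B) in Bayes' rule.
    p_pos = p_pos_given_cond * prevalence + p_pos_given_no_cond * (1.0 - prevalence)
    return p_pos_given_cond * prevalence / p_pos


print(posterior_given_positive(prevalence=0.01, sensitivity=0.99, specificity=0.95))
```

The answer is about 1/6 (0.0099 / 0.0594), a long way from the “99% sure” that the test’s headline sensitivity invites.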

More stringent theoreticians would raise profound objections to universalizing claims of Bayes-as-science. A philosopher of science might mention that Bayesian statistics is not sufficient to generate hypotheses, only to compare them. A probabilist would mention that inference can be badly behaved if the model class does not contain a true model (and almost no model is true), and would probably raise other objections besides. A decision theorist might demand that you actually provide an action rule to plug into your inference procedure, because the likelihood principle does not apply in open-model settings. I am not an expert in those theoretical domains, but I mention them here because they were at least name-checked during my training, and they are easy to miss if you come to this field from the outside.

This page is for links and further notes on the theme of claims that Bayesian statistics is a principle for daily life, or for the project of science.

That is one way of doing things: the resource-bounded way. On the other hand, I could decide that compute is no object and do whatever calculations are required, in which case I will be doing Solomonoff induction etc. See AIXI for that intensely theoretical approach.

Having written this piece, I notice that I had already found some grumpy rationalists complaining about other rationalists’ Bayes cred, so… maybe there is sufficient snark out there and I do not need to add any more.

Deborah Mayo, in “You May Believe You Are a Bayesian But You Are Probably Wrong”, draws together some highlights of Stephen Senn (Senn 2011):

It is hard to see what exactly a Bayesian statistician is doing when interacting with a client. There is an initial period in which the subjective beliefs of the client are established. These prior probabilities are taken to be valuable enough to be incorporated in subsequent calculation. However, in subsequent steps the client is not trusted to reason. The reasoning is carried out by the statistician. As an exercise in mathematics it is not superior to showing the client the data, eliciting a posterior distribution and then calculating the prior distribution; as an exercise in inference Bayesian updating does not appear to have greater claims than ‘downdating’ and indeed sometimes this point is made by Bayesians when discussing what their theory implies. …
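Mathematically, the ‘downdating’ point is just that Bayes’ rule runs equally well backwards: posterior ∝ prior × likelihood, so prior ∝ posterior / likelihood wherever the likelihood is positive. A minimal sketch on a discrete set of hypotheses; the coin biases, the prior, and the 7-heads-in-10 data are hypothetical.

```python
def normalize(weights):
    """Rescale non-negative weights so they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]


def update(prior, likelihood):
    """Posterior is proportional to prior times likelihood."""
    return normalize([p * l for p, l in zip(prior, likelihood)])


def downdate(posterior, likelihood):
    """Prior is proportional to posterior divided by likelihood (every likelihood value must be > 0)."""
    return normalize([q / l for q, l in zip(posterior, likelihood)])


# Hypothetical coin biases and a hypothetical elicited prior over them.
biases = [0.2, 0.5, 0.8]
prior = [0.25, 0.5, 0.25]

# Likelihood of 7 heads in 10 flips under each bias; the binomial coefficient cancels on normalizing.
heads, flips = 7, 10
likelihood = [b**heads * (1 - b) ** (flips - heads) for b in biases]

posterior = update(prior, likelihood)
recovered_prior = downdate(posterior, likelihood)
print(posterior)
print(recovered_prior)  # equals `prior` up to floating-point error
```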

Richard Ngo, Against strong bayesianism:

I want to lay out some intuitions about why bayesianism is not very useful as a conceptual framework for thinking either about AGI or human reasoning. This is not a critique of bayesian statistical methods; it’s instead aimed at the philosophical position that bayesianism defines an ideal of rationality which should inform our perspectives on less capable agents, also known as “strong bayesianism”. As described here:

The Bayesian machinery is frequently used in statistics and machine learning, and some people in these fields believe it is very frequently the right tool for the job. I’ll call this position “weak Bayesianism.” There is a more extreme and more philosophical position, which I’ll call “strong Bayesianism,” that says that the Bayesian machinery is the single correct way to do not only statistics, but science and inductive inference in general — that it’s the “aspirin in willow bark” that makes science, and perhaps all speculative thought, work insofar as it does work.

It is good. Go read it. Or, if you prefer visual learning…

1 Does my estimator converge to the frequentist one?

Sometimes. This is fraught in the M-open setting, and far from guaranteed in the nonparametric setting. See, e.g., Stuart (2010) for the kind of extra work that needs doing there.
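In the easy case (a well-specified, finite-dimensional model, with a prior that puts mass near the truth) the posterior does concentrate around the frequentist maximum-likelihood estimate as data accumulate. A minimal sketch with the Beta-Binomial model and simulated flips of a hypothetical coin with bias 0.3; none of this carries over automatically to the M-open or nonparametric settings.

```python
import random


def posterior_mean_and_mle(heads, n, a=1.0, b=1.0):
    """Posterior mean of the bias under a Beta(a, b) prior, alongside the MLE heads / n."""
    return (a + heads) / (a + b + n), heads / n


rng = random.Random(0)
true_bias = 0.3  # hypothetical
heads = n = 0
for target_n in [10, 100, 1_000, 10_000]:
    # Extend one simulated stream of coin flips up to target_n flips.
    while n < target_n:
        heads += int(rng.random() < true_bias)
        n += 1
    post_mean, mle = posterior_mean_and_mle(heads, n)
    print(f"n={n:>6}: posterior mean={post_mean:.4f}  MLE={mle:.4f}  gap={abs(post_mean - mle):.2e}")
```

Under the uniform Beta(1, 1) prior the gap is |n - 2k| / (n(n + 2)) for k heads, so it is at most 1/(n + 2) whatever the data.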

2 Is my likelihood correct?

Not usually, no. Equivalently, is the true model one of my hypotheses? No. See M-open. This has troubling implications for the quality of our inference if we are not careful, and in particular means we cannot just update with the likelihood and hope our inference is valid for all possible decision rules.
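Here is a minimal sketch of what invalid updating can look like, with hypothetical numbers: the model assumes observations are Normal with known standard deviation 1, but the data actually have standard deviation 3. Under a flat prior on the mean, the model’s 95% posterior interval is the sample mean ± 1.96/√n, which is three times too narrow, so it covers the true mean far less often than advertised. The function name and simulation settings are illustrative assumptions.

```python
import random
import statistics


def credible_interval_coverage(true_sd, assumed_sd=1.0, n=50, reps=2000, seed=1):
    """Fraction of simulated datasets whose 95% posterior interval for the mean
    (flat prior, Normal likelihood with the *assumed* known sd) contains the true mean 0."""
    rng = random.Random(seed)
    half_width = 1.96 * assumed_sd / n**0.5
    hits = 0
    for _ in range(reps):
        data = [rng.gauss(0.0, true_sd) for _ in range(n)]
        xbar = statistics.fmean(data)  # posterior mean under the flat prior
        hits += int(abs(xbar) <= half_width)
    return hits / reps


print(credible_interval_coverage(true_sd=1.0))  # well-specified: close to 0.95
print(credible_interval_coverage(true_sd=3.0))  # misspecified: far below 0.95 (theory says about 0.49)
```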

3 Computational constraints

Some inference is so expensive that we cannot perform it exactly in a convenient time frame even for one model, let alone over a large, or even infinite, hypothesis space. Approximations and optimal estimates need to be considered.
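To see why exact computation gets expensive, here is a minimal sketch of brute-force posterior evaluation on a truncated grid for Bayesian logistic regression. The toy data, the standard-normal priors, and the 101-point-per-dimension grid are hypothetical choices; in practice one would reach for MCMC or variational approximations instead. The point is the count: g grid points per parameter means g**d likelihood evaluations for d parameters.

```python
import itertools
import math


def log_sigmoid(s):
    """Numerically stable log(1 / (1 + exp(-s)))."""
    if s >= 0:
        return -math.log1p(math.exp(-s))
    return s - math.log1p(math.exp(s))


def log_posterior(weights, xs, ys, prior_sd=1.0):
    """Unnormalized log posterior: independent N(0, prior_sd^2) priors plus a Bernoulli-logit likelihood."""
    lp = -sum(w * w for w in weights) / (2 * prior_sd**2)
    for x, y in zip(xs, ys):
        z = sum(w * xi for w, xi in zip(weights, x))
        lp += log_sigmoid(z) if y == 1 else log_sigmoid(-z)
    return lp


def grid_posterior_mean(xs, ys, grid):
    """Posterior mean of the weights by brute-force summation over every grid point."""
    d = len(xs[0])
    points = list(itertools.product(grid, repeat=d))
    log_ps = [log_posterior(p, xs, ys) for p in points]
    m = max(log_ps)
    ws = [math.exp(lp - m) for lp in log_ps]  # subtract the max to avoid underflow
    total = sum(ws)
    return [sum(w * p[j] for w, p in zip(ws, points)) / total for j in range(d)]


# Hypothetical toy data: an intercept column plus one feature, so d = 2.
xs = [(1.0, -2.0), (1.0, -1.0), (1.0, 0.0), (1.0, 1.0), (1.0, 2.0)]
ys = [0, 0, 1, 1, 1]
grid = [i / 10 for i in range(-50, 51)]  # 101 points per dimension on [-5, 5]

print(grid_posterior_mean(xs, ys, grid))
for d in [1, 2, 5, 10, 20]:
    print(f"d={d:>2}: {len(grid) ** d:.3e} grid points")
```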

TBC

4 Are my priors OK?

Even in vanilla inference, this is a whole research field in itself. A starting prior is a delicate thing.

Should we go for an uninformative prior? What even counts as an uninformative prior is a whole question in itself. Short version: an uninformative prior is a pain in the arse that will break your inference.
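One concrete way an “uninformative” prior can break inference, sketched with the conjugate Beta-Binomial model: the Haldane prior Beta(0, 0), which is flat on the log-odds scale, is improper, and it stays improper after observing data that are all successes or all failures. The helper below is hypothetical; it only tracks the Beta parameters and checks propriety.

```python
def beta_posterior(a, b, successes, failures):
    """Conjugate Beta-Binomial update; returns the posterior (a, b) and whether it is a proper distribution."""
    post_a, post_b = a + successes, b + failures
    # Beta(a, b) has a finite normalizing constant only when a > 0 and b > 0.
    return post_a, post_b, (post_a > 0 and post_b > 0)


# Haldane prior Beta(0, 0): flat on the log-odds scale, but improper.
print(beta_posterior(0, 0, successes=7, failures=3))   # (7, 3, True): fine
print(beta_posterior(0, 0, successes=10, failures=0))  # (10, 0, False): still improper
# The uniform prior Beta(1, 1) stays proper whatever the data.
print(beta_posterior(1, 1, successes=10, failures=0))  # (11, 1, True)
```

Ten heads in ten flips is hardly exotic data, yet under this prior there is no posterior to report.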

More generally, there are pathological cases where apparently sensible priors might not be proper for weird reasons. See Larry Wasserman’s summary of a piece by Stone (Stone 1976).

Also, because Wasserman did a lot of complaining about this, see Freedman’s Neglected Theorem:

it is easy to prove that for essentially any pair of Bayesians, each thinks the other is crazy.

TBC

5 Where does my hypothesis set even come from?

Let us return to nostalgebraist’s grumbling about strong Bayes as a methodology for science. Key point: you do not have all the possible models, and you would not have the computational resources to assign them posterior probabilities if you did; assuming that you can do Bayesian learning over them is thus a broken model for learning about the world (although cf. AIXI for a theoretical idealization that does exactly that, at incomputable cost).
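A minimal sketch of what happens when the truth is not in the hypothesis set, with hypothetical numbers: we entertain only coin biases 0.3 and 0.9, but the coin is actually fair. The posterior does not hedge. It piles up on whichever hypothesis is closest to the truth in Kullback–Leibler divergence (here 0.3, at about 0.087 nats versus about 0.51 nats for 0.9) and becomes ever more certain of it.

```python
import math
import random


def posterior_over_hypotheses(biases, prior, heads, tails):
    """Posterior over a finite set of coin biases, computed in log space to avoid underflow."""
    log_post = [math.log(p) + heads * math.log(b) + tails * math.log(1 - b)
                for b, p in zip(biases, prior)]
    m = max(log_post)
    ws = [math.exp(lp - m) for lp in log_post]
    total = sum(ws)
    return [w / total for w in ws]


rng = random.Random(42)
true_bias = 0.5      # the truth, which is NOT among the hypotheses
biases = [0.3, 0.9]  # the only hypotheses we entertain
prior = [0.5, 0.5]

heads = tails = 0
for n in range(1, 1001):
    if rng.random() < true_bias:
        heads += 1
    else:
        tails += 1
    if n in (10, 100, 1000):
        print(n, posterior_over_hypotheses(biases, prior, heads, tails))
```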

TBC — connection to Lakatosian-style falsificationism.

6 So Bayesian statistics is useless then?

No, it is extremely useful. It is simply not magic. It is hard, has weird failure modes, and intimidating mental and computational requirements. Being sloppy about this will cause me to update my priors towards you making mistakes about your posteriors.

7 Are my axioms correct?

TBC.

See Wasserman on the Finite Additivity axiomatization for a taster.

8 Uncertain probabilities of weird stuff

Pascal’s wager, Pascal’s mugging etc. Duncan (2007); Neiva (2022).