Containerized apps (for scientists)

Doing things that previously took 0.5 computers using 0.4 computers

2015-11-05 — 2022-08-29

Wherein Containerized Apps Are Presented as Lightweight, Reproducible Execution Environments for Science, and Apptainer Is Noted as an HPC‑oriented Alternative to Docker, While Inner‑loop Development Workflows Are Considered.

Assumed audience:

People who want to do containerization for machine learning research

Content warning:

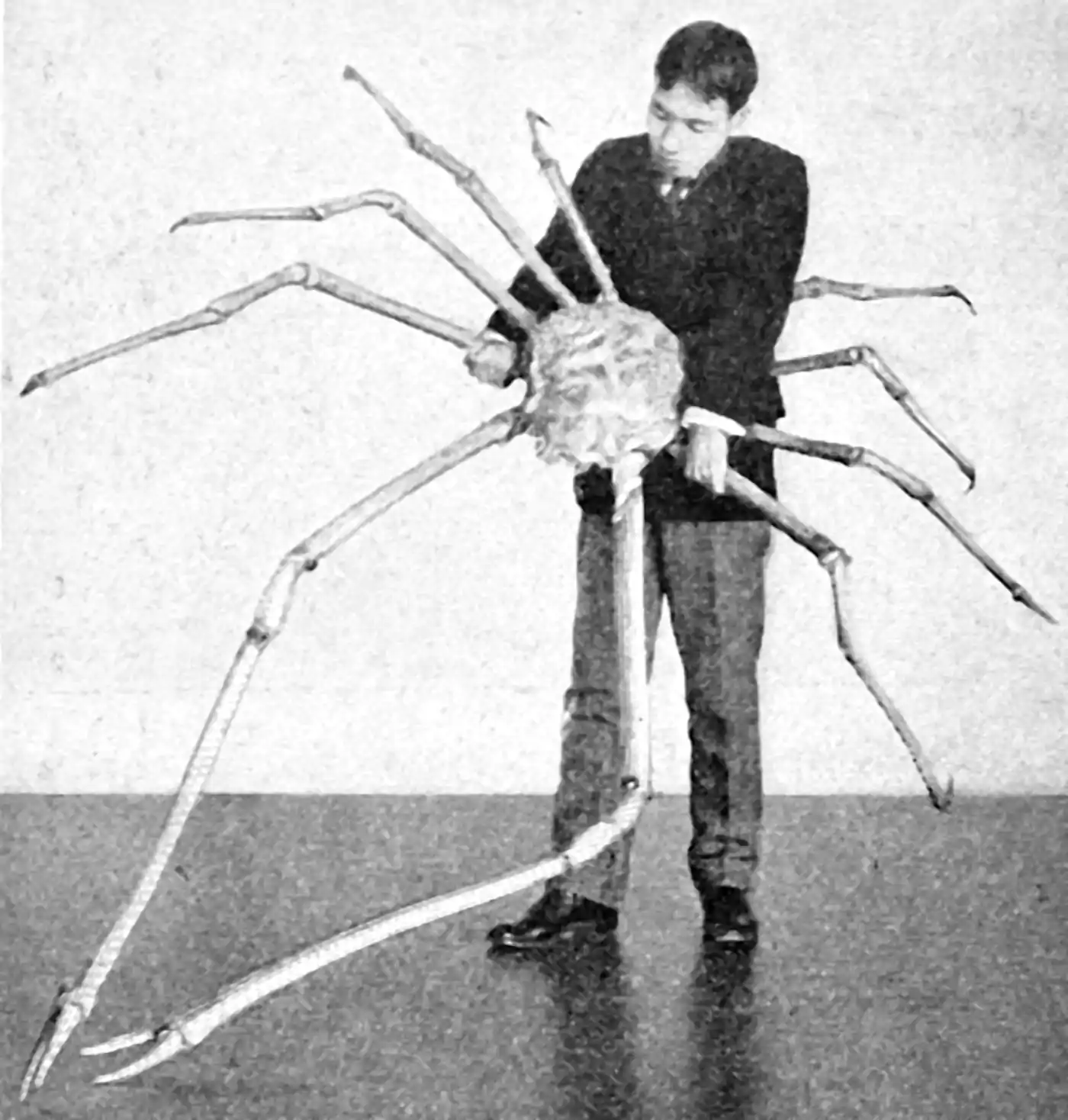

The needs of ML research people are not the usual scaling-web-apps type needs of many containerization users. Obsolete advice danger. Thalassophobes, there is an unsettlingly large crab.

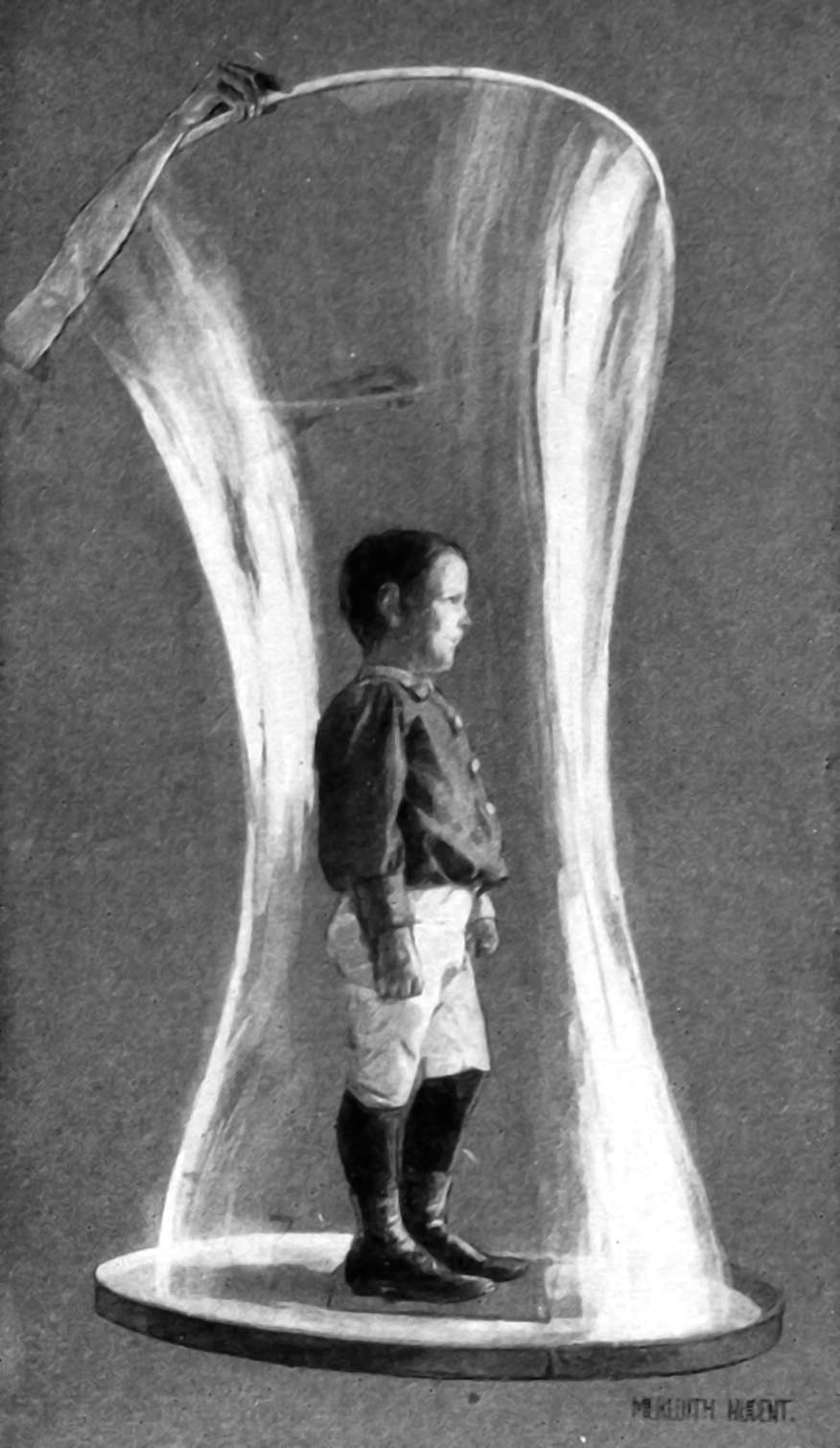

Containers are a lighter, hipper alternative to virtual machines. AFAICT they attempt to make provisioning services more like installing an app than building a machine, because the (recommended) unit of packaging is an app or service rather than a machine. This emphasis somehow leads to even more webinars. Containerization is related to sandboxing, in that containerized apps are usually sandboxed, although the two systems can conflict. Containers are typically intended to be lightweight, reproducible execution environments for some program which might be deployed in the cloud.

I care about this because I hope to use it for practical cloud ML in reproducible research, as part of my machine learning best practice; this document is biased accordingly.

Most containerization systems in practice comprise several moving parts bolted together. They provide systems for building reproducible sets of software dependencies to execute some desired process in a controlled and not-crazy environment, separate from your other apps. There is a built-in system for making recipes for other people/machines to duplicate what you are doing exactly, or at least, close enough. That is, it is a system for doing package management by using supporting features of the host OS to make isolation easier. (Nix seems to achieve something similar using only clever path management.)

The most common hosts for containers are, or were, Linux-ish, but I believe there are also Windows/macOS solutions these days. I have a vague notion that they run VMs to emulate a container-capable host OS. Or something? Have not checked. For my purposes Linux host OSes are sufficient.

Learn more from Julia Evans’s How Containers work.

When you first start using containers, they seem really weird. Is it a process? A virtual machine? What’s a container image? Why isn’t the networking working? I’m on a Mac but it’s somehow running Linux sort of? And now it’s written a million files to my home directory as root? What’s HAPPENING?

And you’re not wrong. Containers seem weird because they ARE really weird. They’re not just one thing, they’re what you get when you glue together 6 different features that were mostly designed to work together but have a bunch of confusing edge cases.

Another way to understand what is going on is to build your own toy docker system. See Containers the hard way: Gocker: A mini Docker written in Go.

Also insightful: the build toolchain is well explained by Tiny Container Challenge: Building a 6kB Containerized HTTP Server!, which distinguishes between the crap your image accumulates from building and the crap your image needs for running.
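The build-versus-run distinction is commonly handled with a multi-stage build. Here is a minimal sketch that renders such a Dockerfile from Python. It assumes a Python project with a `requirements.txt`; the base image tag, the `/install` prefix and the `train.py` entry point are hypothetical placeholders of mine, not taken from the linked post.

```python
from textwrap import dedent


def render_multistage_dockerfile(base="python:3.11-slim", entry="train.py"):
    """Return Dockerfile text with a build stage and a slimmer runtime stage.

    `base` and `entry` are hypothetical placeholders for illustration.
    """
    return dedent(f"""\
        # Stage 1: install dependencies; build-time leftovers stay in this stage.
        FROM {base} AS build
        COPY requirements.txt .
        RUN pip install --no-cache-dir --prefix=/install -r requirements.txt

        # Stage 2: copy across only what is needed at run time.
        FROM {base}
        COPY --from=build /install /usr/local
        WORKDIR /workspace
        COPY {entry} .
        CMD ["python", "{entry}"]
        """)


if __name__ == "__main__":
    print(render_multistage_dockerfile())
```

Only the final stage ends up in the shipped image, so whatever the first stage needed for building is left behind.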

1 For reproducible research

Containerization may not be the ultimate tool for reproducible research but it is a start.

There seem to be two dominant toolchains of interest in this domain: Docker and Apptainer (formerly Singularity). Docker is popular on the wider internet but Apptainer is more popular for HPC. Docker is cross-platform and easy to run on your personal machine, while Apptainer is Linux-only (i.e. if I want to use Apptainer from not-Linux, I must install a Linux VM). Apptainer can run many Docker images (all?), so for some purposes we may as well just assume “Docker” and bear in mind that we might use Apptainer as an implementation detail. 🤞
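To make the "Apptainer as an implementation detail" idea concrete, here is a rough sketch of a helper that assembles an `apptainer exec` command line against a Docker image via the `docker://` URI scheme. The helper name, the image tag and the paths are my own illustrative assumptions; it only builds the argument list and does not need Apptainer installed.

```python
import shlex


def apptainer_exec(docker_ref, command, binds=(), gpu=False):
    """Build an `apptainer exec` argv that runs `command` in a Docker image.

    `docker_ref` is an image reference such as "python:3.11-slim".
    `binds` is a sequence of (host_path, container_path) pairs.
    """
    argv = ["apptainer", "exec"]
    if gpu:
        argv.append("--nv")  # expose NVIDIA GPUs and driver libraries
    for host_path, container_path in binds:
        argv += ["--bind", f"{host_path}:{container_path}"]
    argv.append(f"docker://{docker_ref}")
    argv += list(command)
    return argv


if __name__ == "__main__":
    # Hypothetical cluster paths, for illustration only.
    argv = apptainer_exec(
        "python:3.11-slim",
        ["python", "train.py"],
        binds=[("/scratch/me/data", "/data")],
        gpu=True,
    )
    print(shlex.join(argv))
```

On a real cluster one would pass the resulting list to `subprocess.run`, or paste the printed line into a job script.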

A challenge is that most containerization tutorials are about how to deploy a webapp for my unicorn dotcom startup, which is how they get featured on Hacker News. That is useless to me. I want to Do Science™️. The thing which confused the crap out of me starting out is “what is the ‘inner loop’ of containerized workflow for science?” I don’t care about all this stuff about deploying vast swarms of PostgreSQL servers or whatever, or finding someone else’s pre-rolled Docker image; I need to develop some research. How do I get my code into one of these containers? How do I change which code is in the container as the project evolves? How do I get my data in there? How does the blasted thing get onto the cluster? And why do so few tutorials start from there?

Here are some more pragmatically useful ones for me. (TODO: rank in some pedagogic order.)

- Tiffany Timbers gives a brisk run-through for academics.

- Jon Zelner goes in-depth with R in a series culminating in continuous integration for science.

- See Keunwoo Choi’s guide for researchers by example.

- The Alan Turing Institute containerization guide is an excellent introduction to how to use containers for reproducible science.

- Timothy Ko shows off a minimal Python app development workflow cycle.

- Pawsey containerization class (possibly based off Software Carpentry?).

- Alan Turing Institute Docker intro.

A worked example, including many details and caveats that are normally glossed over, is Jeremy Kun’s Searching for RH Counterexamples with Docker.
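One common answer to the inner-loop questions above is to leave code and data on the host and bind-mount them into a throwaway container, so that editing a file never requires rebuilding the image. A minimal sketch follows, assuming Docker is the runtime; the `/workspace` and `/data` mount points, the image tag and `train.py` are hypothetical choices of mine.

```python
import shlex
from pathlib import Path


def docker_run_argv(image, command, code_dir=".", data_dir=None):
    """Build a `docker run` argv that mounts host code (and optionally data).

    Code is mounted read-write at /workspace, which is also the working
    directory; data is mounted read-only at /data. Both mount points are
    illustrative conventions, not anything Docker requires.
    """
    argv = ["docker", "run", "--rm"]  # --rm: delete the container on exit
    code = Path(code_dir).resolve()  # bind mounts need absolute host paths
    argv += ["-v", f"{code}:/workspace", "-w", "/workspace"]
    if data_dir is not None:
        data = Path(data_dir).resolve()
        argv += ["-v", f"{data}:/data:ro"]
    argv.append(image)
    argv += list(command)
    return argv


if __name__ == "__main__":
    argv = docker_run_argv(
        "python:3.11-slim", ["python", "train.py"], data_dir="data"
    )
    print(shlex.join(argv))
```

The trade-off is that the image then pins only the dependencies, not the code, so the code version has to be recorded separately (e.g. as a git commit) for the run to be reproducible.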

2 Developing using containers

Tedious if done naively, although apparently I should try devcontainers.
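For orientation, a devcontainer is configured by a `.devcontainer/devcontainer.json` file in the project. The sketch below writes a small one from Python; the project name, base image, post-create command and editor extension are all hypothetical choices of mine, and I have not tuned this for any particular ML stack.

```python
import json
from pathlib import Path


def write_devcontainer(project_dir, image="python:3.11-slim"):
    """Write a minimal .devcontainer/devcontainer.json and return its path."""
    config = {
        "name": "research-sandbox",  # hypothetical project name
        "image": image,
        # Runs once after the container is created; assumes requirements.txt.
        "postCreateCommand": "pip install -r requirements.txt",
        "customizations": {"vscode": {"extensions": ["ms-python.python"]}},
    }
    target = Path(project_dir) / ".devcontainer" / "devcontainer.json"
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(json.dumps(config, indent=2) + "\n")
    return target


if __name__ == "__main__":
    print(write_devcontainer("."))
```

An editor with devcontainer support should then offer to reopen the project inside that container, which takes care of mounting the source tree.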

3 Docker

The most common containerization tool, as measured by number of tutorials. Fiddly for ML but it can be worth it apparently. See Docker.

4 Apptainer

A containerization solution targeting scientists. See Apptainer.

5 Podman

Podman is a different and, I gather, more general runtime.

Podman and Buildah for Docker users:

Now that we’ve discussed some of the motivation it’s time to discuss what that means for the user migrating to Podman. There are a few things to unpack here and we’ll get into each one separately:

- You install Podman instead of Docker. You do not need to start or manage a daemon process like the Docker daemon.

- The commands you are familiar with in Docker work the same for Podman.

- Podman stores its containers and images in a different place than Docker.

- Podman and Docker images are compatible.

- Podman does more than Docker for Kubernetes environments.
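If the basic commands really are interchangeable, a script can treat the runtime as a parameter. A small sketch under that assumption: the helper names are mine, and it covers only the plain `run --rm` case, where I am confident the two CLIs agree.

```python
import shutil


def pick_runtime(preferred=("podman", "docker"), which=shutil.which):
    """Return the first container runtime found on PATH.

    `which` is injectable so the lookup can be faked in tests.
    """
    for name in preferred:
        if which(name):
            return name
    raise RuntimeError(f"none of {preferred} found on PATH")


def run_argv(runtime, image, command):
    """Build the same `run --rm` invocation for either runtime."""
    return [runtime, "run", "--rm", image, *command]


if __name__ == "__main__":
    try:
        print(run_argv(pick_runtime(), "alpine", ["echo", "hello"]))
    except RuntimeError as err:
        print(err)
```

Note that, as the list above says, the two tools store images in different places, so an image built with one is not automatically visible to the other.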

Podman vs Docker: What are the differences?

Docker is a monolithic, powerful, independent tool with all the benefits and drawbacks implied, handling all of the containerization tasks throughout their entire cycle. Podman has a modular approach, relying on specialized tools for specific duties.

That last part sounds like an actual win for pedagogical reasons. What even is Docker doing most of the time?

6 LXD

LXD is another containerization standard/system. Because Docker is the de facto default, let’s look at this in terms of Docker.

LXD is a next generation system container and virtual machine manager. It offers a unified user experience around full Linux systems running inside containers or virtual machines.

LXD is image based and provides images for a wide number of Linux distributions. It provides flexibility and scalability for various use cases, with support for different storage backends and network types and the option to install on hardware ranging from an individual laptop or cloud instance to a full server rack.

When using LXD, you can manage your instances (containers and VMs) with a simple command line tool, directly through the REST API or by using third-party tools and integrations. LXD implements a single REST API for both local and remote access.

Where Podman is a Red Hat project, LXD is a Canonical project.