Databases for realtime stuff

For when log files seem dated

2020-02-18 — 2020-05-27

Wherein Real‑time Databases Are Surveyed and the Suitability of In‑memory Stores Such as Redis for Heavy Write Traffic and Millisecond Updates Is Noted, With Ring‑buffer RRD and Streaming Materialize Also Described.

Databases at the intersection of storing data and processing streams, for, e.g., time series forecasting and real-time analytics. Do you need a specialist database for this? Sometimes, irritatingly, it does seem useful to have a tool built to handle rapidly changing data.

1 Differential dataflow

In this book, we will work through the motivation and technical details behind differential dataflow, a computational framework built on top of timely dataflow intended for efficiently performing computations on large amounts of data and maintaining the computations as the data change.

Differential dataflow programs look like many standard “big data” computations, borrowing idioms from frameworks like MapReduce and SQL. However, once you write and run your program, you can change the data inputs to the computation, and differential dataflow will promptly show you the corresponding changes in its output. Promptly meaning in as little as milliseconds.

This relatively simple setup, write programs and then change inputs, leads to a surprising breadth of exciting and new classes of scalable computation. We will explore it in this document!
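To make the "change the inputs, see the changes in the output" idea concrete for myself, here is a toy sketch in plain Python. It is not the differential dataflow API (which is a Rust library) and says nothing about how it works internally. The class name `IncrementalCount` and the `(key, diff)` update format are my own assumptions for illustration. It maintains a grouped count and, for each batch of input changes, reports only the output rows that were retracted or added.

```python
from collections import Counter


class IncrementalCount:
    """Toy incrementally maintained `GROUP BY key, COUNT(*)` view.

    Hypothetical illustration only; this is NOT differential dataflow.
    """

    def __init__(self):
        self.counts = Counter()

    def update(self, changes):
        """Apply input changes and return the resulting output changes.

        `changes` is an iterable of (key, diff) pairs, where diff is +1 for
        an inserted record and -1 for a retracted one. The return value is a
        list of ((key, count), diff) pairs describing how the view changed.
        """
        # Collapse the batch into one net change per key.
        net = Counter()
        for key, diff in changes:
            net[key] += diff

        output_changes = []
        for key, diff in net.items():
            if diff == 0:
                continue  # the batch cancelled itself out for this key
            old = self.counts[key]
            new = old + diff
            if old != 0:
                output_changes.append(((key, old), -1))  # retract old row
            if new != 0:
                output_changes.append(((key, new), +1))  # assert new row
                self.counts[key] = new
            else:
                del self.counts[key]
        return output_changes
```

Feeding it `[("a", +1), ("a", +1), ("b", +1)]` and then `[("a", -1)]` touches only the row for `a` in the second step, which is the property that makes this style of computation cheap when inputs change a little at a time.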

2 Log files

Writing to a file is usually OK for my purposes, but sometimes it is not feasible due to concurrency problems.
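One workaround that sometimes suffices, assuming the concurrency problem is several writers appending to the same file on a Unix-like system, is an advisory lock around each append. A minimal sketch follows. The function name `append_record` is hypothetical, and `fcntl` is not available on Windows.

```python
import fcntl


def append_record(path, line):
    """Append one line to `path` while holding an exclusive advisory lock.

    Sketch only: the lock is advisory, so it protects against other writers
    that also take the lock, not against arbitrary programs.
    """
    with open(path, "a", encoding="utf-8") as f:
        fcntl.flock(f, fcntl.LOCK_EX)  # blocks until other lock holders release
        try:
            f.write(line.rstrip("\n") + "\n")
            f.flush()  # push buffered data to the OS before releasing the lock
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)
```

This serialises writers, so it will not scale to very heavy write traffic, which is roughly where the tools below start to look attractive.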

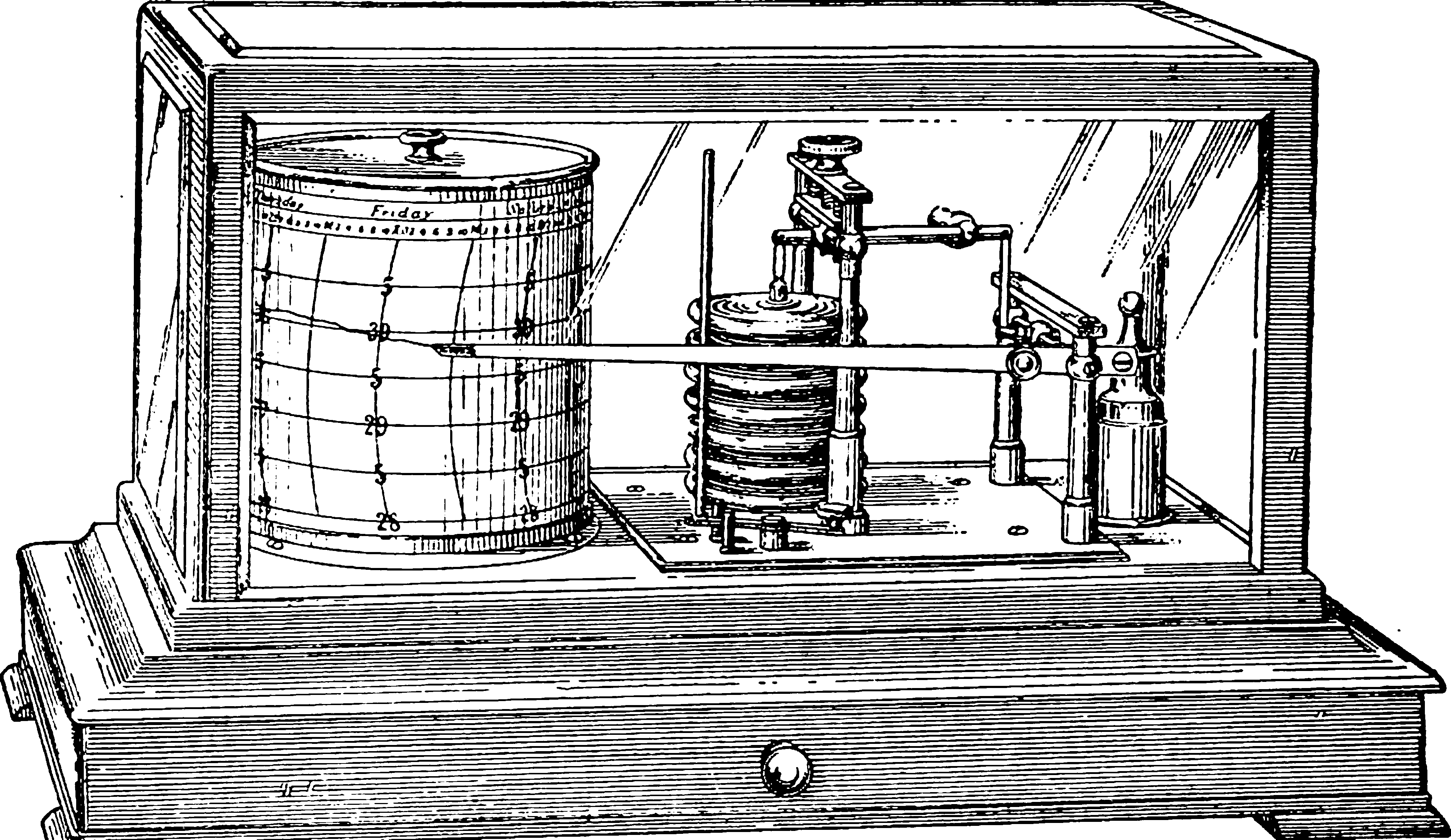

3 RRD

RRD is neither flashy nor new, but it is remarkably capable. It was designed for network and computer monitoring, but it can store and process basically any real-time data that fits in a ring buffer (i.e. where only a fixed maximum amount of history is of interest). I like the utilitarian aesthetic.

What data can be put into an RRD?

You name it, it will probably fit as long as it is some sort of time-series data. This means you have to be able to measure some value at several points in time and provide this information to RRDtool. If you can do this, RRDtool will be able to store it. The values must be numerical but don’t have to be integers…

This is handy for debugging something that crashes, when you want to know what happened just before the crash.
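The ring-buffer idea itself is easy to sketch in memory. The following is not RRDtool's API or file format, and it skips RRDtool's consolidation of old samples. It is only a minimal illustration of "keep the last N samples", and the class name `RingLog` is my own.

```python
from collections import deque


class RingLog:
    """Fixed-capacity store of (timestamp, value) samples.

    Hypothetical sketch of a ring buffer; once `capacity` samples are held,
    each new sample silently evicts the oldest one.
    """

    def __init__(self, capacity):
        self._buf = deque(maxlen=capacity)

    def record(self, timestamp, value):
        # Values must be numeric, but need not be integers.
        self._buf.append((timestamp, float(value)))

    def dump(self):
        """Return the retained samples, oldest first."""
        return list(self._buf)
```

After a crash handler calls `dump()`, you get the last `capacity` samples and nothing older, so memory use stays bounded however long the process ran.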

4 Redis

Redis is adept at write-heavy workloads, so time series stuff works. You can just run it without setting up a special dedicated server. Convenient for things that only just fit in your memory but that you need to process the crap out of, fast. Easy setup, built-in Lua interpreter, fashionable enough for wide support.
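One common way to keep a time series in Redis is a sorted set scored by timestamp. The sketch below assumes a client object with the redis-py methods `zadd`, `zrangebyscore` and `zremrangebyscore`. The helper names `record`, `trim` and `samples_between`, and the `"timestamp:value"` member encoding, are my own assumptions rather than anything Redis prescribes.

```python
def record(client, key, ts, value):
    """Store one sample in the sorted set `key`, scored by its timestamp."""
    # The member embeds the timestamp so that equal values recorded at
    # different times remain distinct set members.
    client.zadd(key, {f"{ts}:{value}": ts})


def trim(client, key, cutoff_ts):
    """Drop every sample with a timestamp at or before `cutoff_ts`."""
    client.zremrangebyscore(key, "-inf", cutoff_ts)


def samples_between(client, key, start_ts, end_ts):
    """Return [(timestamp, value), ...] with start_ts <= timestamp <= end_ts."""
    out = []
    for member in client.zrangebyscore(key, start_ts, end_ts):
        if isinstance(member, bytes):  # clients may return bytes by default
            member = member.decode()
        ts, value = member.split(":", 1)
        out.append((float(ts), float(value)))
    return out
```

With a server running locally, something like `client = redis.Redis()` from the redis-py package would be passed in as `client`. Calling `trim` periodically gives a crude ring buffer, much like the RRD approach above.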

These last two have met all my needs. Inevitably, when trying to find a solution to a problem, I got recommendations for many more, which I list below in the hope that someone else might need them. I do not.

5 InfluxDB

InfluxDB is a database designed to query time series live, by current time, relative age and so on. It is the sort of thing designed to run the kind of elaborate real-time situation visualization that evil overlords have in holographic displays in their lairs. Comes with free count aggregation and lite visualisations. Haven’t used it, just noting it here; will return if I need a dashboard of malevolence for my headquarters.

6 Druid

Druid, as used by Airbnb, is “a high-performance, column-oriented, distributed data store” that also claims to be good at events.

7 Prometheus

Prometheus is an open-source systems monitoring and alerting toolkit originally built at SoundCloud. […] Prometheus’s main features are:

- a multi-dimensional data model with time series data identified by metric name and key/value pairs

- PromQL, a flexible query language to leverage this dimensionality

- no reliance on distributed storage; single server nodes are autonomous

- time series collection happens via a pull model over HTTP

- pushing time series is supported via an intermediary gateway

- targets are discovered via service discovery or static configuration

- multiple modes of graphing and dashboarding support

Haven’t used it.

8 TimescaleDB

TimescaleDB is a real-time/time series extension to Postgres.

Haven’t used it.

9 Heroic

Heroic, by Spotify:

Heroic is our in-house time series database. We built it to address the challenges we were facing with near real-time data collection and presentation at scale. At the core are two key pieces of technology: Cassandra, and Elasticsearch. Cassandra acts as the primary means of storage with Elasticsearch being used to index all data. We currently operate over 200 Cassandra nodes in several clusters across the world serving over 50 million distinct time series.

Good onya. I have not used it.

10 RethinkDB

RethinkDB is a database that pushes updates to you instead of being polled. Recently open-sourced, eminent pedigree, haven’t used it.

11 Materialize

Materialize by materialize.io:

Materialize is a streaming database for real-time applications.

Materialize lets you ask questions of your live data, which it answers and then maintains for you as your data continue to change. The moment you need a refreshed answer, you can get it in milliseconds. Materialize is designed to help you interactively explore your streaming data, perform data warehousing analytics against live relational data, or just increase the freshness and reduce the load of your dashboard and monitoring tasks.

Materialize focuses on providing correct and consistent answers with minimal latency. It does not ask you to accept either approximate answers or eventual consistency. Whenever Materialize answers a query, that answer is the correct result on some specific (and recent) version of your data. Materialize does all of this by recasting your SQL92 queries as dataflows, which can react efficiently to changes in your data as they happen. Materialize is powered by timely dataflow, which connects the times at which your inputs change with the times of answers reported back to you.