Entropy vs information

MaxEnt(?), macrostates, subjective updating, epistemic randomness, Szilard engines, Gibbs paradox…

2010-12-01 — 2026-01-27

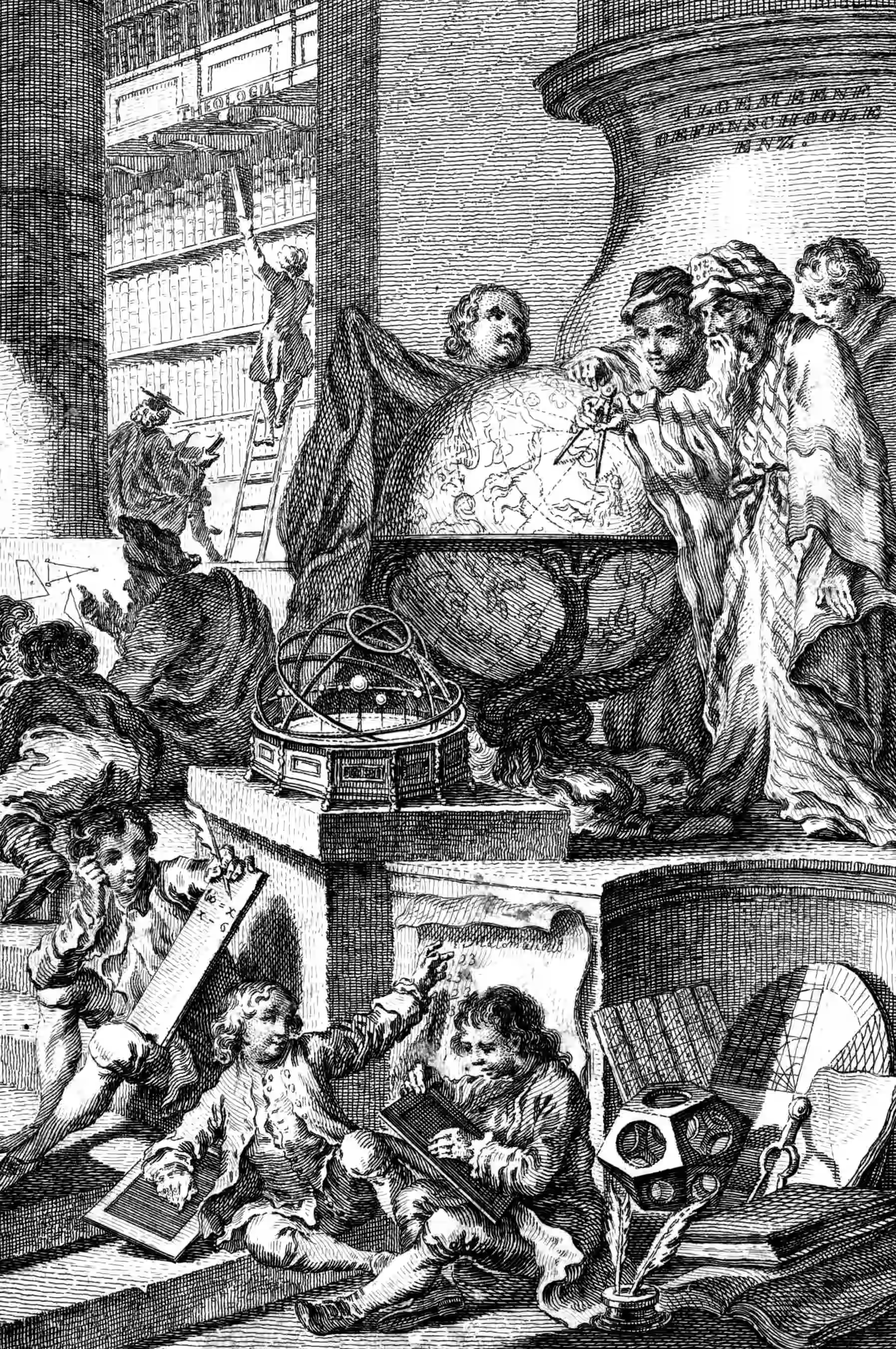

Wherein the Relation of Entropy and Information Is Examined, and Macrostates Are Shown to Be Defined as the Unique Markovian Partitions of Phase Space, Linking Thermodynamic Notions to Predictive Causal States.

Over at statistical mechanics of statistics we’re wondering about the connection between statistical mechanics and statistics, and that leads us to think about the connection between entropy and information.

Entropy, as a physics concept, and information, as a computer-science concept, look dang similar, yet they’re defined for different kinds of things.

How do they connect?

Related somehow: algorithmic statistics, information geometry.

Friendly reminder that entropy is not a property of an individual state/configuration of a system, but rather of a probability distribution over all the possible states/configurations (relative to some reference measure, if we want to get technical).

An apparently “messy” or “disorganized” configuration of a room is not by itself high entropy. By definition, any room configuration completely describes the room. In other words, there is no uncertainty about where every individual object is placed.

On the other hand, if we don’t know what the configuration of the room is, then we might describe its possible configurations with a probability distribution over room configurations.

If this probability distribution has close to maximal entropy (relative to a uniform reference measure), then all room configurations are nearly equally probable. If, moreover, there are many more messy configurations than clean ones, then a clean room is improbable simply because clean configurations are rare.
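To make the room example concrete, here is a minimal sketch, assuming a toy room with only 8 distinguishable configurations, 2 of which we arbitrarily call "clean". The numbers and the names `n_configs` and `clean` are made up for illustration. It computes the Shannon entropy of two states of knowledge: knowing the configuration exactly, and knowing nothing.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy (in bits) of a discrete distribution."""
    # Terms with p == 0 contribute nothing, by the convention 0 log 0 = 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)


n_configs = 8    # hypothetical number of room configurations
clean = [0, 1]   # hypothetical indices of the "clean" configurations

# State of knowledge 1: we know exactly which configuration the room is in.
# It does not matter whether that configuration is messy or clean.
known = [0.0] * n_configs
known[5] = 1.0

# State of knowledge 2: we know nothing, so every configuration is equally likely.
uniform = [1.0 / n_configs] * n_configs

h_known = shannon_entropy(known)      # no uncertainty at all
h_uniform = shannon_entropy(uniform)  # the maximum possible, log2(n_configs)

# Under the uniform distribution, "clean" is improbable only because
# few configurations are clean.
p_clean = sum(uniform[i] for i in clean)

print(h_known, h_uniform, p_clean)
```

The point-mass distribution has zero entropy even though configuration 5 is a "messy" one, and the uniform distribution attains the maximum of 3 bits. Entropy here is tracking what we know, not how tidy the room looks.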

Too plain? Try this. Shalizi and Moore (2003):

We consider the question of whether thermodynamic macrostates are objective consequences of dynamics, or subjective reflections of our ignorance of a physical system. We argue that they are both; more specifically, that the set of macrostates forms the unique maximal partition of phase space which 1) is consistent with our observations (a subjective fact about our ability to observe the system) and 2) obeys a Markov process (an objective fact about the system’s dynamics). We review the ideas of computational mechanics, an information-theoretic method for finding optimal causal models of stochastic processes, and argue that macrostates coincide with the “causal states” of computational mechanics. Defining a set of macrostates thus consists of an inductive process where we start with a given set of observables, and then refine our partition of phase space until we reach a set of states which predict their own future, i.e. which are Markovian. Macrostates arrived at in this way are provably optimal statistical predictors of the future values of our observables.
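To get a feel for what "a partition which obeys a Markov process" might mean, here is a toy sketch. It is not the causal-state construction from the paper. It swaps in the simpler classical notion of strong lumpability for a finite Markov chain: a partition is strongly lumpable if every microstate in a block has the same total probability of jumping into each block. The transition matrix `P` and the two candidate partitions are invented for illustration.

```python
def is_strongly_lumpable(P, partition, tol=1e-12):
    """Check whether coarse-graining the chain by `partition` is still Markov.

    P is a row-stochastic transition matrix (list of lists).
    partition is a list of blocks, each a list of microstate indices.
    """
    for block in partition:
        for target in partition:
            # Probability of jumping into `target` from each microstate in `block`.
            masses = [sum(P[i][j] for j in target) for i in block]
            # All microstates in a block must agree, else the macrostate
            # alone does not predict the next macrostate.
            if max(masses) - min(masses) > tol:
                return False
    return True


# A made-up 3-state chain.
P = [
    [0.5, 0.25, 0.25],
    [0.2, 0.4, 0.4],
    [0.2, 0.3, 0.5],
]

good = [[0], [1, 2]]  # states 1 and 2 look alike at the block level
bad = [[0, 1], [2]]   # states 0 and 1 jump to state 2 at different rates

good_ok = is_strongly_lumpable(P, good)
bad_ok = is_strongly_lumpable(P, bad)
print(good_ok, bad_ok)
```

In this toy, `good` passes and `bad` fails, so `bad` would need refining before its blocks could "predict their own future". The paper's inductive refinement is in that spirit, but it works with observables and predictive equivalence rather than a known transition matrix.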

1 MaxEnt

How about we throw out classical Bayes and do something different?

See MaxEnt.

2 Incoming

Gottwald and Braun (2020) seems like a good explication in the context of free energy:

The concept of free energy has its origins in 19th century thermodynamics, but has recently found its way into the behavioral and neural sciences, where it has been promoted for its wide applicability and has even been suggested as a fundamental principle of understanding intelligent behavior and brain function. We argue that there are essentially two different notions of free energy in current models of intelligent agency, that can both be considered as applications of Bayesian inference to the problem of action selection: one that appears when trading off accuracy and uncertainty based on a general maximum entropy principle, and one that formulates action selection in terms of minimizing an error measure that quantifies deviations of beliefs and policies from given reference models. The first approach provides a normative rule for action selection in the face of model uncertainty or when information processing capabilities are limited. The second approach directly aims to formulate the action selection problem as an inference problem in the context of Bayesian brain theories, also known as Active Inference in the literature. We elucidate the main ideas and discuss critical technical and conceptual issues revolving around these two notions of free energy that both claim to apply at all levels of decision-making, from the high-level deliberation of reasoning down to the low-level information processing of perception.

Statistical Physics of Inference and Bayesian Estimation Informational entropy versus thermodynamic entropy.

John Baez’s A Characterization of Entropy etc. See also his Information and Entropy. The post What is Entropy? introduces the topic with the pitch:

I have largely avoided the second law of thermodynamics, which says that entropy always increases. While fascinating, this is so problematic that a good explanation would require another book! I have also avoided the role of entropy in biology, black hole physics, etc. Thus, the aspects of entropy most beloved by physics popularisers will not be found here. I also never say that entropy is ‘disorder’.

It Took Me 10 Years to Understand Entropy — here’s what I learned | by Aurelien Pelissier

Wolpert (2006): I always love Wolpert’s work, but then I get baffled by the lack of estimation theory.

David Ellerman’s Logical Entropy stuff, which he has now written up as Ellerman (2017).

Feldman: A Brief Introduction to Information Theory, Excess Entropy and Computational Mechanics