Feedback system identification, linear

2016-07-27 — 2022-01-20

Wherein Feedback System Identification Is Addressed for Irregularly Sampled Data, and Continuous‑time Autoregressive Models Are Fitted via Kalman Recursion and Transformed‑coefficient Methods, While Likelihoods Are Observed to Be Multimodal.

In system identification, we infer the parameters of a stochastic dynamical system of some chosen type, usually one with feedback, so that we can e.g. simulate it, or deconvolve it to find the inputs and hidden state, perhaps using state filters. In statistical terms, this is the parameter inference problem for dynamical systems.

Moreover, it totally works without Gaussian noise; Gaussianity is just convenient in optimal linear filtering (Kalman filtering isn’t rocket science, after all). Also, mathematically, Gaussianity is a useful crutch if you decide to go to a continuous time index, cf. Gaussian processes.

This is the mostly offline version. There is a sub-notebook focusing on online recursive estimation.

1 Intros

Oppenheim and Verghese, Signals, Systems, and Inference is free online. ritvikmath explains partial autocorrelation as a graphical model, which is not complicated, but for some reason I never had it laid out this way in my own time series courses. See also Kenneth Tay, The relationship between MA(q)/AR(p) processes and ACF/PACF.

(Martin 1999):

Consider the basic autoregressive model,

\[ Y(k) + \sum_{j=1}^p a_j Y(k-j) = \epsilon(k). \]

Estimating AR(p) coefficients:

The [power] spectrum is easily obtained from [the above] as

\[ P(f) = \frac{\sigma^2}{\left|1+ \sum_{j=1}^p a_j z^{-j}\right|^2},\quad z=\exp(2\pi i f\,\delta t) \]

with \(\delta t\) the intersample spacing. […] for any given set of data, we need to be able to estimate the AR coefficients \(\{a_j\}_{j=1}^p\) conveniently. Three methods for achieving this are the Yule-Walker, Burg and Covariance methods. The Yule-Walker technique uses the sample autocovariance to obtain the coefficients; the Covariance method defines, for a set of numbers \(\mathbf{a}=\{a_j\}_{j=1}^p,\) a quantity known as the total forward and backward prediction error power:

\[ E(Y,\mathbf{a}) = \frac{1}{2(N-p)}\sum_{n=p+1}^N\left\{ \left|Y(n)+\sum_{j=1}^p a_jY(n-j)\right|^2 + \left|Y(n-p)+\sum_{j=1}^p a^*_jY(n-p+j)\right|^2 \right\} \]

and minimises this w.r.t. \(\mathbf{a}\). As \(E(Y, \mathbf{a})\) is a quadratic function of \(\mathbf{a}\), \(\partial E(Y, \mathbf{a})/\partial \mathbf{a}\) is linear in \(\mathbf{a}\) and so this is a linear optimisation problem. The Burg method is a constrained minimization of \(E(Y, \mathbf{a})\) using the Levinson recursion, a computational device derived from the Yule-Walker method.
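As a rough illustration of the Covariance method described in the quote, here is a minimal NumPy sketch for real-valued, evenly sampled data. It stacks the forward and backward prediction equations and solves them by ordinary least squares, then evaluates the AR spectrum. The sign convention \(Y(k) + \sum_j a_j Y(k-j) = \epsilon(k)\) is assumed, and the function names `fit_ar_forward_backward` and `ar_spectrum` are made up for this example; this is not Martin's code.

```python
import numpy as np

def fit_ar_forward_backward(y, p):
    """Least-squares AR(p) fit minimising forward plus backward prediction error.

    Assumed convention: y[n] + sum_j a[j-1] * y[n-j] = eps[n], real-valued y.
    """
    y = np.asarray(y, dtype=float)
    N = len(y)
    # Forward rows: predict y[n] from y[n-1], ..., y[n-p] for n = p, ..., N-1.
    fwd_X = np.column_stack([y[p - j : N - j] for j in range(1, p + 1)])
    fwd_t = y[p:]
    # Backward rows: predict y[n-p] from y[n-p+1], ..., y[n].
    bwd_X = np.column_stack([y[j : N - p + j] for j in range(1, p + 1)])
    bwd_t = y[: N - p]
    X = np.vstack([fwd_X, bwd_X])
    t = np.concatenate([fwd_t, bwd_t])
    # Minimise |t + X a|^2, i.e. solve X a = -t in the least-squares sense.
    a, *_ = np.linalg.lstsq(X, -t, rcond=None)
    sigma2 = np.mean((t + X @ a) ** 2)  # residual power estimates noise variance
    return a, sigma2

def ar_spectrum(a, sigma2, freqs, dt=1.0):
    """Evaluate sigma^2 / |1 + sum_j a_j z^{-j}|^2 with z = exp(2 pi i f dt)."""
    z = np.exp(2j * np.pi * np.asarray(freqs) * dt)
    j = np.arange(1, len(a) + 1)
    denom = 1.0 + (a[None, :] * z[:, None] ** (-j[None, :])).sum(axis=1)
    return sigma2 / np.abs(denom) ** 2

# Demo on a simulated AR(2) series with a = (-1.5, 0.75) and unit noise variance.
rng = np.random.default_rng(0)
a_true = np.array([-1.5, 0.75])
eps = rng.standard_normal(5000)
y = np.zeros(5000)
for n in range(2, 5000):
    y[n] = -a_true[0] * y[n - 1] - a_true[1] * y[n - 2] + eps[n]

a_hat, sigma2_hat = fit_ar_forward_backward(y, p=2)
freqs = np.linspace(0.0, 0.5, 501)
spectrum = ar_spectrum(a_hat, sigma2_hat, freqs)
```

On a long, evenly sampled series like this one, the estimate should land close to `a_true`. The irregularly sampled case discussed later needs different machinery.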

2 Instrumental variable regression

3 Unevenly sampled

4 Model estimation/system identification

You don’t know a parameterised model for the data (and hence a precise bandwidth) and you wish to estimate it.

This is a system identification problem, although the non-uniform sampling means that it has an unusual form.

(Martin 1999) summarises:

One could consider the general problem in an approximate way as the missing data problem with a very high proportion of missing data points, but (Jones 1981, 1984) this is not very realistic. This has led to the consideration of the continuous-time model […] . (Lii and Masry 1992) shows that the coefficients in that equation may be obtained from the [irregularly sampled autocorrelation moments, but], the estimation of these requires a large amount of data and the results are asymptotic in the limit of infinite data. The other continuous-time approach is that of Jones (Jones 1981, 1984) who has used Kalman recursive estimation […] to obtain a likelihood function \(\operatorname{lik}(x|b)\) which is then maximised w.r.t. b to obtain an estimate of the true parameters.

There is a partial review and comparison of methods in (P. M. Broersen 2006; Stoica and Moses 2005). From the latter:

(Martin 1999) applied autoregressive modeling to irregularly sampled data using a dedicated method. It was particularly good in extracting sinusoids from noise in short data sets. (Söderström and Mossberg 2000) evaluated the performance of methods for identifying continuous-time autoregressive processes, which replace the differentiation operator by different approximations. (Larsson and Söderström 2002) apply this idea to randomly sampled autoregressive data. They report promising results for low-order processes. (Lahalle, Fleury, and Rivoira 2004) estimate continuous-time ARMA models. Unfortunately, their method requires explicit use of a model for irregular sampling instants. The precise shape of that distribution is very important for the result, but it is almost impossible to establish it from practical data.

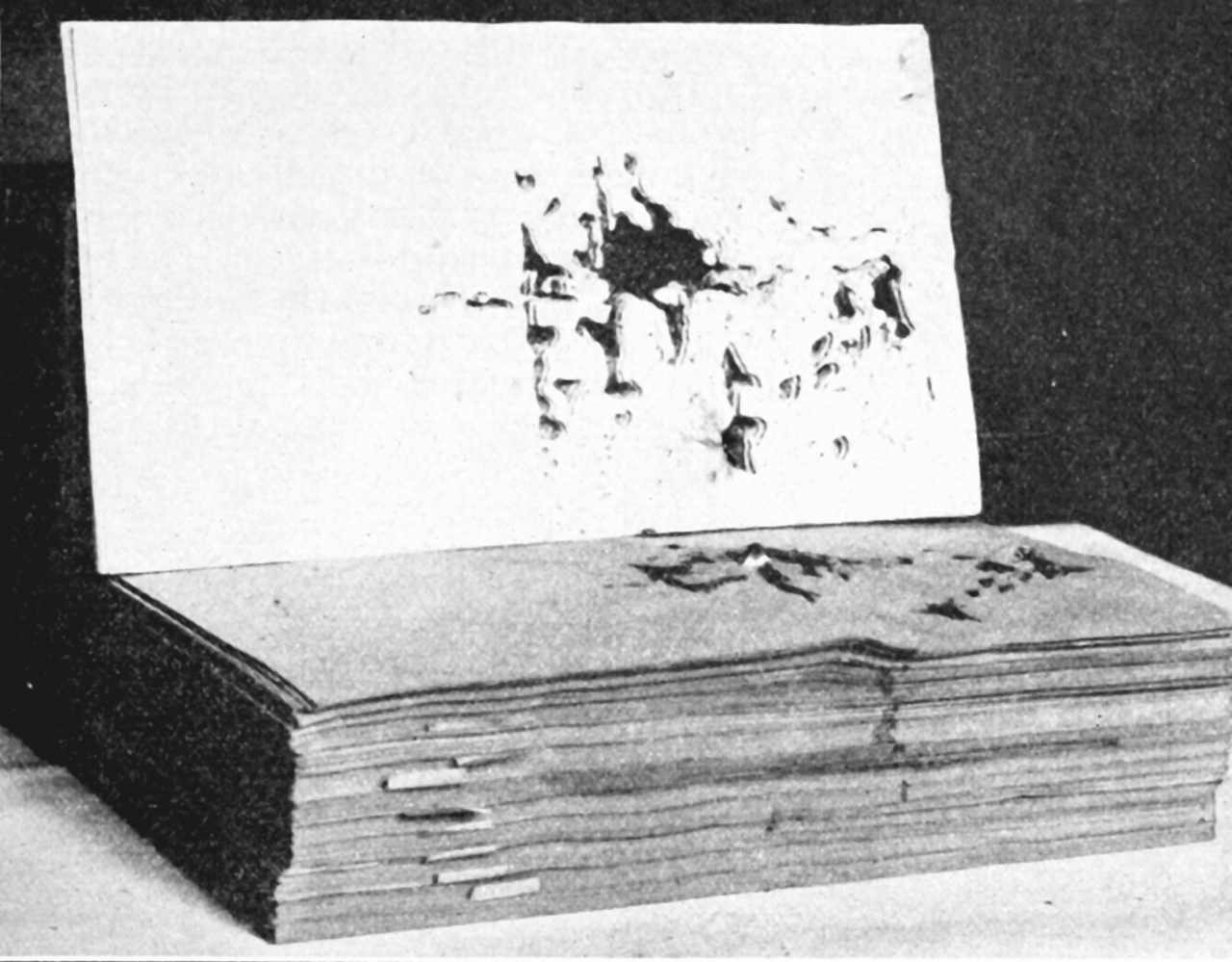

No generally satisfactory spectral estimator for irregular data has been defined yet. Continuous time series models can be estimated for irregular data, and they are the only possible candidates for obtaining the Cramér-Rao lower boundary, because the true process for irregular data is a continuous-time process. (Jones 1981) has formulated the maximum likelihood estimator for irregular observations. However, (Jones 1984) also found that the likelihood has several local maxima and the optimisation requires extremely good initial estimates. (P. M. T. Broersen and Bos 2006) used the method of Jones to obtain maximum likelihood estimates for irregular data. If simulations started with the true process parameters as initial conditions, that was sometimes, but not always, good enough to converge to the global maximum of the likelihood. However, sometimes even those perfect and nonrealisable starting values were not capable of letting the likelihood converge to an acceptable model. So far, no practical maximum likelihood method for irregular data has solved all numerical problems, and certainly no satisfactory realisable initial conditions can be given. As an example, it has been verified in simulations that taking the estimated AR(p-1) model together with an additional zero for order p as starting values for AR(p) estimation does not always converge to acceptable AR(p) models. The model with the maximum value of the likelihood might not in all cases be accurate and many good models have significantly lower numerical values of the likelihood. (Martin 1999) suggests that the exact likelihood is sensitive to round-off errors. (P. M. T. Broersen and Bos 2006) calculated the likelihood as a function of true model parameters, multiplied by a constant factor. Only the likelihood for a single pole was smooth. Two poles already gave a number of sharp peaks in the likelihood, and three or more poles gave a very rough surface of the likelihood. The scene is full of local minima, and the optimisation cannot find the global minimum, unless it starts very close to it.

4.1 Slotting

Asymptotic methods based on gridding observations.
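To make the idea concrete, here is a minimal sketch of one common form of slotting: every pair of observations is assigned to the lag bin ("slot") nearest to its time difference, and the products of the centred values are averaged within each slot. The function name `slotted_autocovariance` and the rectangular, nearest-slot binning rule are assumptions for illustration, not taken from any of the cited papers.

```python
import numpy as np

def slotted_autocovariance(t, y, slot_width, n_slots):
    """Estimate autocovariance at lags k * slot_width from irregular samples.

    Builds all pairwise products, so memory use is O(len(t)^2).
    """
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    y = y - y.mean()
    lags = np.abs(t[:, None] - t[None, :])          # all pairwise time gaps
    prods = y[:, None] * y[None, :]                 # matching products
    slots = np.rint(lags / slot_width).astype(int)  # index of the nearest slot
    acov = np.full(n_slots, np.nan)
    counts = np.zeros(n_slots, dtype=int)
    for k in range(n_slots):
        mask = slots == k
        counts[k] = mask.sum()
        if counts[k] > 0:
            acov[k] = prods[mask].mean()
    return acov, counts

# Demo: a noisy sinusoid of period 10 observed at 400 random times.
rng = np.random.default_rng(2)
t = np.sort(rng.uniform(0.0, 200.0, size=400))
y = np.sin(2 * np.pi * t / 10.0) + 0.1 * rng.standard_normal(400)
acov, counts = slotted_autocovariance(t, y, slot_width=0.5, n_slots=40)
```

Slots with few pairs give noisy estimates, which is one reason these methods are only justified asymptotically; `counts` is returned so that sparse slots can be spotted.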

4.2 Method of transformed coefficients

A useful tool: the equivalence of a continuous-time Itô integral and a discrete ARIMA process (attributed by Martin (1998) to Bartlett (1946)) implies that you can estimate the model without estimating missing data. That is satisfying, although the precise form this takes is less so.
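The simplest instance of this kind of equivalence is the first-order case, which I sketch here for orientation; it is the textbook exact discretisation of an Ornstein-Uhlenbeck process, not Martin's general construction. The symbols \(\alpha\), \(\sigma\) and \(\Delta_k\) are introduced for this example only.

\[ dX(t) = -\alpha X(t)\,dt + \sigma\,dW(t) \quad\Longrightarrow\quad X(t_{k+1}) = e^{-\alpha\Delta_k}X(t_k) + \eta_k,\qquad \eta_k \sim \mathcal{N}\!\left(0,\ \frac{\sigma^2}{2\alpha}\left(1-e^{-2\alpha\Delta_k}\right)\right),\qquad \Delta_k = t_{k+1}-t_k. \]

So the observations at arbitrary times \(t_k\) follow an AR(1) recursion whose coefficient \(e^{-\alpha\Delta_k}\) changes with each gap, but which depends only on \(\alpha\) and \(\sigma\). No values at unobserved grid points need to be imputed.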

A popular overview seems to be Martin (1999).

4.3 State filters

(Note that you can also do the signal reconstruction problem using state filters, but I’m interested in doing system identification using state filters.) Jones (1981) and Martin (1998) gave this a go; while Martin (1998) mentioned problems, I’m curious about when it does work, since this seems natural, simple, and easier to make robust against model violations than the other methods.

(Martin 1998):

It is well known that if a univariate continuous time autoregression is sampled at equally spaced time intervals, the resulting discrete time process is ARMA(p,p-1). If the sampling includes observational error, the resulting process is ARMA(p,p); however, these 2p parameters depend only on the p continuous time autoregression coefficients and the observational error variance. Modeling the process as a continuous time autoregression with observational error may be much more parsimonious than modeling the discrete time process, whether or not the data are equally spaced. The direct modeling of observational error has the effect of smoothing noisy data and may eliminate the need for moving average terms.
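To see what "a continuous time autoregression with observational error" looks like in a state filter, here is a minimal sketch for the first-order case only: a scalar Kalman filter whose transition depends on each sampling gap, accumulating the Gaussian log-likelihood from the innovations. This is in the spirit of the Kalman-recursion likelihood, but it is not Jones' general order-p algorithm; the log-parameterisation and the function name `car1_neg_log_lik` are assumptions for this example.

```python
import numpy as np

def car1_neg_log_lik(params, t, y):
    """Negative log-likelihood of an OU process observed with white noise.

    params = (log_alpha, log_sigma, log_tau): decay rate, driving-noise scale
    and observation-noise s.d., on the log scale to keep them positive.
    """
    alpha, sigma, tau = np.exp(params)
    stat_var = sigma**2 / (2 * alpha)  # stationary variance of the hidden state
    m, P = 0.0, stat_var               # stationary prior at the first sample
    nll = 0.0
    t_prev = t[0]
    for tk, yk in zip(t, y):
        # Predict across the (possibly irregular) gap since the last sample.
        phi = np.exp(-alpha * (tk - t_prev))
        m = phi * m
        P = phi**2 * P + stat_var * (1 - phi**2)
        # Innovation, its variance, and the likelihood contribution.
        S = P + tau**2
        r = yk - m
        nll += 0.5 * (np.log(2 * np.pi * S) + r**2 / S)
        # Measurement update.
        K = P / S
        m = m + K * r
        P = (1 - K) * P
        t_prev = tk
    return nll

# Demo: simulate at irregular times, then scan the decay rate on a coarse grid.
rng = np.random.default_rng(1)
alpha_true, sigma_true, tau_true = 1.0, 1.0, 0.1
t = np.cumsum(rng.exponential(0.3, size=500))
stat_var = sigma_true**2 / (2 * alpha_true)
x = np.empty(500)
x[0] = rng.normal(0.0, np.sqrt(stat_var))
for k in range(1, 500):
    phi = np.exp(-alpha_true * (t[k] - t[k - 1]))
    x[k] = phi * x[k - 1] + np.sqrt(stat_var * (1 - phi**2)) * rng.standard_normal()
y = x + tau_true * rng.standard_normal(500)

alpha_grid = np.array([0.1, 0.3, 1.0, 3.0, 10.0])
nll_grid = np.array([
    car1_neg_log_lik(np.log([a, sigma_true, tau_true]), t, y) for a in alpha_grid
])
best_alpha = alpha_grid[np.argmin(nll_grid)]
```

In practice one would hand `car1_neg_log_lik` to a numerical optimiser over all three parameters. The grid scan here only checks that the likelihood prefers the true decay rate in this easy single-pole case; the rough likelihood surfaces reported for higher orders are not reproduced by this sketch.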

5 Online

See recursive estimation.

6 Incoming

Gradient descent learns Linear Dynamical systems

6.1 Linear Predictive Coding

LPC introductions traditionally start with a physical model of the human vocal tract as a resonating pipe, then mumble away the details. This confused the hell out of me. AFAICT, an LPC model is just a list of AR regression coefficients and a driving noise source coefficient. This is “coding” because you can round the numbers, pack them down a smidgen and then use it to encode certain time series, such as the human voice, compactly. But it’s still a regression analysis, and can be treated as such.

The twists are that

- We usually think about it in a compression context

- Traditionally, one performs many regressions to get time-varying models

It’s commonly described as a physical model because we can imagine these regression coefficients corresponding to a simplified physical model of the human vocal tract. But we can think of the regression coefficients as corresponding to any all-pole linear system, so I don’t think that brings special insight, especially as a model of, say, a resonating pipe would intuitively be described by time delays corresponding to the length of the pipe, not by time lags chosen to match the sample spacing for computational convenience. Sure, we can get a similar spectral response from this model as from a pipe, according to linear systems theory, but if you are going to assume so much advanced linear systems theory anyway, and mix it with crappy physics, why not just start with the linear systems and ditch the physics?
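The "many regressions" view of LPC can be sketched as follows: slice the signal into overlapping frames, and for each frame solve the Yule-Walker equations to get AR coefficients plus a driving-noise power. The frame length, hop size, Hann window and the names `lpc_frame` and `lpc_encode` are illustrative choices of mine, not part of any particular codec.

```python
import numpy as np

def lpc_frame(frame, order):
    """AR coefficients and noise power for one frame (autocorrelation method).

    Assumed convention: x[n] + sum_j a[j-1] * x[n-j] = e[n].
    """
    x = frame * np.hanning(len(frame))
    # Biased sample autocorrelation at lags 0..order.
    r = np.array([x[: len(x) - k] @ x[k:] for k in range(order + 1)]) / len(x)
    # Toeplitz autocorrelation matrix with entries R[i, j] = r[|i - j|].
    idx = np.abs(np.subtract.outer(np.arange(order), np.arange(order)))
    R = r[idx]
    # Yule-Walker equations: R a = -r[1:].
    a = np.linalg.solve(R, -r[1:])
    noise_power = r[0] + a @ r[1:]  # residual (driving noise) power
    return a, noise_power

def lpc_encode(signal, order=8, frame_len=256, hop=128):
    """Fit one AR model per overlapping frame; returns a list of (a, power)."""
    starts = range(0, len(signal) - frame_len + 1, hop)
    return [lpc_frame(signal[s : s + frame_len], order) for s in starts]

# Demo: a noisy sinusoid stands in for a voiced sound.
rng = np.random.default_rng(3)
n = np.arange(1024)
signal = np.sin(2 * np.pi * 0.05 * n) + 0.1 * rng.standard_normal(1024)
codes = lpc_encode(signal, order=8)
```

Each entry of `codes` is just a small regression result; the "coding" step would quantise and pack these numbers, which is not shown here.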

To discuss: these coefficients as spectrogram smoothing.