Ablation studies, knockout studies, lesion studies

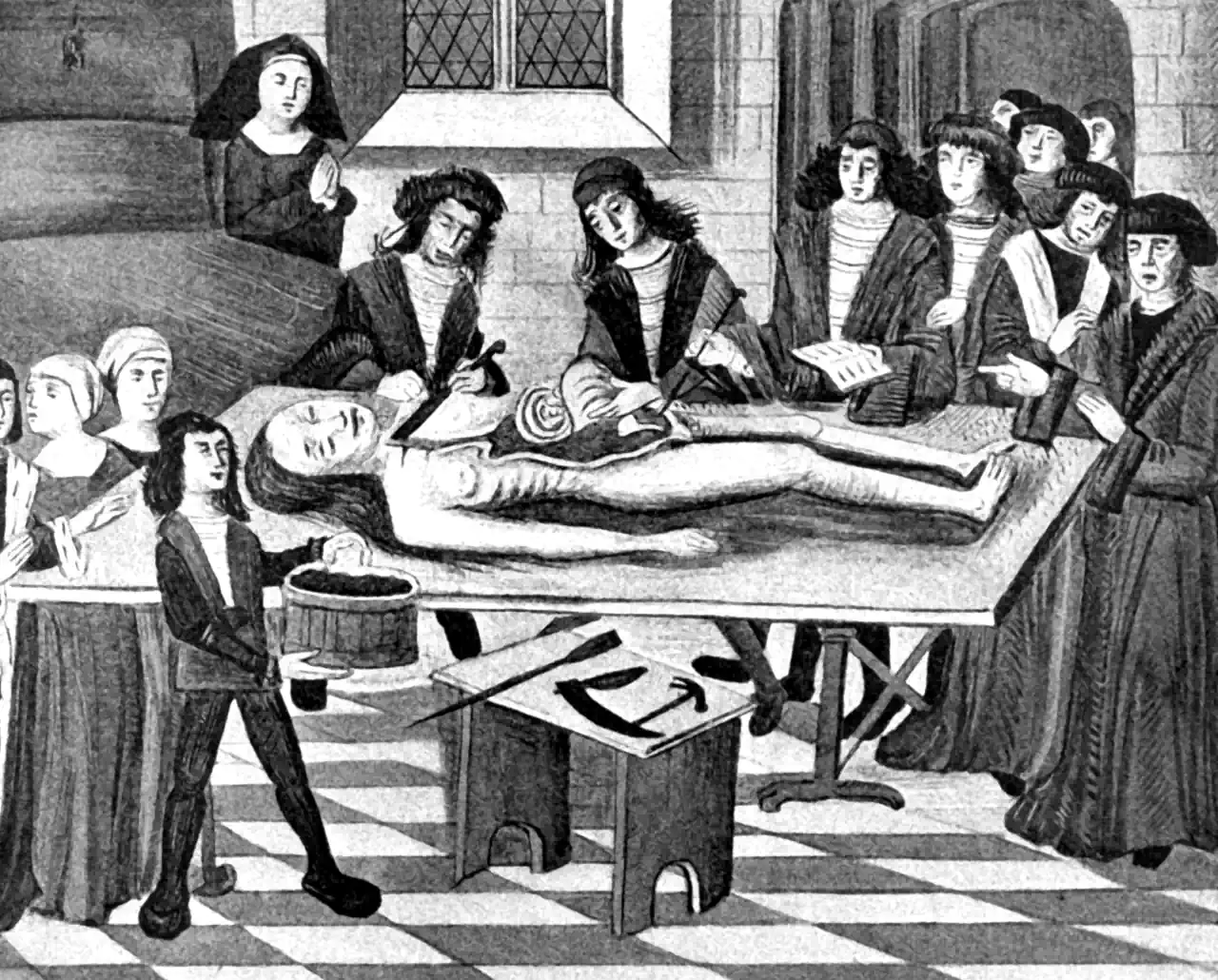

In order to understand it we must be able to break it

2016-10-26 — 2024-10-28

Wherein the Limits of Inference by Destruction Are Examined and It Is Noted That Causal Structure Is Not Uniquely Recoverable From Final States, So Training History Is Required for Identification.

Can we work out how a complex or adaptive system works by destroying bits of it?

This comes up often when studying complicated systems, because there are too many variables and too many ethical constraints to run all the experiments we’d like.

In various fields, people ask how far we can push the simple experiment of working out what breaks. It’s called an ablation study in neural nets, a lesion study in neuroscience, and a knockout study in genetics.
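To make the basic experiment concrete, here is a minimal sketch of an ablation study on a toy system. It assumes the system is a sum of named components and that "ablating" a component means zeroing its output. The names `make_system`, `ablation_study`, `linear`, `offset` and `idle` are hypothetical and exist only for this illustration. Ablations in neural nets commonly zero or mean-replace activations instead, but the bookkeeping is the same: knock one part out, re-measure, and report the change.

```python
from typing import Callable, Dict, FrozenSet, List, Tuple

# Hypothetical toy system: each named component maps an input to a number,
# and the system's output is the sum of the non-ablated components.
Component = Callable[[float], float]
System = Callable[[float], float]

def make_system(components: Dict[str, Component],
                ablated: FrozenSet[str] = frozenset()) -> System:
    """Build the system with every component in `ablated` knocked out (zeroed)."""
    def system(x: float) -> float:
        return sum(f(x) for name, f in components.items() if name not in ablated)
    return system

def loss(system: System, data: List[Tuple[float, float]]) -> float:
    """Mean squared error of the system on (input, target) pairs."""
    return sum((system(x) - y) ** 2 for x, y in data) / len(data)

def ablation_study(components: Dict[str, Component],
                   data: List[Tuple[float, float]]) -> Dict[str, float]:
    """Increase in loss when each component is knocked out on its own."""
    baseline = loss(make_system(components), data)
    return {
        name: loss(make_system(components, frozenset({name})), data) - baseline
        for name in components
    }

if __name__ == "__main__":
    components = {"linear": lambda x: 2 * x, "offset": lambda x: 1.0, "idle": lambda x: 0.0}
    data = [(x, 2 * x + 1) for x in range(5)]  # targets follow y = 2x + 1
    print(ablation_study(components, data))  # {'linear': 24.0, 'offset': 1.0, 'idle': 0.0}
```

Here the zero score for `idle` is accurate because `idle` really does nothing. In general, though, a zero ablation effect does not prove a component is unused, since another component may compensate for its loss.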

Questions about the limits of this methodology arise in examples such as “Can a biologist fix a radio?” (Lazebnik 2002), “Could a neuroscientist understand a microprocessor?” (Jonas and Kording 2017), and “Should an alien doctor stop human bleeding by removing blood?”

The answer, I claim, is “it depends.” These are classic problems of identifiability from observational data. For a methodology that attempts to identify causal structures in a principled way, see causal abstraction.
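The identifiability problem already shows up in a two-unit toy. The sketch below compares two hypothetical systems: in `redundant`, units `a` and `b` are backups for each other; in `vestigial`, `a` and `b` have no effect on the output at all. Every single knockout leaves both systems working, so single-knockout data cannot tell the two wirings apart. Only the double knockout separates them. The unit names and both systems are invented for illustration. With n units there are 2^n possible knockout sets, which is one reason exhaustive lesioning is rarely feasible.

```python
from itertools import combinations
from typing import Callable, Dict, FrozenSet

# Hypothetical toy systems over two boolean units; True means "unit intact".
UNITS = ("a", "b")
System = Callable[[Dict[str, bool]], bool]

def redundant(state: Dict[str, bool]) -> bool:
    """System A: either unit alone is enough to produce the output."""
    return state["a"] or state["b"]

def vestigial(state: Dict[str, bool]) -> bool:
    """System B: the output never depends on a or b."""
    return True

def knockout_profile(system: System, max_order: int) -> Dict[FrozenSet[str], bool]:
    """Map every knockout set of size <= max_order to the system's output."""
    profile: Dict[FrozenSet[str], bool] = {}
    for k in range(max_order + 1):
        for knocked_out in combinations(UNITS, k):
            state = {u: u not in knocked_out for u in UNITS}
            profile[frozenset(knocked_out)] = system(state)
    return profile

if __name__ == "__main__":
    # Single knockouts: identical profiles, so the wiring is not identified.
    print(knockout_profile(redundant, 1) == knockout_profile(vestigial, 1))  # True
    # Double knockout: system A fails while system B keeps working.
    print(knockout_profile(redundant, 2) == knockout_profile(vestigial, 2))  # False
```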

Here’s a spicy take, Interpretability Creationism, which argues that the current state of a model is only understandable in the context of its training history:

[…] Stochastic Gradient Descent is not literally biological evolution, but post-hoc analysis in machine learning has a lot in common with scientific approaches in biology, and likewise often requires an understanding of the origin of model behaviour. Therefore, the following holds whether looking at parasitic brooding behaviour or at the inner representations of a neural network: if we do not consider how a system develops, it is difficult to distinguish a pleasing story from a useful analysis. In this piece, I will discuss the tendency towards “interpretability creationism” — interpretability methods that only look at the final state of the model and ignore its evolution over the course of training — and propose a focus on the training process to supplement interpretability research.

That essay clearly connects to model explanation, and I think it also connects to observational studies more generally. If we take that seriously, we might start thinking about developmental interpretability.