Causal inference in highly parameterized ML

2020-09-17 — 2026-01-13

Wherein Causal Graphs Are Applied to Nonparametric Neural Nets, Practical Tooling Such as DoWhy and TETRAD Is Noted for Supporting Explicit Causal Modelling, and Benchmarks Such as CauseMe Are Cited for Dataset-Shift Challenges.

I’m very interested in applying causal graph structure in the challenging setting of a nonparametric machine-learning model, such as a neural net. It seems necessary, even obvious, for handling things like dataset shift, yet causal structure is often ignored. What’s that about?

For a good example, see Bernhard Schölkopf: Causality and Exoplanets.

Maybe I should start with the book (Peters, Janzing, and Schölkopf 2017), or with the chatty introduction (Schölkopf 2022), or with the bombastically titled Halpern (2016).

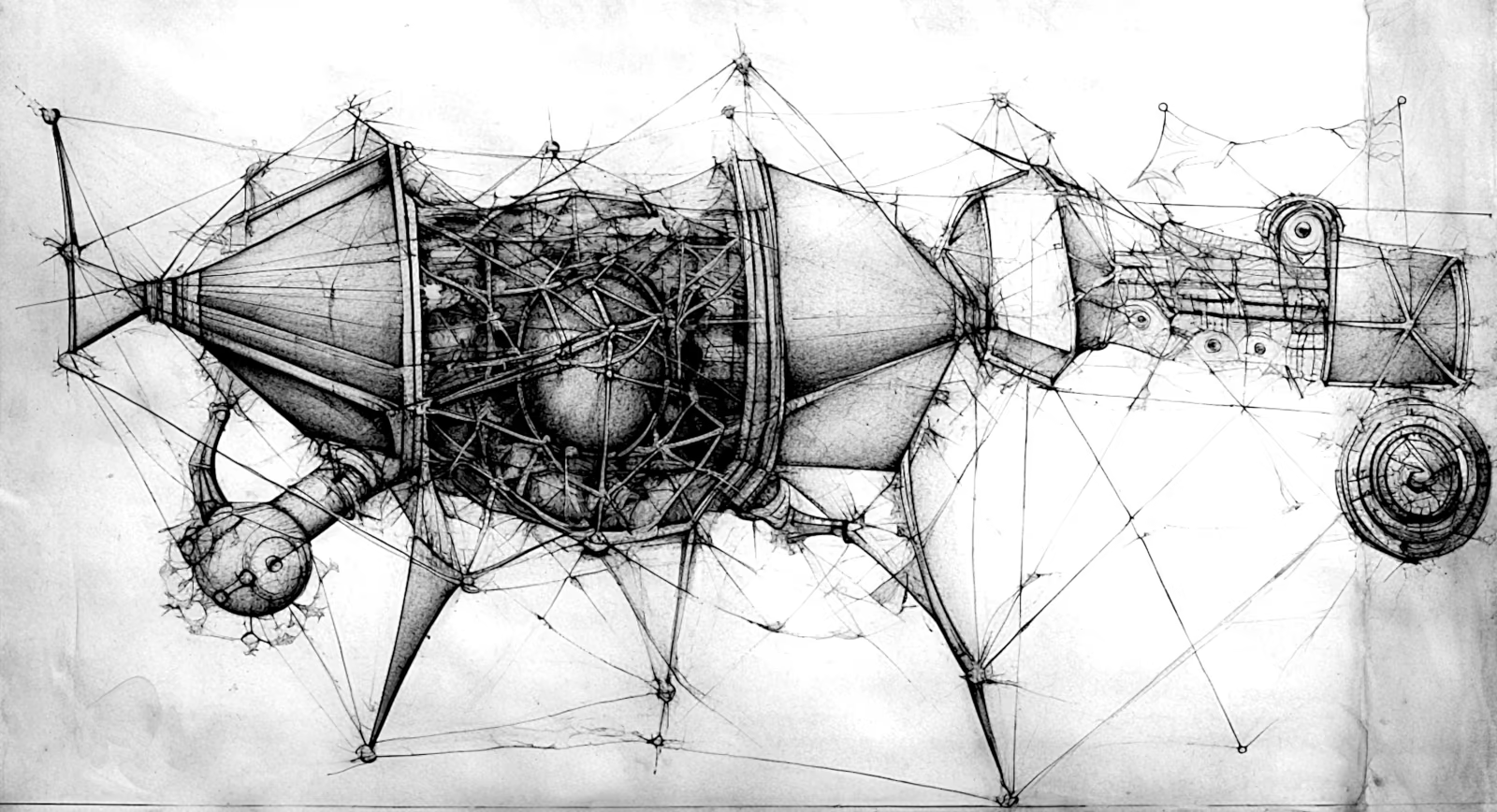

Check out the brain salad: graphical models and supervised models.

1 Causality with agency

2 Implicit causality in foundation models

3 Invariances implied by causality

Léon Bottou, From Causal Graphs to Causal Invariance:

For many problems, it’s difficult to even attempt drawing a causal graph. While structural causal models provide a complete framework for causal inference, it is often hard to encode known physical laws (such as Newton’s gravitation, or the ideal gas law) as causal graphs. In familiar machine learning territory, how does one model the causal relationships between individual pixels and a target prediction? This is one of the motivating questions behind the paper Invariant Risk Minimization (IRM). In place of structured graphs, the authors elevate invariance to the defining feature of causality.
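To make the invariance idea concrete, here is a minimal NumPy sketch of the gradient penalty used in the IRMv1 objective of the Invariant Risk Minimization paper, applied to a toy two-environment linear problem. The data-generating process, the environment noise scales, the two candidate coefficient vectors and the helper names `make_env`, `irm_penalty` and `total_penalty` are all assumptions made up for illustration. Nothing is trained here; the sketch only scores two fixed predictors.

```python
import numpy as np


def make_env(sigma, n, rng):
    """Toy environment (an assumption): x1 causes y, and y causes x2."""
    x1 = rng.normal(0.0, sigma, n)
    y = x1 + rng.normal(0.0, sigma, n)
    x2 = y + rng.normal(0.0, 1.0, n)  # anti-causal feature with a fixed noise scale
    return np.column_stack([x1, x2]), y


def irm_penalty(pred, y):
    """Squared gradient of the risk mean((w * pred - y)**2) w.r.t. w, at w = 1."""
    grad = np.mean(2.0 * (pred - y) * pred)
    return grad ** 2


def total_penalty(beta, envs):
    """Sum the per-environment penalties for the linear predictor X @ beta."""
    return sum(irm_penalty(X @ beta, y) for X, y in envs)


rng = np.random.default_rng(0)
envs = [make_env(sigma, 10_000, rng) for sigma in (0.5, 2.0)]

causal = np.array([1.0, 0.0])    # uses only the cause x1
spurious = np.array([0.0, 0.5])  # uses only the effect x2

penalty_causal = total_penalty(causal, envs)
penalty_spurious = total_penalty(spurious, envs)
print(f"causal predictor penalty:   {penalty_causal:.4f}")
print(f"spurious predictor penalty: {penalty_spurious:.4f}")
```

The predictor built on the cause should score a penalty near zero in every environment, because its optimal scaling does not change with the noise level. The best scaling of the x2 predictor does depend on the environment, so no single scaling is simultaneously optimal and its penalty stays large.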

I commend the Cloudera Fast Forward tutorial Causality for Machine Learning; it’s a nice bit of applied work.

4 Causality for feedback and continuous fields

5 Double learning

See Double learning.

6 As “Deep Causality”

I’m not sure what this is yet (Berrevoets et al. 2024; Deng et al. 2022; Lagemann et al. 2023). End-to-end deep learning of graphical structures?

7 Benchmarking

Detecting causal associations in time series datasets is a key challenge for novel insights into complex dynamical systems such as the Earth system or the human brain. Interactions in such systems present a number of major challenges for causal discovery techniques and it is largely unknown which methods perform best for which challenge.

The CauseMe platform provides ground truth benchmark datasets featuring different real data challenges to assess and compare the performance of causal discovery methods. The available benchmark datasets are either generated from synthetic models mimicking real challenges or are real-world data sets where the causal structure is known with high confidence. The datasets vary in dimensionality, complexity and sophistication.
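The logic of a ground-truth benchmark can be sketched in a few lines: simulate a time series whose causal links are known, run a discovery method, and score the recovered links against the truth. The example below is a toy under loud assumptions: a hypothetical 4-variable VAR(1) process, a deliberately naive discovery method (thresholded least-squares lag coefficients), and a made-up threshold of 0.2. It does not use CauseMe data or CauseMe scoring code.

```python
import numpy as np

# Ground truth (an assumption): A[i, j] != 0 means x_j at time t-1 drives x_i at time t.
A_true = np.array([
    [0.5, 0.0, 0.0, 0.0],
    [0.4, 0.5, 0.0, 0.0],
    [0.0, 0.4, 0.5, 0.0],
    [0.0, 0.0, 0.0, 0.5],
])


def simulate_var1(A, n_steps, rng):
    """Simulate x_t = A @ x_{t-1} + standard normal noise."""
    d = A.shape[0]
    x = np.zeros((n_steps, d))
    for t in range(1, n_steps):
        x[t] = A @ x[t - 1] + rng.normal(size=d)
    return x


def discover_links(x, threshold=0.2):
    """Naive discovery: regress x_t on x_{t-1} and keep the large coefficients."""
    past, present = x[:-1], x[1:]
    # Least squares solves present ~= past @ coef, so coef estimates A transposed.
    coef, *_ = np.linalg.lstsq(past, present, rcond=None)
    return np.abs(coef.T) > threshold  # transpose so that rows index the effect


def f1_score(pred, truth):
    """F1 score over two boolean link matrices."""
    tp = np.sum(pred & truth)
    if tp == 0:
        return 0.0
    precision = tp / np.sum(pred)
    recall = tp / np.sum(truth)
    return 2 * precision * recall / (precision + recall)


rng = np.random.default_rng(1)
x = simulate_var1(A_true, 5_000, rng)
links_true = A_true != 0
links_found = discover_links(x)
score = f1_score(links_found, links_true)
print(f"F1 against ground truth: {score:.2f}")
```

This toy is deliberately easy: linear, fully observed, and with a discovery method matched to the generating model. The quoted description stresses that real systems pose challenges on which methods differ, which is what a shared benchmark is meant to expose.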

8 Tooling

8.1 DoWhy

DoWhy is a Python library for causal inference that supports explicit modelling and testing of causal assumptions. DoWhy is based on a unified language for causal inference, combining causal graphical models and potential outcomes frameworks.
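As a rough illustration of what "explicit modelling and testing of causal assumptions" involves, here is a hand-rolled NumPy sketch: state the assumed graph, estimate the effect by adjusting for the assumed confounder, then run a placebo check. It deliberately does not call DoWhy. The synthetic data, the true effect of 2.0 and the helper name `ols_coefs` are assumptions for illustration, and DoWhy's actual API should be taken from its documentation.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 20_000

# Assumed causal graph (synthetic): W -> T, W -> Y, T -> Y.
w = rng.normal(size=n)                      # confounder
t = w + rng.normal(size=n)                  # treatment depends on W
y = 2.0 * t + 3.0 * w + rng.normal(size=n)  # true effect of T on Y is 2.0


def ols_coefs(columns, target):
    """Least-squares coefficients of target on the given columns plus an intercept."""
    X = np.column_stack(list(columns) + [np.ones_like(target)])
    coefs, *_ = np.linalg.lstsq(X, target, rcond=None)
    return coefs


naive = ols_coefs([t], y)[0]        # ignores the graph, so it is confounded by W
adjusted = ols_coefs([t, w], y)[0]  # backdoor adjustment for W

# Placebo check: a shuffled treatment should show no effect once W is adjusted for.
placebo = ols_coefs([rng.permutation(t), w], y)[0]

print(f"naive:    {naive:.2f}")
print(f"adjusted: {adjusted:.2f}")
print(f"placebo:  {placebo:.2f}")
```

The naive slope absorbs the confounding path through W, while the adjusted estimate should land near the true value and the placebo estimate near zero. If the assumed graph were wrong, for example with an unmeasured confounder, the adjustment would not rescue the estimate, which is why making the assumptions explicit and testable matters.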

9 pgmpy

Supported data types (pgmpy 1.0.0 docs)

9.1 CausalNex

CausalNex is a Python library that uses Bayesian Networks to combine machine learning and domain expertise for causal reasoning. You can use CausalNex to uncover structural relationships in your data, learn complex distributions, and observe the effect of potential interventions.

9.2 cause2e

The main contribution of cause2e is the integration of two established causal packages that have currently been separated and cumbersome to combine:

- Causal discovery methods from the py-causal package, which is a Python wrapper around parts of the Java TETRAD software. It provides many algorithms for learning the causal graph from data and domain knowledge.

- Causal reasoning methods from the DoWhy package, which is the current standard for the steps of a causal analysis starting from a known causal graph and data.

9.3 TETRAD

TETRAD (source, tutorial) is a tool for discovering, visualizing and computing with large empirical DAGs, covering general graphical inference as well as causality. It was written by leading researchers in causal inference.

Tetrad is a program which creates, simulates data from, estimates, tests, predicts with, and searches for causal and statistical models. The aim of the program is to provide sophisticated methods in a friendly interface requiring very little statistical sophistication of the user and no programming knowledge. It is not intended to replace flexible statistical programming systems such as Matlab, Splus or R. Tetrad is freeware that performs many of the functions in commercial programs such as Netica, Hugin, LISREL, EQS and other programs, and many discovery functions these commercial programs do not perform. …

The Tetrad programs describe causal models in three distinct parts or stages: a picture, representing a directed graph specifying hypothetical causal relations among the variables; a specification of the family of probability distributions and kinds of parameters associated with the graphical model; and a specification of the numerical values of those parameters.
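The three-part description in that quote maps naturally onto code. The sketch below is plain Python and NumPy, not Tetrad or py-causal: the variable names, the linear-Gaussian family and every numerical value are assumptions chosen for illustration.

```python
import numpy as np

# Part 1: the picture, a directed graph stored as {child: [parents]}.
graph = {"X": [], "Z": ["X"], "Y": ["X", "Z"]}

# Part 2: the family, here linear equations with independent Gaussian noise.
family = "linear-gaussian"

# Part 3: numerical values for the parameters of that family.
edge_coef = {("X", "Z"): 0.8, ("X", "Y"): 0.5, ("Z", "Y"): -1.2}
noise_sd = {"X": 1.0, "Z": 0.5, "Y": 0.3}


def topological_order(graph):
    """Order the nodes so that every parent comes before its children."""
    order = []
    while len(order) < len(graph):
        ready = [v for v, parents in graph.items()
                 if v not in order and all(p in order for p in parents)]
        if not ready:
            raise ValueError("graph contains a cycle")
        order.extend(ready)
    return order


def simulate(graph, family, edge_coef, noise_sd, n, rng):
    """Draw n samples per variable from the model defined by the three parts."""
    if family != "linear-gaussian":
        raise NotImplementedError(f"unsupported family: {family}")
    data = {}
    for node in topological_order(graph):
        value = rng.normal(0.0, noise_sd[node], n)  # exogenous noise term
        for parent in graph[node]:
            value = value + edge_coef[(parent, node)] * data[parent]
        data[node] = value
    return data


rng = np.random.default_rng(0)
samples = simulate(graph, family, edge_coef, noise_sd, 5_000, rng)
print(topological_order(graph))
print({name: round(float(np.std(col)), 2) for name, col in samples.items()})
```

Keeping the three parts separate means the same graph can be paired with a different family or different parameter values without touching the picture itself.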

py-causal is a Python wrapper around TETRAD, and R-causal is the equivalent for R.

10 Incoming

- Nisha Muktewar and Chris Wallace’s Causality for Machine Learning is the book Bottou recommends on this theme.

- For coders, Ben Dickson writes about Why machine learning struggles with causality.

- Cheng Soon Ong recommended Finn Lattimore to me as an important perspective.

- biomedia-mira/deepscm: Repository for Deep Structural Causal Models for Tractable Counterfactual Inference (Pawlowski, Coelho de Castro, and Glocker 2020).

- ICML 2022 Tutorial on causality and deep learning

- Causality and Deep Learning: Synergies, Challenges and the Future Tutorial