Operationalising the bitter lessons in compute and cleverness

Amortizing the cost of being smart

2022-01-14 — 2026-04-29

Wherein the Bitter Lesson Is Recast as a Substitution Curve Whose Slope Is Observed to Vary by Regime, Connections Being Drawn to Wang’s Resource-Scarcity Account of Intelligence and to Russell’s Metareasoning.

One of several notebooks I started on the same underlying problem. The others are Agency under bounded compute (the foundational why-must-the-agent-compress angle) and Homunculi (compute split across self, other, and reflective sub-models). That I keep failing to merge them is, I think, telling me something about the shape of the question.

What to compute, and when, to make inferences about the world most efficiently.

A lot of ML problems, in hindsight, are implicitly about when to spend our compute budget. Scaling curves tell us we can spend a predictably large amount of compute at training time and amortise it over cheap inference. Sutton’s folk-wisdom bitter lesson says that investing in compute tends to outperform investing in clever algorithms. The economics of AI/labour substitution ask in what sense compute is a kind of labour. I think it’s useful to group the normative question of doing this well under the heading of when to compute.

There are, it turns out, several existing research programmes that formalise this intuition — more than I initially realised, AI-research-assistants-be-praised. I do not think any of them yet composes into the theory I want, but they get further than “some unvarnished thoughts,” so I should acknowledge them before going further.

1 Intelligence as resource scarcity

Pei Wang’s definition of intelligence in his NARS programme (Wang 2022, 1999) proposes that intelligence is the ability of an information-processing system to adapt to its environment while working with insufficient knowledge and resources. The “Assumption of Insufficient Knowledge and Resources” (AIKR) is foundational to his notion of intelligence. If we had sufficient knowledge and compute, we wouldn’t need intelligence — we’d just look up the answer. Intelligence is the phenomenon that arises from doing inference under resource scarcity.

Compare and contrast with, e.g., AIXI. Hutter’s AIXI defines an ideal agent: one that considers every computable hypothesis, weighting each by its simplicity (shorter programs get more prior weight, in the Solomonoff style), and picks the action maximising expected future reward. It’s a beautiful formalization of what intelligence would be if resources were unlimited, and it’s incomputable by construction. The practical response has been to approximate: AIXItl bounds the computation time per step (t) and the length of the programs considered (l), trading optimality for computability. But the approximation is bolted on after the fact; the theory itself evolved in a world without scarcity.

Wang starts from the other end. AIKR says: we never have enough knowledge, we never have enough time, and the problems arrive without warning. Intelligence, in his view, isn’t what remains after we bound an ideal agent; it’s what emerges because the agent was always bounded. Where an AIXI-derived agent degrades from perfection, an AIKR agent is designed from the ground up to satisfice. It succeeds when it is good at distributing its time–space budget across competing tasks according to their relative priority, where no solution is ever final. Wang explicitly acknowledges the lineage to Simon’s bounded rationality but argues AIKR is more concrete and more restrictive.

It still doesn’t look that concrete to me. He seems to build many different variants of the AIKR model, each concretising it in a different way. What AIKR does tell us is that intelligence is the resource-allocation problem. What it doesn’t give us, insofar as this trade-off is an economic one, is the production functions or substitution curves between, say, training compute and inference compute.

2 Rational metareasoning and resource rationality

Here are some things I hadn’t heard about until 2 hours ago!

Stuart Russell and Eric Wefald’s rational metareasoning (Russell and Wefald 1991) treats each computation step as a decision in its own right, valued by the expected improvement it brings to the eventual action, minus the cost of computing it. Intelligence then becomes the skill of choosing which inferences to run: literally “when to compute” as a decision theory. Hay, Russell, Tolpin & Shimony (Hay et al. 2012) later operationalised this as a meta-level MDP, making the framework tractable enough to implement, apparently. I would want to get my hands dirty with this, or at least read past the paper abstract, before I felt comfortable claiming to understand it.
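To make “each computation is a decision” concrete for myself, here is a minimal sketch of a myopic value-of-computation calculation. It is my simplification, not the Russell and Wefald or Hay et al. formulation: I assume a “computation” perfectly resolves the value of exactly one action, beliefs are small discrete distributions, and the names `beliefs`, `target` and `cost` are hypothetical.

```python
def expected_value(dist):
    """Mean of a discrete belief given as [(value, probability), ...]."""
    return sum(p * v for v, p in dist)


def net_value_of_computation(beliefs, target, cost):
    """Myopic net value of resolving the uncertainty about one action.

    beliefs: {action: [(value, probability), ...]}, at least two actions.
    target:  the action whose true value the computation would reveal
             (assumption: the computation is perfectly informative).
    cost:    price of the computation, in the same units as the values.
    """
    means = {a: expected_value(d) for a, d in beliefs.items()}
    # Without thinking further, we act on the best current estimate.
    best_now = max(means.values())
    # After thinking, we pick whichever is better: the revealed value
    # of the target, or the best of the untouched alternatives.
    best_other = max(m for a, m in means.items() if a != target)
    best_after = sum(p * max(v, best_other) for v, p in beliefs[target])
    return best_after - best_now - cost


beliefs = {
    "safe": [(1.0, 1.0)],                 # known payoff
    "risky": [(0.0, 0.5), (3.0, 0.5)],    # uncertain payoff
}
for action in beliefs:
    net = net_value_of_computation(beliefs, action, cost=0.1)
    print(action, "worth computing" if net > 0 else "skip", round(net, 3))
```

Thinking about the risky action can change what we do, so it has positive value; thinking about the safe one cannot, so it only costs us. The rule “compute only when the net value is positive” is the whole decision theory in miniature.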

Griffiths, Lieder & Icard’s resource-rational analysis (Lieder and Griffiths 2020) takes a different angle from cognitive science: many apparent human cognitive biases aren’t irrational; they are what the optimal use of finite compute looks like. The brain is ‘doing the best it can with the budget it has’. This turns the bitter lesson into a normative theory: we should expect any intelligent system to use heuristics, caching, and shortcuts, because those are the resource-rational thing to do. Zilberstein’s anytime algorithms (Zilberstein 1996) look a bit like an operationalization of this: algorithms that return progressively better answers as we give them more time, with the agent deciding when to stop.
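A toy version of the anytime idea, as I understand it: an algorithm that can be interrupted at any step with a usable answer, plus a monitor that stops it once another step is not worth the price. This is a sketch under my own assumptions, not Zilberstein’s formalism; bisection stands in for the anytime algorithm, and `value_per_unit_error` and `cost_per_step` are hypothetical knobs for how much we value accuracy and time.

```python
def anytime_bisect(f, lo, hi):
    """Anytime root finder: yields (estimate, error_bound) forever.

    Assumes f(lo) and f(hi) have opposite signs. Each step halves the
    error bound, so the answer improves the longer we let it run.
    """
    while True:
        mid = 0.5 * (lo + hi)
        yield mid, 0.5 * (hi - lo)
        if f(lo) * f(mid) <= 0:
            hi = mid
        else:
            lo = mid


def run_with_monitor(anytime, value_per_unit_error, cost_per_step,
                     max_steps=1000):
    """Keep refining while the predicted gain of one more step beats its cost."""
    estimate, bound = next(anytime)
    steps = 0
    while steps < max_steps:
        # Bisection halves the bound, so one more step removes bound / 2.
        predicted_gain = value_per_unit_error * bound / 2
        if predicted_gain < cost_per_step:
            break
        estimate, bound = next(anytime)
        steps += 1
    return estimate, bound, steps


def f(x):
    return x * x - 2.0  # root at sqrt(2)


for cost in (0.01, 0.0001):
    est, bound, steps = run_with_monitor(anytime_bisect(f, 1.0, 2.0), 1.0, cost)
    print(f"cost/step={cost}: estimate={est:.5f} +/- {bound:.5f} after {steps} steps")
```

The stopping point moves with the price of time: cheaper steps buy a tighter answer. Here the improvement profile is known in advance, which is the easy case; the interesting cases are the ones where the monitor has to learn or guess it.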

3 At scale

The above ideas don’t directly address the economic motivation at the start — the substitution between training and inference, the amortization of a foundation model across millions of users, the trade-off between data acquisition cost and model complexity. That seems to require something genuinely economic, not just decision-theoretic.

How much can one kind of computation substitute for another? Answering that might take something “deeper”, like a fundamental theory of intelligence.

On the other hand, it feels like just treating the problem as an economic one might have some mileage. It looks like some factors are fungible (accuracy, storage, compute, generalization…) but also we don’t have anything like the “utility” of the decision maker; in fact we just spent a lot of effort arguing our way out of having a conventional utility. Would we be making progress by keeping utility and augmenting it with a compute-regularised meta utility? (TODO) Do we need to resign ourselves to intrinsic motivations and/or utilities as local fitness approximations and it will still all work out?

ANYWAY, had we the right framing we might be able to express foundation models as an ingenious amortization strategy — maybe an interesting financial instrument denominated in the currency of “cognition,” or maybe just a classic production cost curve with unusual returns to scale. I mean, most of these things still cash out in the margins in dollars or joules, so economics is not necessarily futile.

Literature gap: a theory that connects something like Wang’s AIKR (intelligence is resource scarcity), Russell’s metareasoning (each computation is a decision), and the actual microeconomics of ML systems (training vs. inference, data vs. compute, memorization vs. extrapolation). I would like it even to include the computation that happens within the human skull. For now, some more specific notes on useful concepts in this domain.

4 Amortization

Amortization is what Bayesians call the trade-off involved in learning inferential shortcuts; there is a whole parallel literature on this in probabilistic NNs and variational inference.

It doesn’t presume Bayes though. Case in point: SGD is a kind of amortised optimization. We could notionally solve the optimization problem at inference time by doing a full-batch gradient descent, but that would be very slow. Instead, we amortise the cost of optimization into a training phase, where we do many cheap updates to learn a good set of parameters that can be evaluated cheaply at inference time.

RL, if you squint at it just so, can be seen as a kind of amortised dynamic programming. We could spend a lot of compute at training time to learn a policy that is very cheap to execute at inference time. In many RL algorithms, notably ones trained on pure physical models, this is a naked attempt to speed up a thing that we could in principle already calculate. We could notionally calculate the optimal action at each time step by solving a dynamic programming problem via some expensive simulator, but that would be very slow. Instead, we learn a policy that approximates the optimal action — amortising the cost of dynamic programming, and maybe even physical simulation, into a single forward pass.
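The pattern shared by these examples can be shown with something much dumber than RL. In the sketch below, which is my own toy and not any particular method from the literature, the “expensive solver” is Newton’s method for a square root, the “training phase” solves the problem on a grid once, and “inference” is a cheap interpolation between cached answers. The function names and the break-even helper are hypothetical.

```python
import bisect


def solve_per_query(y, tol=1e-12, max_steps=100):
    """Solve x*x = y from scratch (y >= 1) with Newton's method."""
    x, steps = max(y, 1.0), 0
    while abs(x * x - y) > tol * y and steps < max_steps:
        x = 0.5 * (x + y / x)
        steps += 1
    return x, steps


def build_table(lo, hi, n):
    """Upfront cost: run the expensive solver on n + 1 grid points."""
    grid = [lo + (hi - lo) * i / n for i in range(n + 1)]
    answers, total_steps = [], 0
    for y in grid:
        x, steps = solve_per_query(y)
        answers.append(x)
        total_steps += steps
    return grid, answers, total_steps


def amortised_answer(y, grid, answers):
    """Cheap inference: linear interpolation between cached solutions."""
    i = min(max(bisect.bisect_right(grid, y) - 1, 0), len(grid) - 2)
    w = (y - grid[i]) / (grid[i + 1] - grid[i])
    return (1 - w) * answers[i] + w * answers[i + 1]


def break_even_queries(upfront, per_query, amortised_per_query):
    """Number of queries after which paying upfront is cheaper overall."""
    return upfront / (per_query - amortised_per_query)


grid, answers, upfront_steps = build_table(1.0, 100.0, 1000)
exact, steps = solve_per_query(50.0)
approx = amortised_answer(50.0, grid, answers)
print(f"exact={exact:.6f} ({steps} Newton steps), amortised={approx:.6f}")
# Counting one interpolation as one unit of work (a loose assumption):
print("break-even queries:", break_even_queries(upfront_steps, steps, 1))
```

The amortised answer is approximate and only valid inside the range we trained on, which is the same bargain a learned policy makes: we pay once, answer cheaply, and accept some error plus brittleness off-distribution.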

In practice RL+SGD in RLHF adds a second compute budget on top of pretraining. Toby Ord argued that RL training was nearing its effective limit by entering an unfavourable scaling regime: “we may have lost the ability to effectively turn more compute into more intelligence.” That doesn’t seem to have tanked progress, though, so I suspect something else is going on.

TODO: training vs. inference substitution more generally; memorization as a form of amortization.

5 The bitter lesson and its discontents

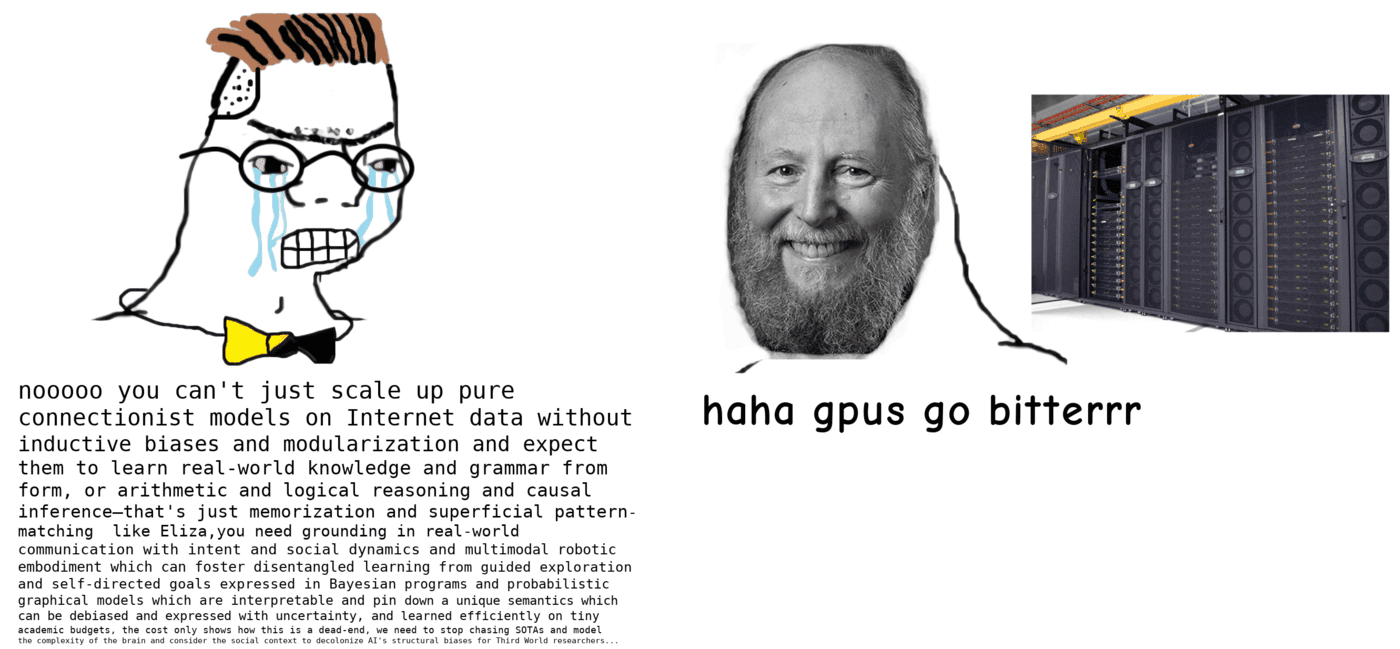

Sutton’s bitter lesson is now folk wisdom:

The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin.

In the substitution language above, this is a claim about a particular slope on the curve: at the margin, compute substitutes for cleverness at a generous exchange rate. The slope is well-attested in the data-rich regime. Gains from algorithmic progress have been of comparable size to gains from hardware progress across several domains (Grace 2013); the compute needed to reach AlexNet-level ImageNet performance halved roughly every 16 months between 2012 and 2019 (Hernandez and Brown 2020), faster than Moore’s Law would predict. Scaling laws tell the same story in a different vocabulary.
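To see what “faster than Moore’s Law” means as a multiplier, here is the back-of-envelope arithmetic. The 84-month window (roughly 2012 to 2019) and the 24-month doubling time used for Moore’s Law are my round-number assumptions, so the outputs are illustrative and not the paper’s own headline figures.

```python
def efficiency_gain(months, halving_months):
    """Factor by which required compute shrinks over `months`,
    if it halves every `halving_months`."""
    return 2 ** (months / halving_months)


window = 7 * 12  # assumed window in months
algorithmic = efficiency_gain(window, 16)  # halving every 16 months
moore = efficiency_gain(window, 24)        # assumed 24-month doubling
print(f"algorithmic: ~{algorithmic:.0f}x, Moore's Law: ~{moore:.0f}x")
```

On these assumptions the algorithmic gain compounds to several times the hardware gain over the same window.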

But that slope is the local gradient in one regime. In others it flips. On my last hydrology project a single data point cost about USD 500,000, because drilling a thousand-metre well cost that much in that spot. Telling me to collect a billion more data points instead of “being clever” wasn’t an option; collapsing the global economy to collect them would not have been “clever” in a useful way. The same goes wherever data acquisition is the binding constraint — narrow scientific domains, embodied agents, real-time decisions in novel settings. Foundation-model pretraining is itself a move on the substitution curve: pay compute up front to manufacture training-data-shaped output where data was scarce. Pretraining a geospatial foundation model on hydrology-adjacent tasks is the next move. That does not exempt us from the curve; it picks a different point on it — paying in compute and adjacent data instead of in cleverness.

So the vanilla-flavoured bitter lesson is not a homily; it’s an empirical claim about the slope of a substitution curve in the data-rich regime. The curve does not stop at the regime boundary — it just changes slope. What we generally want is the curve itself, parameterised by data cost, compute cost, and the kind of generalization we need. That’s the best lesson; the bitter one is a corollary in one of its regions.
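Here is a toy version of the curve I have in mind. It is a sketch under strong assumptions, not an empirical model: I pretend capability follows a Cobb-Douglas production function in two inputs, data and cleverness, with hypothetical exponents `a` and `b` and made-up prices. All it is meant to show is how the cheapest mix, and the exchange rate at the margin, move with the price of data.

```python
def cheapest_mix(target, price_data, price_clever, a=0.5, b=0.5):
    """Cost-minimising (data, cleverness) that reaches `target`
    under the assumed production function Q = data**a * clever**b."""
    # First-order condition: data / clever = (a * p_clever) / (b * p_data).
    ratio = (a * price_clever) / (b * price_data)
    clever = (target / ratio ** a) ** (1.0 / (a + b))
    data = ratio * clever
    return data, clever


def marginal_rate_of_substitution(data, clever, a=0.5, b=0.5):
    """Units of data one extra unit of cleverness replaces, holding Q fixed."""
    return (b / a) * (data / clever)


regimes = {
    "data-rich (scraped text)": 0.001,       # hypothetical price per data point
    "data-scarce (drilled wells)": 500_000,  # price per data point, as in the hydrology case
}
for name, price_data in regimes.items():
    d, k = cheapest_mix(target=100.0, price_data=price_data, price_clever=100.0)
    mrs = marginal_rate_of_substitution(d, k)
    print(f"{name}: data={d:.3g}, cleverness={k:.3g}, exchange rate={mrs:.3g}")
```

In the cheap-data regime the optimum sits far out along the data axis and a unit of cleverness is worth a huge pile of data; when each point costs a borehole, the same curve tells us to buy almost no data and a lot of cleverness. Same curve, different slope at the point where we end up.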

Emin Orhan gives a tighter and more concrete reading of where in the data-rich regime to look: probing capability limits as data scales, learning from new modalities, designing architectures that exploit both data and compute.[1]

6 Human bottlenecks

A second implication of the bitter lesson: even the best human minds aren’t very efficient as general-purpose symbol processors; the quickest path to intelligence prioritises bypassing that human bottleneck.

Things work better when they can scale up at a favourable rate. Humans don’t scale up at many points — not at the scale of an individual organism, and not at the scale of a society either.

TODO: this is a stub; the human-substrate critique of the bitter lesson wants expanding. The career-strategy section below is the personal-economic flip-side of the same observation.

7 Bitter lessons in career strategy

I get the most from the limited compute in my skull by figuring out how to use the larger compute on my GPU.

Career version:

…a lot of the good ideas that did not require a massive compute budget have already been published by smart people who did not have GPUs, so we need to leverage our technological advantage relative to those ancestors if we want to get cited.

8 Incoming

Will the Need to Retrain AI Models from Scratch Block a Software Intelligence Explosion?

IsoFLOP curves of large language models are extremely flat – Severely Theoretical

Compute Goes Brrr: Revisiting Sutton’s Bitter Lesson for Artificial Intelligence

Gwern

Trading off compute against humans — partially in economics of LLMs

Epistemic bottlenecks — information transmission and compression

Thermodynamics of computation: material costs, statistical mechanics of statistics

9 References

Footnotes

[1] Sutton will be more rapidly replaced by a superior prose generator than will Orhan.