Web browser automation

Industrialising the ancient handicrafts of serfing the web and tilling the clickfarm etc

2016-06-16 — 2025-04-30

Wherein Browser Drudgery Is Delegated to Scripts, Playwright Is Noted as a Testing Framework Repurposed for Full Browser Automation Using Headless Instances, and TabFS Is Described as Mapping Tabs to Folders.

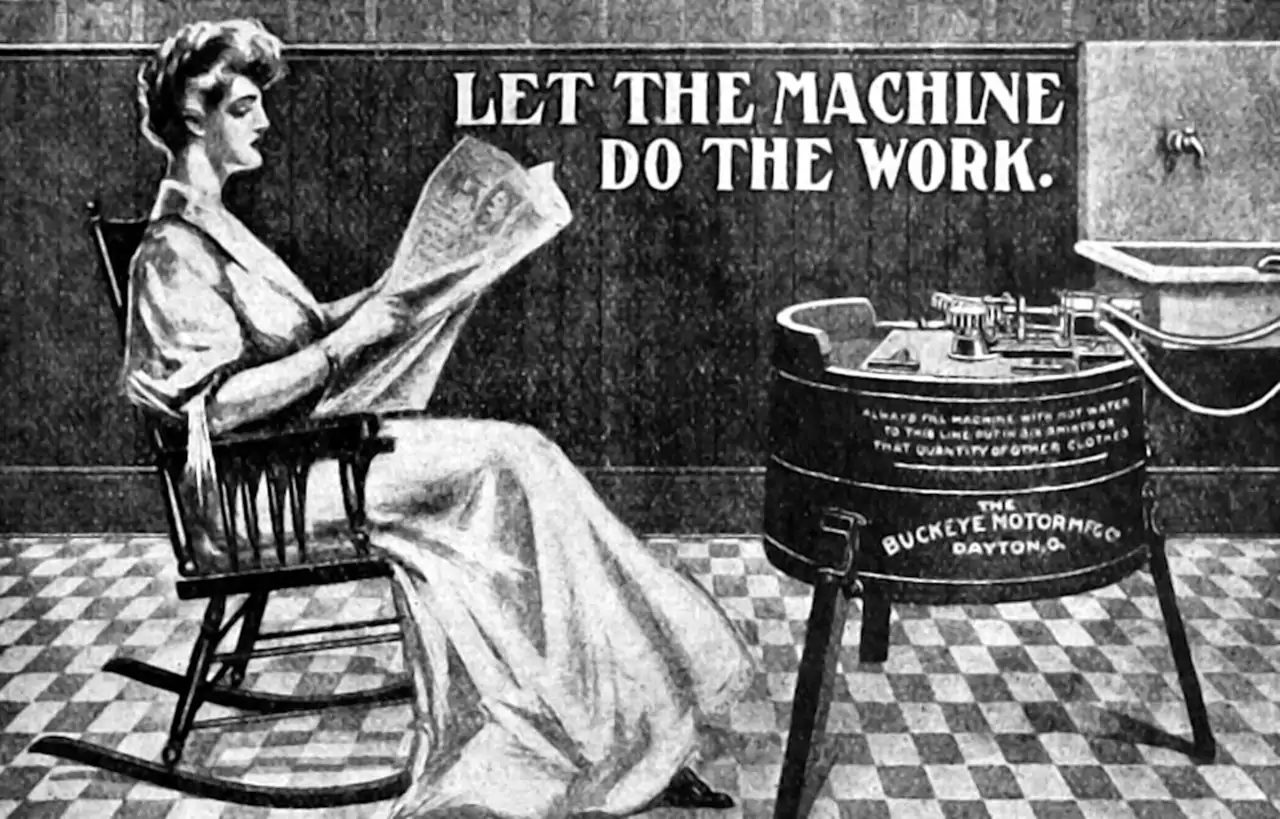

The attention economy of late capitalism demands I spend time clicking on a browser window to do things, rather than automating the drudgery, as we all thought we would have worked out how to do by now.

This should be easier! Surely I can automate it?

Maybe. In some cases, our benevolent social media overlords have blessed certain types of automation service. For everything else, you need to hack the browser to automate stuff. Working out whether this violates the terms and conditions of the particular website you are using is your responsibility. I am not qualified to advise on that.

Here are some options of increasing complexity.

This is an old page and probably out of date. The only option I have recent experience with is Playwright.

1 I need to snapshot a web page

wkhtmltopdf and wkhtmltoimage are open source (LGPLv3) command line tools to render HTML into PDF and various image formats using the Qt WebKit rendering engine. These run entirely “headless” and do not require a display or display service.
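As a minimal sketch, the snapshot can be scripted from Python by shelling out to the tool. This assumes `wkhtmltopdf` is installed and on the `PATH`, and uses only the basic `wkhtmltopdf <input> <output>` invocation. The function names are my own placeholders, not part of the tool.

```python
import shutil
import subprocess


def build_command(url: str, output: str, tool: str = "wkhtmltopdf") -> list[str]:
    """Return the argv for a basic `<tool> <input> <output>` call."""
    return [tool, url, output]


def snapshot(url: str, output: str, tool: str = "wkhtmltopdf") -> None:
    """Render `url` to `output`; raises if the tool is missing or exits non-zero."""
    if shutil.which(tool) is None:
        raise FileNotFoundError(f"{tool} not found on PATH")
    subprocess.run(build_command(url, output, tool), check=True)
```

Calling `snapshot("https://example.com", "example.pdf")` should write a PDF. Passing `tool="wkhtmltoimage"` with a `.png` output name should do the same for an image.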

I believe there are more such tools these days.

3 I want to get data from some public website with minimal pain

4 Doing stuff using an actual browser

I need to exfiltrate information from a hostile walled garden!

Oh dear, you aren’t trying to fake being on social media for weaponised mass opinion inception are you? Well, I hope they are paying you well.

At this point in history, where we are using billions of dollars of technological infrastructure to perform ritual social behaviour, I find I’d prefer just to pick lice out of the pelts of my audience the old-fashioned way. But maybe this is not an option for you? If so, you might want to check out the links below that I read before realising I wasn’t being paid enough.

There are some good tips in karlicoss’s post on data liberation.

Turns out you can automate your local Firefox to do this in an easy way, if not a scalable one, thanks to Ian Bicking. If you want something more full-featured, read on.

4.1 Playwright

Playwright is supposed to be a testing framework, but it seems to work as a full-featured browser automation tool, driving headless browser instances.
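A minimal sketch of what that looks like with Playwright's Python sync API. It assumes `pip install playwright` followed by `playwright install chromium`. The helper names and the file-naming scheme are mine, for illustration only.

```python
from urllib.parse import urlparse


def screenshot_name(url: str) -> str:
    """Derive a file name such as 'example.com.png' from a URL."""
    host = urlparse(url).netloc.replace(":", "_") or "page"
    return f"{host}.png"


def grab(url: str) -> str:
    """Open `url` in headless Chromium, save a screenshot, return the page title."""
    # Imported here so the module still loads where Playwright is not installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        page = browser.new_page()
        page.goto(url)
        page.screenshot(path=screenshot_name(url), full_page=True)
        title = page.title()
        browser.close()
    return title
```

Something like `print(grab("https://example.com"))` should leave `example.com.png` in the working directory and print the page title.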

4.2 TabFS

In Omar Rizwan’s TabFS, each of your open tabs is mapped to a folder. For example, if I have 3 tabs open, they map to 3 folders in TabFS.

The files inside a tab’s folder directly reflect (and can control) the state of that tab in your browser.

This gives you a ton of power, because now you can apply all the existing tools on your computer that already know how to deal with files — terminal commands, scripting languages, point-and-click explorers, etc — and use them to control and communicate with your browser.

Now you don’t need to code up a browser extension from scratch every time you want to do anything. You can write a script that talks to your browser in, like, a melange of Python and bash, and you can save it as a single ordinary file that you can run whenever, and it’s no different from scripting any other part of your computer.
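A minimal sketch of the "tabs are just files" idea in Python. I am assuming a layout of `tabs/by-id/<id>/title.txt` and `url.txt` under the mount point, which is how I remember the TabFS README describing it. Check your own mount before trusting this. `list_tabs` is a hypothetical helper of mine.

```python
from pathlib import Path


def list_tabs(mount: Path) -> list[tuple[str, str]]:
    """Return (title, url) for each tab folder under an assumed TabFS mount."""
    tabs = []
    # Assumed layout: <mount>/tabs/by-id/<tab id>/{title.txt,url.txt}
    for tab_dir in sorted((mount / "tabs" / "by-id").iterdir()):
        title = (tab_dir / "title.txt").read_text().strip()
        url = (tab_dir / "url.txt").read_text().strip()
        tabs.append((title, url))
    return tabs
```

With TabFS mounted at, say, `mnt`, `list_tabs(Path("mnt"))` should return one pair per open tab, with no browser extension code in sight.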

4.3 Nickjs

NickJS is a JavaScript library for browser automation. If this is something you are doing for money, it might be worth your while paying Phantombuster to host your NickJS scripts for you. See their explanatory blog post. (TODO check security guarantees)

4.4 Chromeless

Chromeless, a library for driving headless Chrome, seems to be a hip thing for certain types of automation. And it has various easy cloud-deployment options.

4.5 Browserless

Browserless is containerized browsers with an API, I think.

browserless is a web-service that allows for remote clients to connect, drive, and execute headless work; all inside of docker. It offers first-class integrations for puppeteer, selenium’s webdriver, and a slew of handy REST APIs for doing more common work. On top of all that it takes care of other common issues such as missing system-fonts, missing external libraries, and performance improvements. We even handle edge-cases like downloading files, managing sessions, and have a fully-fledged documentation site.
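A minimal sketch of a remote client driving such a containerized browser. The quote mentions puppeteer and Selenium. I have swapped in Playwright's `connect_over_cdp` here because it is the tool I know. The `ws://localhost:3000` endpoint is an assumption about how the container is exposed, and a real deployment may also want a token in the URL.

```python
def ws_endpoint(host: str = "localhost", port: int = 3000) -> str:
    """Build the WebSocket URL of the remote browser (host and port are assumptions)."""
    return f"ws://{host}:{port}"


def remote_title(url: str, endpoint: str) -> str:
    """Drive a remote Chromium over CDP and return the title of `url`."""
    # Imported here so the module still loads where Playwright is not installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        # Attach to the already-running remote browser instead of launching one.
        browser = p.chromium.connect_over_cdp(endpoint)
        page = browser.new_page()
        page.goto(url)
        title = page.title()
        browser.close()
    return title
```

Usage would be along the lines of `remote_title("https://example.com", ws_endpoint())`, with the browser itself living inside docker rather than on my machine.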

4.6 Selenium/webdriver

Selenium is a browser testing and automation tool that can automate real work on the web. The protocol used is called WebDriver. An example of this might be automating download of your bank statements in a usable form. But how can one automate its deployment and a bunch of user credentials with some degree of security and yet the absolute minimum of thought or effort? I do not yet know. To be continued if absolutely necessary.
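In the meantime, a minimal sketch of the login step using Selenium's Python bindings. It assumes Firefox and its driver are installed. The environment variable names, the form field names and the submit-button selector are all hypothetical. Reading credentials from the environment keeps them out of the script, which is about the least effort that still counts as an effort. It is not a real secrets-management answer.

```python
import os
from collections.abc import Mapping


def load_credentials(env: Mapping[str, str] = os.environ, prefix: str = "BANK") -> tuple[str, str]:
    """Read <prefix>_USER and <prefix>_PASSWORD from `env`, failing loudly if unset."""
    try:
        return env[f"{prefix}_USER"], env[f"{prefix}_PASSWORD"]
    except KeyError as missing:
        raise RuntimeError(f"set {missing.args[0]} before running") from None


def log_in(login_url: str) -> str:
    """Submit a login form and return the title of the page that follows."""
    # Imported here so the module still loads where Selenium is not installed.
    from selenium import webdriver
    from selenium.webdriver.common.by import By

    user, password = load_credentials()
    driver = webdriver.Firefox()
    try:
        driver.get(login_url)
        # Hypothetical selectors; inspect the real page to find its own.
        driver.find_element(By.NAME, "username").send_keys(user)
        driver.find_element(By.NAME, "password").send_keys(password)
        driver.find_element(By.CSS_SELECTOR, "button[type=submit]").click()
        return driver.title
    finally:
        driver.quit()  # always close the browser, even on failure
```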

- Helium:

Under the hood, Helium forwards each call to Selenium. The difference is that Helium’s API is much more high-level. In Selenium, you need to use HTML IDs, XPaths and CSS selectors to identify web page elements. Helium on the other hand lets you refer to elements by user-visible labels. As a result, Helium scripts are typically 30-50% shorter than similar Selenium scripts. What’s more, they are easier to read and more stable with respect to changes in the underlying web page.

Because Helium is simply a wrapper around Selenium, you can freely mix the two libraries. For example:

So in other words, you don’t lose anything by using Helium over pure Selenium?
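A minimal sketch of mixing the two, assuming the `helium` Python package and a local Chrome install. `title_after_click` and its arguments are my own placeholders. The point is only that the object returned by Helium's `start_chrome` is an ordinary Selenium WebDriver.

```python
def title_after_click(url: str, link_text: str) -> str:
    """Click a link by its visible label (Helium), then read the title (Selenium)."""
    # Imported here so the module still loads where Helium is not installed.
    from helium import click, kill_browser, start_chrome

    # Helium call; the return value is a plain Selenium WebDriver.
    driver = start_chrome(url, headless=True)
    try:
        # Helium: refer to the element by its user-visible label.
        click(link_text)
        # Selenium: an ordinary WebDriver method on the same session.
        return driver.execute_script("return document.title;")
    finally:
        kill_browser()  # Helium: close the browser it started
```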

- SeLite:

SeLite automates browser navigation and testing. It extends Selenium. It

- improves Selenium (API, syntax and visual interface),

- enables reuse,

- supports reporting and interaction, […]

SeLite enables DB-driven navigation with SQLite.

You might also get some mileage out of the Firefox CLI, mozrepl.

4.7 iMacros

A commercial offering for Windows, scripting your browser for e.g. data extraction. USD 99 to USD 995 depending on features desired.