Elliptical belief propagation

Generalized least generalized squares

2022-08-22 — 2022-08-22

Wherein the Gaussian Assumption Is Relinquished and Mahalanobis Distance Is Invoked, Robust Huber and Student‑t Updates Are Employed, and Outlier Effects Are Inferred by Adaptive Loss Scaling.

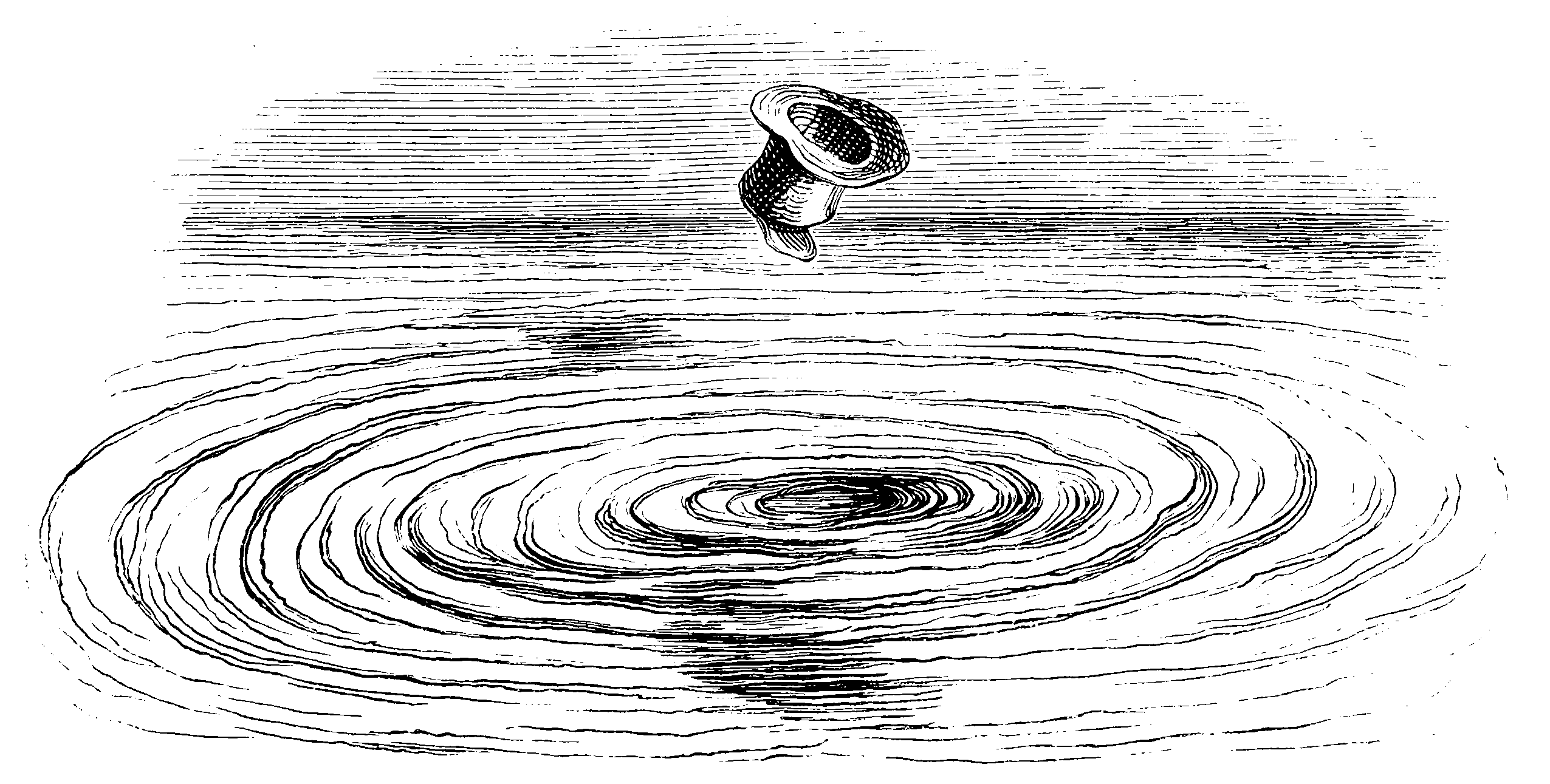

We can generalize Gaussian belief propagation to other elliptical laws by working with the Mahalanobis distance of each factor's residual, without presuming that the underlying distribution is Gaussian (Agarwal et al. 2013; Davison and Ortiz 2019). The result is a kind of elliptical belief propagation.
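For reference, an elliptical law with location \(\mu\) and scale matrix \(\Sigma\) has a density of the following form, for some density generator \(g\). The density depends on \(x\) only through the squared Mahalanobis distance, which is why that distance is the natural quantity to carry around:

\[
p(x) \propto \lvert \Sigma \rvert^{-1/2}\, g\!\left( (x-\mu)^\top \Sigma^{-1} (x-\mu) \right).
\]

The Gaussian is the case \(g(u) = \exp(-u/2)\); the \(d\)-dimensional Student-\(t\) with \(\nu\) degrees of freedom is \(g(u) = (1 + u/\nu)^{-(\nu+d)/2}\). A factor's energy is then \(-\log g\) evaluated at the squared Mahalanobis distance of its residual.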

1 Robust

If we use a robust Huber loss in place of the Gaussian negative log-likelihood (the quadratic energy), the resulting factors are usually called robust factors, and the trick of rescaling each factor's covariance according to its current residual is known as dynamic covariance scaling (Agarwal et al. 2013; Davison and Ortiz 2019). A nice side effect is that the point at which a factor's loss switches from quadratic to linear gives us a rough estimate of which observations are outliers.
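A minimal sketch of the covariance-rescaling view, assuming a factor described by a residual vector \(r\) and precision matrix \(\Lambda\) (plain nested lists, to keep it dependency-free). The helper names and the default threshold `k = 2` are hypothetical. Here we choose the precision multiplier \(w\) so that the equivalent Gaussian energy \(\tfrac12 w M^2\) equals the Huber energy at the current residual; that is one common choice, not necessarily the exact rule in the cited papers.

```python
import math


def mahalanobis_sq(residual, precision):
    """Squared Mahalanobis distance r^T Lambda r (precision given as nested lists)."""
    n = len(residual)
    return sum(residual[i] * precision[i][j] * residual[j]
               for i in range(n) for j in range(n))


def huber_energy(m, k):
    """Huber loss of the Mahalanobis distance m: quadratic up to k, linear beyond."""
    if m <= k:
        return 0.5 * m * m
    return k * m - 0.5 * k * k


def huber_precision_scale(residual, precision, k=2.0):
    """Return (w, is_outlier) for a hypothetical robust Gaussian factor.

    w multiplies the factor's precision so that 0.5 * w * m^2 equals the
    Huber energy at the current residual. Inside the threshold w == 1;
    beyond it w = (2 k m - k^2) / m^2 < 1 and the factor is flagged.
    """
    m_sq = mahalanobis_sq(residual, precision)
    m = math.sqrt(m_sq)
    if m <= k:
        return 1.0, False
    return 2.0 * huber_energy(m, k) / m_sq, True


# Example: a residual 5 "standard deviations" out, with threshold k = 2.
w, flagged = huber_precision_scale([3.0, 4.0], [[1.0, 0.0], [0.0, 1.0]])
print(w, flagged)  # w = (20 - 4) / 25
```

The scaled precision \(w\Lambda\) would then replace \(\Lambda\) when the factor computes its outgoing messages. For large residuals \(w \approx 2k/M\), so distant observations are down-weighted but never ignored, and the boolean is the crude outlier indicator mentioned in the paragraph. A plain iteratively-reweighted-least-squares variant would use \(w = k/M\) instead.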

2 Student-\(t\)

Surely this exists somewhere? There is certainly a special case for the \(t\)-process. It is mentioned, I think, in Lan et al. (2006), and possibly in Proudler et al. (2007), although the latter seems more ad hoc.
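One way a Student-\(t\) factor could be handled in the same reweighting style is to treat the \(t\) as a Gaussian scale mixture: given the residual, the expected precision multiplier is \((\nu + d)/(\nu + M^2)\), the usual EM weight for Student-\(t\) regression, where \(d\) is the residual dimension. This is a sketch under that assumption, not the method of Lan et al. (2006) or Proudler et al. (2007); the function names and the default `nu = 4` are hypothetical.

```python
def mahalanobis_sq(residual, precision):
    """Squared Mahalanobis distance r^T Lambda r (precision given as nested lists)."""
    n = len(residual)
    return sum(residual[i] * precision[i][j] * residual[j]
               for i in range(n) for j in range(n))


def student_t_precision_scale(residual, precision, nu=4.0):
    """EM-style precision multiplier for a hypothetical Student-t factor.

    Writing the t as a Gaussian whose precision is scaled by a Gamma-distributed
    latent variable, the posterior mean of that scale given the residual is
    (nu + d) / (nu + m^2), with d the residual dimension and m^2 the squared
    Mahalanobis distance.
    """
    d = len(residual)
    m_sq = mahalanobis_sq(residual, precision)
    return (nu + d) / (nu + m_sq)


identity = [[1.0, 0.0], [0.0, 1.0]]
print(student_t_precision_scale([0.0, 0.0], identity))  # (4 + 2) / 4
print(student_t_precision_scale([3.0, 4.0], identity))  # (4 + 2) / (4 + 25)
```

Unlike a Huber weight, this one keeps shrinking toward zero, so the influence of a residual, \(M w\), actually decreases for large \(M\) (a redescending estimator). The price is a non-convex energy, so presumably belief propagation with such factors is more sensitive to initialization.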

3 Gaussian mixture

Surely this exists too? TBD.

4 Generic

There seem to be generic update rules for elliptical distributions (Aste 2021; Bånkestad et al. 2020) that could be used to construct a general elliptical belief propagation algorithm.
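For a concrete sense of what such rules look like: when an elliptical law is conditioned on some coordinates, the conditional location and the Schur-complement scale matrix take the same form as in the Gaussian case, and only the density generator changes, in a way that depends on the Mahalanobis distance of the observed block. For the Student-\(t\) this has a closed form. The bivariate sketch below uses that textbook result; it is not necessarily the rule used by Aste (2021) or Bånkestad et al. (2020), and the function name is hypothetical.

```python
def condition_bivariate_t(loc, scale, nu, x2):
    """Conditional law of x1 given x2 for a bivariate Student-t.

    loc = (mu1, mu2), scale = ((s11, s12), (s12, s22)), nu = degrees of freedom.
    Returns (location, scale, dof) of the univariate Student-t p(x1 | x2).
    """
    mu1, mu2 = loc
    (s11, s12), (_, s22) = scale
    # Gaussian-form part: regression of x1 on x2 and the Schur complement.
    cond_loc = mu1 + s12 / s22 * (x2 - mu2)
    schur = s11 - s12 * s12 / s22
    # Elliptical part: squared Mahalanobis distance of the observed coordinate
    # inflates or deflates the scale; degrees of freedom grow by dim(x2) = 1.
    d2 = 1
    m_sq = (x2 - mu2) ** 2 / s22
    cond_scale = schur * (nu + m_sq) / (nu + d2)
    return cond_loc, cond_scale, nu + d2


loc, scale = (0.0, 0.0), ((1.0, 0.5), (0.5, 1.0))
print(condition_bivariate_t(loc, scale, nu=3.0, x2=2.0))  # location 1.0, dof 4.0
```

As \(\nu \to \infty\) the scale factor tends to one and we recover Gaussian conditioning, while a surprising observation (large Mahalanobis distance) widens the conditional rather than leaving it fixed, which is the behaviour a generic elliptical message update would need to propagate.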