Legibility and automation

Variational approximations to AI modernism

2017-07-24 — 2025-08-22

Wherein the Legibility of Great Society Bureaucracy Is Examined and Its Computerization Is Shown to Hinge on Reframing Tasks, So That Mundane Replacements Like Tablet Ordering and Drink Dispensers Are Rendered Trivial.

Miscellaneous notes on the relationship between the legibility of the Great Society and its automation by computer.

George Hosu argued that AI and automation are at odds:

… the vast majority of use-cases for AI, especially the flashy kind that behaves in a “human-like” way, might be just fixing coordination problems around automation.

From this perspective, AI is like “the computational overhead of metis.”

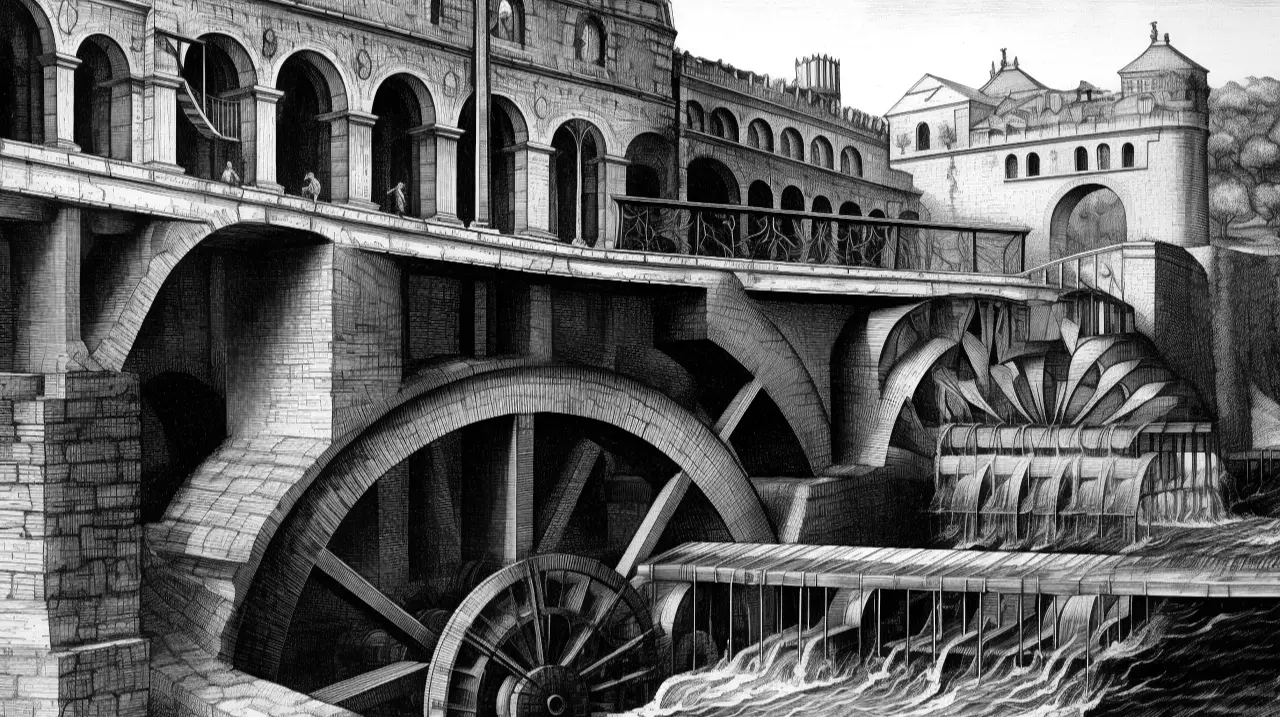

Thus we end up with rather complex jobs, where something like AGI could be necessary to fully replace the person. But at the same time, these jobs can be trivially automated if we redefine the role and take some of the fuzziness out.

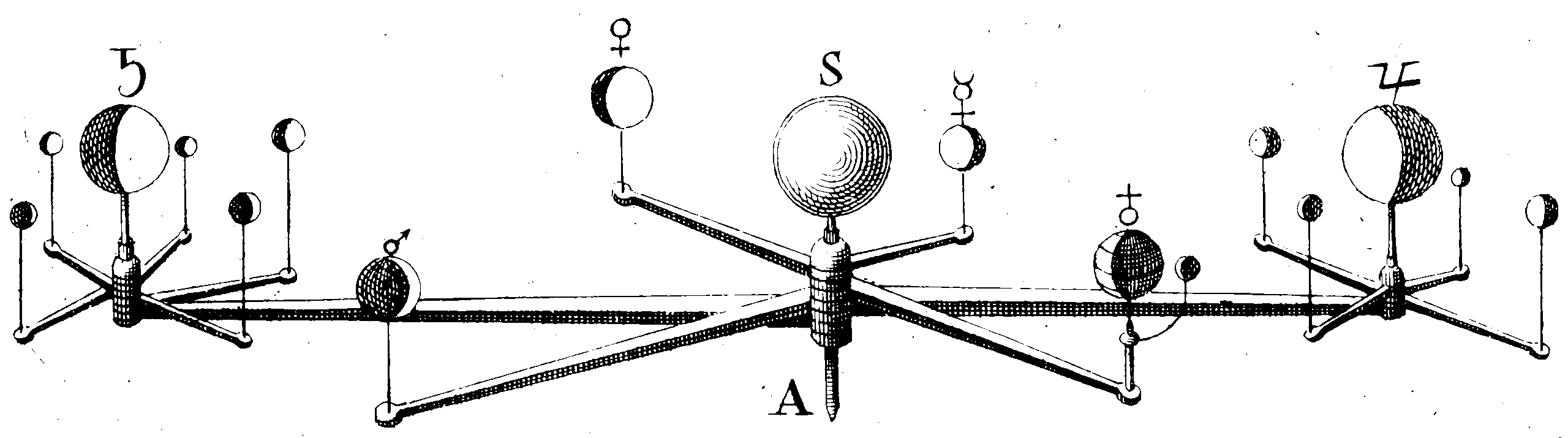

A bartender robot is beyond the dreams of contemporary engineering. A cocktail-making machine, conveyer belt (or drone) that delivers drinks, ordering and paying through a tablet on your table… beyond trivial.

Cf. the simplest thing.

Henry Farrell and Marion Fourcade — The Moral Economy of High‑Tech Modernism

While people in and around the tech industry debate whether algorithms are political at all, social scientists take the politics as a given, asking instead how this politics unfolds: how algorithms concretely govern. What we call “high-tech modernism”—the application of machine learning algorithms to organize our social, economic, and political life—has a dual logic. On the one hand, like traditional bureaucracy, it is an engine of classification, even if it categorizes people and things very differently. On the other, like the market, it provides a means of self-adjusting allocation, though its feedback loops work differently from the price system. Perhaps the most important consequence of high-tech modernism for the contemporary moral political economy is how it weaves hierarchy and data-gathering into the warp and woof of everyday life, replacing visible feedback loops with invisible ones, and suggesting that highly mediated outcomes are in fact the unmediated expression of people’s own true wishes.

1 Policy and statistical learning

🚧TODO🚧 clarify. A brief digression on how legibility and management look when framed as a statistical learning problem. Learning a good policy is costly in data, and administrative procedures rarely generate much data from repeated trials of what works. Devising policies, in either the machine-learning or the political sense, is also computationally hard. How far does having to bow to the ill-fitting bureaucracy of the Great Society resemble having to work with an underfit estimator of the optimal policy? What would that tell us about, e.g., optimal jurisprudence? Possibly something. Or possibly the metaphor doesn’t work; after all, what is the optimisation problem being solved?
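One way to make the underfit-estimator metaphor concrete is a toy sketch, offered as an illustration under loud assumptions and not as a model of any real bureaucracy. Assume each case has a context x in [0, 1), that the ideal response is a*(x) = x, and that the loss is squared distance from that ideal. A "legible" administration sorts cases into k categories and applies one response per category, learned from a limited number of noisy past cases. All names here (`fit_binned_policy`, `regret`, `experiment`) are hypothetical.

```python
import random
from statistics import mean


def fit_binned_policy(samples, k):
    """Fit one action per category: the mean observed outcome in that bin.

    `samples` is a list of (context, noisy_ideal_action) pairs with
    context in [0, 1]. Empty bins fall back to the overall mean.
    """
    bins = [[] for _ in range(k)]
    for x, a in samples:
        bins[min(int(x * k), k - 1)].append(a)
    overall = mean([a for _, a in samples])
    return [mean(b) if b else overall for b in bins]


def regret(policy, grid=1000):
    """Mean squared gap between the policy and the assumed ideal a*(x) = x."""
    k = len(policy)
    xs = [(i + 0.5) / grid for i in range(grid)]
    return mean([(policy[min(int(x * k), k - 1)] - x) ** 2 for x in xs])


def experiment(n_cases, k, noise=0.3, seed=0):
    """Learn a k-category policy from n_cases noisy precedents; return its regret."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n_cases):
        x = rng.random()
        samples.append((x, x + rng.gauss(0, noise)))
    return regret(fit_binned_policy(samples, k))


if __name__ == "__main__":
    # Few categories: cheap to learn but ill-fitting (bias).
    # Many categories with few precedents: flexible but noisy (variance).
    for n_cases in (20, 2000):
        for k in (1, 4, 64):
            print(n_cases, k, round(experiment(n_cases, k), 4))
```

In this toy, a single category is the extreme of legibility: easy to estimate and a poor fit for almost everyone. Adding categories reduces that misfit only when there are enough precedents per category to estimate each response, which is roughly the data-cost point above. Whether real policy-making solves anything like this optimisation problem is exactly the open question.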

2 Recommender systems and collective culture

See recommender dynamics.

3 Categorization and power

See adversarial categorization.

4 Incoming

The Bitter Lesson versus The Garbage Can - by Ethan Mollick

Organizations are too messy to hard-code workflows, so mapping every process won’t scale. Sutton’s “Bitter Lesson” implies outcome-trained, general systems will outperform hand-engineered, prompt-heavy approaches. The practical move is to define clear “good outputs” and train agents to deliver them, letting them navigate the chaos. If that holds, process moats shrink and advantage shifts to teams that can specify quality and provide lots of examples.