The simplest thing

Minimum viable whatever, worse is better, PC-losering, Postel chaos, Burkean engineering, maintenance externalities, DX vs UX

2020-02-03 — 2025-04-24

Wherein the Problem of Determining the Least Effortful Course of Action Is Examined, and the Concrete Test Case of Rewriting Software Is Presented to Show Who Is Made to Bear Irreducible Complexity.

1 The Challenge of Simplicity

Machine learning’s bitter lesson, but for human thoughts and human systems. Maybe these are the same thing.

I frequently find it hard to discern what the simplest thing is in the sense of being the easiest to actually do, which isn’t necessarily the simplest thing to analyse, explain, or diagram—unless it is. Or, to put it another way, for whom must the thing be simplest? If there is irreducible complexity, who bears the cost? And how simple is it to identify irreducible complexity?

This is a hard problem, e.g. when designing software, experiments, or research questions. It’s a notable weakness of mine, which is why I’m comfortable asserting I would never have invented deep learning, which is about applying an asinine solution to a problem in a stupid way that turns out to be just good enough to attract billions in funding to improve it. That is the effective kind of simplicity.

[TODO: Transition to the idea of collecting notes on minimum viable product]

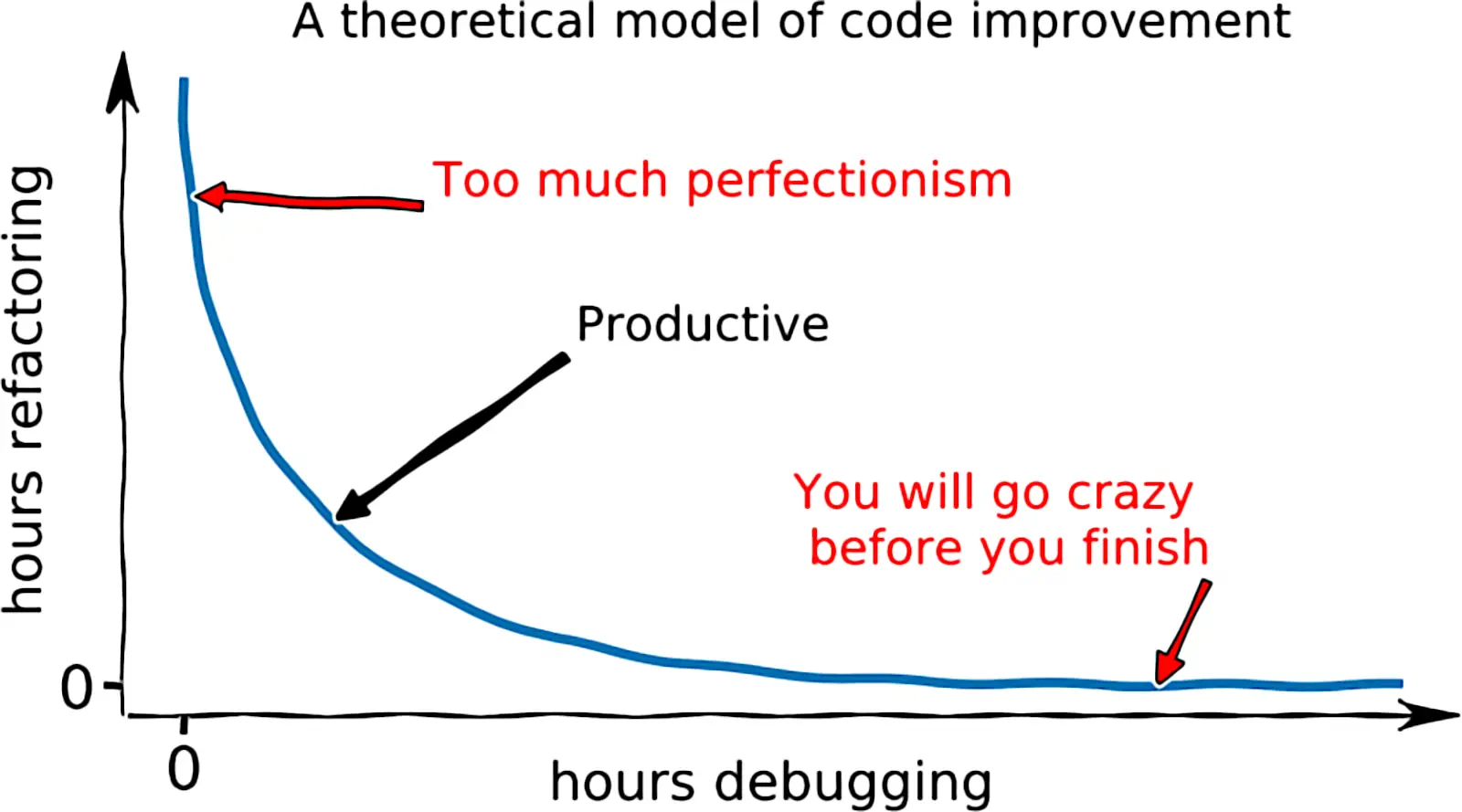

How about I collect some notes on the fraught question of deciding, as early as possible, what the minimum viable product should be? When is it worthwhile starting from scratch? Which cruft is structural? And which is yak shaving? If I had time, I might try to unpack different notions of simplicity into ones that were predictive of different qualities of the completion-time or project-cost distribution (average cost, median cost, extreme tail costs, cost variance…). But for now, I’ll dump some links that dance around that notion.
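As a toy sketch of what that unpacking might look like: the summaries named above can pull apart even for projects that look identical "on a typical day". Everything here is an assumption made for illustration: the log-normal cost model, the `sigma` values, and the function names `cost_summary` and `simulate` are hypothetical, not drawn from any real project data.

```python
import random
import statistics


def cost_summary(costs, tail=0.95):
    """Summarise a sample of project costs by mean, median, tail and variance."""
    ordered = sorted(costs)
    # A simple empirical tail quantile: the value at position tail * n.
    k = min(len(ordered) - 1, int(tail * len(ordered)))
    return {
        "mean": statistics.fmean(ordered),
        "median": statistics.median(ordered),
        "tail": ordered[k],
        "variance": statistics.pvariance(ordered),
    }


def simulate(sigma, n=10_000, seed=0):
    """Hypothetical projects with log-normal costs; the true median cost is 1."""
    rng = random.Random(seed)
    return [rng.lognormvariate(0.0, sigma) for _ in range(n)]


# Two made-up kinds of project with the same median cost but different spread.
predictable = cost_summary(simulate(sigma=0.25))
heavy_tailed = cost_summary(simulate(sigma=1.25))

for label, summary in [("predictable", predictable), ("heavy-tailed", heavy_tailed)]:
    print(label, {key: round(value, 2) for key, value in summary.items()})
```

Under these assumptions both kinds of project have roughly the same median cost, but the heavy-tailed one has a much larger mean, tail quantile, and variance, so "simplest" depends on which of those summaries we care about.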

2 Worse is better etc

Another idea that gestures in this direction is Worse is Better. Richard Gabriel, in the original essay, expounds:

I and just about every designer of Common Lisp and CLOS has had extreme exposure to the MIT/Stanford style of design. The essence of this style can be captured by the phrase “the right thing.” […] I will call the use of this philosophy of design the “MIT approach.” Common Lisp (with CLOS) and Scheme represent the MIT approach to design and implementation.

The worse-is-better philosophy is only slightly different: … the design must be simple, both in implementation and interface. It is more important for the implementation to be simple than the interface. Simplicity is the most important consideration in a design. […]

Early Unix and C are examples of the use of this school of design, and I will call the use of this design strategy the “New Jersey approach.” I have intentionally caricatured the worse-is-better philosophy to convince you that it is obviously a bad philosophy and that the New Jersey approach is a bad approach.

However, I believe that worse-is-better, even in its strawman form, has better survival characteristics than the-right-thing, and that the New Jersey approach when used for software is a better approach than the MIT approach.

This connects to a question I face: which way of doing things is, overall, simplest? What’s hubristic Not Invented Here-type high modernism, and what’s the clarity of starting over? How do we tell them apart?

[TODO: Introduce other related concepts]

This perhaps connects to metis and friends via Chesterton’s fence, Postel’s Law, YAGNI, The Duct Tape Programmer, Gall’s Law and general standards…

3 The Cost of Complexity

[TODO: Introduce the idea that complexity accumulates and its effects]

The reason software isn’t better is because it takes a lifetime to understand how much of a mess we’ve made of things, and by the time you get there, you will have contributed significantly to the problem.

4 Case Study: Rewriting Software

Rewriting software is a useful test case because of all the attendant complexities. Some of those issues are called second system effects. When is it simpler to start over with a shinier replacement?

Adam Turoff, Rewriting software:

If you’re dealing with a small here and a short now, then there is no time to rewrite software. There are revenue goals to meet, and time spent redoing work is retrograde, and in nearly every case poses a risk to the bottom line because it doesn’t deliver end user value in a timely fashion. […] If you’re dealing with a big here and a long now, whatever work you do right now is completely inconsequential compared to where the project will be five years from today or five million users from now. Requirements change, platforms go away, and yesterday’s baggage has negative value — it leads to hard-to-diagnose bugs in obscure edge cases everyone has forgotten about. The best way to deal with this code is to rewrite, refactor or remove it. […] The key to estimating whether a rewrite project is likely to succeed is to first understand when it needs to succeed.

Is Balaban et al. (2021) the right reference?

5 Radical Simplicity

Here’s one radical position on software in practice from suckless.org:

Our philosophy is about keeping things simple, minimal and usable. We believe this should become the mainstream philosophy in the IT sector. Unfortunately, the tendency for complex, error-prone and slow software seems to be prevalent in the present-day software industry. We intend to prove the opposite with our software projects.

They have a bunch of software projects exemplifying the manifesto. They advertise that their software shades from austerely simple into outright hostile depending on who you are, and that this is a feature.

dwm is customised through editing its source code, which makes it extremely fast and secure — it does not process any input data which isn’t known at compile time, … Because dwm is customised through editing its source code, it’s pointless to make binary packages of it. This keeps its userbase small and elitist. No novices asking stupid questions.

Vexingly, the software is nonetheless not written in Forth: The Hacker’s Language.

6 Balancing Simplicity with Usability

A more viable option in the real world might be to work out how to manage simplicity while still offering some kind of onboarding for new users. While I’m suffused with the DIY spirit, I begrudgingly admit, after giving it a go, that learning the artisanal Right Way to do everything doesn’t leave much time to live our own lives. Sometimes it’s nice to be able to go to the store and buy a chair instead of someone handing us a few nails and planks and saying, “Good luck.” We need to pick our battles.

I’m not sure if this is the Right Way to Do Simple Things, but Ali Furkan Yıldız built a dwm configuratimifier as a web app — it feels like an incredibly effortful way of trolling minimalists.

7 Simplicity as a Cognitive Bias

- People are biased toward adding features to a design rather than removing them (Adams et al. 2021)

- Machines are too: Santagata and Nobili (2025)

8 DX vs UX

Developer experience versus user experience. TBC

9 Learning to use the thing

See learning curve.

10 Robustness Through Simplicity

Greg Kogan frames simplicity as a question of outages:

Take the ship’s steering system, for instance. The rudder is pushed left or right by metal rods. Those rods are moved by hydraulic pressure. That pressure is controlled by a hydraulic pump. That pump is controlled by an electronic signal from the wheelhouse. That signal is controlled by the autopilot. It doesn’t require a rocket scientist or a naval architect to find the cause of and solution to any problem:

- If the autopilot fails, steer the ship manually from the wheelhouse.

- If the electronic signals fail, go to the rudder control room to control the pump by hand, while talking with the bridge through a simple sound-powered phone.

- If the hydraulics fail, use the mechanically linked emergency steering wheel.

- If the mechanical linkage fails, hook a chain to both sides of the rudder and pull in the direction you want!
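The layered fallbacks in that list can be loosely sketched as an ordered chain in which each method is tried only when the ones before it have failed. This is a toy model for illustration, not anything from Kogan’s post: the `steer` function, the `METHODS` list, and the `failed` set are all hypothetical names.

```python
def steer(direction, methods, failed=frozenset()):
    """Try each steering method in order and use the first one still working."""
    for name in methods:
        if name not in failed:
            return f"{name}: rudder {direction}"
    raise RuntimeError("no steering method available")


# Ordered from most to least automated, following the quoted list.
METHODS = [
    "autopilot",
    "manual steering from the wheelhouse",
    "hand-operated pump in the rudder control room",
    "emergency steering wheel",
    "chain hooked to the rudder",
]

print(steer("left", METHODS))
print(steer("left", METHODS, failed={"autopilot", "manual steering from the wheelhouse"}))
```

The point of the sketch is that each layer is simple enough to diagnose on its own, and the whole system only fails when every layer has failed.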

11 Incoming

Hugging Face talks about simple versus easy

Gordon Brander, Living systems grow from simple seeds, summarizes wu wei, second-system syndrome and many others in a megamix


The consequences of this divergence in needed talent and effort cause The Lisp Curse:

Lisp is so powerful that problems which are technical issues in other programming languages are social issues in Lisp.

Sebastian Markbage: Minimal API Surface Area | JSConf EU 2014