Institutional alignment problems

Mechanism design for distributed moral wetware

2024-02-27 — 2024-02-27

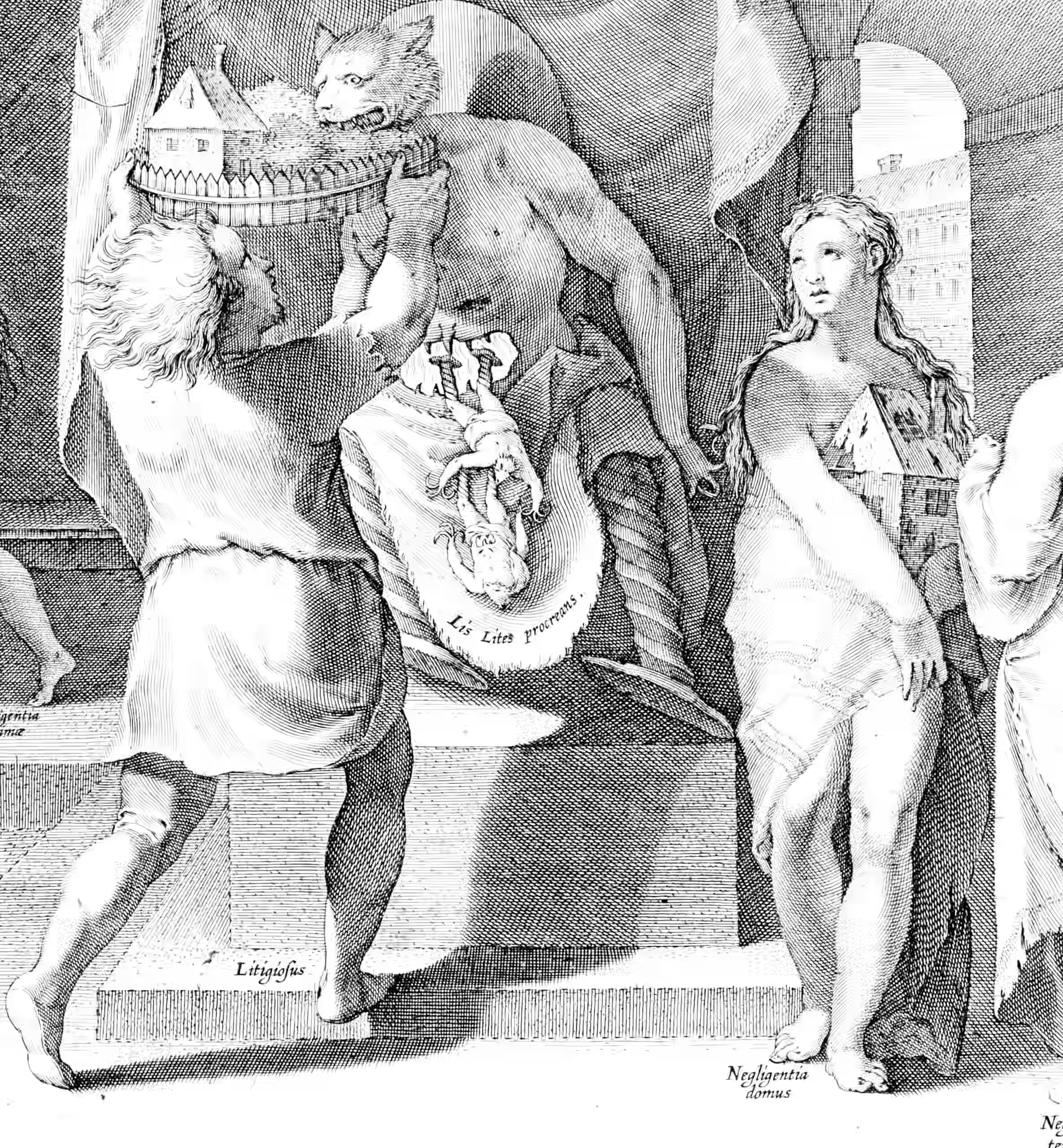

Wherein Institutional Alignment Problems Are Examined Through Mechanism Design for Formalized Distributed Moral Wetware, and the Risk of Excessive Bureaucracy or Mission Failure From Rule-Bound Agents Is Sketched.

AI safety

cooperation

culture

distributed

economics

extended self

faster pussycat

game theory

incentive mechanisms

institutions

networks

wonk

Let me restate the subtitle: mechanism design for formalized distributed moral wetware, as opposed to the even vaguer notion of movement design. This is the kind of design that, done badly, produces too much bureaucracy or fails to do the thing it was tasked with.

My contrarian take is that institutional alignment and AI alignment are very similar problems, for all that they execute on different substrates.
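A toy principal–agent model makes the shared structure concrete. The sketch below is a hypothetical illustration, not drawn from any of the works listed here. The `Contract` fields, the square-root returns, and the numbers are all assumptions. An agent splits its effort between mission work, which the principal can only partly observe, and compliance work, which is fully observable. It is paid only for what the principal sees. The code does not care whether the agent is a rule-bound official or a learned policy.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Contract:
    """Hypothetical incentive scheme a principal offers an agent (illustrative only)."""
    w_mission: float           # reward weight on observed mission output
    w_compliance: float        # reward weight on observed compliance output (paperwork, audits)
    mission_visibility: float  # assumed fraction of mission output the principal can observe


def agent_reward(c: Contract, share: float) -> float:
    """Pay the agent receives when it puts `share` of a unit effort budget into the mission.

    Both activities have assumed diminishing (square-root) returns, and the
    agent is paid only for the output the principal can observe.
    """
    mission_seen = c.mission_visibility * math.sqrt(share)
    compliance_seen = math.sqrt(1.0 - share)
    return c.w_mission * mission_seen + c.w_compliance * compliance_seen


def best_allocation(c: Contract, steps: int = 100) -> float:
    """Grid-search the mission share that maximises the agent's own reward."""
    shares = [i / steps for i in range(steps + 1)]
    return max(shares, key=lambda s: agent_reward(c, s))


def principal_value(share: float) -> float:
    """What the principal actually wants: all mission output, observed or not."""
    return math.sqrt(share)


if __name__ == "__main__":
    legible = Contract(w_mission=1.0, w_compliance=0.2, mission_visibility=1.0)
    murky = Contract(w_mission=1.0, w_compliance=0.2, mission_visibility=0.3)
    for name, contract in [("legible", legible), ("murky", murky)]:
        share = best_allocation(contract)
        print(f"{name}: mission share {share:.2f}, "
              f"value to principal {principal_value(share):.2f}")
```

With these assumed square-root returns, the agent's optimum has a closed form. Its mission share is a²/(a²+b²), where a is `w_mission * mission_visibility` and b is `w_compliance`. Lowering `mission_visibility` therefore pushes effort toward paperwork and lowers what the principal actually receives, even though the agent is doing exactly what it is paid to do. This is one way to read the substrate-independence claim above.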

1 References

Conitzer, and Oesterheld. 2023. “Foundations of Cooperative AI.” Proceedings of the AAAI Conference on Artificial Intelligence.

Cunningham, and Shah. 2014. “Decriminalizing Indoor Prostitution: Implications for Sexual Violence and Public Health.” Working Paper 20281. Working Paper Series.

Edelman, Tan, Lowe, et al. 2025. “Full-Stack Alignment: Co-Aligning AI and Institutions with Thick Models of Value.”

Gabriel. 2020. “Artificial Intelligence, Values, and Alignment.” Minds and Machines.

Guha, Lawrence, Gailmard, et al. 2023. “AI Regulation Has Its Own Alignment Problem: The Technical and Institutional Feasibility of Disclosure, Registration, Licensing, and Auditing.” George Washington Law Review, Forthcoming.

Hadfield-Menell, and Hadfield. 2018. “Incomplete Contracting and AI Alignment.”

Ingstrup, Aarikka-Stenroos, and Adlin. 2021. “When Institutional Logics Meet: Alignment and Misalignment in Collaboration Between Academia and Practitioners.” Industrial Marketing Management.

Jara-Ettinger, and Dunham. 2025. “The Institutional Stance.” Behavioral and Brain Sciences.

Khan, M. H. 1995. “State Failure in the Weak States: A Critique of New Institutionalist Explanations.” In New Institutional Economics and Third World Development.

Khan, M. 1998. “Patron-Client Networks and the Economic Effects of Corruption in Asia.” European Journal of Development Research.

Khan, M. H. 2005. “Markets, States and Democracy: Patron-Client Networks and the Case for Democracy in Developing Countries.” Democratization.

Khan, Mushtaq Husain, and Sundaram. 2000. Rents, Rent-Seeking and Economic Development: Theory and Evidence in Asia.

Kim. 2020. “Deep Learning and Principal–Agent Problems of Algorithmic Governance: The New Materialism Perspective.” Technology in Society.

Klingefjord, Lowe, and Edelman. 2024. “What Are Human Values, and How Do We Align AI to Them?”

Korinek, and Balwit. 2022. “Aligned with Whom? Direct and Social Goals for AI Systems.” Working Paper 30017.

Korinek, Fellow, Balwit, et al. n.d. “Direct and Social Goals for AI Systems.”

Lowe, Edelman, Zhi-Xuan, et al. 2025. “Full-Stack Alignment: Co-Aligning AI and Institutions with Thicker Models of Value.”

MacInnes, Garfinkel, and Dafoe. 2024. “Anarchy as Architect: Competitive Pressure, Technology, and the Internal Structure of States.” International Studies Quarterly.

Ramírez, Kolumbus, Nagel, et al. 2023. “Game Manipulators - the Strategic Implications of Binding Contracts.”

Tan, and Abramsky. 2022. “Institutions Under Composition.”

Zhi-Xuan, Carroll, Franklin, et al. 2025. “Beyond Preferences in AI Alignment.” Philosophical Studies.