Tuning an MCMC sampler

2020-04-30 — 2020-04-30

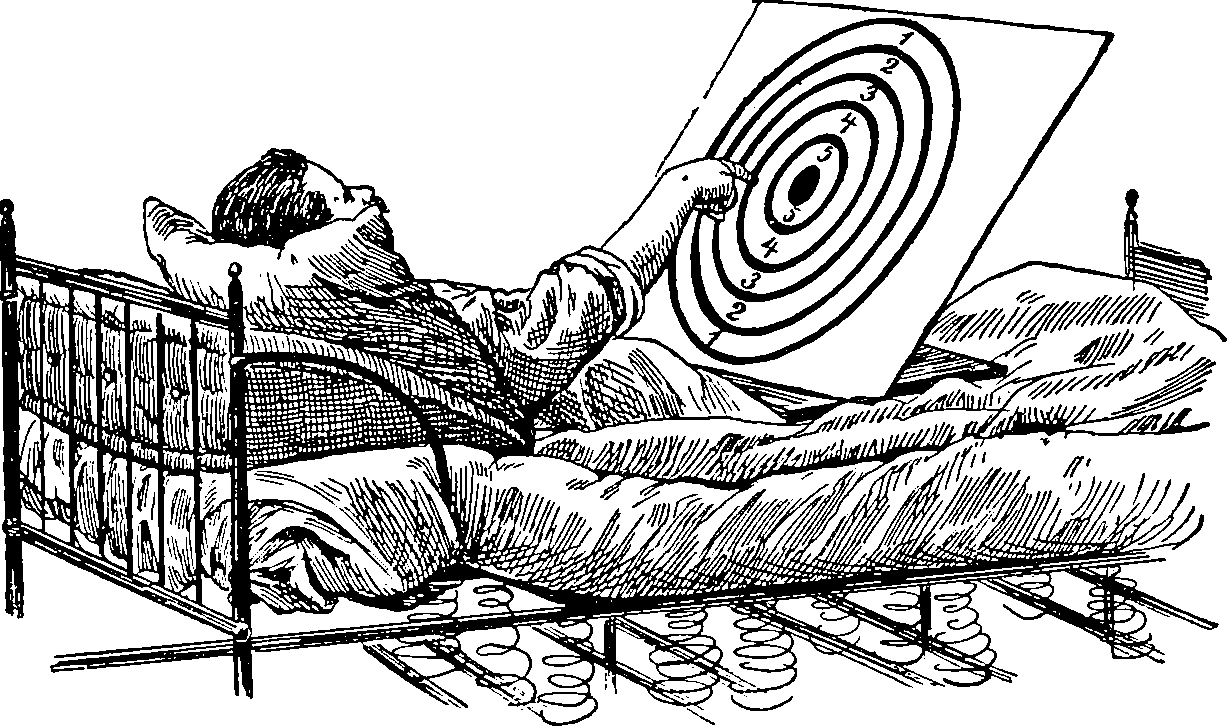

Wherein the Sampler Is Tuned by Pilot Runs and by Online Adaptive Schemes, Suspect Pilot Samples Are Discarded, and Expected Squared Jump Distance Is Proposed as the Optimisation Criterion for Optimal Mixing and Reduced Rejection Rate.

Designing the MCMC transition density, possibly via the proposal density in rejection sampling, by optimising for good mixing.

The simplest way to do this is a “pilot” run: estimate good mixing kernels, then run the sampler with the adapted kernels, discarding the suspect samples from the pilot run. This wastes some effort but is theoretically simple. Alternatively, you could adapt dynamically, online, which is called Adaptive MCMC. There are then some theoretical wrinkles, since a kernel that keeps changing with the chain's history need not preserve the stationary distribution.
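A minimal sketch of the pilot-run idea, using only the Python standard library. Everything specific here is an assumption for illustration: the target (`log_target`, a scalar Gaussian with standard deviation 3), the deliberately poor pilot step size, and the tuning rule, which scales the proposal by 2.38 times the pilot's sample standard deviation (a common heuristic for scalar random-walk Metropolis, not something prescribed above).

```python
import math
import random
import statistics

def log_target(x):
    # Hypothetical target: N(0, 3^2), up to an additive constant.
    return -0.5 * (x / 3.0) ** 2

def rw_metropolis(log_p, x0, step, n, rng):
    """Random-walk Metropolis with Gaussian proposals of scale `step`."""
    x, lp = x0, log_p(x0)
    samples, accepted = [], 0
    for _ in range(n):
        y = x + step * rng.gauss(0.0, 1.0)
        lq = log_p(y)
        # Accept with probability min(1, p(y) / p(x)).
        # 1 - random() lies in (0, 1], so the log is always defined.
        if math.log(1.0 - rng.random()) < lq - lp:
            x, lp = y, lq
            accepted += 1
        samples.append(x)
    return samples, accepted / n

rng = random.Random(0)

# Pilot run with a deliberately poor (far too small) step size.
pilot, pilot_acc = rw_metropolis(log_target, 0.0, 0.1, 5000, rng)

# Tune the kernel from the pilot output (assumed heuristic, see above).
tuned_step = 2.38 * statistics.stdev(pilot)

# Main run with the kernel held fixed; the pilot samples are discarded.
main, main_acc = rw_metropolis(log_target, pilot[-1], tuned_step, 20000, rng)

print(f"pilot acceptance {pilot_acc:.2f}, tuned step {tuned_step:.2f}, "
      f"main acceptance {main_acc:.2f}")
```

Because the kernel is frozen before the main run starts, the usual fixed-kernel Metropolis argument applies to `main`; only the pilot samples are suspect.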

I do wish to maximise the mixing rate by some criterion. But if I already know my mixing rate is bad without optimising, which is why I am optimising, how do I get the simulations against which to conduct the optimisation? And how do we optimise simultaneously for maximising mixing rate and minimising rejection rate? Fearnhead and Taylor (2013) summarise some options for an objective function. One that seems sufficient for publication of typical MCMC papers is the Expected Squared Jump Distance (ESJD), more precisely an expected squared Mahalanobis distance between successive samples. Maximising it is equivalent to minimising the lag-1 autocorrelation, which is in practice most of what we do.
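To make the ESJD criterion concrete: for a stationary scalar chain with variance σ² and lag-1 autocorrelation ρ₁, the expected squared jump is 2σ²(1 − ρ₁), so a larger ESJD means a smaller ρ₁. The sketch below estimates ESJD from short runs and picks the best proposal scale from a grid. The target (`log_target`, a standard normal), the grid of candidate step sizes, and the function names are all assumptions for illustration. In the scalar case the squared jump is a plain squared difference; a multivariate version would measure jumps in a Mahalanobis norm.

```python
import math
import random

def log_target(x):
    # Hypothetical target: standard normal, up to an additive constant.
    return -0.5 * x * x

def rw_chain(step, n, rng, x0=0.0):
    """Scalar random-walk Metropolis chain with Gaussian proposal scale `step`."""
    x, lp = x0, log_target(x0)
    chain = [x]
    for _ in range(n):
        y = x + step * rng.gauss(0.0, 1.0)
        lq = log_target(y)
        if math.log(1.0 - rng.random()) < lq - lp:
            x, lp = y, lq
        chain.append(x)
    return chain

def esjd(chain):
    """Average squared jump between consecutive states.

    A rejected proposal repeats the current state and contributes zero,
    so the rejection rate is penalised automatically.
    """
    jumps = [(b - a) ** 2 for a, b in zip(chain, chain[1:])]
    return sum(jumps) / len(jumps)

rng = random.Random(1)
grid = [0.05, 0.5, 2.4, 10.0, 50.0]  # hypothetical candidate step sizes
scores = {s: esjd(rw_chain(s, 20000, rng)) for s in grid}
best = max(scores, key=scores.get)

for s in grid:
    print(f"step {s:5.2f}: ESJD {scores[s]:.3f}")
print("selected step:", best)
```

Tiny steps are nearly always accepted but move very little; huge steps are nearly always rejected. ESJD trades the two off in a single number, which is one way to read the question of optimising for mixing and rejection rate at once.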

1 Proposal density

Designing the proposal density is often easy for an independent rejection sampler. That is precisely the cross-entropy method. For a Markov chain, though, the success criterion is muddier. AFAICT the cross-entropy trick does not apply to non-i.i.d. samples.
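For the i.i.d. case, a cross-entropy-style fit can be sketched as follows: draw from the current proposal, weight each draw by target over proposal, and refit the proposal by weighted moment matching, which is the closed-form minimiser of the KL divergence from target to proposal within a Gaussian family. The target (`target_unnorm`), the Gaussian proposal family, the starting values, and the sample sizes are all assumptions chosen for illustration. An actual rejection sampler would additionally need an envelope constant bounding target over proposal, which this fit does not provide.

```python
import math
import random

def target_unnorm(x):
    # Hypothetical unnormalised target density: N(2, 0.5^2).
    return math.exp(-0.5 * ((x - 2.0) / 0.5) ** 2)

def normal_pdf(x, mu, sigma):
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def fit_proposal(rng, mu=0.0, sigma=5.0, n=5000, n_iter=5):
    """Iteratively refit a Gaussian proposal by importance-weighted moments."""
    for _ in range(n_iter):
        # i.i.d. draws from the current proposal.
        xs = [rng.gauss(mu, sigma) for _ in range(n)]
        # Importance weights: unnormalised target over proposal density.
        ws = [target_unnorm(x) / normal_pdf(x, mu, sigma) for x in xs]
        total = sum(ws)
        # Weighted mean and variance define the next proposal.
        new_mu = sum(w * x for w, x in zip(ws, xs)) / total
        new_var = sum(w * (x - new_mu) ** 2 for w, x in zip(ws, xs)) / total
        mu, sigma = new_mu, math.sqrt(new_var)
    return mu, sigma

mu_hat, sigma_hat = fit_proposal(random.Random(2))
print(f"fitted proposal: mean {mu_hat:.3f}, sd {sigma_hat:.3f}")
```

The update relies on the draws being independent samples from a known proposal density, which is exactly what a Markov chain does not give us.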

2 Transition density

🏗

3 Adaptive SMC

In Sequential Monte Carlo, which is not MCMC, we do not need to be so careful about changing the proposal parameters, since the method does not rest on a stationary distribution argument. See Fearnhead and Taylor (2013).

4 Variational inference

What is Hamiltonian Variational Inference? Does that fit under this heading? 🏗 (Caterini, Doucet, and Sejdinovic 2018; Salimans, Kingma, and Welling 2015)