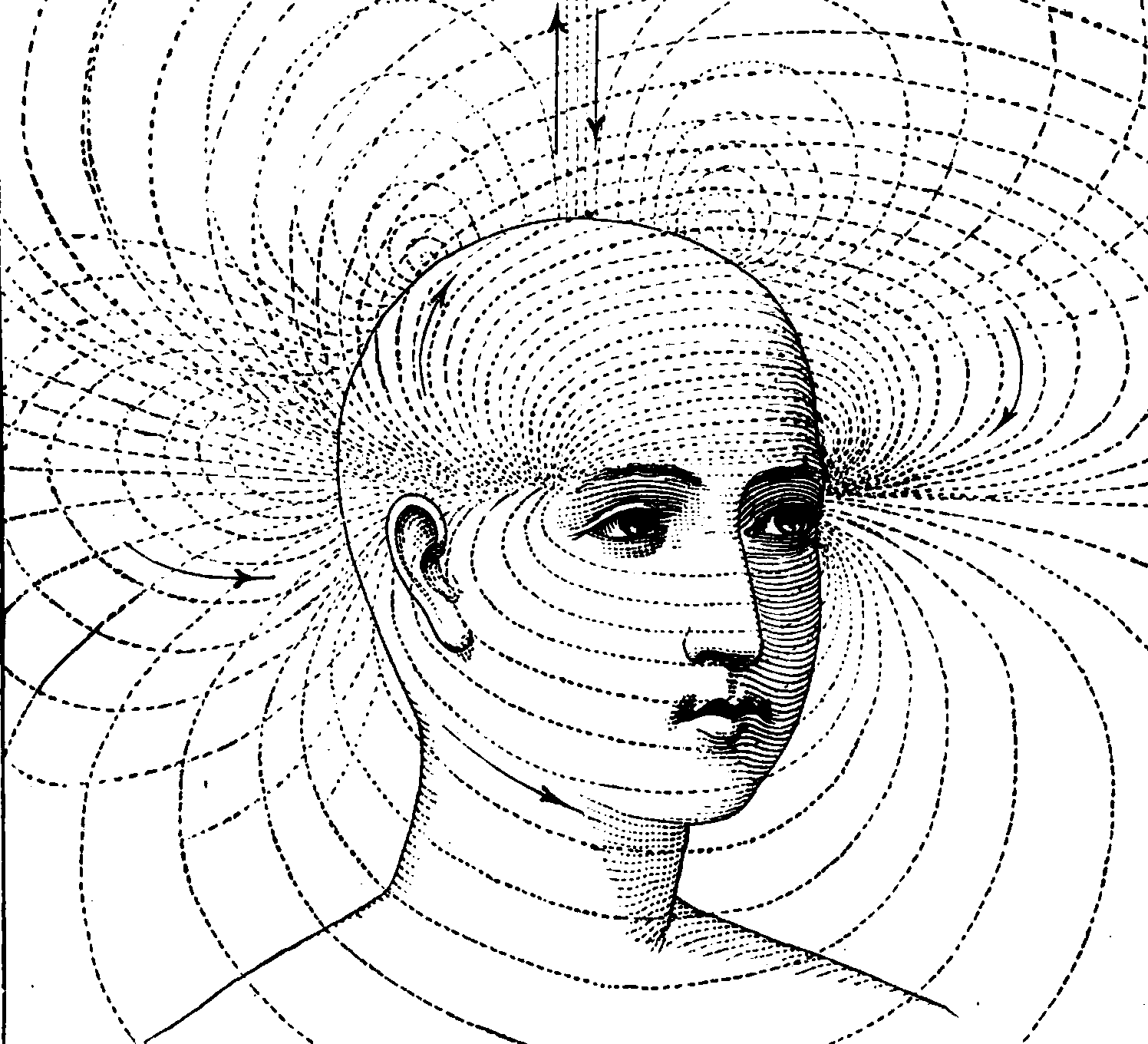

Mind reading by computer

The ultimate inverse problem

2017-07-01 — 2021-03-02

Wherein the Bluntness of fMRI Instruments and the Reliance on Subject-Specific Calibration Are Set Out, and the Extent of Progress Toward Real-Time Decoding Without Priming or Pretraining Is Reported.

A placeholder.

I’d like to know how good the results in this area are getting, and how well they generalize across people, technologies, etc. How close are we to the point where someone can put an arbitrary individual in some kind of tomography machine and say what they are thinking, without pre-training or priming?

1 Base level: brain imaging

The instruments we have are blunt. Consider: could a neuroscientist even understand a microprocessor (Jonas and Kording 2017)? What hope is there for brains?

TODO: discuss the infamously limp state of fMRI inference, problem of multiple testing in correlated fields etc.
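Until that discussion exists, here is a toy illustration of the multiple-testing half of the problem. It is a minimal sketch under loud assumptions: every "voxel" is an independent standard-normal test statistic with no real signal, whereas real fMRI voxels are spatially correlated. The function names and the voxel count are hypothetical choices for illustration, not anything taken from an fMRI package.

```python
import math
import random


def two_sided_p(z):
    """Two-sided p-value for a standard-normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def count_false_positives(n_voxels=10_000, alpha=0.05, seed=0):
    """Count 'significant' voxels when every voxel is pure noise.

    Assumption: voxels are independent, which real brain images are not.
    """
    rng = random.Random(seed)
    # No voxel carries signal, so every detection is a false positive.
    p_values = [two_sided_p(rng.gauss(0.0, 1.0)) for _ in range(n_voxels)]
    uncorrected = sum(p < alpha for p in p_values)
    # Bonferroni: divide the threshold by the number of tests.
    bonferroni = sum(p < alpha / n_voxels for p in p_values)
    return uncorrected, bonferroni


if __name__ == "__main__":
    uncorrected, bonferroni = count_false_positives()
    print(f"uncorrected detections: {uncorrected}")
    print(f"Bonferroni detections:  {bonferroni}")
```

With an uncorrected threshold, roughly 5% of the noise voxels come out "active", while Bonferroni removes nearly all of them. With correlated voxels, Bonferroni still controls the error rate but becomes conservative, which is part of why the correlated-field case needs its own treatment.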

TBC.

2 Advanced: brain decoding

Assuming you can get information out of your instruments, can you decode something meaningful? Marcel Just et al. do a lot of this. It for sure leads to fun press releases, e.g. CMU Scientists Harness “Mind Reading” Technology to Decode Complex Thoughts, but I need time to look at the details to understand how much progress they are making towards the science-fiction version (Wang, Cherkassky, and Just 2017).

Researchers watch video images people are seeing, decoded from their fMRI brain scans in near-real-time. If you want to have a crack at this yourself, you might check out Katja Seeliger’s mind reading datasets.

More intrusively, in rats… Real-time readouts of location memory:

by recording the electrical activity of groups of neurons in key areas of the brain they could read a rat’s thoughts of where it was, both after it actually ran the maze and also later when it would dream of running the maze in its sleep