Biological basis of language

Neurology, evolution and ecology of our memes

2018-01-11 — 2021-09-16

Wherein the Neural Foundations of Language Are Surveyed in a Laconic Register, and the Study of Finch Syntax Is Adduced as a Concrete Model, While Links to Predictive Coding and Transformer Analogies Are Outlined.

A placeholder for squishy brain stuff about language. If I wanted to think about it from a multi-agent perspective, I might consider how a social brain plays language games. Or, I might wonder about predictive coding and how that would relate. We could also wonder if semantics has something to do with biology. My old lecturer Anna Wierzbicka would say yes.

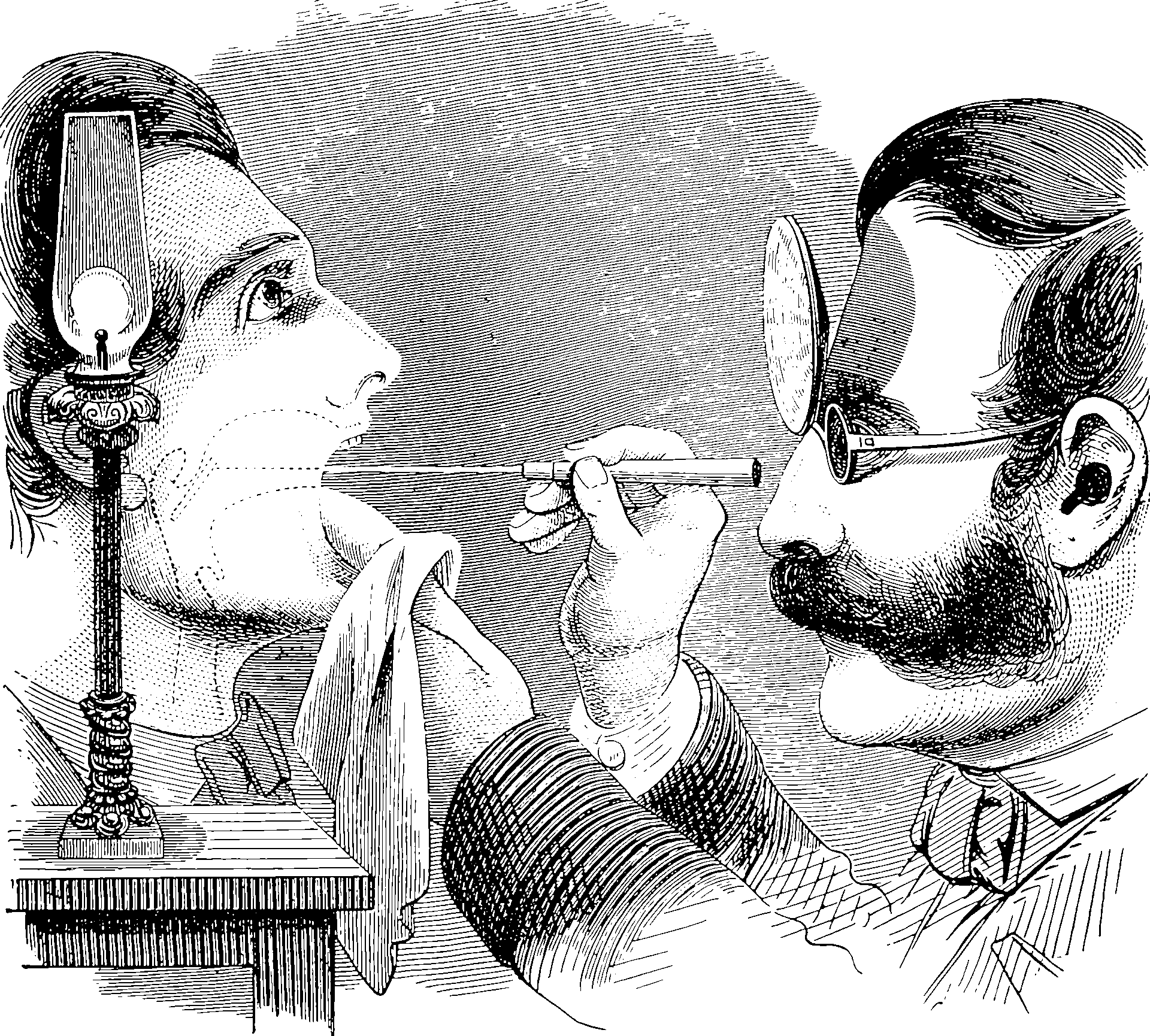

1 Neurology of language

See (Petersson, Folia, and Hagoort 2012; Pylkkänen 2019) for reviews of the neural basis of language in humans. I’m quite charmed by how well the neural basis of syntax is studied in finches, e.g. Jin (2009).

2 Analogy with artificial neural networks

Mapping NLP to neurology is surely a thing, but I do not know much about it.

3 Evolution of language

See language games.

4 Computational complexity

See syntax. Or, these days, ignore all the classical theory and just think about how transformers do great at NLP in defiance of classic hopelessness.

5 Meaning

See semantics.

6 Incoming

“They’re using phrase-structure grammar, long-distance dependencies. FLN recursion, at least four levels deep and I see no reason why it won’t go deeper with continued contact. […] It doesn’t have a clue what I’m saying.”

“What?”

“It doesn’t even have a clue what it’s saying back,” she added.

Peter Watts, Blindsight