Moral calculus

2014-08-04 — 2022-02-19

Wherein Trolley Problems and Machine Agency Are Examined, and Weaponised 3D‑printable Golems, Autopilot Ethics, and Continuous Branching Decision‑tree Limits Are Invoked to Probe Moral Choice.

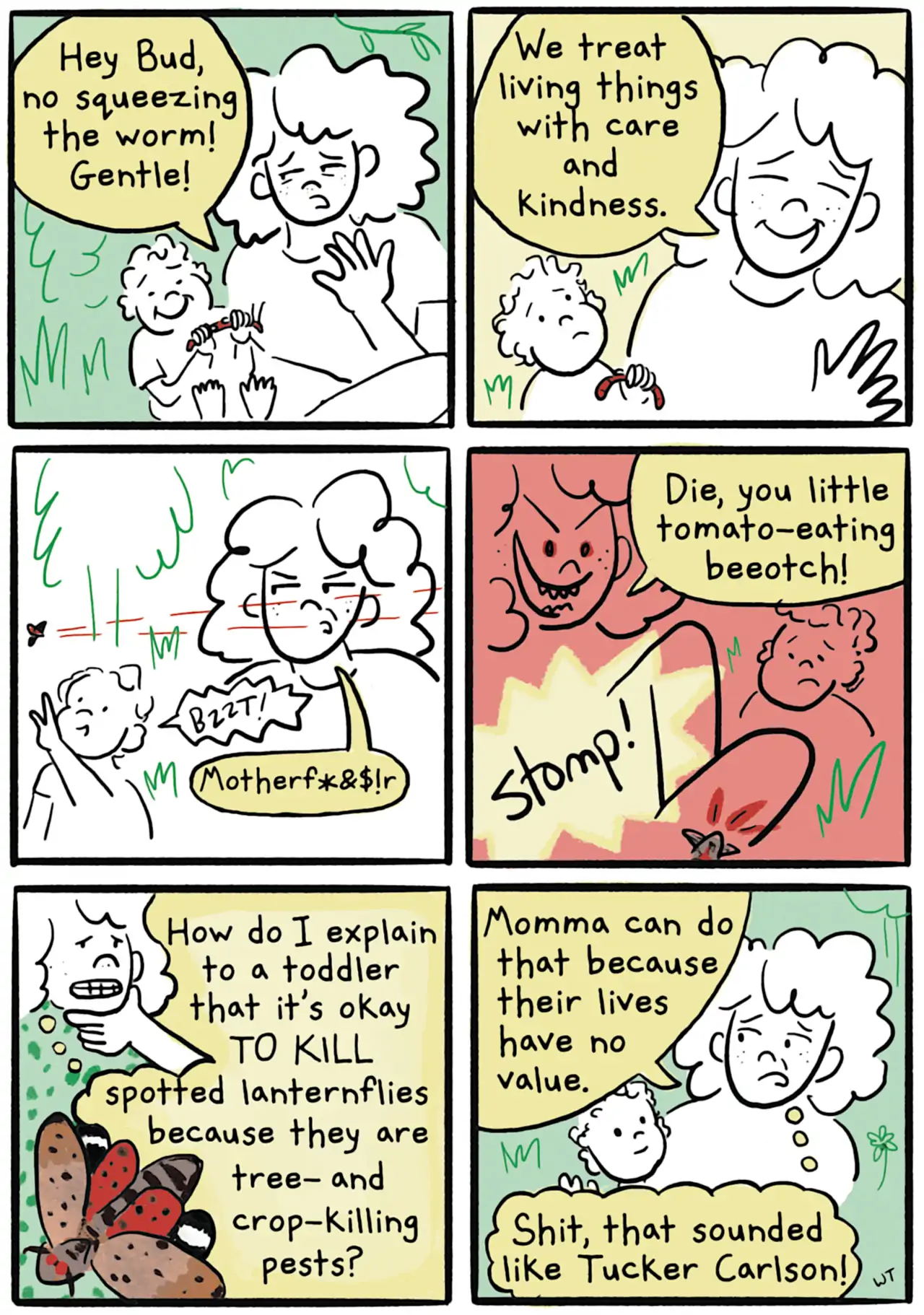

Pareto optimality, utilitarianism, and murderbots: a staple of science fiction since the first robot, and probably of all the holy books of all the religions before that (cf. golems, contracts with devils). This has all become much more legible and quantifiable now that the golems are weaponised 3D-printable downloads. That said, I am sympathetic, as an ML researcher, to the idea that we are pretty far from needing machines to solve trolley problems for us at the moment. Or at least, the ones that seem reasonable in the short term are more decision-theoretic: given my imperfect understanding of the world, how sure am I that I, a robot, am not killing my owner by doing my task badly? Weighing up multiple lives and potentialities does not seem to be on the cards in the short term, except perhaps in a fairness-in-expectation context.

Regardless, this notebook is for trolley problems in the age of machine agency, war drones and smart cars. (Also, what is “agency” anyway?) Hell, even if we can design robots to follow human ethics, do we want to? Do instinctual human ethics have an especially good track record? What are the universals specifically? Insert here: link to an AI alignment research notebook.

<iframe width="560" height="315" src="https://www.youtube.com/embed/-N_RZJUAQY4" frameborder="0" allowfullscreen></iframe>

1 For machines

Radiolab’s popsci introduction to the Trolley problem

Here’s a Terrible Idea: Robot Cars With Adjustable Ethics Settings

Moral Machine is a deadpan attempt by MIT to elicit our raving, nonsensical implicit moral codes:

> We show you moral dilemmas, where a driverless car must choose the lesser of two evils, such as killing two passengers or five pedestrians. As an outside observer, you judge which outcome you think is more acceptable. You can then see how your responses compare with those of other people.

2 For humans as cogs in the machine

Try moral philosophy.

3 Infinitesimal trolley problems

Something I would like to look into: What about trolley problems that exist as branching decision trees, or even a continuous limit of constantly branching stochastic trees? What happens to morality in the continuous limit?
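To make the question concrete, here is a minimal sketch of one way to set it up. Everything in it is my own assumption rather than an established formulation. The trolley's lateral position follows a binomial tree with `n` decision steps. At each step the agent steers left, right or not at all, which tilts the branch probabilities and optionally costs `effort_cost` per unit time. The terminal harm is 5 if the trolley ends on the left and 1 otherwise, borrowing the classic five-versus-one body count. The function names, the parameters `mu` and `sigma`, and the harm values are all hypothetical. Backward induction gives the minimal expected harm, and letting `n` grow shows what the tree does as the branching becomes continuous.

```python
import math


def min_expected_harm(n, mu=1.0, sigma=1.0, horizon=1.0, effort_cost=0.0):
    """Minimal expected harm on a recombining binomial tree with n decision steps.

    Assumed toy model: each step moves the trolley one lattice unit left or
    right; steering u in {-1, 0, +1} tilts the probability of moving right.
    """
    dt = horizon / n
    tilt = mu * math.sqrt(dt) / sigma  # how far steering can bias one coin flip
    if tilt > 1.0:
        raise ValueError("n is too small: branch probabilities leave [0, 1]")

    # Terminal nodes j = -n, -n+2, ..., n; harm is 5 on the left, 1 otherwise.
    value = [5.0 if j < 0 else 1.0 for j in range(-n, n + 1, 2)]

    # Walk back from the leaves; at each node pick the least harmful action.
    for step in range(n - 1, -1, -1):
        new_value = []
        for i in range(step + 1):
            down, up = value[i], value[i + 1]
            best = min(
                effort_cost * abs(u) * dt
                + 0.5 * (1 + u * tilt) * up
                + 0.5 * (1 - u * tilt) * down
                for u in (-1, 0, 1)
            )
            new_value.append(best)
        value = new_value
    return value[0]


def diffusion_limit(mu=1.0, sigma=1.0, horizon=1.0):
    """Closed-form limit for effort_cost=0, where always steering right is optimal."""
    z = -mu * math.sqrt(horizon) / sigma
    prob_left = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))  # normal CDF at z
    return 1.0 + 4.0 * prob_left


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, min_expected_harm(n), min_expected_harm(n, effort_cost=0.5))
    print("limit (no effort cost):", diffusion_limit())
```

In this toy version, at least, nothing dramatic happens to the moral arithmetic in the limit. With no effort cost, the tree values approach the expected harm under a drifting Brownian motion. What the sketch leaves out is the more interesting part: it assumes harm only accrues at the end, and that expected harm is the right thing to minimise in the first place.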

4 Incoming

To file: