Standards hell

Lock-in, QWERTY, fragmentation problems, etc.

2015-01-05 — 2022-01-10

Wherein the Propagation of Technical Standards Is Examined Through Postel’s Robustness Principle and Path‑dependence, and the Tension Between Federation and Centralisation Is Illustrated by Messaging Protocols and Power‑outlet Examples.

See also Thomas Schelling, and many other engineering and economics papers I haven’t heard of yet. Minimum power principle. Economics of standards. Partial contracts. Power outlets. The disputed economics of QWERTY. Peyton Young on which side of the road you drive on. Here, read Kevin Bryan’s review of Scott Page’s Path Dependence.

1 Standards on a network

Consider Postel’s Law, or Spolsky’s notorious Martian headsets essay on that law:

[…] this is where Jon Postel caused a problem, back in 1981, when he coined the robustness principle: “Be conservative in what you do, be liberal in what you accept from others.” […] Postel’s “robustness” principle didn’t really work. The problem wasn’t noticed for many years. In 2001 Marshall Rose finally wrote:

Counter-intuitively, Postel’s robustness principle […] often leads to deployment problems. Why? When a new implementation is initially fielded, it is likely that it will encounter only a subset of existing implementations. If those implementations follow the robustness principle, then errors in the new implementation will likely go undetected. The new implementation then sees some, but not widespread deployment. This process repeats for several new implementations. Eventually, the not-quite-correct implementations run into other implementations that are less liberal than the initial set of implementations. The reader should be able to figure out what happens next.
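To make Rose’s failure mode concrete, here is a toy sketch in Python. The message format, both parsers and the buggy sender are hypothetical illustrations, not taken from any real protocol. The sloppy sender’s bug survives every encounter with liberal receivers and only surfaces when a strict one turns up.

```python
# Toy model of Marshall Rose's argument. The "key: value" format, the
# parsers and the sender are hypothetical, chosen only for illustration.

def strict_parse(msg: str) -> dict[str, str]:
    """Accept only 'key: value' lines, each terminated by CRLF."""
    if not msg.endswith("\r\n"):
        raise ValueError("message must end with CRLF")
    fields = {}
    for line in msg[:-2].split("\r\n"):
        key, sep, value = line.partition(": ")
        if not sep:
            raise ValueError(f"malformed line: {line!r}")
        fields[key] = value
    return fields


def liberal_parse(msg: str) -> dict[str, str]:
    """Tolerate bare LF line endings and a missing space after the colon."""
    fields = {}
    for line in msg.replace("\r\n", "\n").splitlines():
        key, sep, value = line.partition(":")
        if sep:  # silently skip anything that doesn't look like a field
            fields[key.strip()] = value.strip()
    return fields


def sloppy_sender() -> str:
    # Bug: bare LF line endings and no space after the colon.
    return "From:alice\nTo:bob\n"


def deliver(parse, msg: str) -> bool:
    """Return True if the receiver's parser accepts the message."""
    try:
        parse(msg)
        return True
    except ValueError:
        return False


if __name__ == "__main__":
    msg = sloppy_sender()
    early_peers = [liberal_parse, liberal_parse, liberal_parse]
    print("early deployment:", [deliver(p, msg) for p in early_peers])
    print("later, strict peer:", deliver(strict_parse, msg))
```

The liberal parser is what hides the bug: had the early peers been strict, the sender would have been fixed on day one.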

Apenwarr talks about this in terms of interoperating networks:

Postel’s Law is the principle the Internet is based on. Not because Jon Postel was such a great salesperson and talked everyone into it, but because that is the only winning evolutionary strategy when internets are competing. Nature doesn’t care what you think about Postel’s Law, because the only Internet that happens will be the one that follows Postel’s Law.

Peyton Young’s work offers some explicit models for this kind of thing, using network economics to analyse which standards can propagate on a graph (Burke and Young 2010; Young 1996).
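For a flavour of what such graph models do, here is a minimal best-response coordination game on a network. This is a generic local-interaction sketch, not Young’s actual model (which adds random mistakes and studies long-run stochastically stable outcomes). The payoffs and the graph, two four-node cliques joined by a single edge, are assumptions chosen for illustration: standard A pays 2 per coordinating neighbour, standard B pays 1, and a node switches only on a strict improvement.

```python
# Generic best-response coordination game on a graph. NOT Young's exact
# stochastic dynamics; payoffs and graph are hypothetical.
from itertools import combinations

PAYOFF = {"A": 2, "B": 1}  # assumed: coordinating on A pays more than on B


def clique(nodes):
    """All undirected edges among the given nodes."""
    return set(combinations(nodes, 2))


def neighbours(edges, n):
    """Adjacency sets for nodes 0..n-1."""
    nbrs = {i: set() for i in range(n)}
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    return nbrs


def best_response_dynamics(nbrs, state, max_rounds=100):
    """Update nodes in a fixed order until no node wants to switch."""
    state = dict(state)
    for _ in range(max_rounds):
        changed = False
        for node in sorted(nbrs):
            # payoff of each standard = per-match payoff * matching neighbours
            score = {s: PAYOFF[s] * sum(state[m] == s for m in nbrs[node])
                     for s in PAYOFF}
            best = max(score, key=score.get)
            # ties keep the current standard; only strict gains cause a switch
            if score[best] > score[state[node]]:
                state[node] = best
                changed = True
        if not changed:
            break
    return state


if __name__ == "__main__":
    edges = clique(range(4)) | clique(range(4, 8)) | {(3, 4)}
    nbrs = neighbours(edges, 8)
    for seeds in (4, 5, 6):  # nodes 0..seeds-1 start on A, the rest on B
        start = {i: "A" if i < seeds else "B" for i in range(8)}
        final = best_response_dynamics(nbrs, start)
        print(seeds, "".join(final[i] for i in range(8)))
```

Under these assumptions the B clique stays locked into B even though A pays better; a single A convert inside the B clique gets pulled back to B, but seeding two converts tips the whole graph to A.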

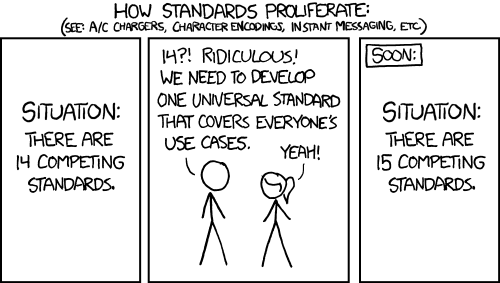

The conversation often feels logjammed once we have invoked Postel’s Law. Ever been in one of those conversations where there are several standards, someone suggests a unifying standard to integrate them, and then someone else posts that XKCD comic?

Well! Have I got a blog post for you! Acko uses a bit of (very light) type theory and pictures of ducks to provide a vocabulary for what standards ARE and how to imagine compatibility.
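Acko’s formalism is his own and I won’t try to reproduce it. As an independent, hedged illustration of the same duck-typing intuition: treat a message format as a record of named, typed fields, and call a writer compatible with a reader when it supplies every field the reader needs, with the same type. Extra fields are ignored. The schemas below are hypothetical.

```python
# Hypothetical illustration: a schema maps field names to Python types.
Schema = dict[str, type]


def is_compatible(writer: Schema, reader: Schema) -> bool:
    """True if every field the reader needs is supplied with the same type.

    Fields the writer adds beyond the reader's needs are ignored
    ("if it quacks like a duck, it's a duck").
    """
    return all(name in writer and writer[name] is ty
               for name, ty in reader.items())


V1: Schema = {"sender": str, "body": str}
V2: Schema = {"sender": str, "body": str, "encrypted": bool}  # adds a field

if __name__ == "__main__":
    print(is_compatible(V2, V1))  # new writer, old reader
    print(is_compatible(V1, V2))  # old writer, new reader
```

The asymmetry is the point: adding a field keeps old readers happy (the first check passes), while a reader that insists on the new field rejects every old writer (the second fails).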

Moxie Marlinspike observes some dynamics of centralised versus decentralised protocols when discussing the Signal messenger:

When someone recently asked me about federating an unrelated communication platform into the Signal network, I told them that I thought we’d be unlikely to ever federate with clients and servers we don’t control. Their retort was “that’s dumb, how far would the internet have gotten without interoperable protocols defined by 3rd parties?”

I thought about it. We got to the first production version of IP, and have been trying for the past 20 years to switch to a second production version of IP with limited success. We got to HTTP version 1.1 in 1997, and have been stuck there until now. Likewise, SMTP, IRC, DNS, XMPP, are all similarly frozen in time circa the late 1990s. To answer his question, that’s how far the internet got. It got to the late 90s.

That has taken us pretty far, but it’s undeniable that once you federate your protocol, it becomes very difficult to make changes. And right now, at the application level, things that stand still don’t fare very well in a world where the ecosystem is moving.

Indeed, cannibalising a federated application-layer protocol into a centralised service is almost a sure recipe for a successful consumer product today. It’s what Slack did with IRC, what Facebook did with email, and what WhatsApp has done with XMPP. In each case, the federated service is stuck in time, while the centralised service is able to iterate into the modern world and beyond.

So while it’s nice that I’m able to host my own email, that’s also the reason why my email isn’t end-to-end encrypted, and probably never will be. By contrast, WhatsApp was able to introduce end-to-end encryption to over a billion users with a single software update. So long as federation means stasis while centralisation means movement, federated protocols are going to have trouble existing in a software climate that demands movement as it does today.
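Marlinspike’s contrast can be made concrete with a little arithmetic, under an assumption of my own: an end-to-end feature is usable in a conversation only when both endpoints support it. If k of n independently run servers have upgraded, a uniformly random pair of distinct servers can use the feature with probability k(k-1)/(n(n-1)), roughly (k/n)² for large n. A centralised operator sets k = n in a single release.

```python
# Assumed model: a pairwise feature (e.g. end-to-end encryption) works only
# if both servers in a conversation have upgraded; pairs chosen uniformly.
from fractions import Fraction


def usable_fraction(upgraded: int, total: int) -> Fraction:
    """Probability that a random pair of distinct servers have both upgraded."""
    if total < 2 or not 0 <= upgraded <= total:
        raise ValueError("need total >= 2 and 0 <= upgraded <= total")
    return Fraction(upgraded * (upgraded - 1), total * (total - 1))


if __name__ == "__main__":
    # Federated: half the servers upgraded, yet only about a quarter of pairs benefit.
    print(usable_fraction(50, 100))
    # Centralised: one operator ships the update everywhere at once.
    print(usable_fraction(100, 100))
```

Here half the servers upgrading yields 49/198 of pairs, just under a quarter. Because the benefit scales roughly quadratically with adoption, partial uptake in a federation buys much less than its headline percentage suggests.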