Auditory features

descriptors, maps, representations for audio

2019-11-13 — 2021-01-14

Wherein Differentiable, Invertible, and Psychoacoustically Informed Audio Descriptors Are Surveyed, and the Prevalence of Noninvertible MFCCs Versus Raw-Audio Neural Features That Recover Mel-Like Bands Is Noted.

In machine listening and related tasks like audio analysis, we often want compact representations of audio signals that are not “raw”: something more useful than the simple record of the vibrations of the microphone, as given by the sound pressure time series. We might want a representation, for example, that tells us something about psychoacoustics, i.e. the parts of the sound that are relevant to a human listener. We might want to use these features to construct a musical metric which tells us something about perceptual similarity.

These representations are called features or descriptors. This is a huge industry: compact summary features, for example, make audio cheap to transmit (hello mobile telephony, MP3). But this trick is also useful for understanding speech, music, etc. There are as many descriptors as there are IEEE conference slots.

See, say, (Alías, Socoró, and Sevillano 2016) for an intimidatingly comprehensive summary.

I’m especially interested in

invertible ones, for analysis/resynthesis. If not analytically invertible, convexity would do.

differentiable ones, for leveraging artificial neural infrastructure for easy optimization.

ones that avoid the windowed DTFT (i.e. the short-time Fourier transform), because it sounds awful in the resynthesis phase and is lazy.

ones that can encode noisiness in the signal as well as harmonicity…?

1 Just ask someone

There are protocols that measure musical similarity by asking human listeners to rate similarity.

MUSHRA (ITU-R BS.1534) is a useful listening-test protocol, and there are software packages that implement it.

2 Deep neural network feature maps

See, e.g. Jordi Pons’ Spectrogram CNN discussion for some introductions to the kind of features a neural network might “discover” in audio recognition tasks.

There is some interesting work on that; for example, Dieleman and Schrauwen (2014) show that convolutional neural networks trained on raw audio (i.e. not spectrograms) for music classification recover Mel-like frequency bands. Thickstun et al. (Thickstun, Harchaoui, and Kakade 2017) do some similar work.

And Keunwoo Choi shows that you can listen to what they learn.

There is also csteinmetz1/auraloss, a collection of audio-focused loss functions in PyTorch.

3 Sparse comb filters

Differentiable! Conditionally invertible! Handy for syncing.

Moorer (1974) proposed these for harmonic purposes, but Robertson et al. (Robertson, Stark, and Plumbley 2011) have shown them to be handy for rhythm.
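To make the idea concrete, here is a minimal sketch of a bank of feedforward comb filters used as a period detector. This is a plain textbook comb bank, not the specific construction of either cited paper; the function names `comb_energy` and `comb_bank`, the unit gain and the impulse-train test signal are all my own assumptions for illustration.

```python
import numpy as np

def comb_energy(x, delay, gain=1.0):
    """Energy of the feedforward comb output y[n] = x[n] + gain * x[n - delay]."""
    x = np.asarray(x, dtype=float)
    y = x.copy()
    y[delay:] += gain * x[:-delay]   # add the delayed copy (delay must be >= 1)
    return float(np.sum(y ** 2))

def comb_bank(x, delays, gain=1.0):
    """Comb-filter output energy for each candidate delay (hypothetical helper)."""
    return np.array([comb_energy(x, d, gain) for d in delays])

# Toy signal: an impulse train with a period of 50 samples.
period = 50
x = np.zeros(1000)
x[::period] = 1.0

delays = np.arange(20, 121)
energies = comb_bank(x, delays)
best_delay = int(delays[np.argmax(energies)])  # delay whose comb reinforces the signal most
```

The output energy is a smooth function of the gain and of the input samples, which is what makes this kind of feature easy to drop into gradient-based optimisation; the delay itself is still a discrete parameter here.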

4 Autocorrelation features

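Measure the signal’s full or partial autocorrelation. This carries very nearly the same information as the power spectrum: by the Wiener-Khinchin theorem, the autocorrelation function and the power spectral density are a Fourier transform pair.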
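A quick numerical check of that relationship, as a sketch: compute the autocorrelation once by inverse-transforming the (unnormalised) power spectrum and once by direct correlation. The function names and the white-noise test signal are assumptions of mine.

```python
import numpy as np

def autocorr_fft(x):
    """Linear autocorrelation (lags 0..n-1) via the power spectrum."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    nfft = 2 * n                          # zero-pad so circular correlation equals linear
    spectrum = np.fft.rfft(x, nfft)
    power = np.abs(spectrum) ** 2         # unnormalised power spectrum
    return np.fft.irfft(power, nfft)[:n]  # inverse transform gives the autocorrelation

def autocorr_direct(x):
    """Linear autocorrelation (lags 0..n-1) by direct summation."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    return np.correlate(x, x, mode="full")[n - 1:]

rng = np.random.default_rng(0)
x = rng.standard_normal(256)
acf_fft = autocorr_fft(x)
acf_direct = autocorr_direct(x)
```

The two agree to floating-point precision, and the lag-0 value is just the signal energy.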

5 Power spectrum

Power spectral density.

6 Bispectrum

7 Linear Predictive coefficients

How do these transform? Fitting them as an all-pole or an all-zero model might be useful, but there are many maxima.
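For reference, a minimal sketch of the all-pole case using the classic autocorrelation method: solve the Toeplitz normal equations for the predictor coefficients. The function name `lpc`, the sign convention and the synthetic AR(2) test signal are assumptions for illustration; production code would normally use a Levinson-Durbin recursion instead of a dense solve.

```python
import numpy as np

def lpc(x, order):
    """Autocorrelation-method LPC.

    Returns a such that x[n] is approximated by sum_k a[k] * x[n - 1 - k].
    """
    x = np.asarray(x, dtype=float)
    n = len(x)
    # Autocorrelation at lags 0..order.
    r = np.array([np.dot(x[: n - k], x[k:]) for k in range(order + 1)])
    # Toeplitz matrix of autocorrelations, R[i, j] = r[|i - j|].
    R = np.array([[r[abs(i - j)] for j in range(order)] for i in range(order)])
    return np.linalg.solve(R, r[1 : order + 1])

# Synthetic all-pole signal: x[n] = 1.3 x[n-1] - 0.6 x[n-2] + noise.
rng = np.random.default_rng(0)
e = rng.standard_normal(20000)
x = np.zeros_like(e)
for n in range(2, len(x)):
    x[n] = 1.3 * x[n - 1] - 0.6 * x[n - 2] + e[n]

a = lpc(x, order=2)   # should land close to [1.3, -0.6]
```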

8 Scattering transform coefficients

See scattering transform.

9 Cepstra

Classic, but inconvenient to invert.
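A sketch of the real cepstrum, to show both what it is good for and why inversion is awkward. The names, the echo example and the `eps` guard are my assumptions. The real cepstrum keeps only the log magnitude of the spectrum, so the phase is gone; a signal and its time reversal get identical cepstra.

```python
import numpy as np

def real_cepstrum(x, eps=1e-12):
    """Inverse DFT of the log magnitude spectrum. Phase is discarded."""
    spectrum = np.fft.fft(np.asarray(x, dtype=float))
    return np.fft.ifft(np.log(np.abs(spectrum) + eps)).real

# White noise plus a single echo 100 samples later.
rng = np.random.default_rng(0)
n_samples, echo_delay, echo_gain = 8192, 100, 0.8
s = rng.standard_normal(n_samples)
x = s.copy()
x[echo_delay:] += echo_gain * s[:-echo_delay]

ceps = real_cepstrum(x)
# Skip the lowest quefrencies, which describe the broad spectral envelope.
start = 20
peak_quefrency = int(np.argmax(ceps[start : n_samples // 2])) + start

# Same magnitude spectrum, different signal: the cepstrum cannot tell them apart.
ceps_reversed = real_cepstrum(x[::-1])
```

The echo shows up as a peak at quefrency 100, which is the classic use case. Recovering the waveform from `ceps` would need an extra assumption about phase (minimum phase, say), or the complex cepstrum with its phase-unwrapping headaches.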

10 MFCC

Mel-frequency cepstral coefficients, or the Mel cepstral transform. Take the perfectly respectable-if-fiddly cepstrum and make it really messy, with a vague psychoacoustic model, in the hope that distinctions between the resulting “MFCCs” might correspond to distinctions in human perception.

Folk wisdom holds that MFCC features are Eurocentric, in that they destroy, or at least obscure, tonal language features. Ubiquitous, but inconsistently implemented; MFCCs are generally not the same across implementations, probably because the Mel scale is itself not universally standardized.
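To illustrate the standardisation problem, here is a sketch comparing two Hz-to-mel formulas that I believe are in common use: the logarithmic one usually attributed to HTK, and the piecewise linear/logarithmic one usually attributed to Slaney's Auditory Toolbox. I am quoting the constants from memory, so treat them as assumptions and check them against whichever library you actually use. The function names are mine.

```python
import numpy as np

def hz_to_mel_htk(f):
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz_htk(m):
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)

# Slaney-style constants (assumed): linear below 1 kHz, logarithmic above.
F_SP = 200.0 / 3.0            # Hz per mel in the linear region
MIN_LOG_HZ = 1000.0
MIN_LOG_MEL = MIN_LOG_HZ / F_SP
LOG_STEP = np.log(6.4) / 27.0

def hz_to_mel_slaney(f):
    f = np.asarray(f, dtype=float)
    log_part = MIN_LOG_MEL + np.log(np.maximum(f, MIN_LOG_HZ) / MIN_LOG_HZ) / LOG_STEP
    return np.where(f < MIN_LOG_HZ, f / F_SP, log_part)

def mel_to_hz_slaney(m):
    m = np.asarray(m, dtype=float)
    log_part = MIN_LOG_HZ * np.exp(LOG_STEP * (np.maximum(m, MIN_LOG_MEL) - MIN_LOG_MEL))
    return np.where(m < MIN_LOG_MEL, m * F_SP, log_part)

def band_centers(hz_to_mel, mel_to_hz, n_bands, f_max):
    """Centre frequencies (Hz) of n_bands bands equally spaced on the given mel scale."""
    mels = np.linspace(hz_to_mel(0.0), hz_to_mel(f_max), n_bands + 2)[1:-1]
    return mel_to_hz(mels)

centers_htk = band_centers(hz_to_mel_htk, mel_to_hz_htk, 10, 8000.0)
centers_slaney = band_centers(hz_to_mel_slaney, mel_to_hz_slaney, 10, 8000.0)
```

The two scales do not even share units (1 kHz is roughly 1000 mel in one and 15 in the other), and the band centres they imply differ by tens of Hz at the low end, before we get to choices about filter shape, normalisation, liftering or DCT variant.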

Aside from being loosely psychoacoustically motivated features, what do the coefficients of an MFCC specifically tell me?

Hmm. If I have got this right, these are “generic features”; things we can use in machine learning because we hope they project the spectrum into a space which approximately preserves psychoacoustic dissimilarity, whilst having little redundancy.

This heuristic pro is weighed against the practical con that they are not practically differentiable, nor invertible except by heroic computational effort, nor humanly interpretable, and they are riddled with poorly-supported, somewhat arbitrary steps. (The cepstrum of the Mel-frequency-spectrogram is a weird thing that no longer picks out harmonics in the way that God and Tukey intended.)
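For orientation, a minimal sketch of the usual recipe for one MFCC frame, with comments marking where information is thrown away. It assumes an HTK-style mel formula, triangular filters, a Hann window and an unnormalised DCT-II; real implementations differ on every one of those choices, and all the function names are hypothetical.

```python
import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)

def mel_filterbank(n_mels, n_fft, sample_rate):
    """Triangular filters whose edges are equally spaced on the mel scale."""
    fft_freqs = np.fft.rfftfreq(n_fft, d=1.0 / sample_rate)
    edges = mel_to_hz(np.linspace(0.0, hz_to_mel(sample_rate / 2), n_mels + 2))
    bank = np.zeros((n_mels, len(fft_freqs)))
    for i in range(n_mels):
        lo, mid, hi = edges[i], edges[i + 1], edges[i + 2]
        rising = (fft_freqs - lo) / (mid - lo)
        falling = (hi - fft_freqs) / (hi - mid)
        bank[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return bank

def dct_ii(n_out, n_in):
    """Unnormalised DCT-II matrix of shape (n_out, n_in)."""
    k = np.arange(n_out)[:, None]
    n = np.arange(n_in)[None, :]
    return np.cos(np.pi * k * (2 * n + 1) / (2 * n_in))

def mfcc_frame(frame, sample_rate, n_mels=26, n_mfcc=13, eps=1e-10):
    n_fft = len(frame)
    windowed = frame * np.hanning(n_fft)
    power = np.abs(np.fft.rfft(windowed)) ** 2                       # phase discarded
    mel_energy = mel_filterbank(n_mels, n_fft, sample_rate) @ power  # many bins -> few bands
    log_mel = np.log(mel_energy + eps)
    return dct_ii(n_mfcc, n_mels) @ log_mel                          # high-order terms dropped

sample_rate = 16000
t = np.arange(1024) / sample_rate
rng = np.random.default_rng(0)
frame = np.sin(2 * np.pi * 440.0 * t) + 0.1 * rng.standard_normal(1024)

coeffs = mfcc_frame(frame, sample_rate)
coeffs_louder = mfcc_frame(2.0 * frame, sample_rate)
```

One thing the coefficients do tell you: doubling the amplitude adds a constant to every log band energy, which only moves the zeroth coefficient. The higher coefficients describe the shape of the smoothed log spectrum, not its level. The three lossy steps marked in the comments are why getting back to audio takes the heroic effort.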

11 Filterbanks

Including bandpasses, Gammatones… Random filterbanks?

12 Dynamic dictionaries

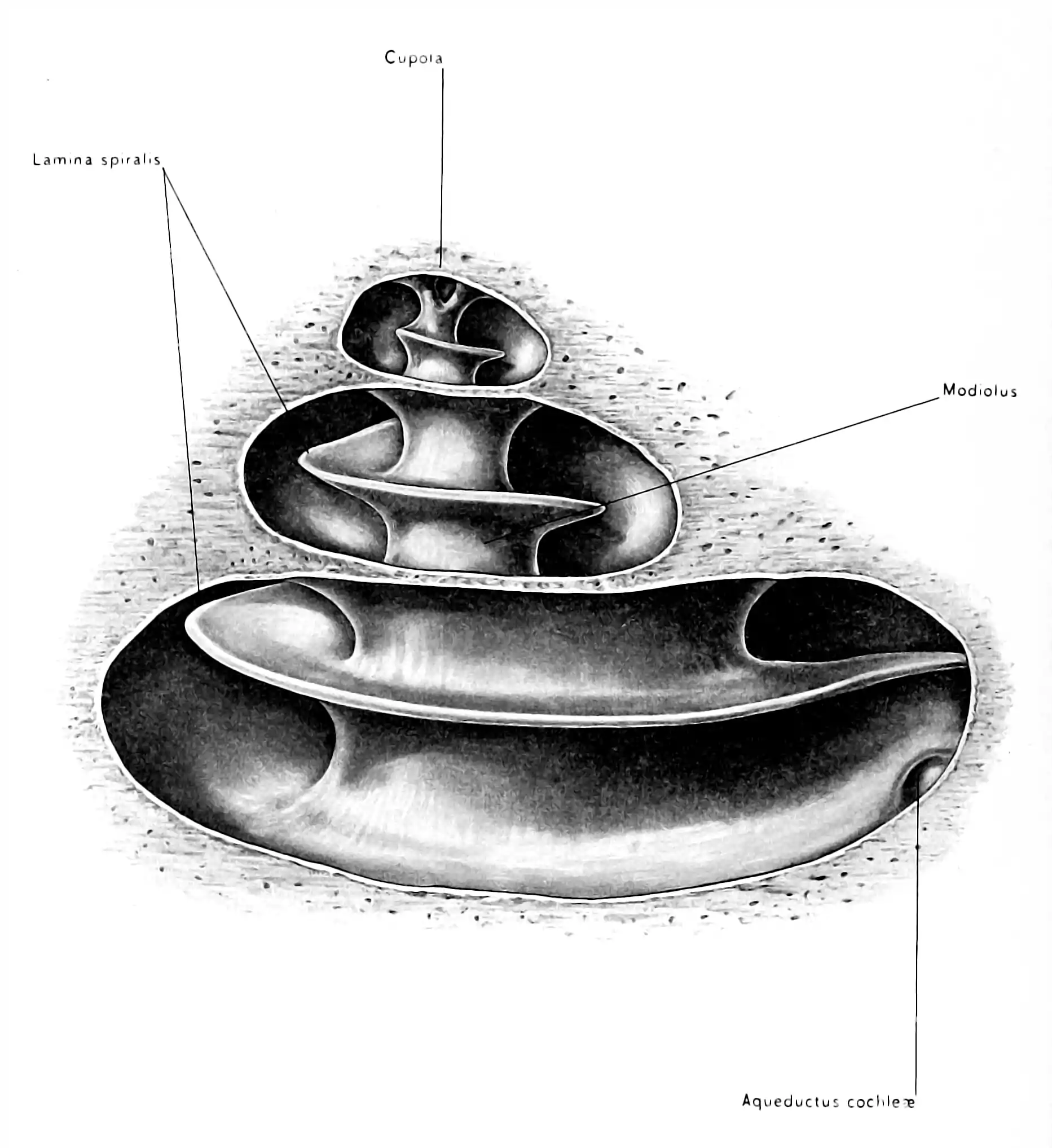

13 Cochlear activation models

Gah.

14 Units

ERBs, mels, sones, phons… There are various units designed to approximate the human perceptual process; I tried to document them under psychoacoustics.