Epistemic communities

Descriptive and normative

2021-08-24 — 2025-10-12

Wherein the workings of news media and scientific circles are examined, with attention paid to misinformation, Bayesian design approaches, and impacts on public shared reality.

I have nothing to say right now about epistemic communities in general. I do have some thoughts about one specialized epistemic community, the scientific community. I’d also like to think about journalism.

1 Bayesian epistemics

A very interesting inverse design approach: how should we design a system to optimize for truthfulness? See Bayesian epistemics.

2 Incoming

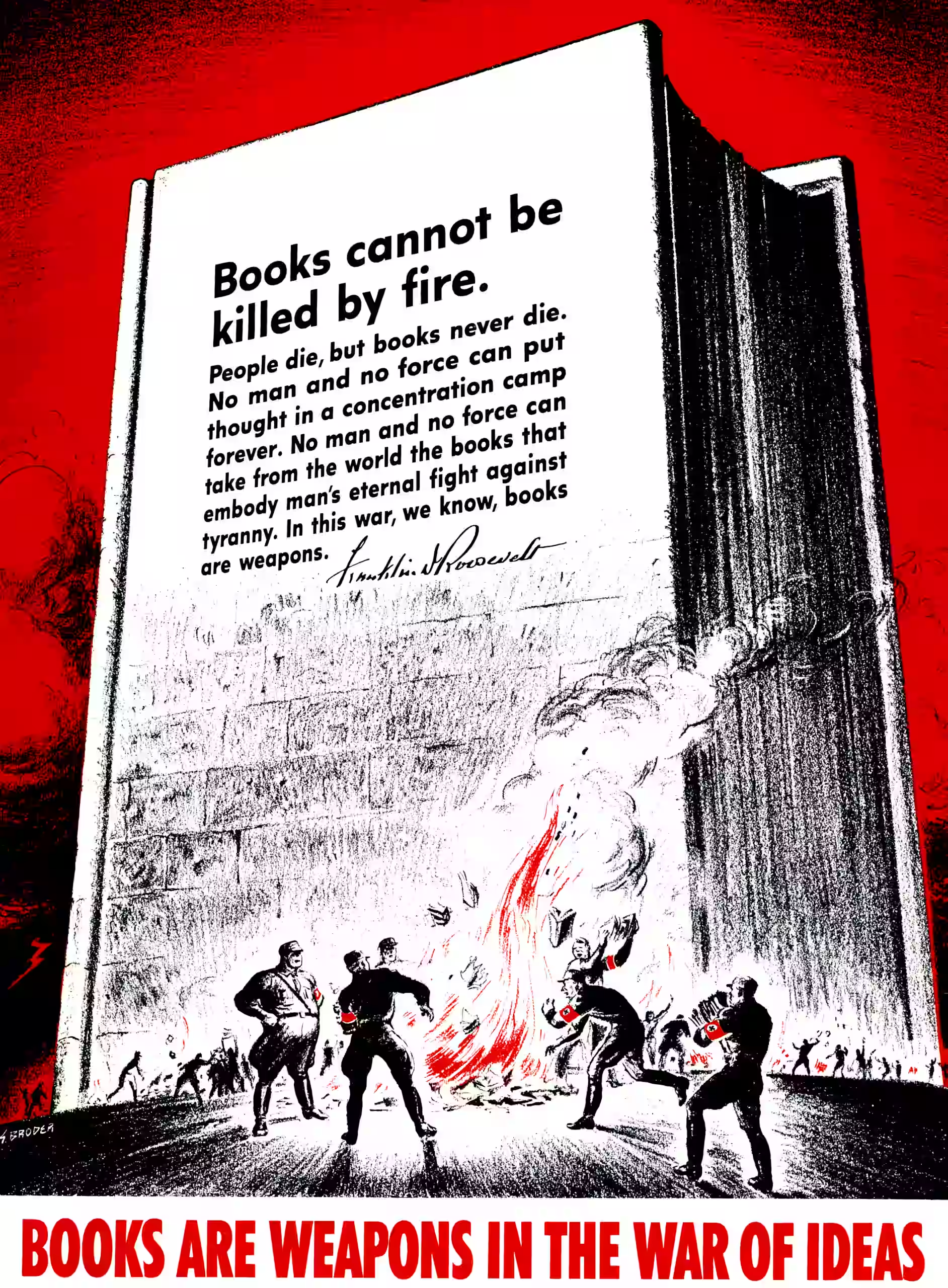

News media and the public’s shared reality. Fake news, indeterminate news, incomplete news, alternative facts, strategic inference, kompromat, agnotology, facebooking to a molecular level. Basic media literacy, and whether it helps. As seen in elections and provocateur Twitter bots.

AI Tools for Trust: Community Notes, Rhetoric Detection & More

Dan Williams, Can experts save the public from error?

Erik Hoel, The gossip trap

Gordon Brander, Thinking together, on egregores, Dunbar numbers and information-processing thresholds in Holocene social evolution, which all motivate:

- Noosphere, a protocol for thought

- source

- The explainer is poor and doesn’t even tell us how to start one of these noospheres or do anything in particular, which indicates a suspect development model

Why Quora isn’t useful anymore: A.I. came for the best site on the internet.

Samuel Butler:

The public buys its opinions as it buys its meat, or takes in its milk, on the principle that it is cheaper to do this than to keep a cow. So it is, but the milk is more likely to be watered.

Marisa Abrajano has a provocative list of research topics. I’d like to read her work to see her methodology.

Marisa Abrajano is professor of political science at the University of California, San Diego. She is also Provost of Earl Warren College. Her research interests focus on racial and ethnic inequalities in the political system, particularly with political participation, voting and campaigns, and the mass media. She is the author of five books. Her latest book, in collaboration with Nazita Lajevardi, explores the politics of misinformation amongst socially marginalized groups.