Variational message-passing algorithms in graphical models

Cleaving reality at the joint, then summing it at the marginal. Generalized Approximate Message Passing.

2014-11-25 — 2024-03-25

Wherein Variational Message-Passing Is Presented Under KL Divergence and Exponential-Family Assumptions, on Factor Graphs Assumed to Be Trees, and an Application to Latent Gaussian Process Models Is Sketched.

Assumed audience:

People who want to learn the tools of modern approximate graphical inference with the minimum of diversions

Even more wrong than normal this notebook is a mere scratch pad where I am sketching out an alternative explanation for myself and it is definitely not correct right now. It may at some future point become correct, but do not hold your breath. Right now I am copy-pasting and trying stuff on to see if I can find a quick path to success.

See also graph computations for a more general sense of “Message Passing,” and graph NNs for a related idea but with more magic neural dust sprinkled on it.

Variational message passing refers to inference wherein we can calculate some approximation to some marginal probability density by passing around “messages” that summarize (in some sense) some conditional probability updates between nodes on a graph. The nodes arise when the probability density factorizes over some graphical independence structure. If we can find such a cool factorisation, and an appropriate form for the messages, we might get cheap and/or distributed inference, for some values of cheap and distributed.

I am currently particularly interested in message passing for latent GP models where it generates Gaussian belief propagation.

The manner in which variational message passing is usually introduced I find confusing. We do not usually lead with the intuition. What are these damned messages? In this notebook I am trying to shuffle about the graphical models curriculum until I find a minimum viable set of concepts to explain to myself what I actually need to do in my own approximate variational inference practice when I fire up modern probabilistic programming tools.

This document is live and subject to revisions and definitely includes errors I have introduced recently in addition to the earlier errors I have not yet found.

For motivation as to why we would try to solve statistical questions by the passing of messages, see the specific example of belief propagation, which is the original setting for message passing, where it turns out there are some nice relations between graphs and integrals of factorised functions. It is worth learning once. Exact Belief Propagation cannot be implemented except in special cases, so you can likely forget it soon after. The key take-home is that some integrals arising in marginalising and conditioning random variables can be solved, at least conceptually, by passing messages around on the graph corresponding to the factorisation of the joint density. It is, IMO, worth not learning too much about because traditionally there are lots of other side-quests they send you at this point, all of which are useless practically, even if they are pedagogic.

There are two common ways to build up message passing ideas; one is to start from belief propagation and try to generalize it in various ways, worrying at each step how valid the extension is. Alternatively, we can present a specific VMP algorithm in its own right, which is pretty simple and always does something, even though sometimes it might produce nonsense. This approach is what a lot of application papers actually do. Later we can wonder under what circumstances it might do marginal inference, and what it might approximate when it does not. The latter approach is my preference for now, since it leads to hands-on intuition-building with easy implementation, and defers the (to my mind) horrible, grinding stage of theorem-proving to later on when we are already solving useful problems and the pay-off is better.

Advice from Tom P. Minka (2005) tells us we have too many choices when devising a message passing algorithm:

The recipe to make a message-passing algorithm has four steps:

- Pick an approximating family for q to be chosen from. For example, the set of fully-factorized distributions, the set of Gaussians, the set of k-component mixtures, etc.

- Pick a divergence measure to minimize. For example, mean-field methods minimize the Kullback-Leibler divergence \(KL(q \| p)\), expectation propagation minimizes \(KL(p \| q)\), and power EP minimizes α-divergence, \(D\alpha(p \| q)\).

- Construct an optimization algorithm for the chosen divergence measure and approximating family. Usually this is a fixed-point iteration obtained by setting the gradients to zero.

- Distribute the optimization across the network, by dividing the network p into factors, and minimising local divergence at each factor.

Take home message: there are a lot of pluggable parts in this family of algorithms. This can be confusing. I think it is easier to start from a concrete set of assumptions, derive a specific (ideally maximally simple) algorithm and then generalize outwards to more fiddly/complicated ones. Here I keep a somewhat concrete example in mind.

There are many variational message-passing algorithms, including Gaussian Belief Propagation, particle message passing, expectation propagation, gradient message passing, Stein variational gradient descent, kernel message passing (Song et al. 2011), Sigma-point belief propagation (Meyer, Hlinka, and Hlawatsch 2014) and probably other stuff I forgot.

1 It is conceptually easier to seek forgiveness for approximate inference than permission for exact inference

A classic way to introduce message-passing inference is to spend time wondering when we are permitted to do exact inference with local calculations (“exact belief propagation”), and then introduce ever more caveats and complications.

After this complicated machinery breaks down we give up, throw most of it out, and introduce a simpler approximation, i.e. variational message-passing (VMP), which can be applied to more diverse distributions and graph structures. There is slightly more complex set up in terms of distributions (what does it mean to variationally approximate a distribution?) but the machinery there is something we can use over and over again and is simpler for me at least than complicated graph algorithms.

2 Which graph?

We can pass messages on factor graphs or on DAGs or what-have-you, and the update rules are slightly different in each case. It comes out simpler on factor graphs (although John M. Winn and Bishop (2005) does a good job of being clear about the structure of the method on DAGs by analogy with expectation maximisation, which produces some lovely symmetries). Regardless, factor graphs are easiest and that is what we pursue here; they come out connecting closely to some free-energy results from physics, e.g. ELBO if we use KL divergences.

3 Which divergence?

Kullback-Leibler divergence is a great starting point. In combination with the other assumptions we have introduced, this makes everything come out nice.

Hold that thought.

4 Which random variates?

We will assume exponential family RVs, or specifically, that each factorized term in a factor is an exponential condition on the incoming message. For now we will, at least. There are cool generalisations.

5 What is mean-field?

I think it means that we choose a factorisation of the joint (typically posterior) density which is identical to the product of variable node marginals:

\[ q(\mathbf{Z})=\prod_i^M q_i\left(\mathbf{Z}_i\right) \]

It is remarkably hard to extract this detail assumption explicitly from the literature. Note that this factorisation pairs well with the KL-divergence, although they are separate design choices.

6 KL variational message-passing for exponential families on factor graphs

Putting this together, a reasonable choice for our basic starting algorithm is Variational message passing on factor graphs with respect to KL divergence (Tom P. Minka 2005; Wand 2017). I will also assume that all the graphs are tree graphs, which is to say, there are no loops. Later on, it will turn out that some things break if we have loops, but often not too badly and the badness can be quantified and controlled using various tools. We can take a short leap of faith where we seem to be doing arbitrary or weird things, then reassure ourselves that we are solving something like a real inference problem.

TODO: compare this to an exact algorithm like Gaussian BP, side by side.

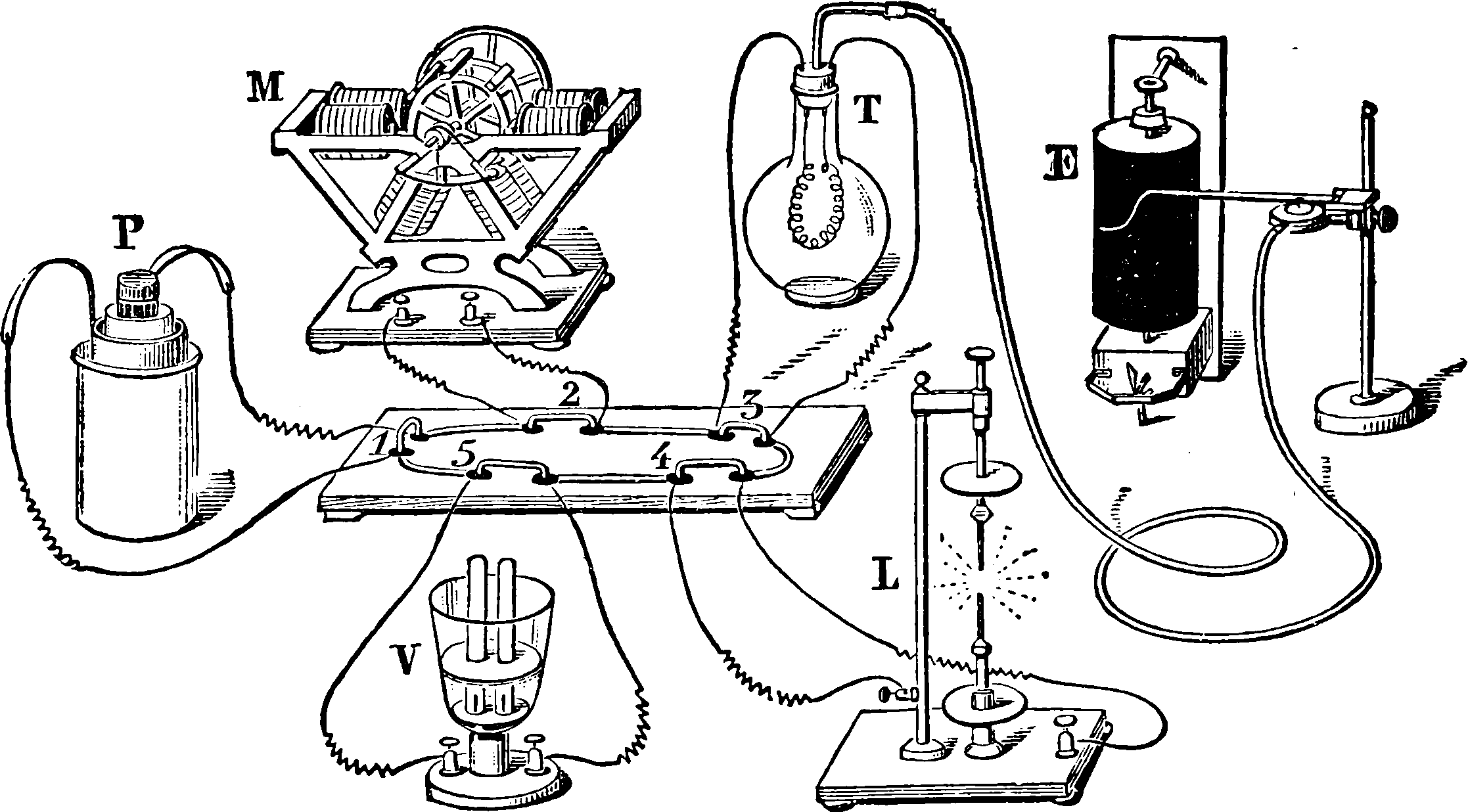

Let us now look at the factor graph from Wand (2017).

Consider a Bayesian statistical model with observed data \(\boldsymbol{D}\) and parameter vector \(\boldsymbol{\theta}\). In a factor graph we can write the joint density of these random nodes as \(p(\boldsymbol{\theta}, \boldsymbol{D})=\prod_{j=1}^{N} f_{j}\left(\boldsymbol{\theta}_{\text {neighbors }(j)}\right) .\) Our goal in inference is to calculate the posterior density function \(p(\boldsymbol{\theta} \mid \boldsymbol{D}).\)1

We do that with updates that look like this:

In the exact case Ortiz et al’s interpretation of these messages in the belief propagation setting makes sense:

Belief Update: The variable node beliefs are updated by taking a product of the incoming messages from all adjacent factors, each of which represents that factor’s belief on the receiving node’s variables.

Factor-to-variable message: To send a message to an adjacent variable node, a factor aggregates messages from all other adjacent variable nodes and marginalizes over all the other nodes’ variables to produce a message that expresses the factor’s belief over the receiving node’s variables.

Variable-to-factor message: A variable-to-factor message tells the factor what the belief of the variable would be if the receiving factor node did not exist. This is computed by taking the product of the messages the variable node has received from all other factor nodes.

We are working in an approximated setting, though, so this is more of a heuristic.

A fully-factorized approximation to the posterior density function \(p(\boldsymbol{\theta} \mid \boldsymbol{D})\) is \[ p(\boldsymbol{\theta} \mid \boldsymbol{D}) \approx q^{*}(\boldsymbol{\theta}) \] where $q^{*}() is the minimizer of the Kullback-Leibler divergence \(\int q(\boldsymbol{\theta}) \log \left\{\frac{q(\boldsymbol{\theta})}{p(\boldsymbol{\theta} \mid \boldsymbol{D})}\right\} d \boldsymbol{\theta}\). We suppress the dependence on data here because we are holding it fixed when we conduct a posterior update.

For each \(1 \leq i \leq M\) and \(1 \leq j \leq N\), the VMP stochastic node to factor message updates are \[ m_{\boldsymbol{\theta}_{i} \rightarrow f_{j}}\left(\boldsymbol{\theta}_{i}\right) \longleftarrow \propto \prod_{j^{\prime} \neq j: i \in \text { neighbours }\left(j^{\prime}\right)} m_{f_{j^{\prime}} \rightarrow \boldsymbol{\theta}_{i}}\left(\boldsymbol{\theta}_{i}\right) \] and the factor to stochastic node message updates are \[ m_{f_{j} \rightarrow \boldsymbol{\theta}_{i}}\left(\boldsymbol{\theta}_{i}\right) \longleftarrow \propto \exp \left[E_{f_{j} \rightarrow \boldsymbol{\theta}_{i}}\left\{\log f_{j}\left(\boldsymbol{\theta}_{\text {neighbours }(j)}\right)\right\}\right] \] where \(E_{f_{j} \rightarrow \boldsymbol{\theta}_{i}}\) denotes expectation with respect to the density function \[ \frac{\prod_{i^{\prime} \in \text {neighbours }(j) \backslash\{i\}} m_{f_{j} \rightarrow \boldsymbol{\theta}_{i^{\prime}}}\left(\boldsymbol{\theta}_{i^{\prime}}\right) m_{\boldsymbol{\theta}_{i^{\prime}} \rightarrow f_{j}}\left(\boldsymbol{\theta}_{i^{\prime}}\right)}{\prod_{i^{\prime} \in \text {neighbours }(j) \backslash\{i\}} \int m_{f_{j} \rightarrow \boldsymbol{\theta}_{i^{\prime}}}\left(\boldsymbol{\theta}_{i^{\prime}}\right) m_{\boldsymbol{\theta}_{i^{\prime}} \rightarrow f_{j}}\left(\boldsymbol{\theta}_{i^{\prime}}\right) d \boldsymbol{\theta}_{i^{\prime}}} \] That looks like we have made a ghastly mistake, because this latter message is horrible in general. It does simplify somewhat for conditionally-exponential families where we can exploit conditional conjugacy.

After passing messages around all the nodes in the graph until it seems to have converged, the Kullback-Leibler optimal \(q\)-densities are obtained via \[ q^{*}\left(\boldsymbol{\theta}_{i}\right) \propto \prod_{j: i \in \text {neighbours }(j)} m_{f_{j} \rightarrow \boldsymbol{\theta}_{i}}\left(\boldsymbol{\theta}_{i}\right). \]

6.1 What just happened?

What is this chaos?

Firstly, what is the message actually? It is a probability distribution, or, more generally a measure, over only some of the axes of the joint distribution.

Some texts describe the message as a vector. The fact that we can represent some target distribution, or more often some approximation to the probability distribution, as a finite-dimensional vector, is because some distributions can be represented by finite-dimensional parameter vectors (e.g. I can pack the means and variances of a multivariate Gaussian into a vector, which means that in Gaussian BP we are simply passing around Gaussian distributions).

This is the same object that turns up across statistics as a distribution-valued sufficient statistic: a message is a measure, and the finite-vector representation is the exponential-family exception, not the rule.

But what do we actually do with these messages? That depends.

What ends up being feasible most often is to introduce that lucky combination of assumptions (KL-divergence, fully-factorized, exponential-family) which causes the factors to decompose nicely such that the messages can reasonably be considered over each edge separately.

If those assumptions do not hold, inference can be hard. In that case, we can try various things. One option is to expand the model into a larger, more flexible one by adding auxiliary latent variables, semiparametric models (Wand 2017), or possibly other clever tricks. Thus, this idea is not as hopeless as it first seemed.

Another weird thing is that the relationship between nodes and observations is often assumed away. You can kind of get away with this if observations are i.i.d., or even exponential family i.i.d., but even then it is confusing to me because it relies on magic. These are important cases, but they are treated as if their relationship to the methodology at its most general was obvious. IMO it is not obvious, but the fact that it comes out so nicely in the special cases means that I feel like an idiot for not “getting” what problem I am solving generally.

tl;dr Part of the magic is that update rules for (conditionally) exponential variates under a mean field assumption and KL divergence are surprisingly simple.

Another part is that each observation in general introduces a new variable and a new factor, and we cannot always collapse them down using an i.i.d. assumption.

7 Factoring within nodes

🏗️

7.1 Fully-factored assumption

Here we assume that the density at each factor is well-approximated by a product of independent terms, one for each edge in the factor graph. (TODO: example equation).

Anyway, it is not always a terrible idea to make these assumptions, but nor is it the only way to do things, at least conceptually. In practice, it is much more effort if we cannot make this assumption.

Nonetheless, papers that do not make it explicit are very confusing. Why would we ever expect inference to look anything like that?2

AFAICT this is a problem of history. Not all variational inference must make the mean-field or fully-factored assumptions, but methods that relax these restrictions start to look different and need rather different technology to go, such as local Monte Carlo estimation. For a long while, this set of assumptions was the main game in town, (or even more onerous assumptions, like assuming everything is discrete and treating inference exactly) so tutorials of a certain vintage take mean-field variational inference as a synonym for variational inference. If I have just learnt some non-mean-field SVI methods from a recent NeurIPS paper before I encounter VMP, I might well be confused by this.

7.2 Structured mean field

Directly generalises mean field (Hoffman and Blei 2015). I am not sure how useful this is in practice.

7.3 Without mean field assumptions

What do relaxations of the mean-field assumption look like, and do they ever arise in message-passing models? Variational autoencoder does not use such assumptions in general, but they also do not use message passing inference. Re-imposing a graphical structure on VAE models is gruelling and AFAIK only done on a case-by-case basis. Perhaps there are some neural methods that do something else?

8 Conditional conjugacy/ exponential families

Under construction.

TODO: note that “natural parameter” form is extra nice here because it summarises the “right” updates.

There are several foundational texts to read about this (Attias 1999; Ghahramani and Beal 2001; John M. Winn and Bishop 2005).

Dustin Tran and David Blei mention the importance of the conditional conjugacy relations when we are working in (conditionally) exponential families.

Justin Domke summarises exponential families for graphical model usage.

9 Conditionally independent observations

TBD

10 Which free energy?

Helmholtz Free Energy is a classic flavour. On graphs, we are concerned with a related, more general (?) free energy called Bethe free energy (J. S. Yedidia, Freeman, and Weiss 2005; Jonathan S. Yedidia, Freeman, and Weiss 2000; M. J. Wainwright and Jordan 2008).

11 Deterministic nodes

TBD

12 In MCMC sampling

See Huang and Gelman (2005), Vehtari et al. (2019) and X. Wang and Dunson (2013).

13 Expectation propagation

14 Semiparametric

This is the main innovation I think, in the synthesis of Wand (2017).

15 Loopy belief propagation

OK, we have assumed tree graphs for everything thus far, but this is an onerous assumption. We can allow loopy graphs, which often works, sort-of. This choice does introduce extra approximations into the solution, but also allows us to handle more plausible systems within the same setup.

From Murphy, Weiss, and Jordan (1999) and analysed in Weiss and Freeman (2001); M. J. Wainwright and Jordan (2008);J. S. Yedidia, Freeman, and Weiss (2005). One day I might be interested in Bethe free energy too?

TBD.

16 Gaussian BP in particular

17 Phase transitions

Thomas Orton’s overview lecture connects message-passing with statistical mechanics of statistics.

Last week, we saw how certain computational problems like 3SAT exhibit a thresholding behavior, similar to a phase transition in a physical system. In this post, we’ll continue to look at this phenomenon by exploring a heuristic method, belief propagation (and the cavity method), which has been used to make hardness conjectures, and also has thresholding properties. In particular, we’ll start by looking at belief propagation for approximate inference on sparse graphs as a purely computational problem. After doing this, we’ll switch perspectives and see belief propagation motivated in terms of Gibbs free energy minimization for physical systems.

More possibly in Barbier (2015).

18 Gradient

19 Neural

TBD. See (Kuck et al. 2020; Satorras and Welling 2021).

20 Generalized approximate message passing (GAMP)

Credited by Oxvig, Arildsen, and Larsen (2017) to Rangan (2011).

21 Further reading

A good introduction is M. J. Wainwright and Jordan (2008), which does some deep stuff with setting up graphical models in exponential family context. Bishop (2006) provides extra application context and a different theoretical development. Dauwels (2007) explains why the factor graph setting is the simplest way to develop the message passing algorithms. I agree with him about this. It is simplest to learn message-passing inference for factor graphs and then translate to and from classic DAGs and MRFs as needed.

Dustin Tran and David Blei advocate Wand (2017) as a modern milestone in this field. Wingate and Weber (2013) connects us to modern stochastic VI.

Next, see the recommended texts about general graphical model theory in the graphical models notebook.

From a signal processing/coding perspective H.-A. Loeliger (2004) is interesting. Read this one if you know what a turbo code is.

Other overviews I have found useful for various reasons are Tom P. Minka (2005),J. S. Yedidia, Freeman, and Weiss (2003), Sutton and Minka (2006) and Cox, van de Laar, and de Vries (2019).

Recommended: Wee Sun Lee’s NeurIPS lecture about how to integrate neural function approximation into distributed inference: Message Passing In Machine Learning.

22 Tools

GAMP.

ForneyLab (Akbayrak, Bocharov, and de Vries 2021) looks especially useful for me:

The message passing paradigm offers a convenient method for leveraging model-specific structures, while remaining generally applicable. Message passing can be conveniently formulated on a Forney-style factor graph (FFG) representation of the model. Inference tasks on the model can then be decomposed in local computations, represented by messages that flow across the graph. This locality allows for storing pre-computed message updates in a look-up table that can be re-used across models. Automated algorithm construction then amounts to scheduling these messages in the order required by the inference task (see also this conference paper at JuliaCon).

ForneyLab (GitHub) is introduced in Cox, van de Laar, and de Vries (2019) as a novel Julia package that allows the user to specify a probabilistic model as an FFG and pose inference problems on this FFG. In return, ForneyLab automatically constructs a Julia program that executes a message passing-based (approximate) inference procedure. ForneyLab is designed with a focus on flexibility, extensibility and applicability to biologically plausible models for perception and decision making, such as the hierarchical Gaussian filter (HGF). With ForneyLab, the search for better models for perception and action can be accelerated

This idea has been baked into various other probabilistic programming frameworks by now, in that many of them seem to infer a reasonable message-passing structure for models.