What follows are some useful kernels to have in my toolkit, mostly over Euclidean space $\mathbb{R}^n$.

For these I have freely raided David Duvenaud’s crib notes, which became a thesis chapter (D. Duvenaud 2014), as well as Wikipedia, Abrahamsen (1997) and Genton (2001).

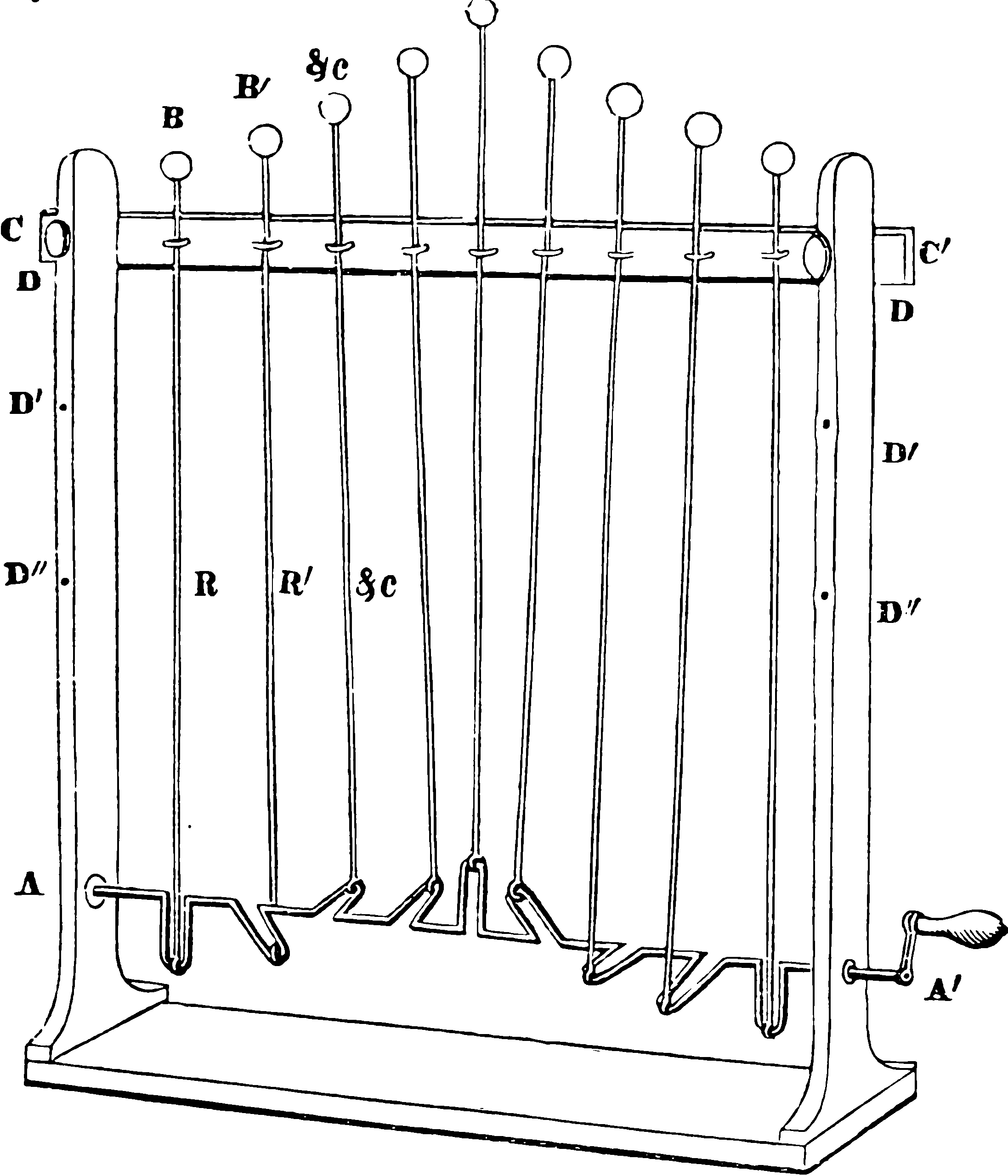

TODO: kernel set diagram.

1 Stationary

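A popular assumption, more or less implying that no region of the process is special. In this case, the kernel may be written as a function purely of the displacement between the two inputs, and we may overload notation to write $K(s, t) = K(s - t)$. If it depends only on the distance $\|s - t\|$, the kernel is also isotropic.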

A general stationary kernel is the Hida-Matérn kernel (Dowling, Sokół, and Park 2021).
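To make the stationarity assumption concrete, here is a minimal sketch that checks shift invariance numerically. The helper name `is_shift_invariant` and the two example kernels are my own illustrative choices, not anything from the references.

```python
import math


def se_kernel(s, t, ell=1.0):
    """Squared exponential kernel on the real line, used only as a stationary example."""
    return math.exp(-((s - t) ** 2) / (2.0 * ell ** 2))


def is_shift_invariant(kernel, points, shift, tol=1e-12):
    """Check K(s + h, t + h) == K(s, t) (up to tol) for every pair in `points`."""
    return all(
        abs(kernel(s + shift, t + shift) - kernel(s, t)) <= tol
        for s in points
        for t in points
    )


points = [-1.5, 0.0, 0.3, 2.0]
# A kernel that depends only on s - t passes the check.
print(is_shift_invariant(se_kernel, points, shift=7.25))
# The linear kernel s * t depends on where we are, so it fails.
print(is_shift_invariant(lambda s, t: s * t, points, shift=7.25))
```

A finite check like this can only refute stationarity, never prove it, but it is a handy sanity test when composing kernels.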

2 Dot-product

The kernel is a function of the inner product/dot product of the input coordinates, and we may overload notation to write $K(s, t) = K(s \cdot t)$.

Such kernels are rotation invariant but not stationary. Instead of the Fourier relationships that stationary kernels have, these can be described in Legendre bases for radial functions. Smola, Óvári, and Williamson (2000) manufacture some theorems which tell you whether your chosen function of the inner product does in fact define a valid (positive-definite) kernel. Unfortunately, the basis so defined is long and ugly and not especially tractable to work with, except maybe by computational algebra systems.
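As a quick illustration of "rotation invariant but not stationary", here is a sketch using the polynomial kernel $(c + s \cdot t)^p$, which is one familiar dot-product kernel. The choice of kernel and the helper names are assumptions for the sake of the example.

```python
import math


def dot(s, t):
    """Euclidean inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(s, t))


def poly_kernel(s, t, degree=2, c=1.0):
    """Polynomial kernel (c + s.t)^degree: a function of the inner product only."""
    return (c + dot(s, t)) ** degree


def rotate(v, angle):
    """Rotate a 2-D vector about the origin by `angle` radians."""
    x, y = v
    ca, sa = math.cos(angle), math.sin(angle)
    return (ca * x - sa * y, sa * x + ca * y)


def translate(v, shift):
    """Shift a vector by `shift`."""
    return tuple(a + b for a, b in zip(v, shift))


s, t = (1.0, 2.0), (-0.5, 0.25)
base = poly_kernel(s, t)
rotated = poly_kernel(rotate(s, 0.7), rotate(t, 0.7))
shifted = poly_kernel(translate(s, (3.0, 3.0)), translate(t, (3.0, 3.0)))

print(abs(base - rotated) < 1e-12)  # a common rotation leaves the kernel unchanged
print(abs(base - shifted) > 1e-6)   # a common translation does not
```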

3 NN kernels

Infinite-width random NN kernels are nearly dot-product kernels. They depend on several dot products, $K(s, t) = K(s \cdot t, s \cdot s, t \cdot t)$.
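One concrete instance, which is not taken from the references in this notebook: the order-1 arc-cosine kernel of Cho and Saul (2009), which arises (up to scaling) from an infinitely wide single hidden layer of ReLU units with Gaussian weights. The sketch below assumes nonzero inputs and shows that the kernel is computed from $s \cdot t$, $s \cdot s$ and $t \cdot t$ alone.

```python
import math


def dot(s, t):
    """Euclidean inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(s, t))


def arccos_kernel_order1(s, t):
    """Order-1 arc-cosine kernel; assumes both inputs are nonzero vectors."""
    ss, tt, st = dot(s, s), dot(t, t), dot(s, t)
    norm_prod = math.sqrt(ss * tt)
    # Clamp to [-1, 1] to guard against rounding error before acos.
    cos_theta = max(-1.0, min(1.0, st / norm_prod))
    theta = math.acos(cos_theta)
    return norm_prod * (math.sin(theta) + (math.pi - theta) * cos_theta) / math.pi


# On the diagonal the kernel reduces to the squared norm s.s.
print(arccos_kernel_order1((3.0, 4.0), (3.0, 4.0)))
# For orthogonal inputs only the norms matter.
print(arccos_kernel_order1((1.0, 0.0), (0.0, 2.0)))
```

Because the norms enter separately from the inner product, the kernel is rotation invariant but is not a function of $s \cdot t$ alone.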

4 Causal kernels

Time-indexed processes are more general than a standard Wiener process. 🏗

What constraints make a covariance kernel causal? This is not always easily expressed in terms of the covariance kernel; you want something like the inverse covariance/precision.

4.1 Wiener process kernel

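This is the covariance kernel of a standard Wiener process, i.e. a process with independent Gaussian increments, which induces a particular kind of dependence between time points. It is over a boring index space, time $t \geq 0$:

$$K(s, t) = \min(s, t) = s \wedge t.$$

Here $s \wedge t$ denotes the minimum of $s$ and $t$.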
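A small sketch of what this kernel implies, assuming the standard form $K(s, t) = \min(s, t)$ for $s, t \geq 0$. The helper `increment_cov` is a hypothetical name; it expands the covariance of two increments by bilinearity.

```python
def wiener_kernel(s, t):
    """Covariance of a standard Wiener process at times s, t >= 0: min(s, t)."""
    if s < 0 or t < 0:
        raise ValueError("times must be non-negative")
    return min(s, t)


def increment_cov(kernel, a, b, c, d):
    """Cov(W_b - W_a, W_d - W_c), expanded by bilinearity of covariance."""
    return kernel(b, d) - kernel(b, c) - kernel(a, d) + kernel(a, c)


# The variance of a single increment is its duration: here 2.0 - 0.5.
print(increment_cov(wiener_kernel, 0.5, 2.0, 0.5, 2.0))
# Increments over the disjoint intervals [0.5, 1] and [1, 2] are uncorrelated.
print(increment_cov(wiener_kernel, 0.5, 1.0, 1.0, 2.0))
```

Since the process is Gaussian, uncorrelated increments are independent, which is the Markov-flavoured structure the kernel encodes.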

5 Squared exponential

A.k.a. exponentiated quadratic. “Radial basis function” often means this kernel too, although not always.

The classic default:

$$k_{\mathrm{SE}}(x, x') = \sigma^2 \exp\left(-\frac{(x - x')^2}{2\ell^2}\right).$$

It is analytically convenient because it is proportional to the Gaussian density and therefore cancels out with it at opportune times.
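A minimal sketch for scalar inputs, assuming the usual parameterisation with an output scale `sigma` and a lengthscale `ell` (both names are my choice). It also checks the "proportional to the Gaussian density" claim numerically: the ratio of kernel to density should be the constant $\sigma^2 \ell \sqrt{2\pi}$.

```python
import math


def se_kernel(x, y, sigma=1.0, ell=1.0):
    """k(x, y) = sigma^2 * exp(-(x - y)^2 / (2 * ell^2)) for scalar inputs."""
    return sigma ** 2 * math.exp(-((x - y) ** 2) / (2.0 * ell ** 2))


def gaussian_pdf(x, mean, std):
    """Density of a univariate Gaussian with the given mean and standard deviation."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


x, y, sigma, ell = 0.4, -1.1, 1.5, 0.8
ratio = se_kernel(x, y, sigma, ell) / gaussian_pdf(x, y, ell)
constant = sigma ** 2 * ell * math.sqrt(2.0 * math.pi)
print(abs(ratio - constant) < 1e-9)  # the ratio does not depend on x or y
```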

6 Rational Quadratic

Duvenaud reckons this is everywhere but TBH I have not seen it. Included for completeness.

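In Duvenaud’s parameterisation,

$$k_{\mathrm{RQ}}(x, x') = \sigma^2 \left(1 + \frac{(x - x')^2}{2\alpha\ell^2}\right)^{-\alpha}.$$

Note that in the limit $\alpha \to \infty$ this recovers the squared exponential kernel with the same $\sigma$ and $\ell$.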
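The limiting behaviour is easy to see numerically. This sketch assumes the parameterisation from Duvenaud’s notes, $\sigma^2 (1 + (x - x')^2 / (2\alpha\ell^2))^{-\alpha}$, and compares it against the squared exponential kernel with the same `sigma` and `ell` as `alpha` grows.

```python
import math


def rq_kernel(x, y, sigma=1.0, ell=1.0, alpha=1.0):
    """Rational quadratic kernel for scalar inputs."""
    r2 = (x - y) ** 2
    return sigma ** 2 * (1.0 + r2 / (2.0 * alpha * ell ** 2)) ** (-alpha)


def se_kernel(x, y, sigma=1.0, ell=1.0):
    """Squared exponential kernel for scalar inputs."""
    return sigma ** 2 * math.exp(-((x - y) ** 2) / (2.0 * ell ** 2))


x, y = 0.0, 1.5
alphas = [1.0, 10.0, 100.0, 1e6]
gaps = [abs(rq_kernel(x, y, alpha=a) - se_kernel(x, y)) for a in alphas]
for a, gap in zip(alphas, gaps):
    # The gap to the squared exponential shrinks as alpha increases.
    print(a, gap)
```

For small `alpha` the kernel has heavier tails than the squared exponential, which is the usual reason to reach for it.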

7 Matérn

The Matérn stationary (and in the Euclidean case, isotropic) covariance function is a surprisingly convenient model for covariance. See Carl Edward Rasmussen’s Gaussian Process lecture notes for a readable explanation, or chapter 4 of his textbook (Rasmussen and Williams 2006).

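In the usual parameterisation, for two points a distance $d$ apart,

$$K_{\nu}(d) = \sigma^2 \frac{2^{1-\nu}}{\Gamma(\nu)} \left(\sqrt{2\nu}\,\frac{d}{\rho}\right)^{\nu} \mathcal{K}_{\nu}\left(\sqrt{2\nu}\,\frac{d}{\rho}\right),$$

where $\Gamma$ is the gamma function, $\mathcal{K}_{\nu}$ is the modified Bessel function of the second kind, and $\rho, \nu > 0$ are the lengthscale and smoothness parameters.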
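For half-integer $\nu$ the Bessel function collapses to a polynomial times an exponential, which is why $\nu \in \{1/2, 3/2, 5/2\}$ are the values one usually sees in practice. A minimal sketch of those three closed forms, assuming the parameterisation with output scale `sigma` and lengthscale `rho` (the general case would need a Bessel function from a numerical library and is not attempted here):

```python
import math


def matern(d, nu, sigma=1.0, rho=1.0):
    """Matern covariance at distance d >= 0, for nu in {0.5, 1.5, 2.5} only."""
    if d < 0:
        raise ValueError("distance must be non-negative")
    z = math.sqrt(2.0 * nu) * d / rho
    if nu == 0.5:
        poly = 1.0                      # exponential (Ornstein-Uhlenbeck) kernel
    elif nu == 1.5:
        poly = 1.0 + z
    elif nu == 2.5:
        poly = 1.0 + z + z * z / 3.0
    else:
        raise NotImplementedError("only nu in {0.5, 1.5, 2.5} is sketched here")
    return sigma ** 2 * poly * math.exp(-z)


for nu in (0.5, 1.5, 2.5):
    # All three equal sigma^2 at d = 0 and decay with distance.
    print(nu, matern(0.0, nu), matern(1.0, nu))
```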

8 Periodic
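A sketch of the periodic kernel in the form given in Duvenaud’s notes, $k_{\mathrm{Per}}(x, x') = \sigma^2 \exp\left(-2\sin^2(\pi |x - x'| / p) / \ell^2\right)$, for scalar inputs. The argument names `sigma`, `ell` and `period` are my own.

```python
import math


def periodic_kernel(x, y, sigma=1.0, ell=1.0, period=1.0):
    """Periodic kernel: sigma^2 * exp(-2 sin^2(pi |x - y| / period) / ell^2)."""
    s = math.sin(math.pi * abs(x - y) / period)
    return sigma ** 2 * math.exp(-2.0 * s * s / ell ** 2)


# Points a whole number of periods apart are (numerically) perfectly correlated.
print(periodic_kernel(0.2, 0.2 + 3 * 2.0, period=2.0))
# Points half a period apart are as decorrelated as this kernel allows.
print(periodic_kernel(0.0, 1.0, period=2.0))
```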

9 Locally periodic

This is an example of a composed kernel.

This is *a* locally periodic kernel; obviously there are other possible localisations of a periodic kernel. NB it is not local in the sense of Genton’s local stationarity, just local in the sense that one kernel is ‘enveloped’ by another.
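A sketch of one such composition: a periodic kernel multiplied by a squared exponential envelope, as in Duvenaud’s notes. Giving the two factors separate lengthscales (`ell_per`, `ell_se`) is my own assumption; setting them equal recovers the shared-lengthscale version.

```python
import math


def locally_periodic_kernel(x, y, sigma=1.0, period=1.0, ell_per=1.0, ell_se=1.0):
    """Product of a periodic kernel and a squared exponential envelope."""
    r = abs(x - y)
    s = math.sin(math.pi * r / period)
    periodic_part = math.exp(-2.0 * s * s / ell_per ** 2)
    envelope = math.exp(-(r ** 2) / (2.0 * ell_se ** 2))
    return sigma ** 2 * periodic_part * envelope


# One full period apart, the periodic factor is 1 and only the envelope remains.
print(locally_periodic_kernel(0.0, 1.0, period=1.0, ell_se=2.0))
# Many periods apart, the envelope has killed almost all correlation.
print(locally_periodic_kernel(0.0, 10.0, period=1.0, ell_se=2.0))
```

The product of two positive-definite kernels is positive definite, so no further checking is needed for validity.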

10 “Integral” kernel

I just noticed the ambiguously named Integral kernel:

I’ve called the kernel the ‘integral kernel’ as we use it when we know observations of the integrals of a function, and want to estimate the function itself.

Examples include:

- Knowing how far a robot has travelled after 2, 4, 6 and 8 seconds, but wanting an estimate of its speed after 5 seconds…

- Wanting to know an estimate of the density of people aged 23, when we only have the total count for binned age ranges…

I argue that all kernels are naturally defined in terms of integrals, but the author seems to mean something particular. I suspect I would call this a sampling kernel, but that name is also overloaded. Anyway, what is actually going on here? Where is it introduced? Possibly one of (Smith, Alvarez, and Lawrence 2018; O’Callaghan and Ramos 2011; Murray-Smith and Pearlmutter 2005).

11 Composed kernels

See composing kernels.

12 Stationary spectral kernels

Sun et al. (2018), Bochner (1959), Kom Samo and Roberts (2015) and Yaglom (1987b) construct spectral kernels, in the sense that they use the spectral representation to design the kernel and guarantee it is positive definite and stationary. You could think of this as a kind of limiting case of composing kernels with a Fourier basis. See Bochner’s theorem.
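The simplest version of this construction uses a discrete spectrum: put non-negative masses $w_i$ at frequencies $\pm\omega_i$ and take the Fourier transform, giving $K(\tau) = \sum_i w_i \cos(\omega_i \tau)$. The sketch below is my own toy example of that idea, not the method of any of the cited papers; it spot-checks positive semi-definiteness by evaluating random quadratic forms.

```python
import math
import random


def spectral_kernel(tau, freqs, weights):
    """K(tau) = sum_i w_i cos(omega_i * tau) for non-negative spectral masses w_i."""
    if any(w < 0 for w in weights):
        raise ValueError("spectral weights must be non-negative")
    return sum(w * math.cos(om * tau) for om, w in zip(freqs, weights))


def quadratic_form(kernel, points, coeffs):
    """Evaluate sum_ij a_i a_j K(s_i - s_j), which must be >= 0 for a valid kernel."""
    return sum(
        a * b * kernel(s - t)
        for a, s in zip(coeffs, points)
        for b, t in zip(coeffs, points)
    )


freqs, weights = [0.5, 2.0, 5.0], [1.0, 0.5, 0.25]
k = lambda tau: spectral_kernel(tau, freqs, weights)

rng = random.Random(0)
points = [rng.uniform(-3.0, 3.0) for _ in range(8)]
forms = [
    quadratic_form(k, points, [rng.gauss(0.0, 1.0) for _ in points])
    for _ in range(20)
]
print(min(forms) >= -1e-9)  # non-negative up to rounding error
```

Replacing the discrete masses with a density gives the continuous case; a Gaussian spectral density gives back the squared exponential kernel.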

13 Nonstationary spectral kernels

Sun et al. (2018), Remes, Heinonen, and Kaski (2017) and Kom Samo and Roberts (2015) use a generalised Bochner theorem (Yaglom 1987b), often called Yaglom’s theorem, which does not presume stationarity. See Yaglom’s theorem.

It is not immediately clear how to use this; spectral representations are not an intuitive way of constructing things.

14 Compactly supported

We usually think about compactly supported kernels in the stationary isotropic case, where we mean kernels that vanish whenever the distance between two observations $s, t$ exceeds some cut-off distance $L$, i.e. $\|s - t\| > L \Rightarrow K(s, t) = 0$.

Genton (2001) mentions

For inner product kernels, this can be diabolical. The Schaback and Wu method discusses some operations that preserve positive-definiteness.

NB if you are trying specifically to enforce sparsity here, it might be worth considering the kernel induced by a stochastic convolution, which is kind of a precision parameterisation.
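To make the compact-support idea concrete, here is a sketch using the triangular kernel $\max(0, 1 - |s - t| / L)$ on the real line, which is a valid kernel in one dimension. It is my own choice of example, not necessarily the one Genton discusses, and it should not be assumed valid in higher dimensions. The point is that the Gram matrix acquires exact zeros.

```python
import random


def triangular_kernel(s, t, cutoff=1.0):
    """Compactly supported kernel on the real line: zero once |s - t| >= cutoff."""
    return max(0.0, 1.0 - abs(s - t) / cutoff)


points = [0.0, 0.4, 0.8, 1.6, 3.0]
K = [[triangular_kernel(s, t) for t in points] for s in points]

# Count exact zeros: these are the entries a sparse solver could skip.
n_zero = sum(1 for row in K for v in row if v == 0.0)
print(n_zero, "of", len(points) ** 2, "entries are exactly zero")

# Spot-check positive semi-definiteness with random quadratic forms.
rng = random.Random(1)
forms = []
for _ in range(20):
    a = [rng.gauss(0.0, 1.0) for _ in points]
    forms.append(sum(a[i] * a[j] * K[i][j] for i in range(5) for j in range(5)))
print(min(forms) >= -1e-12)
```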

15 Markov kernels

How can we know from inspecting a kernel whether it implies an independence structure of some kind? The Wiener process and causal kernels clearly imply certain independences. Any kernel

16 Genton kernels

That’s my name for them because they seem to originate in (Genton 2001).

For any non-negative function

17 Kernels with desired symmetry

(D. Duvenaud 2014, chap. 2) summarises Ginsbourger et al.’s work on kernels with desired symmetries / invariances. 🏗 This produces, for example, the periodic kernel above, but also such cute tricks as priors over Möbius strips.

18 Stationary reducible kernels

See kernel warping.