Social psychology

Which of those NPR-friendly studies actually replicated?

2017-02-20 — 2022-01-13

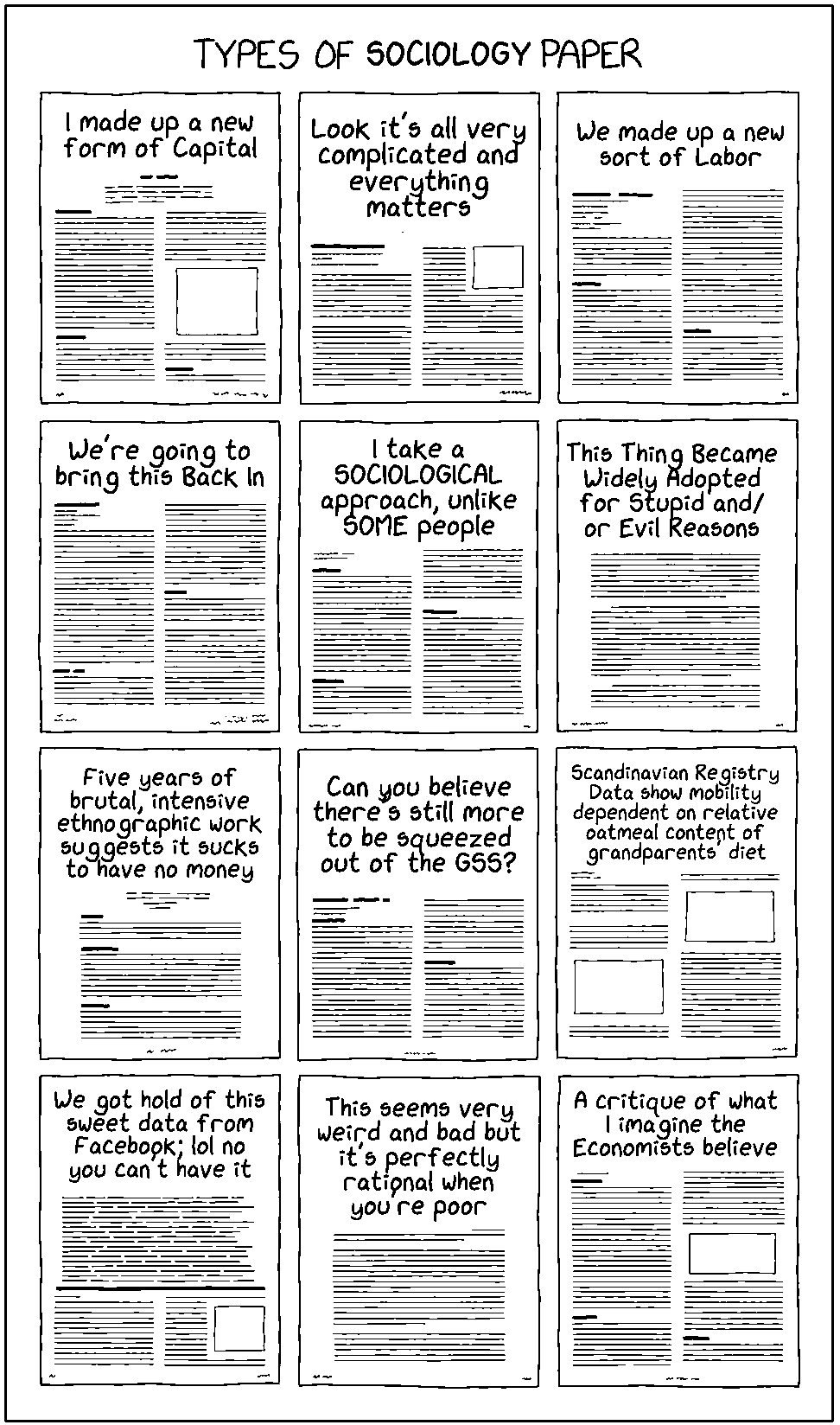

Wherein the Limits of Social Psychology Are Surveyed and the Replication Crisis and Priming Effects Are Scrutinized, While the Rise of Data‑mining Elites Is Noted as Reshaping Societal Models.

I’m no social psychologist, but I keep this notebook page around because I want a convenient place to note which of the dinner-table-conversation-starter factoids that people keep telling me have proven to be reproducible.

This ends up being mostly about bad research methodology, so I started a new notebook of results about people and social cognition called social brain.

I assert that the inaccessibility of good models of society to you and me, combined with the increasing availability of such models to large data-mining organisations, will increasingly squeeze out democratic agency, whatever that was supposed to be, and make society into the passive object of entrenched power, with everyone except the elites sealed in protective epistemic communities. Although vast global conspiracies have not existed in history, we have become the midwives of the world which will enable them.

Anyway, let’s look at how we look at the world without that.

- A class divided is the documentary about the blue eyes/brown eyes experiment.

- Add some links about ethnic bias in perception etc.

1 Replication crisis

Does making someone hold a warm drink make them feel warmly toward you? Does mentioning money make them greedy?

Lots of these results have imploded in the so-called “replication crisis,” which interests me because it bears on the hypotheses I care about in social media weaponisation.

All of them, the good factoids and the bad, turn out to be tedious topics of conversation (“I heard on This American Life that…”), and I would like to have the excuse to shut down at least the erroneous ones expeditiously so I can get at the canapés, without having to fight through explainerist pop psychology.

See an interesting timeline and recap in Andrew Gelman’s short essay, The winds have changed.

A conversation on causal identification, publication bias and pressing research on urgent social questions done badly: The 2019 project: How false beliefs in statistical differences still live in social science and journalism today:

You don’t need to understand interaction effects to see the problem here; you just need to stop drinking the causal-identification Kool-Aid (the attitude by which any statistically significant difference is considered to represent some true population effect, as long as it is associated with a randomized treatment assignment, instrumental variable analysis, or regression discontinuity).

He pulls out the following comment:

What I find really disturbing about both the study and the way it was used by the NYT is that they support the claim that medical people in some general sense believe that physical racial differences make Blacks less susceptible to pain. We are seeing that a lot these days, “white people,” “the culture,” etc. are racist.

Racism is an immense social problem. To characterise it—and misrepresent research accordingly—as a generic, undifferentiated force is really letting true racists off the hook. I have no doubt there are some racist doctors. It would be useful to have a sense of how many there are and to what extent it influences their treatment. That would mean doing research designed around capturing differences across medical people. And that would take us back to a longstanding issue, the fixation on finding average effects when the structure of effect differences is what we ought to be interested in.

For a methodological explanation of what is going wrong here, see OMFG Exogenous Variation! Or, Can You Find Good Nails When You Find an Indonesian Politics Hammer, and Cosma Shalizi’s Review of Ashworth, Berry, and Bueno de Mesquita, Theory and Credibility.

To return to that comment though, this bit

the fixation on finding average effects when the structure of effect differences is what we ought to be interested in.

touches on something deep. We can design experiments to estimate the structure of effect differences, but deciding post hoc which interaction to single out in a structural model is bad methodology: search enough candidate interactions and one of them will look significant by chance.
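To make the post hoc problem concrete, here is a minimal simulation sketch. Everything in it is an assumption of mine for illustration and comes from none of the studies discussed above: the sample size, the 20 candidate covariates, the 1.96 cutoff and the function names `interaction_z` and `false_positive_rates` are all made up. The outcome is pure noise, so every "significant" treatment-by-covariate interaction is a false positive. We compare testing one interaction chosen in advance with picking the best of many after the fact.

```python
import math
import random


def interaction_z(y, t, c):
    """z-statistic for a treatment-by-covariate interaction.

    Assumes the outcome variance is known to be 1, which holds in this toy simulation.
    """
    sums = {(a, b): 0.0 for a in (0, 1) for b in (0, 1)}
    counts = {(a, b): 0 for a in (0, 1) for b in (0, 1)}
    for yi, ti, ci in zip(y, t, c):
        sums[(ti, ci)] += yi
        counts[(ti, ci)] += 1
    if min(counts.values()) == 0:
        return 0.0  # an empty cell means the interaction cannot be estimated
    mean = {k: sums[k] / counts[k] for k in sums}
    # Difference in treatment effects between the two covariate groups.
    est = (mean[(1, 1)] - mean[(0, 1)]) - (mean[(1, 0)] - mean[(0, 0)])
    se = math.sqrt(sum(1.0 / n for n in counts.values()))
    return est / se


def false_positive_rates(n_sims=500, n=200, n_covariates=20, seed=0):
    """Return (pre-specified rate, post hoc rate) of |z| > 1.96 under a true null."""
    rng = random.Random(seed)
    pre_hits = 0
    post_hits = 0
    for _ in range(n_sims):
        t = [rng.randint(0, 1) for _ in range(n)]    # randomised treatment
        y = [rng.gauss(0.0, 1.0) for _ in range(n)]  # outcome: noise, no effect at all
        zs = []
        for _ in range(n_covariates):
            c = [rng.randint(0, 1) for _ in range(n)]  # irrelevant binary covariate
            zs.append(abs(interaction_z(y, t, c)))
        pre_hits += zs[0] > 1.96     # the one interaction chosen before seeing data
        post_hits += max(zs) > 1.96  # the most impressive interaction found afterwards
    return pre_hits / n_sims, post_hits / n_sims


if __name__ == "__main__":
    pre, post = false_positive_rates()
    print(f"pre-specified interaction: {pre:.2f}")
    print(f"best of 20, chosen post hoc: {post:.2f}")
```

Under these assumptions the pre-specified test should be wrong at roughly the nominal 5% rate, while the pick-the-winner strategy should report a spurious interaction in most simulated experiments. The covariates here are independent coin flips; real covariates are correlated, which changes the numbers but not the moral.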

As a consulting statistician I am available to help you resolve this tension at competitive rates. Pro-tip: consult me before beginning data collection if you want to get value for money.

See also soft methodology.

2 Unconscious stuff

Neuroskeptic dissects money priming.

Daniel Kahneman chimes in on priming via the blogosphere:

Clearly, the experimental evidence for the ideas I presented in that chapter was significantly weaker than I believed when I wrote it. This was simply an error: I knew all I needed to know to moderate my enthusiasm for the surprising and elegant findings that I cited, but I did not think it through. When questions were later raised about the robustness of priming results I hoped that the authors of this research would rally to bolster their case by stronger evidence, but this did not happen.

I still believe that actions can be primed, sometimes even by stimuli of which the person is unaware. There is adequate evidence for all the building blocks: semantic priming, significant processing of stimuli that are not consciously perceived, and ideo-motor activation. I see no reason to draw a sharp line between the priming of thoughts and the priming of actions. A case can therefore be made for priming on this indirect evidence. But I have changed my views about the size of behavioural priming effects — they cannot be as large and as robust as my chapter suggested.

Scott Alexander’s admittedly hand-wavy but interesting Devoodooifying Psychology looks at the kind of results that haven’t replicated:

A single thread seems to run through all of these examples: a shift away from the power of the unconscious. The unconscious doesn’t make you succeed or fail proportionately to your belief in yourself. The unconscious doesn’t change your behaviour because of insignificant environmental cues. The unconscious doesn’t make you racially discriminate despite your own better nature. The conscious mind is strong enough to hold onto its preferred beliefs despite brainwashing techniques intended to force it otherwise.

So maybe we should update in general towards less of a role for the unconscious mind?

What is the unconscious mind anyway?

3 Incoming

Putting bad research in context: