Research discovery and synthesis

Has someone answered that question I have not worked out how to ask yet?

2019-01-22 — 2025-10-05

Wherein the Difficulty of Academic Recommendation Is Stated, and a Retrieval‑augmented Method Is Proposed, Using Tens of Millions of Paper Embeddings to Produce Citation‑backed Literature Synthesis.

Recommender systems for academics are hard. I suspect they’re tougher than regular recommender systems because the content needs to be new and is often hard to relate to existing work. Finding connections can be a publishable result in itself. We can think of this problem as a hard-nosed, applied version of the vaguely woo-woo idea of knowledge topology. As such, recent advances in topic embeddings and retrieval-augmented generation models might have effectively solved this problem.

Interactions with peer review systems are complicated. Could such a system integrate with peer review in a useful way? Could we have services like Canopy, Pinterest, or Keen for scientific knowledge? How can we balance recall and precision for the needs of academics?

The information environment is challenging. I am fond of Elizabeth Van Nostrand’s summary:

assessing a work often requires the same skills/knowledge you were hoping to get from said work. You can’t identify a good book in a field until you’ve read several. But improving your starting place does save time, so I should talk about how to choose a starting place.

One difficulty is that this process is heavily adversarial. A lot of people want you to believe a particular thing, and a larger set don’t care what you believe as long as you find your truth via their Amazon affiliate link […] The latter group fills me with anger and sadness; at least the people trying to convert you believe in something (maybe even the thing they’re trying to convince you of). The link farmers are just polluting the commons.

My paraphrase: Knowledge discovery would probably be intrinsically difficult even in a hypothetically beneficent world with good sharing mechanisms, because the economics of the attention economy, advertising, and weaponized media mean we should be wary of the mechanisms currently available.

If I accept this, the corollary is that my scattershot link-sharing might detract from this blog’s value to the wider world.

1 Theory

José Luis Ricón, a.k.a. Nintil, wonders about A better Google Scholar based on his experience creating a better Meta Scholar for Syntopic reading. Robin Hanson, of course, has much to say on potentially better mechanism design for scientific discovery. I have qualms that his suggested cash rewards system would crowd out reputational awards; I think there’s merit in using non-cash currency in that particular economy, though I’m open to being persuaded.

Of course, LLM-based methods are prominent now. Modern information-retrieval theory — to come.

2 “Deep Research” projects

See AI science agents.

3 Discovery projects

3.1 Scholar Inbox

Scholar Inbox is a personal paper recommender which enables researchers to stay up-to-date with the most relevant progress in their field based on their personal research interests. Scholar Inbox is free of charge and daily indexes all of arXiv, bioRxiv, medRxiv and ChemRxiv as well as several open access proceedings in computer science.

Background is here at Autonomous Vision Blog: Scholar Inbox.

This is an amazing service. It has thoughtful features, such as letting us filter by the next conference we’re attending.

3.2 SciLire

SciLire: Science Literature Review AI Tool from CSIRO.

I just saw a tech demo from my colleagues. It looks promising for high-speed, AI-augmented literature review.

3.3 ResearchRabbit

- Spotify for Papers: Just like in Spotify, you can add papers to collections. ResearchRabbit learns what you love and improves its recommendations!

- Personalised Digests: Keep up with the latest papers related to your collections! If we’re not confident something’s relevant, we don’t email you—no spam!

- Interactive Visualisations: Visualise networks of papers and co-authorships. Use graphs as new “jumping off points” to dive even deeper!

- Explore Together: Collaborate on collections, or help kickstart someone’s search process! And leave comments as well!

3.4 scite

scite: See how research has been cited

Citations are classified by a deep learning model that is trained to identify three categories of citation statements: those that provide contrasting or supporting evidence for the cited work, and others, which mention the cited study without providing evidence for its validity. Citations are classified by rhetorical function, not positive or negative sentiment.

- Citations are not classified as supporting or contrasting by positive or negative keywords.

- A Supporting citation can have a negative sentiment and a Contrasting citation can have a positive sentiment. Sentiment and rhetorical function are not correlated.

- Supporting and Contrasting citations do not necessarily indicate that the exact set of experiments was performed. For example, if a paper finds that drug X causes phenomenon Y in mice and a subsequent paper finds that drug X causes phenomenon Y in yeast but both come to this conclusion with different experiments—this would be classified as a supporting citation, even though identical experiments were not performed.

- Citations that simply use the same method, reagent, or software are not classified as supporting. To identify methods citations, you can filter by the section.

For full technical details including exactly how we do classification, what classifications and classification confidence mean, please read our recent publication describing how scite was built: (Nicholson et al. 2021)/

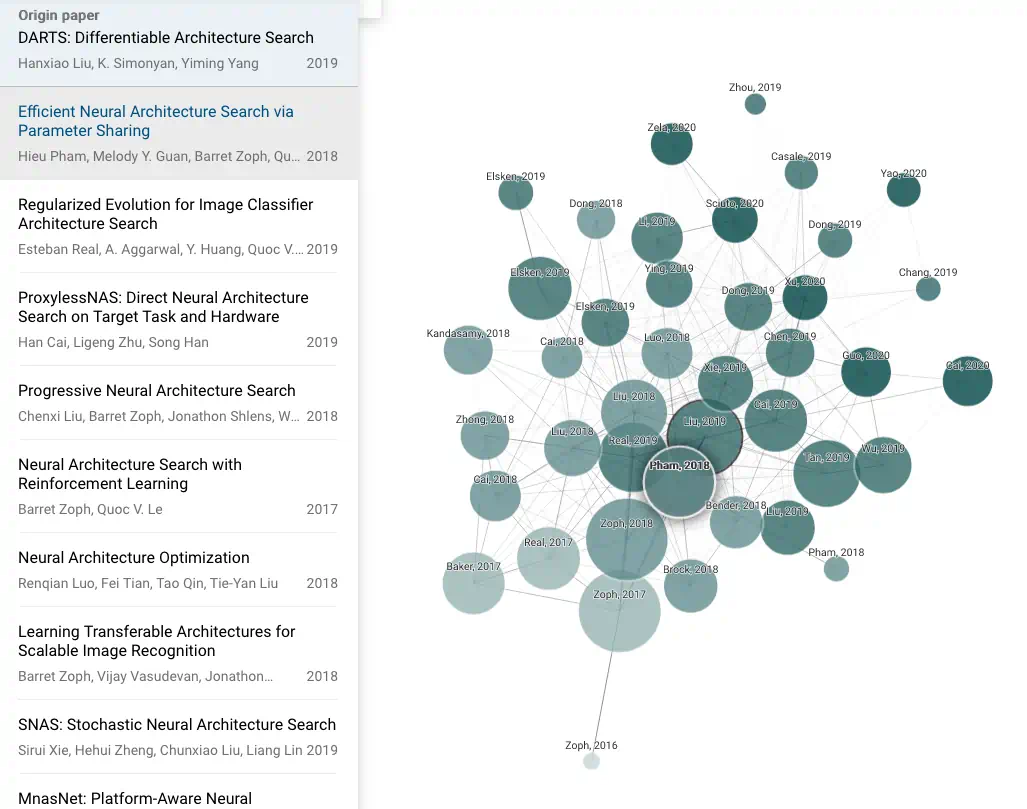

3.5 Connectedpapers

- To create each graph, we analyse an order of ~50,000 papers and select the few dozen with the strongest connections to the origin paper.

- In the graph, papers are arranged according to their similarity. That means that even papers that do not directly cite each other can be strongly connected and very closely positioned. Connected Papers is not a citation tree.

- Our similarity metric is based on the concepts of Co-citation and Bibliographic Coupling. According to this measure, two papers that have highly overlapping citations and references are presumed to have a higher chance of discussing a related subject matter.

- Our algorithm then builds a Force Directed Graph to visually cluster similar papers together and push less similar papers away from each other. Upon node selection, we highlight the shortest path from each node to the origin paper in similarity space.

- Our database is connected to the Semantic Scholar Paper Corpus (licensed under ODC-BY). Their team has done an amazing job of compiling hundreds of millions of published papers across many scientific fields.

Also:

You can use Connected Papers to:

Get a visual overview of a new academic field Enter a typical paper and we’ll build you a graph of similar papers in the field. Explore and build more graphs for interesting papers you find — soon you’ll have a real, visual understanding of the trends, popular works, and dynamics of the field you’re interested in.

Make sure you haven’t missed an important paper In some fields like Machine Learning, so many new papers are published it’s hard to keep track. With Connected Papers you can just search and visually discover important recent papers. No need to keep lists.

Create the bibliography for your thesis Start with the references that you will definitely want in your bibliography and use Connected Papers to fill in the gaps and find the rest!

Discover the most relevant prior and derivative works Use our Prior Works view to find important ancestor works in your field of interest. Use our Derivative Works view to find literature reviews of the field, as well as recently published State of the Art that followed your input paper.

3.6 papr

papr — “Tinder for preprints”

We all know the peer review system is hopelessly overmatched by the deluge of papers coming out. papr reviews use the wisdom of the crowd to quickly filter papers considered interesting and accurate. Add your quick judgements about papers to thousands of other scientists’ reviews around the world.

You can use the app to keep track of interesting papers and share them with your friends. Spend 30 minutes quickly sorting through the latest literature, and papr will keep track of those papers for future review.

With papr, you can filter to only see papers that match your interests, keyword matches, or papers highly rated by others. Ensure your literature review is productive and efficient.

I appreciate that quality is important, but I’m unconvinced by using topic keywords. Quality is only half the problem for me; topic filtering looks harder.

3.7 Daily papers

Daily Papers seems similar to arxiv-sanity, but it’s more actively maintained and less coherently explained. Its paper rankings seem to incorporate… Twitter hype?

3.8 arxiv specialists

3.8.1 Searchthearchive

3.8.2 trendingarxiv

Keep track of arXiv papers and the tweet mini-commentaries that your friends are discussing on Twitter.

Somehow, some researchers still have time for Twitter. Their opinions are probably worth noting.

- Journal / Author Name Estimator identifies potential collaborators and journals via a semantic-similarity search of the abstract

- Grant matchmaker suggests researchers with similar grants at the US NIH

3.8.3 arXiv sanity

It aimed to prioritize the arXiv paper-publishing firehose so we could discover papers of interest, at least if those interests were in machine learning. It’s now defunct.

Arxiv Sanity Preserver

Built by @karpathy to accelerate research. Serving the last 26179 papers from cs.[CV|CL|LG|AI|NE]/stat.ML

This includes Twitter-hype sorting, TF-IDF clustering, and other basic but important baby steps towards Web 2.0–style information consumption.

The servers have been overloaded lately, possibly because all the SVMs the service runs scale poorly, the continued growth of arXiv, or an epidemic addiction to intermittent variable rewards among machine learning researchers. That’s why I’ve opted out of checking for papers.

I could run my own installation — it’s open source — but the download and processing requirements are prohibitive. arXiv is big and fast.

4 Paper analysis/annotation

5 Finding copies

unpaywall and oadoi seem to index non-paywalled preprints of paywalled articles. oadoi is a website, unpaywall is a browser extension. CiteSeer does, too. There are also shadow libraries.

6 Trend analysis

I’ll be honest — I wanted this because a reviewer called something “outdated”, and I got angry. It’s a useless criticism (“incorrect” would be valid), and I suspected the reviewer was wrong about how hip the topic was because they live in their own filter bubble. So I wasted an hour or two plotting the number of papers on the topic over time, then realised I needed to do some mindfulness meditation instead.

The topic, by the way, was factor graphs; the plots I generated before I went off to meditate are an interesting test case for the various tools:

7 Reading groups and co-learning

They’re a great way to get things done. How can we make reading together easier?

The Journal Club is a web-based tool designed to help organise journal clubs, aka reading groups. A journal club is a group of people coming together at regular intervals, e.g., weekly, to critically discuss research papers. The Journal Club makes it easy to keep track of information about the club’s meeting time and place as well as the list of papers coming up for discussion, papers that have been discussed in previous meetings, and papers proposed by club members for future discussion.