Creating scientific knowledge

Descriptive and normative

2021-08-24 — 2025-07-27

Wherein the Mechanisms of Institutional Design, Funding, Network Models, Publication Filters and Prestige Economies for Scientific Communities Are Examined, and Transient Diversity of Practice Is Considered.

On heuristic mechanism design and institutional design for communities of scientific practice, aimed at enriching the common-property resource that is human knowledge. How do diverse, underfunded teams manage to advance truth with their weird prestige economy, despite the many pitfalls of publication filters and such? What is effective in designing communities, practices and social norms, for scientific insiders and outsiders alike? How much communication is too much? How much iconoclasm is right to defeat groupthink and foster the spread of good ideas? At an individual level, we might wonder about soft methodology. What is the profit model?

A place to file questions like this, in other words (O’Connor and Wu 2021):

Diversity of practice is widely recognised as crucial to scientific progress. If all scientists perform the same tests in their research, they might miss important insights that other tests would yield. If all scientists adhere to the same theories, they might fail to explore other options which, in turn, might be superior. But the mechanisms that lead to this sort of diversity can also generate epistemic harms when scientific communities fail to reach swift consensus on successful theories. In this paper, we draw on extant literature using network models to investigate diversity in science. We evaluate different mechanisms from the modelling literature that can promote transient diversity of practice, keeping in mind ethical and practical constraints posed by real epistemic communities. We ask: what are the best ways to promote the right amount of diversity of practice in such communities?
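To make “network models” concrete, here is a toy of the bandit-on-a-network kind that this literature draws on. It is a minimal sketch under my own assumptions, not the model from the paper: agents hold Beta beliefs about a new method whose true success rate `true_p` slightly beats a known baseline `known_p`, only agents who currently favour the new method test it, and everyone updates on their own results and their neighbours'. All names and parameter values (`community_converges`, `pulls`, the prior ranges) are hypothetical.

```python
import random


def complete_graph(n):
    """Everyone sees everyone else's results."""
    return [[j for j in range(n) if j != i] for i in range(n)]


def cycle_graph(n):
    """Each agent sees only its two neighbours on a ring (intended for n >= 3)."""
    return [[(i - 1) % n, (i + 1) % n] for i in range(n)]


def community_converges(neighbors, true_p=0.55, known_p=0.5,
                        pulls=10, rounds=200, rng=None):
    """Return True if, after `rounds`, every agent prefers the new method."""
    rng = rng or random.Random()
    n = len(neighbors)
    # Beta(alpha, beta) beliefs about the new method, with random priors (assumed range).
    alpha = [rng.uniform(0.1, 4.0) for _ in range(n)]
    beta = [rng.uniform(0.1, 4.0) for _ in range(n)]

    def prefers_new(i):
        return alpha[i] / (alpha[i] + beta[i]) > known_p

    for _ in range(rounds):
        # Only agents who currently favour the new method bother testing it.
        results = {}
        for i in range(n):
            if prefers_new(i):
                results[i] = sum(rng.random() < true_p for _ in range(pulls))
        if not results:
            return False  # nobody tests the new method any more
        # Each agent updates on its own results and those of its neighbours.
        for i in range(n):
            for j in [i] + neighbors[i]:
                if j in results:
                    alpha[i] += results[j]
                    beta[i] += pulls - results[j]
    return all(prefers_new(i) for i in range(n))


def success_rate(neighbors, trials=200, seed=0):
    """Fraction of simulated communities that settle on the new method."""
    rng = random.Random(seed)
    wins = sum(community_converges(neighbors, rng=rng) for _ in range(trials))
    return wins / trials
```

Comparing `success_rate(complete_graph(10))` with `success_rate(cycle_graph(10))` is the natural experiment. The usual story in this literature is that sparser communication keeps dissenting agents testing the new method for longer, so a premature wrong consensus is less likely; whether this toy shows that effect depends on the parameters chosen.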

1 Contrarianism and diversity

Maxwell Tabarrok, Overfitting in Academia:

But our selection criteria do not match with what we want academics to do. The out-of-sample prediction ability of academics is worse than random and laymen. They know lots about research they’ve already been tested on, but are rarely better than random at guessing the results of experiments in their field that they haven’t seen before. Citations and academic rank do not increase the ability of economists to predict the outcome of economic experiments or political events.

Hanania, in Tetlock and the Taliban, points out the illusory nature of some expertise.

[Tetlock’s results] show that “expertise” as we understand it is largely fake. Should you listen to epidemiologists or economists when it comes to COVID-19? Conventional wisdom says “trust the experts.” The lesson of Tetlock (and the Afghanistan War), is that while you certainly shouldn’t be getting all your information from your uncle’s Facebook Wall, there is no reason to start with a strong prior that people with medical degrees know more than any intelligent person who honestly looks at the available data.

His examples about the scientific community are neat, although a little cute, since Tetlock’s work is more about the limits of expert prediction and expert knowledge, so “fake” is too strong. Then he draws a longer bow and makes, in my opinion, less-considered swipes at a weak-manned diversity argument, which somewhat ruins the effect for me. Zeynep Tufekci gets at the problem I think people who talk about contrarianism and diversity are trying to address: do the incentives — especially the incentives built into social structures — actually steer researchers towards truth, or towards collective fictions?

Sometimes, going against consensus is conflated with contrarianism. Contrarianism is juvenile, and misleads people. It’s not a good habit.

The opposite of contrarianism isn’t accepting elite consensus or being gullible.

Groupthink, especially when big interests are involved, is common. The job is to resist groupthink with facts, logic, work and a sense of duty to the public. History rewards that, not contrarianism.

To get the right lessons from why we fail—be it masks or airborne transmission or failing to regulate tech when we could or Iraq war—it’s key to study how that groupthink occurred. It’s a sociological process: vested interests arguing themselves into positions that benefit them.

Scott Alexander, in Contrarians, Crackpots, and Consensus, tries to break this idea open with an ontology.

I think a lot of things are getting obscured by the term “scientific establishment” or “scientific consensus”. Imagine a pyramid with the following levels from top to bottom:

FIRST, specialist researchers in a field…

SECOND, non-specialist researchers in a broader field…

THIRD, the organs and administrators of a field who help set guidelines…

FOURTH, science journalism, meaning everyone from the science reporters at the New York Times to the guys writing books with titles like The Antidepressant Wars to random bloggers…

ALSO FOURTH IN A DIFFERENT COLUMN OF THE PYRAMID BECAUSE THIS IS A HYBRID GREEK PYRAMID THAT HAS COLUMNS, “fieldworkers”, aka the professionals we charge with putting the research into practice. …

FIFTH, the general public.

A lot of these issues make a lot more sense in terms of different theories going on at the same time on different levels of the pyramid. I get the impression that in the 1990s, the specialist researchers, the non-specialist researchers, and the organs and administrators were all pretty responsible about saying that the serotonin theory was just a theory and only represented one facet of the multifaceted disease of depression. Science journalists and prescribing psychiatrists were less responsible about this, and so the general public may well have ended up with an inaccurate picture.

Bright (2023):

Du Bois took quite the opposite route from trying to introduce lotteries, with their embrace of chance randomisation. In fact, to a very considerable degree he centrally planned the sort of research his group would carry out so as to form an interlinking whole. Where the status quo system allows for competition between scientists to give funding out piecemeal to whoever seems best at a given moment, Du Bois’ work embodies the attitude that as far as possible our research activities should be coordinated, and not aimed at rewarding individual greatness but rather producing the best overall project. While ideas along these lines have not been totally without support in the history of philosophy of science (see e.g. Neurath 1946, Bernal 1949, Kummerfeld & Zollman 2015), it is safe to say the epistemic merits of this are relatively under-explored. Our brief examination of Du Bois’ plan will thus hopefully form a spur to generate more consideration of this sort of holistic line of action

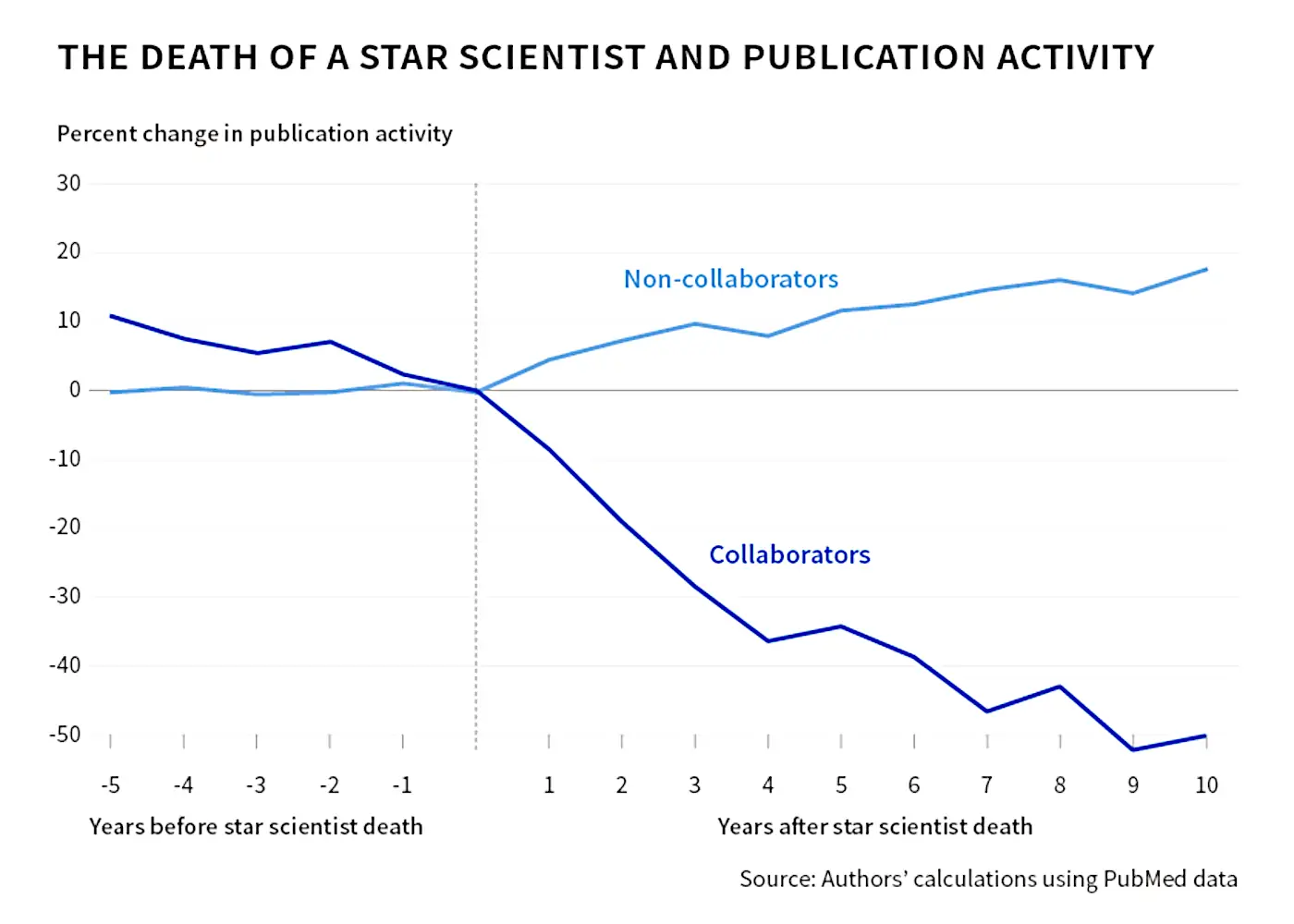

Question: Does science advance one funeral at a time?

There are a lot of models of what the scientific consensus might mean (Kuhnian paradigms, degenerative research programs, or whatever Lakatos called them). I don’t have anything practical to add to that right now.

Business models for generating knowledge in science. This is related to public sphere business models.

2 Exploration as a public good

A working scientist faces a bandit problem: spend time on an established line (likely to yield a paper, low variance) or on a weird new direction (high variance, high ceiling, long horizon).

Bolton and Harris (1999) show that when multiple agents can sample a common bandit, free-riding becomes serious: each agent wants the others to bear the cost of risky exploration and then piggyback on the findings. Applied to research, this predicts that novel directions are systematically underprovided in equilibrium — not because scientists are dull, but because the information produced by exploration is a public good while the cost is private.
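The public-good logic can be shown with a deliberately crude one-shot calculation. This is a sketch under strong assumptions, not the Bolton and Harris continuous-time model: the safe arm pays `safe` every period, the risky arm pays `high` every period if it is good (prior probability `p_good`) and 0 otherwise, a single pull reveals its type, and everyone then plays the better arm for `horizon` further periods. All function and parameter names are hypothetical.

```python
def exploration_cost(p_good, high, safe):
    """Expected payoff given up by one pull of the risky arm instead of the safe one."""
    return safe - p_good * high


def information_value(p_good, high, safe, horizon):
    """Expected gain to ONE agent from knowing the risky arm's type
    and playing the better arm for `horizon` further periods."""
    return horizon * p_good * (high - safe)


def lone_agent_explores(p_good, high, safe, horizon):
    """A self-interested agent weighs only its own information value."""
    value = information_value(p_good, high, safe, horizon)
    return value >= exploration_cost(p_good, high, safe)


def planner_explores(p_good, high, safe, horizon, n_agents):
    """A social planner counts the information value accruing to every agent."""
    total_value = n_agents * information_value(p_good, high, safe, horizon)
    return total_value >= exploration_cost(p_good, high, safe)
```

With `p_good=0.1`, `high=2.0`, `safe=1.0` and `horizon=3`, the private cost of exploring is 0.8 against a private information value of about 0.3, so `lone_agent_explores` is false; a planner over five agents sees a total value of about 1.5 and explores. In that gap, each agent does best by waiting for someone else to pay the 0.8.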

Hoelzemann and Klein (2021) bring the claim into the lab. Human subjects underexplore relative to the social-planner optimum in exactly the way strategic-experimentation theory predicts. The pathology seems robust across populations — macaques, undergraduates, and presumably postdocs.

One consequence: paying scientists to do existing things more efficiently does not produce exploration. An intervention needs to reward exposure to risk ex ante, not success ex post. Bhattacharya and Packalen (2020) make a broadly consistent case for the stagnation of transformative research under NIH-style grant review — career incentives reward incremental, predictable papers, which is what a risk-averse review panel selects for, which is what young researchers learn to propose. The equilibrium drifts toward safety.

This is a mechanistic story behind the diversity-of-practice argument above, and it suggests where contrarianism comes from — not just a taste for difference, but the one place in the incentive structure where an underexplored region can still be mined for signal.

3 Grant applications in practice

TBD

4 Funding theory and weird ideas

Experiment: Science Kickstarter.

Experiment is a platform for funding scientific discoveries.

You can fund a dinosaur fossil excavation, a historical study of medieval monasteries, or an experiment on the International Space Station. If it helps unlock new knowledge, then we can fund it. We have the technology.

Every dollar you contribute towards science helps push the boundaries of knowledge

The Dream Machine, by Sarah Constantin, introduces Renaissance Philanthropy:

It’s intuitive—but surprisingly rare in the overall world of philanthropy—for a donor to go “that’s cool, I want that to exist, it’s a shame there’s no funding for it yet, let me help you get it off the ground”.

And fundamentally I don’t think it’s because donors aren’t generous, or aren’t interested in innovation, but because creating new projects via donation involves a lot of work that mostly isn’t being done.

There’s:

information work

- donors don’t necessarily know about cool underfunded projects or talented underfunded people

- donating to one project you’ve heard of is easy; finding the top 100 projects is a substantial research-and-strategy job.

[many more examples]

That’s what RenPhil is trying to fix. Philanthropy is work; we do the work.

5 Incoming

You and your research, Hamming’s famous 1986 talk on how to do great research.

SakanaAI/AI-Scientist: The AI Scientist: Towards Fully Automated Open-Ended Scientific Discovery 🧑‍🔬 (Lu et al. 2024)

They claim this is about scientific discovery, but I think it is also about peer review, since that becomes part of their workflow.

- The Leisure of the Theory Class, Top 5 Tyranny and its Consequences
- Escaping science’s paradox discusses red-teaming science and incentivizing productive failures
- Every Bug is Shallow if One of Your Readers is an Entomologist
- How much should theories guide learning through experiments?
- Jonathan Rauch, The Constitution of Knowledge: A Defence of Truth
- Samir Unni, Molecular Missionaries
- Progress Studies: A Discipline is a Set of Institutional Norms (connection to innovation)
- Scott And Scurvy (Idle Words)
- Stuart Buck, The Good Science Project
- Royal Society, The online information environment
- Samuel Moore, Why open science is primarily a labour issue
- Open call: Consequences of the Scientific Reform Movement · Journal of Trial & Error
- Eric Gilliam, FreakTakes
- On funding scientific research (by Alan Kay)
- White paper on “fractional scholarship” (by Samuel Arbesman and Jon F. Wilkins)
- The Half-Life of Facts (by Samuel Arbesman)
- Interesting organizational structures (by Ben Reinhardt)
- The Need for Long-term Research (by Kevin Kelly)
- Retroactive Public Goods Funding
- Alt VC (by Mark McGranaghan)
- Crowdfunded research (by Andy Matuschak)
- A list of research labs (by Matt Webb)
- Gatekeeping is Good and Everyone Already Agrees
- The Balto/Togo theory of scientific development
- Bringing Greater Balance to the Force (the Scientific Workforce, that is)
- Rachel Helps, Wikipedia’s Citations Are Influencing Scholars and Publishers

In 2016, Wikipedia was the 6th-largest referrer for DOIs, with half of referrals successfully authenticating to access the article. External links on Wikipedia produce an estimated 7 million dollars of revenue per month. Given that Wikipedia is such a popular website, it’s unsurprising that academic publishers are actively pursuing ways to promote their work on Wikipedia.

Scholarly publishers have reported increased traffic as a result of giving access to their publications to Wikipedia editors, and a controlled experiment on Wikipedia shows that they are right to value Wikipedia citations. Works cited on Wikipedia have an outsized influence on scholarly work—specifically in its literature reviews. Additionally, one research article found that open-access (OA) articles were cited more frequently than non-OA articles on Wikipedia in 2014, an idea supported by the generally increased readership of OA articles compared to paid-access articles

When Does Science Self-Correct? Lessons from a Replication Crisis in Early 20th Century Chemistry

What would it take for fields like economics and social psychology to self-correct as chemistry and physics (sometimes) do?

A crucial feature that leads a field to become self-correcting is the potential for important technological applications. As long as scientists are only writing articles, allegations of error may be difficult to resolve. But once a field has advanced to the point where it can claim to make bread from air, or draw gold from the sea, it more quickly becomes clear if the claim can hold water.

Interintellect’s Upcoming Salons

An Interintellect salon is an evening-length conversation (typically one to three hours) around a specific topic, carrying the atmosphere of a cosy, living room gathering.

Michael Nielsen on science online

Adam Mastroianni’s funding application diptych:

Grant funding is broken. Here’s how to fix it.

I’ve won grants, I’ve awarded them, and I’ve advised people applying for them. Everything I’ve seen has convinced me this is a terrible way to give away money.

Some of the problems with this system are well known, but we treat them as unavoidable. Other problems are totally overlooked. The result is that virtually all grant money — whether it’s going to fund a lab, sponsor a fellowship, or support a nonprofit — is spent inefficiently and often goes to the wrong people, all while wasting everyone’s time.

Here are five of the worst problems with the way we do grants now. I list them because I believe there’s a better way, and we can only see it when we look squarely at the current system’s shortcomings.

We apply for everything: scholarships, internships, jobs, promotions, apartments, loans, grants, clubs, conferences, prizes, and even dating apps. A kid today might apply for every school they ever attend, from preschool to graduate school. Even if they get in, they’ll find they’ve merely entered the lobby, and every room beyond requires another application. Want to join the student radio station, take a seminar, or work in a professor’s lab? You’ll have to apply. In undergrad, being an unpaid tour guide required an application — and 50% of people were rejected!

[…] The worst part is the subtle, craven way applications irradiate us, mutating our relationships into connections, our passions into activities, and our lives into our track record. Instagram invites you to stand amidst beloved friends in a beautiful place and ask yourself, if we took a selfie right now would it get lots of likes? Applications do the same for your whole life: would this look good on my resume? If you reward people for lives that look good on paper, you’ll get lots of good-looking paper and lots of miserable lives.

Applications might be all right if we knew that they worked really well. But we don’t know that.