Models of mind popular amongst ML nerds. Various morsels on the theme of what-machine-learning-teaches-us-about-our-own-learning. Thus biomimetic algorithms find their converse in our algo-mimetic biology, perhaps.

This should be more about general learning-theory insights. The nitty-gritty details of how biological systems actually do computation are more what I think of as biocomputing. If you can unify the two, well done: you can grow minds in a petri dish.

See also evolution of perception and predictive coding.

1 Language theory

The OG test case of mind-like behaviour is grammatical inference, where a lot of ink was spilled over the learnability of languages of various kinds. This is less popular nowadays, when natural language processing by computer is doing rather interesting things without bothering with the details of formal syntax or traditional semantics. What does that mean? I will not hazard an opinion, because I am too busy for now to form one.

There are some thought-provoking results, though, coming from the theory of vector embeddings, e.g. Goldstein et al. (2025).

2 Descriptive statistical models of cognition

See, e.g., Probabilistic Models of Cognition by Noah Goodman, Joshua Tenenbaum and others, which presents a Bayesian model of human problem-solving and doubles as a probabilistic programming textbook.

This book explores the probabilistic approach to cognitive science, which models learning and reasoning as inference in complex probabilistic models. We examine how a broad range of empirical phenomena, including intuitive physics, concept learning, causal reasoning, social cognition, and language understanding, can be modelled using probabilistic programs (using the WebPPL language).

Disclaimer: I have not actually read the book.
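To make "learning as inference in a probabilistic model" a little more concrete, here is a toy Bayesian concept learner in the spirit of Tenenbaum's "number game", written in plain Python rather than WebPPL. The hypothesis space, the uniform prior and the "size principle" likelihood are assumptions chosen for illustration; `posterior` and `prob_in_concept` are hypothetical names, and none of this is code from the book.

```python
from fractions import Fraction

# Hypothetical hypothesis space: candidate "concepts" over the integers 1..100.
DOMAIN = range(1, 101)
HYPOTHESES = {
    "even": {n for n in DOMAIN if n % 2 == 0},
    "odd": {n for n in DOMAIN if n % 2 == 1},
    "powers of 2": {2 ** k for k in range(1, 7)},  # {2, 4, ..., 64}
    "multiples of 4": {n for n in DOMAIN if n % 4 == 0},
    "multiples of 10": {n for n in DOMAIN if n % 10 == 0},
}


def posterior(examples, hypotheses=HYPOTHESES):
    """Exact posterior over hypotheses given positive examples.

    Prior: uniform over hypotheses.
    Likelihood (assumed "size principle"): each example is sampled uniformly
    from the true concept, so P(data | h) = (1/|h|)^n if every example is in h,
    and 0 otherwise.
    """
    unnormalised = {}
    for name, extension in hypotheses.items():
        if all(x in extension for x in examples):
            unnormalised[name] = Fraction(1, len(extension)) ** len(examples)
        else:
            unnormalised[name] = Fraction(0)
    total = sum(unnormalised.values())
    if total == 0:
        raise ValueError("no hypothesis is consistent with the examples")
    return {name: w / total for name, w in unnormalised.items()}


def prob_in_concept(y, examples, hypotheses=HYPOTHESES):
    """Posterior predictive probability that y belongs to the unknown concept."""
    post = posterior(examples, hypotheses)
    return sum(p for name, p in post.items() if y in hypotheses[name])


if __name__ == "__main__":
    data = [16, 8, 2, 64]
    for name, p in posterior(data).items():
        print(f"{name:>16}: {float(p):.4f}")
    print("P(32 in concept) =", float(prob_in_concept(32, data)))
    print("P(10 in concept) =", float(prob_in_concept(10, data)))
```

With the examples 16, 8, 2 and 64, both "even" and "powers of 2" are consistent with the data, but the narrower hypothesis wins overwhelmingly because each example is far more probable under it. That is the kind of graded, data-efficient generalisation these descriptive models are built to reproduce.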

This descriptive model is not the same thing as the normative model of Bayesian cognition.

Dezfouli, Nock, and Dayan (2020) and Peterson et al. (2021) do something different again: they find ML models that are good “second-order” fits to how people seem to learn things in practice.
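Those papers fit flexible ML models to human behavioural data. A much simpler relative of the same move is fitting a small, hand-specified learning model to choice data by maximum likelihood. The sketch below assumes a two-armed bandit task, a Rescorla-Wagner learner with a softmax choice rule, and simulated rather than real human data; all function names are hypothetical, and none of this is the cited papers' method.

```python
import math
import random


def simulate_choices(alpha, beta, reward_probs, n_trials, seed=0):
    """Simulate a Rescorla-Wagner learner with softmax choice on a bandit task.

    Stands in for a human participant: alpha is the learning rate and beta
    the inverse temperature of the softmax choice rule.
    """
    rng = random.Random(seed)
    q = [0.0] * len(reward_probs)
    choices, rewards = [], []
    for _ in range(n_trials):
        weights = [math.exp(beta * v) for v in q]
        choice = rng.choices(range(len(q)), weights=weights)[0]
        reward = 1.0 if rng.random() < reward_probs[choice] else 0.0
        q[choice] += alpha * (reward - q[choice])  # prediction-error update
        choices.append(choice)
        rewards.append(reward)
    return choices, rewards


def log_likelihood(alpha, beta, choices, rewards, n_arms):
    """Log-probability of the observed choices under the learner (alpha, beta)."""
    q = [0.0] * n_arms
    total = 0.0
    for c, r in zip(choices, rewards):
        # Numerically stable log-softmax probability of the chosen arm.
        m = max(beta * v for v in q)
        log_z = m + math.log(sum(math.exp(beta * v - m) for v in q))
        total += beta * q[c] - log_z
        q[c] += alpha * (r - q[c])
    return total


def fit(choices, rewards, n_arms):
    """Maximum-likelihood (alpha, beta) by brute-force grid search."""
    grid = [(i / 20, 0.5 * j) for i in range(1, 20) for j in range(1, 21)]
    return max(grid, key=lambda ab: log_likelihood(*ab, choices, rewards, n_arms))


if __name__ == "__main__":
    choices, rewards = simulate_choices(0.3, 5.0, [0.2, 0.8], n_trials=1000)
    alpha_hat, beta_hat = fit(choices, rewards, n_arms=2)
    print(f"recovered alpha={alpha_hat:.2f}, beta={beta_hat:.1f}")
```

Because the simulated "participant" has known parameters, you can check whether the fit recovers them. Grid search keeps the sketch dependency-free; a real analysis would use a proper optimiser and many participants, and the cited work swaps the hand-built learner for a far more flexible model.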

3 Biologically (more) plausible neural nets

See, e.g. forward-forward.

4 Free energy principle

See predictive coding.

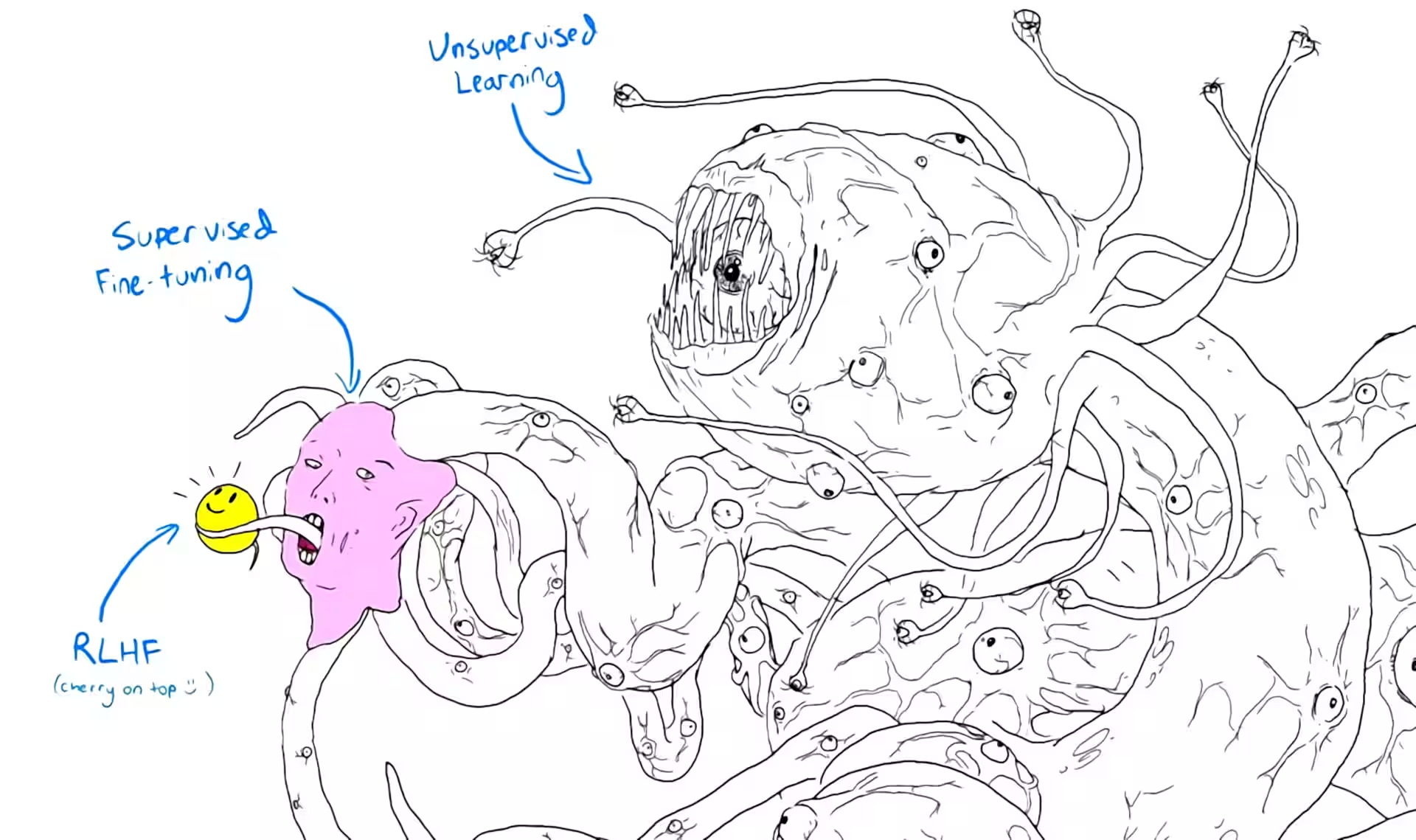

5 Little shoggoths we

See Shoggoth with Smiley Face (Artificial Intelligence) on Know Your Meme.

I will pose the obvious question: can I know that I am not a shoggoth?

6 Life as ML

Michael Levin talks a good game here (in the transcript below, ML is Levin, not machine learning):

SC: Does this whole philosophy help us, either philosophically or practically, when it comes to our ambitions to go in there and change organisms, not just solve, cure diseases, but to make new organisms to do synthetic biology to create new things from scratch and vice versa, does it help us in what we would think of usually as robotics or technology, can we learn lessons from the biological side of things?

ML: Yeah, I think absolutely. And there’s two ways to… There’s sort of a short-term view and a longer-term view of this. The short-term view is that, absolutely, so we work very closely with roboticists to take deep concepts in both directions. So on the one hand, take the things that we’ve learned from the robustness and intelligence… I mean, the intelligent problem-solving of these living forms is incredibly high, and even organisms without brains, this whole focus on kind of like neuromorphic architectures for AI, I think is really a very limiting way to look at it. And so we try very hard to export some of these concepts into machine learning, into robotics, and so on, multi-scale robotics… I gave a talk called why robots don’t get cancer. And this is, this is exactly the problem, is we make devices where the pieces don’t have sub-goals, and that’s the good news is, yes, no, you’re not going to have robots where part of it decides to defect and do something different, but on the other hand, the robots aren’t very good, they’re not very flexible.

ML: So part of this we’re trying to export, and then going in the other direction and take interesting concepts from computer science, from cognitive science, into biology to help us understand how this works. I fundamentally think that computer science and biology are not really different fields, I think we are all studying computation just in different media, and I do think there’s a lot of opportunity for back and forth. But now, the other thing that you mentioned is really important, which is the creation of novel systems. We are doing some work on synthetic living machines and creating new life forms by basically taking perfectly normal cells and giving them additional freedom and then some stimulation to become other types of organisms.

ML: We, I think in our lifetime, I think, we are going to be surrounded by… Darwin had this phrase, endless forms most beautiful. I think the reality is going to be a variety of living agents that he couldn’t have even conceived of, in the sense that the space, and this is something I’m working on now, is to map out at least the axes of this option space of all possible agents, because what the bioengineering is enabling us to do is to create hybrid… To create hybrid agents that are in part biological, in part electronic, the parts are designed, parts are evolved. The parts that are evolved might have been biologically evolved or they might have been evolved in a virtual environment using genetic algorithms on a computer, all of these combinations, and this… We’re going to see everything from household appliances that are run in part by machine learning and part by living brains that are sort of being controllers for various things that we would like to optimize, to humans and animals that have various implants that may allow them to control other devices and communicate with each other.