Science for policy

Using evidence and reason to govern ourselves; wicked problems, etc.

2011-08-07 — 2025-05-05

Wherein Science for Policy Is Described as an Applied Art for Wicked Problems, Where Ensembles of Scenarios and Robustness‑oriented, Adaptive Methods Are Recommended for Unique, High‑stakes Decisions.

OK, so we can’t hope to predict the outcomes of large, complex, unique things within the usual controlled-trial setup of scientific research. What can we hope for? And what should we do with it?

To frame what I mean, let’s consider the classic account of Wicked Problems.

Wicked problems are a class of social or policy challenges that are extremely difficult to solve due to their complex, interconnected, and evolving nature. First formalized by Rittel and Webber (1973), wicked problems have several defining characteristics:

- No definitive formulation: The problem cannot be definitively stated or bounded.

- No stopping rule: It’s unclear when the problem is “solved”.

- Solutions are not true-or-false, but good-or-bad: There’s no objective measure of success.

- No immediate or ultimate test of solutions: Implementing solutions creates waves of consequences that cannot be fully anticipated.

- No trial-and-error learning: Each intervention significantly changes the problem space.

- No enumerable set of solutions: There’s no limited set of potential solutions to consider.

- Each problem is essentially unique: No two wicked problems are alike.

- Can be considered a symptom of another problem: Wicked problems are interconnected with other problems.

- Multiple stakeholders with different values: Different stakeholders frame the problem differently.

- No right to be wrong: Policymakers are held accountable for their actions.

Examples include climate change, poverty, healthcare reform, and terrorism — problems where traditional scientific approaches struggle because they lack clear boundaries, involve competing values, and resist definitive solutions. Geopolitical strife and AI management would be wicked problems too.

For a concrete example of these challenges playing out in a specific national context, see the case study on Science in Australia.

1 Measuring results in wicked problems

It’s hard. But we do it successfully sometimes; otherwise, how would any government or business persist? See analytics.

2 Stylized simulation

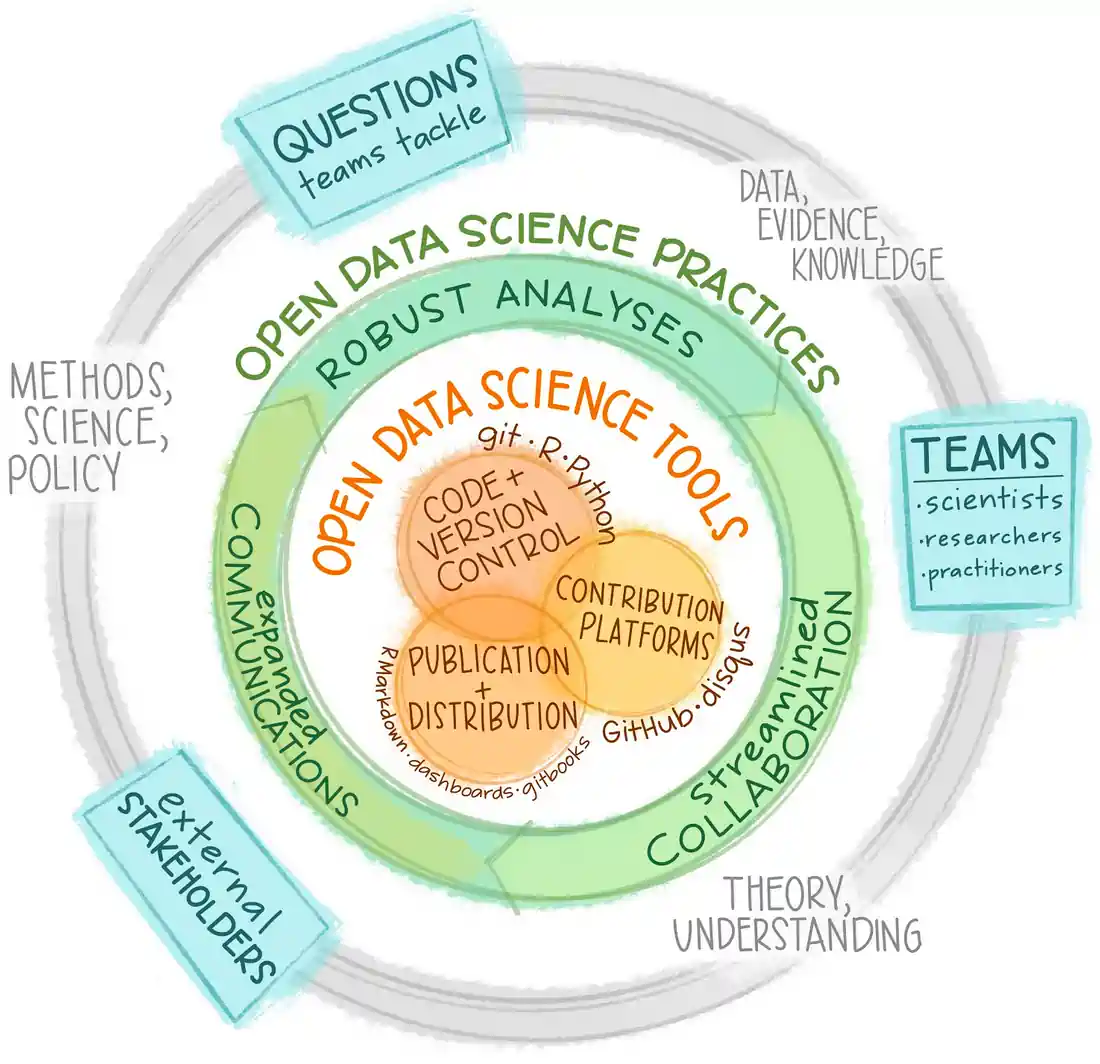

There are some suggestions in Bankes (2002):

- conceive and employ ensembles of alternatives rather than single models, policies, or scenarios;

- use principles of invariance or robustness to devise recommended conclusions or policies;

- use adaptive methods to meet the challenge of deep uncertainty; and

- iteratively seek improved conclusions through interactive mechanisms that combine human and machine capabilities.
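The first two suggestions can be sketched in a few lines, assuming a deliberately crude toy model: score each candidate policy across an ensemble of scenarios rather than against a single forecast, then prefer the policy whose worst-case regret is smallest. The policies, scenario ranges, and the `toy_damage` function are all hypothetical, and minimax regret is just one common robustness criterion, not necessarily the one Bankes would pick.

```python
import itertools
import statistics

# Hypothetical policies: share of a fixed budget spent on prevention;
# the remainder goes on responding to whatever damage occurs.
POLICIES = {"prevention-heavy": 0.8, "balanced": 0.5, "response-heavy": 0.2}

# Hypothetical scenario ensemble: every combination of two deeply uncertain drivers.
SHOCK_SIZES = [0.5, 1.0, 3.0]          # how large the crisis turns out to be
PREVENTION_EFFICACY = [0.2, 0.5, 0.9]  # how well prevention actually works
SCENARIOS = list(itertools.product(SHOCK_SIZES, PREVENTION_EFFICACY))


def toy_damage(prevention_share, shock, efficacy):
    """Invented damage model (lower is better), not calibrated to anything real."""
    unprevented = shock * (1 - efficacy * prevention_share)
    # Response spending removes a share of the unprevented damage that grows with its budget.
    return unprevented * (1 - 0.6 * (1 - prevention_share))


# Damage of every policy in every scenario of the ensemble.
table = {name: [toy_damage(share, s, e) for s, e in SCENARIOS]
         for name, share in POLICIES.items()}

# Regret: how much worse a policy does than the best policy in that same scenario.
best = [min(column) for column in zip(*table.values())]
max_regret = {name: max(d - b for d, b in zip(damages, best))
              for name, damages in table.items()}

best_on_average = min(table, key=lambda name: statistics.fmean(table[name]))
robust_choice = min(max_regret, key=max_regret.get)
print(best_on_average, robust_choice)  # response-heavy balanced
```

With these made-up numbers, the policy that is best on average differs from the minimax-regret choice: the robust pick gives up some expected performance so as not to be badly wrong if prevention turns out to work very well. Other robustness criteria can rank the same table differently.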

Of course, most of these are ruled out by the modern policy cycle, are they not? When could you advise someone to adopt a policy of rapidly trying things out and adapting when they don’t work? Is anything less compatible with the modern policy cycle than a review cycle that doesn’t line up with the electoral term, or outcomes delivered as anything other than certain? When push comes to ballot, does any political rhetoric run on anything like statistically valid evidence, or is it all trial by anecdote and “common sense”?

Pffft, political pragmatism. Let’s think about what we might do.

3 Formal causal inference in policy

Causation can be made well-defined, possibly even in social systems, but is it worth the effort in political discourse? Possibly it is a red herring, for the same reason touched upon above. When it comes to The World, we rarely have access to big scalar “causal” levers to pull. Casually framed causal questions in policy, where they are meaningful at all, are often uninteresting. For example:

- “Does pornography cause sexual violence?”

- “Do needle exchanges increase the incidence of drug use?”

These kinds of questions are not even well-posed, and even if they were defined well enough to be answerable, the answers would be useless: a yes or no says nothing about how much, when, or at what cost. More pertinent questions, of the sort I like to imagine we are actually considering:

- If we, e.g., ban certain forms of pornography, what effect might that have on sexual violence? How much? Over what time frame might we expect to see results to re-evaluate our policy? What other side effects would such a policy have? What other things influence sexual violence? What is the most efficient one to tackle?

- If we legalise needle exchanges, what effect will that have on the total harm to society of drug usage? When? How about if we subsidise needle exchanges? Provide youth activities in high-risk areas? How would these policies interact?

I suppose this frames the use of science for policy as a kind of utilitarian stochastic calculus, which is both more and less than I think it could do, but it will serve as a first pass.
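To make the “utilitarian stochastic calculus” framing a little more concrete, here is a minimal Monte Carlo sketch. Instead of asking whether a policy “causes” an outcome, it puts a distribution over how much each intervention changes total harm, including an uncertain interaction between them, and reports the resulting distribution. The function `simulate_harm_reduction` and every distribution in it are hypothetical placeholders, not estimates for needle exchanges or anything else.

```python
import random
import statistics


def simulate_harm_reduction(exchange, youth_program, n_draws=20_000, seed=0):
    """Monte Carlo draws of the reduction in total harm (arbitrary units).

    All distributions below are hypothetical placeholders, not estimates.
    """
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        # Uncertain main effects: unsure of the size, not merely the sign.
        exchange_effect = rng.gauss(5.0, 3.0)
        youth_effect = rng.gauss(2.0, 2.0)
        # Uncertain interaction, e.g. the programmes partly reach the same people.
        interaction = rng.gauss(-1.0, 1.0)
        total = exchange * exchange_effect + youth_program * youth_effect
        total += exchange * youth_program * interaction
        draws.append(total)
    return draws


# Reusing the same seed gives common random numbers, so differences between
# policies are not swamped by Monte Carlo noise.
for policy in [(1, 0), (0, 1), (1, 1)]:
    draws = simulate_harm_reduction(*policy)
    cuts = statistics.quantiles(draws, n=20)  # 5%, 10%, ..., 95% cut points
    print(policy, round(statistics.fmean(draws), 2), round(cuts[0], 2), round(cuts[-1], 2))
```

The interesting output is the shape of the answer: an expected effect, a plausible range (which may include harm increasing), and how the combination compares with each programme alone.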

If propagating that idea is, in itself, enough to slightly lower the incidence of people on talk shows demanding of experts, or one another, “but does [loaded issue A] cause [ghastly consequence B]?” then my blood pressure will benefit.

Update: Some of this is now formalised as external validity.

There are extensions/relaxations of causal inference that are designed for policy, notably causal networks (Malakar et al. 2023) and causal influence diagrams (Newell and Wasson 2002). These try to use a causal-ish formalism to address the difficulties of reasoning through scenario planning with complex interdependencies. They look interesting but are out of my wheelhouse.
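For a flavour of the influence-diagram idea, here is a minimal sketch under invented assumptions: one decision node (whether and how to fund a programme), one chance node (community uptake) whose distribution depends on the decision, and a utility node combining harm averted and cost. “Evaluating” the diagram here just means summing out the chance node and picking the decision with the highest expected utility. All names and numbers are hypothetical, and nothing here follows Newell and Wasson’s specific formulation; real tools handle much larger diagrams.

```python
# Hypothetical influence diagram:
#   decision node -> chance node "uptake" -> utility node
#   decision node -> utility node (via cost)
# All names and numbers are invented for illustration.

P_HIGH_UPTAKE = {"do_nothing": 0.0, "fund": 0.4, "fund_with_outreach": 0.7}
COST = {"do_nothing": 0.0, "fund": 3.0, "fund_with_outreach": 5.0}
HARM_AVERTED = {"high": 10.0, "low": 2.0}  # per uptake level, if a programme runs


def utility(decision, uptake):
    """Utility node: harm averted minus cost."""
    averted = 0.0 if decision == "do_nothing" else HARM_AVERTED[uptake]
    return averted - COST[decision]


def expected_utility(decision):
    """Sum out the chance node, whose distribution depends on the decision."""
    p_high = P_HIGH_UPTAKE[decision]
    return p_high * utility(decision, "high") + (1 - p_high) * utility(decision, "low")


best_decision = max(P_HIGH_UPTAKE, key=expected_utility)
print({d: round(expected_utility(d), 2) for d in P_HIGH_UPTAKE}, best_decision)
# {'do_nothing': 0.0, 'fund': 2.2, 'fund_with_outreach': 2.6} fund_with_outreach
```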

4 How to do science for policy

Consider classic science (which is paradigmatically physics, for your average contemporary working philosopher of science). There we are greatly concerned with causation, by which we mean specific effects which will reliably and quantifiably be induced by specific perturbations, and we nut it out by setting up the same perturbations and observing the same effect, time after time after time. Then we try to come up with maximally general or elegant explanations for those results, then get Nobel prizes all round, heartily congratulate our colleagues, and everyone lives happily ever after, eventually with jetpacks and benign post-human intelligences. And nanobots.

That’s what it seems like from over in social science, anyway.

For policy, things are fiddlier. Policy questions involve huge numbers of interacting variables, have highly contingent answers, and are often one-offs. Sometimes many of the interacting variables can be reduced to simpler, more empirically sound sub-systems, and sometimes not. Moreover, living systems tend to be non-stationary, grossly non-ergodic, and highly path-dependent. What is the best we can do under such circumstances?
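“Non-ergodic” is doing a lot of work in that sentence. A stock toy example makes it concrete: a repeated bet that multiplies your stake by 1.5 on heads and 0.6 on tails. The average over many parallel players grows 5% per round, yet almost every individual trajectory shrinks, because the growth factor a single player experiences over time is the geometric mean, sqrt(1.5 × 0.6) ≈ 0.95. A policy that looks good when averaged over many hypothetical worlds can be bad for the one world we actually live in. The multipliers are the conventional illustrative ones, not data.

```python
import math
import random

UP, DOWN = 1.5, 0.6  # wealth multipliers on heads / tails of a fair coin (illustrative)

# Ensemble view: average the multiplier over outcomes (arithmetic mean).
ensemble_factor = (UP + DOWN) / 2   # 1.05 per round: looks like a good bet
# Time view: growth rate a single player experiences (geometric mean).
time_factor = math.sqrt(UP * DOWN)  # about 0.949 per round: a losing bet


def final_wealth(rounds, rng):
    """Wealth of one player, starting at 1, after repeatedly betting everything."""
    wealth = 1.0
    for _ in range(rounds):
        wealth *= UP if rng.random() < 0.5 else DOWN
    return wealth


rng = random.Random(1)
finals = [final_wealth(100, rng) for _ in range(10_000)]
share_worse_off = sum(w < 1.0 for w in finals) / len(finals)
print(round(ensemble_factor, 3), round(time_factor, 3), share_worse_off)
```

Analytically, a player is worse off after 100 rounds unless at least 56 tosses come up heads, which happens only about 14% of the time, while the ensemble-average wealth is 1.05^100 ≈ 131 times the stake.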

5 Designing systems that manage themselves

See mechanism design or perhaps community governance.

6 Post-normal science

What was this mess? Philosophy of science for catastrophic risk.

Defined, pace Kuhn, by Funtowicz and Ravetz (1994) in a great many words that I shall roughly distil thus: science in the domain where we can no longer access anything like a large ensemble of experiments on which to test our hypotheses. This is a problem for science as such, which does best when there are many observations to statistically smooth out the imperfections in our tests, so that we can arrive at a description of some underlying dynamics. Or, at least, that’s what I was told in high school, estimating gravity from the slope of our stopwatch-timed weight-drop measurements.
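The high-school version of “many observations smooth out imperfect tests” can be sketched directly. This simulates stopwatch-timed drops from a few heights with reaction-time noise, fits the straight line implied by h = g t²/2 (so t² = (2/g) h), and reads g off the slope. The heights, noise level, and the “true” g used to fake the data are assumptions for illustration; `simulate_drops` and `estimate_g` are hypothetical helper names. With a handful of drops the estimate wanders; with thousands it settles, which is exactly the luxury a one-planet science lacks.

```python
import random
import statistics

G_TRUE = 9.81                        # m/s^2, only used to fake the measurements
HEIGHTS = [1.0, 1.5, 2.0, 2.5, 3.0]  # drop heights in metres (hypothetical)


def simulate_drops(n_drops, timing_noise_s=0.02, rng=None):
    """Fake (height, stopwatch time) pairs with Gaussian reaction-time noise."""
    rng = rng or random.Random()
    data = []
    for i in range(n_drops):
        h = HEIGHTS[i % len(HEIGHTS)]
        t_true = (2 * h / G_TRUE) ** 0.5
        data.append((h, t_true + rng.gauss(0.0, timing_noise_s)))
    return data


def estimate_g(data):
    """Fit t^2 = a + b*h by least squares; since h = g t^2 / 2, g = 2 / b.

    The intercept a absorbs the small constant bias that squaring noisy times adds.
    """
    xs = [h for h, _ in data]
    ys = [t * t for _, t in data]
    x_bar, y_bar = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    return 2.0 / (sxy / sxx)


rng = random.Random(42)
for n in (10, 100, 10_000):
    print(n, round(estimate_g(simulate_drops(n, rng=rng)), 2))
```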

The prototypical subject of post-normal science is the Earth. We can’t extrapolate in any meaningful way from a single data point, so many questions about its dynamics become difficult. Earth is the only planet we know of with life; how many others out there have it? This is the only industrial economy coupled to a planetary atmosphere that we have known; how do we know whether global warming will occur and mess us up royally? Must we throw up our hands in despair and fall back on rhetoric? Ravetz (1999), for example, mentions cases of users arguing that climate change models are a Baudrillardian seduction. But methodologically, I mean, are there alternatives?

Yes.

The how-much-life-in-the-galaxy question is not intractable. For example, the speed with which life arose on our planet gives hints about the ease of life formation; Stunt-Bayesians love that kind of thing (Spiegel and Turner 2012).
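A toy version of the Stunt-Bayesian argument, offered as a sketch and not a reproduction of Spiegel and Turner’s model (their analysis is considerably more careful): treat abiogenesis as a Poisson process with unknown rate, put a log-uniform prior on the rate, and update on the observation that life appeared soon after Earth became habitable. The window lengths `DELTA_T` and `T_MAX` and the prior range are hypothetical round numbers. The second likelihood adds an observer-selection effect: we could only be here asking the question if life started early enough for observers to evolve.

```python
import math

DELTA_T = 0.5  # Gyr; hypothetical: life appeared within ~0.5 Gyr of habitability
T_MAX = 1.0    # Gyr; hypothetical: latest start that still leaves time for observers

# Log-spaced grid over the abiogenesis rate lam (events per Gyr), 1e-3 to 1e3.
# Giving every grid point equal weight is a log-uniform prior on lam.
GRID = [10 ** (k / 100) for k in range(-300, 301)]


def p_by(lam, t):
    """Probability that a Poisson process with rate lam has fired by time t."""
    return 1.0 - math.exp(-lam * t)


def naive_likelihood(lam):
    return p_by(lam, DELTA_T)


def observer_conditioned_likelihood(lam):
    # We can only ask the question if life started before T_MAX, so condition on it.
    return p_by(lam, DELTA_T) / p_by(lam, T_MAX)


def posterior_prob_common(likelihood, threshold=1.0):
    """Posterior P(lam > threshold) under the log-uniform grid prior."""
    weights = [likelihood(lam) for lam in GRID]
    above = sum(w for lam, w in zip(GRID, weights) if lam > threshold)
    return above / sum(weights)


prior_prob = sum(lam > 1.0 for lam in GRID) / len(GRID)
print(round(prior_prob, 3),
      round(posterior_prob_common(naive_likelihood), 3),
      round(posterior_prob_common(observer_conditioned_likelihood), 3))
```

Under these assumptions the naive update moves P(rate > 1 per Gyr) from about 0.5 to above 0.9, while the observer-conditioned one moves it only to around 0.65. How much the early start tells us depends heavily on modelling choices like these.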

More practically, let us consider what Nassim Taleb has usefully branded with a metaphor about black swans.

7 Planning under uncertainty

TBC. Nassim Taleb has a whole career based on handling heavy-tailed risk and managing out-of-sample downsides (Taleb 2007, 2020). This subsection needs a better name and a notebook of its own. Contrariness dictates I will not use Taleb’s terms.
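Pending that notebook, here is a small sketch of one out-of-sample problem with heavy tails: how often does next period’s worst loss more than double the worst loss on record? It compares a Pareto distribution with tail index 1.1 against a half-normal. The helper names `worst_loss` and `prob_record_doubled`, the distributions, tail index, sample size, and trial count are all hypothetical illustrative choices; `random.paretovariate` is the Python standard-library Pareto sampler (minimum value 1).

```python
import random


def worst_loss(rng, draw, n):
    """Largest of n simulated losses in one period."""
    return max(draw(rng) for _ in range(n))


def prob_record_doubled(draw, n=100, trials=2_000, seed=0):
    """Estimate P(next period's worst loss > 2 x the worst loss seen so far)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        seen = worst_loss(rng, draw, n)
        unseen = worst_loss(rng, draw, n)
        hits += unseen > 2 * seen
    return hits / trials


def heavy_tailed(rng):
    return rng.paretovariate(1.1)    # Pareto with tail index 1.1, minimum 1


def thin_tailed(rng):
    return abs(rng.gauss(0.0, 1.0))  # half-normal


print(prob_record_doubled(thin_tailed), prob_record_doubled(heavy_tailed))
```

For the Pareto case the answer should come out near 1 / (1 + 2^1.1) ≈ 0.32: the maximum of many Pareto draws is approximately Fréchet-distributed, and the ratio of two independent such maxima does not depend on the sample size. For the half-normal it is essentially zero. A worst-on-record figure is a poor guide to out-of-sample downside precisely when tails are heavy.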