Validating and reproducing science

“Scientist, falsify thyself”. Peer review, academic incentives, credentials, evidence and funding

2020-05-16 — 2025-05-22

Upon the thing that I presume academic publishing is supposed to do: further science. Reputation systems, collective decision making, groupthink management and other mechanisms for trustworthy science, a.k.a. our collective knowledge of reality itself.

I want to consider the system of peer review, networking, conferencing, publishing and acclaim and see how closely it matches an ideal system for uncovering truth. Further, I want to imagine how we could make a better system. But I won’t do that right now; I’ll just collect some provocative links on that theme, hoping for time for more thought later.

1 Some open review processes

pubpeer (who are behind peeriodicals) produces a peer-review overlay for web browsers to spread their commentary and peer critique more widely. The site concept is itself brusquely confusing, but well blogged; you’ll get the idea. They are not afraid of invective, and I thought they looked more amateurish than effective. But I have revised that opinion; they are quite selective and effective. This system has been implicated in topical high-profile retractions (e.g. 1 2).

There are many others that have less institutional backing; e.g. Uri Simonsohn.

- Related question: How do we discover research to peer review?

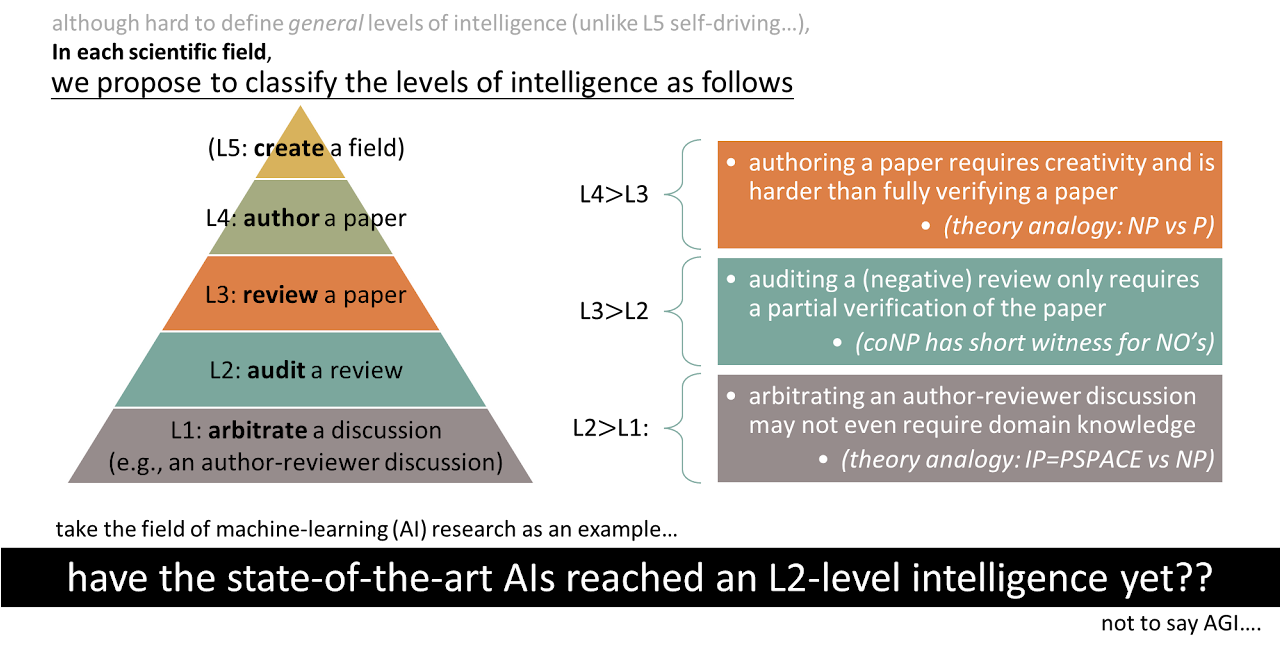

2 Mathematical models of the reviewing process

e.g. Cole, Jr, and Simon (1981); Lindsey (1988); Ragone et al. (2013); Nihar B. Shah et al. (2016); Whitehurst (1984).

The experimental data from the NeurIPS experiments might be useful: see e.g. Nihar B. Shah et al. (2016) or a blog post on the 2014 experiment (1, 2).
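To get a feel for what such models are trying to capture, here is a toy sketch, not the model from any of the papers above. It assumes each paper has a latent quality, that two independent committees each see that quality plus Gaussian noise, and that each accepts its top-scoring fraction. The function name, the parameters and the noise model are all hypothetical choices for illustration.

```python
import random


def simulate_two_committees(n_papers=1000, accept_rate=0.25, noise_sd=1.0, seed=0):
    """Return the fraction of committee A's accepts that committee B rejects.

    Toy assumptions: latent quality ~ N(0, 1); each committee scores a paper
    as quality + independent N(0, noise_sd) noise and accepts the top slice.
    """
    rng = random.Random(seed)
    quality = [rng.gauss(0.0, 1.0) for _ in range(n_papers)]
    n_accept = int(n_papers * accept_rate)

    def accepted_by_committee():
        # Each call draws fresh review noise, i.e. a fresh committee.
        scores = [q + rng.gauss(0.0, noise_sd) for q in quality]
        ranked = sorted(range(n_papers), key=lambda i: scores[i], reverse=True)
        return set(ranked[:n_accept])

    committee_a = accepted_by_committee()
    committee_b = accepted_by_committee()
    return 1.0 - len(committee_a & committee_b) / n_accept


if __name__ == "__main__":
    for sd in (0.0, 0.5, 1.0, 2.0):
        print(f"noise_sd={sd}: disagreement={simulate_two_committees(noise_sd=sd):.3f}")
```

With zero noise the two committees agree exactly; as the noise grows relative to the spread in quality, more of one committee's accepts would be rejected by the other. Whether real reviewing looks anything like additive Gaussian noise is precisely the kind of question the experimental data could speak to.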

3 Economics of publishing

See academic publishing.

4 Assignment for peer review process

There is some fun mechanism design and algorithmic work involved in peer review, e.g.

- Charlin and Zemel (2013)

- Gasparyan et al. (2015)

- Jan (2018)

- Merrifield and Saari (2009)

- Solomon (2007)

- Xiao, Dörfler, and van der Schaar (2014)

- Y. Xu, Zhao, and Shi (2017)

- Experiments with the ICML 2020 Peer-Review Process

- NeurIPS 2024 Experiment on Improving the Paper-Reviewer Assignment. The literature review in this post is also good (Budish et al. 2009; Charlin, Zemel, and Boutilier 2011; Charlin and Zemel 2013; Flach et al. 2010; Goldsmith and Sloan 2007; Jecmen et al. 2020, 2022; Littman 2021; Liu, Suel, and Memon 2014; Mimno and McCallum 2007; Rodriguez and Bollen 2008; Nihar B. Shah 2022; Stelmakh, Shah, and Singh 2021; Tang, Tang, and Tan 2010; Taylor 2008; Tran, Cabanac, and Hubert 2017; Vijaykumar 2020; Y. E. Xu et al. 2024).

- Matthew Feeney, Markets in fact-checking
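The core of the assignment problem can be sketched in a few lines. This is a minimal toy, assuming one reviewer per paper, at most one paper per reviewer, and a made-up integer affinity table; it is not the method of any of the works listed above, and brute force only scales to a handful of papers. `best_assignment` and `AFFINITY` are hypothetical names.

```python
from itertools import permutations

# Hypothetical affinity scores: AFFINITY[p][r] is how well reviewer r suits paper p.
AFFINITY = [
    [9, 8, 1],
    [9, 2, 3],
    [4, 7, 6],
]


def best_assignment(affinity):
    """Exhaustively find the one-to-one assignment maximising total affinity.

    Returns (total, assignment) where assignment[p] is the reviewer for paper p.
    Assumes there are at least as many reviewers as papers.
    """
    n_papers = len(affinity)
    n_reviewers = len(affinity[0])
    best_total, best = float("-inf"), None
    # Try every way of giving distinct reviewers to the papers.
    for reviewers in permutations(range(n_reviewers), n_papers):
        total = sum(affinity[p][r] for p, r in enumerate(reviewers))
        if total > best_total:
            best_total, best = total, reviewers
    return best_total, list(best)


if __name__ == "__main__":
    print(best_assignment(AFFINITY))
```

In this table, giving paper 0 its favourite reviewer (reviewer 0) leaves paper 1 with a poor match, so the best total comes from giving paper 0 its second choice. That tension between local and global fit is one reason the problem attracts mechanism-design attention; realistic versions add multiple reviewers per paper, load limits, conflicts of interest and strategic behaviour.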

5 Gatekeeping

Almost by definition we will be dissatisfied with how much gatekeeping academia does; we all have skin in this game.

Baldwin (2018):

This essay traces the history of refereeing at specialist scientific journals and at funding bodies and shows that it was only in the late twentieth century that peer review came to be seen as a process central to scientific practice. Throughout the nineteenth century and into much of the twentieth, external referee reports were considered an optional part of journal editing or grant making. The idea that refereeing is a requirement for scientific legitimacy seems to have arisen first in the Cold War United States. In the 1970s, in the wake of a series of attacks on scientific funding, American scientists faced a dilemma: there was increasing pressure for science to be accountable to those who funded it, but scientists wanted to ensure their continuing influence over funding decisions. Scientists and their supporters cast expert refereeing—or “peer review,” as it was increasingly called—as the crucial process that ensured the credibility of science as a whole. Taking funding decisions out of expert hands, they argued, would be a corruption of science itself. This public elevation of peer review both reinforced and spread the belief that only peer-reviewed science was scientifically legitimate.

Thomas Basbøll says

It is commonplace today to talk about “knowledge production” and the university as a site of innovation. But the institution was never designed to “produce” something nor even to be especially innovative. Its function was to conserve what we know. It just happens to be in the nature of knowledge that it cannot be conserved if it does not grow.

Andrew Marzoni, Academia is a cult.

Adam Becker on the assumptions and pathologies revealed by Wolfram’s latest branding and positioning:

So why did Wolfram announce his ideas this way? Why not go the traditional route? “I don’t really believe in anonymous peer review,” he says. “I think it’s corrupt. It’s all a giant story of somewhat corrupt gaming, I would say. I think it’s sort of inevitable that happens with these very large systems. It’s a pity.”

So what are Wolfram’s goals? He says he wants the attention and feedback of the physics community. But his unconventional approach—soliciting public comments on an exceedingly long paper—almost ensures it shall remain obscure. Wolfram says he wants physicists’ respect. The ones consulted for this story said gaining it would require him to recognise and engage with the prior work of others in the scientific community.

And when provided with some of the responses from other physicists regarding his work, Wolfram is singularly unenthused. “I’m disappointed by the naivete of the questions that you’re communicating,” he grumbles. “I deserve better.”

An interesting edge case in peer review and scientific reputation: Adam Becker, Junk Science or the Real Thing? ‘Inference’ Publishes Both. As far as I’m concerned, publishing crap in itself is not catastrophic. A process that fails to discourage crap by eventually identifying it would be bad.

6 Style guide for reviews and rebuttals

See scientific writing.

7 Reformers

8 Grants

TBD

9 Publication bias

Multiple testing across a whole scientific field, with a side helping of biased data release and terrible incentives.

On one hand, we hope that journals will help us find things that are relevant. On the other hand, we hope the things they help us find are actually true. It’s not at all obvious how to solve these kinds of classification problems economically, but we kind of hope that peer review does it.

To read: My likelihood depends on your frequency properties.

Keywords: the “file-drawer process” and the “publication sieve”, which are large-scale models of how this works across a scientific community, and “researcher degrees of freedom”, which is the model for how this works at the scale of the individual researcher.
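A minimal simulation makes the file-drawer mechanism concrete. It is a sketch under loud assumptions: every study estimates the same true effect (zero by default) from unit-variance Gaussian data, and a study is "published" only if a two-sided z-test with known variance rejects at roughly the 5% level. The function name and all parameters are hypothetical.

```python
import random
import statistics


def simulate_file_drawer(n_studies=2000, n_per_study=20, true_effect=0.0,
                         z_crit=1.96, seed=0):
    """Return (all_estimates, published_estimates) for a field of studies.

    Toy assumption: a study is published only when |estimate| / se > z_crit,
    where se is the known standard error of the sample mean.
    """
    rng = random.Random(seed)
    se = 1.0 / n_per_study ** 0.5  # unit-variance data, known variance
    all_estimates, published = [], []
    for _ in range(n_studies):
        sample = [rng.gauss(true_effect, 1.0) for _ in range(n_per_study)]
        estimate = statistics.fmean(sample)
        all_estimates.append(estimate)
        if abs(estimate) / se > z_crit:
            published.append(estimate)  # everything else stays in the drawer
    return all_estimates, published


if __name__ == "__main__":
    everything, published = simulate_file_drawer()
    print("share published:", len(published) / len(everything))
    print("mean |effect|, all studies:", statistics.fmean(abs(e) for e in everything))
    print("mean |effect|, published:  ", statistics.fmean(abs(e) for e in published))
```

Even though the true effect is zero, the published record in this toy consists entirely of apparent effects larger than the significance threshold. The sieve alone manufactures them, before any researcher degrees of freedom come into play.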

This is particularly pertinent in social psychology, where it turns out there is too much bullshit with

Sanjay Srivastava, Everything is fucked, the syllabus.

11 On the easier problem of local theories

On the other hand, we can all agree that finding small-effect universal laws in messy domains like human society is a hard problem. In machine learning, we frequently give up on that and just try to solve a local problem — does this work in this domain with enough certainty to help this problem? Then we still need to solve a problem about domain adaptation when we try to work out if we are still working on this problem, or at least one similar enough to this. But that feels like it might be easier by virtue of being less ambitious.

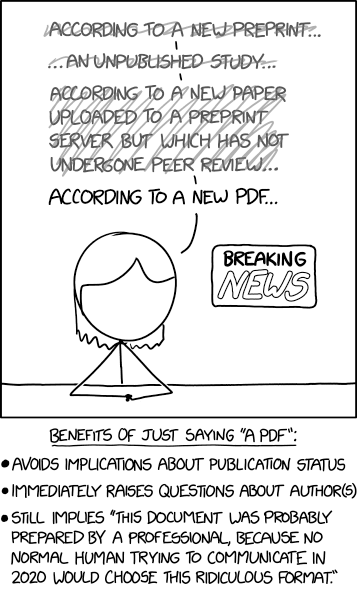

12 Incoming

Saloni Dattani, Real peer review has never been tried

Matt Clancy, What does peer review know?

Adam Mastroianni, The rise and fall of peer review

Étienne Fortier-Dubois, Why Is ‘Nature’ Prestigious?

Science and the Dumpster Fire | Elements of Evolutionary Anthropology

F1000Research | Open Access Publishing Platform | Beyond a Research Journal

F1000Research is an Open Research publishing platform for scientists, scholars and clinicians offering rapid publication of articles and other research outputs without editorial bias. All articles benefit from transparent peer review and editorial guidance on making all source data openly available.

Reviewing is a Contract - Rieck on the social expectations of reviewing and chairing.

Jocelynn Pearl proposes some fun ideas, including blockchainy ones, in Time for a Change: How Scientific Publishing is Changing For The Better.

Andrew Gelman in conversation with Noah Smith

Anyway, one other thing I wanted to get your thoughts on was the publication system and the quality of published research. The replication crisis and other skeptical reviews of empirical work have got lots of people thinking about ways to systematically improve the quality of what gets published in journals. Apart from things you’ve already mentioned, do you have any suggestions for doing that?

I wrote about some potential solutions in pages 19–21 of Gelman (2018) from a few years ago. But it’s hard to give more than my personal impression. As statisticians or methodologists we rake people over the coals for jumping to causal conclusions based on uncontrolled data, but when it comes to science reform, we’re all too quick to say, Do this or Do that. Fair enough: policy exists already and we shouldn’t wait on definitive evidence before moving forward to reform science publication, any more than journals waited on such evidence before growing to become what they are today. But we should just be aware of the role of theory and assumptions in making such recommendations. Eric Loken and I made this point several years ago in the context of statistics teaching (Gelman and Loken 2012), and Berna Devezer et al. published an article (Devezer et al. 2020) last year critically examining some of the assumptions that have at times been taken for granted in science reform. When talking about reform, there are so many useful directions to go, I don’t know where to start. There’s post-publication review (which, among other things, should be much more efficient than the current system for reasons discussed here), there are all sorts of things having to do with incentives and norms (for example, I’ve argued that one reason that scientists act so defensive when their work is criticised is because of how they’re trained to react to referee reports in the journal review process), and various ideas adapted to specific fields. One idea I saw recently that I liked was from the psychology researcher Gerd Gigerenzer, who wrote that we should consider stimuli in an experiment as being a sample from a population rather than thinking of them as fixed rules (Gigerenzer n.d.), which is an interesting idea in part because of its connection to issues of external validity or out-of-sample generalisation that are so important when trying to make statements about the outside world. 
The Black Spatula Project - by Steve Newman

A 10 page paper caused a panic because of a math error. I was curious if AI would spot the error by just prompting: “carefully check the math in this paper” especially as the info is not in training data.

o1 gets it in a single shot. Should AI checks be standard in science?

Repo here: nick-gibb/black-spatula-project: Verifying scientific papers using LLMs

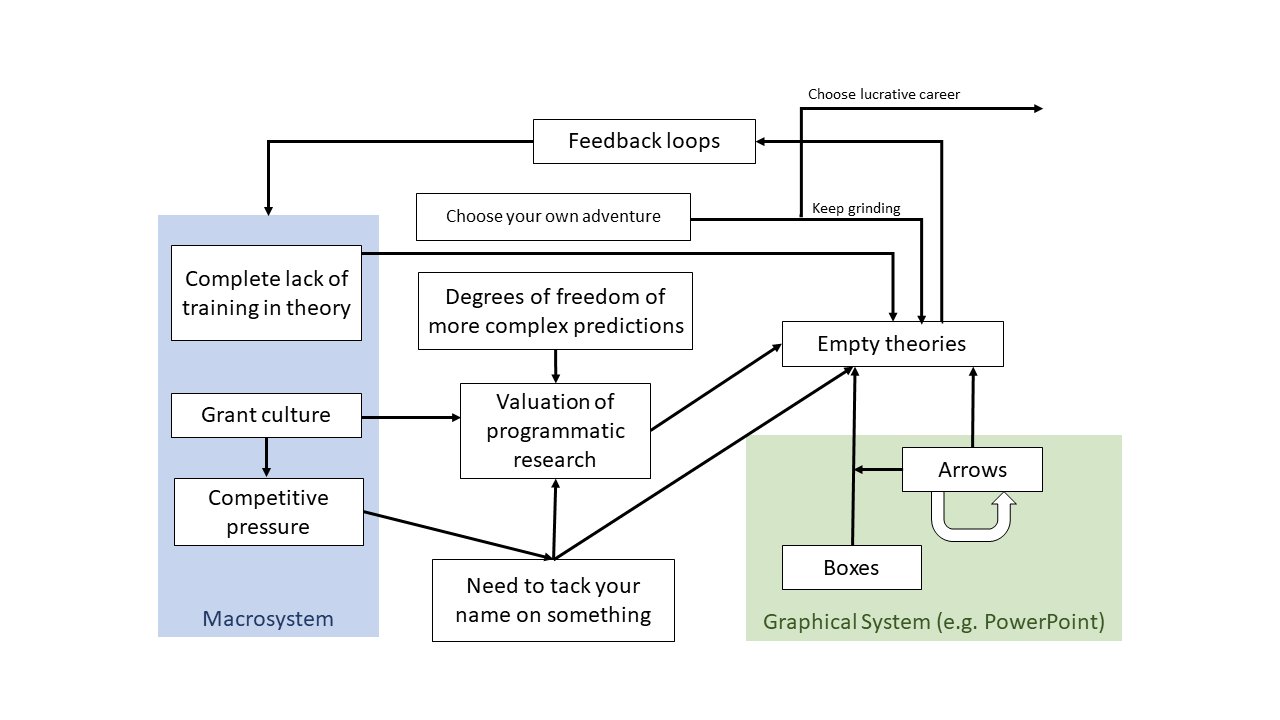

Smaldino (2020), on interdisciplinarity:

Why do bad methods persist in some academic disciplines, even when they have been clearly rejected in others? What factors allow good methodological advances to spread across disciplines? In this paper, we investigate some key features determining the success and failure of methodological spread between the sciences. We introduce a formal model that considers factors like methodological competence and reviewer bias towards one’s own methods. We show how self-preferential biases can protect poor methodology within scientific communities, and lack of reviewer competence can contribute to failures to adopt better methods. We then use a second model to further argue that input from outside disciplines, especially in the form of peer review and other credit assignment mechanisms, can help break down barriers to methodological improvement. This work therefore presents an underappreciated benefit of interdisciplinarity.

when does a certain practice–e.g., a study design, a way to collect data, a particular statistical approach–“succeed” and start to dominate journals?

It must be capable of surviving a multi-stage selection procedure:

- Implementation must be sufficiently affordable so that researchers can actually give it a shot

- Once the authors have added it to a manuscript, it must be retained until submission

- The resulting manuscript must enter the peer-review process and survive it (without the implementation of the practice getting dropped on the way)

- The resulting publication needs to attract enough attention post-publication so that readers will feel inspired to implement it themselves, fueling the eternally turning wheel of ~~Samsara~~ publication-oriented science