Content warning:

Discussion of an open community that specifically aims to engage with arguments despite their edginess. You are only ever one click away from dialogues with people holding uncomfortable opinions on objectionable topics.

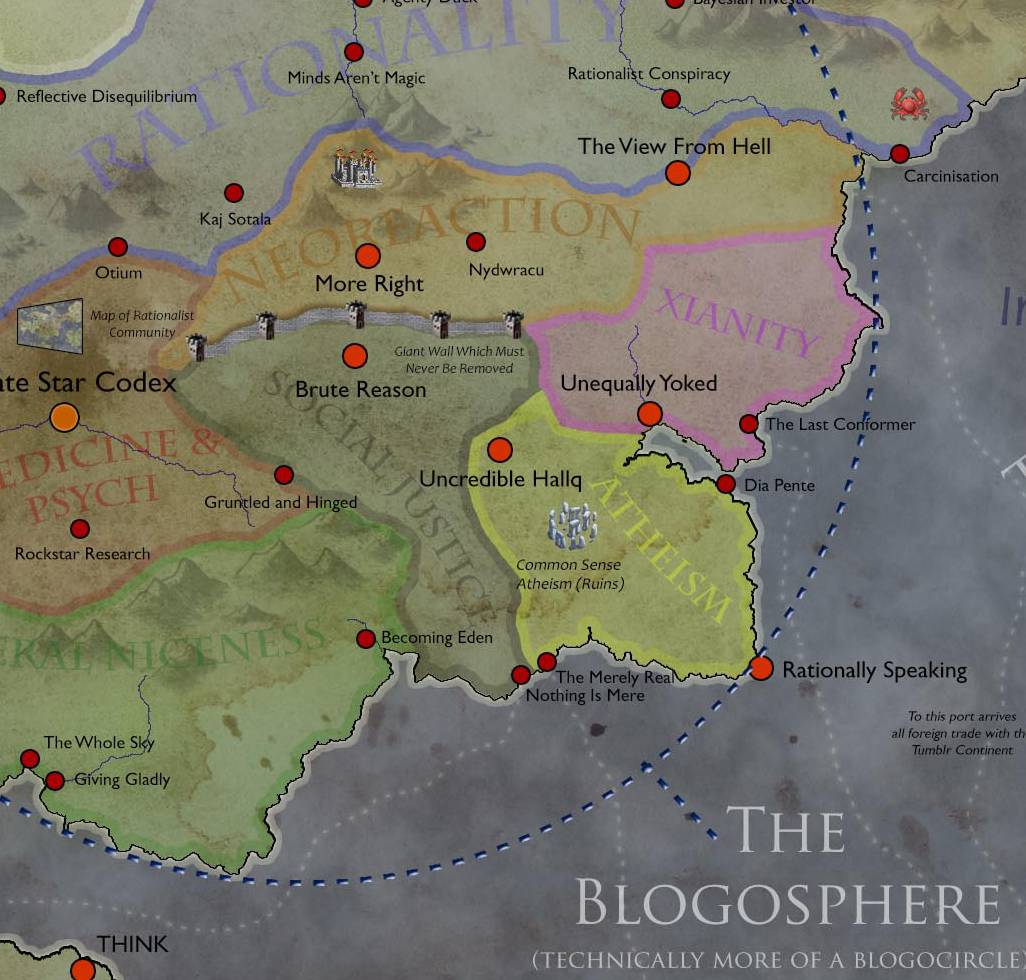

What and who are the capital-R, internet-era Rationalists?

I know about this, as I have been hanging out with rationalists quite a lot. I do not consider myself part of the movement¹ as such. And yet, I find myself agreeing with about 40% of rationalist doctrine, which is the righteous level of agreement according to the embossed gold plates communicated to Eliezer Yudkowsky.

I jest, I jest. The core of rationalist doctrine is small, but the body of literature is vast, full of thought experiments and wildly branching philosophical positions. It’s more a community of practice that you can engage with, than a thing that you can agree with en masse.

I think the core is mostly about certain styles of discourse and experiments in how we might be better truth-seekers together. I find it relaxing to be around. Typical rationalists are excellent to disagree with, at least after they have had a bit of practice.

Why might I make this claim? Who are these people? Let us attempt to define the rationalists a little better.

2 …as a community of practice

There are some interesting things going on here, but I am probably not the one to write the social history of the rationalists.

- Zvi Mowshowitz, What Is Rationalist Berkeley’s Community Culture?

- Sarah Constantin, The Craft is Not The Community

It would also be interesting to investigate the fall of the original lesswrong.org, and the role of neoreactionaries in that process, and the geographically local groups (Berkeley, New York, rest of the world…).

Jacob Falkovich claims that rationalism is a skillset or discipline that makes its practitioners different. He can also turn a phrase, so I will let him explain what rationalism as a discipline might be:

Michael Vassar says that what Rationalists call “thinking” is treated by most people as a rare technical ability (“design thinking”) that normal people can only pretend to do. What they call “thinking” we call “being depressed and anxious”. This sounded crazy when I first heard it, but the more I mulled it over the more it made sense and explained much of what has been happening in the last year.

Social reality is what is normal, accepted, cool, predictable, expected, rewarded, agreed upon. Physical reality is what is out there determining the outcomes of physical experiments, such as whether you get COVID or not if you wear a mask. When Rationalists say “thinking” they usually mean something like “using your effortful system 2 to determine something about physical reality”. It’s what I try to do when writing posts about COVID. Swimming in social reality is best done on feeling and intuition, not “thought”.

My experience is spending perhaps 97% of my time in social reality, swimming along with everyone else. 3% of the time I notice some confusion, an unexpected mismatch between my predictions and what physical reality hits me with, and try to think through a solution. 3% is enough to notice the difference between the two modes and to be able to switch between them on purpose.

I don’t think that this experience is typical.

With Vassar in mind, my best guess of the typical experience is being in social reality 99.9% of the time. The 0.1% are extreme shocks, cases when physical reality kicks someone so far off-script they are forced to confront it directly. These experiences are extremely unpleasant, and processing them appears as “depression and anxiety”. One looks at the first opportunity to dive back into the safety of social reality, in the form of a communal narrative that “makes sense” of what happened and suggests an appropriate course of action.

I am not actually sold on this idea. Falkovich also argues, in Is Rationalist Self-Improvement Real?, for the effectiveness of trying to be more rational (in more areas than theory of mind). 🚧TODO🚧 clarify

In The Scout Mindset, Julia Galef makes the case that certain habits of mind can assist rationality, and her version seems less crazy.

Related:

2.1 Effectiveness

Chinese Businessmen: Superstition Doesn’t Count

…there are two commonly understood forms of rationality, and LessWrong is mostly concerned with only one of them. The two forms are:

- Epistemic rationality — how do you know that your beliefs are true?

- Instrumental rationality — how do you make better decisions to achieve your goals?

Jonathan Baron calls the first form—epistemic rationality — “thinking about beliefs”. He calls the second “thinking about decisions”.

LessWrong has concentrated most of its efforts on epistemic rationality. The vast majority of writing on the site focuses its attention on common cognitive biases and failures of human thinking, and discusses methods for overcoming them. In other words, LessWrong’s community of rationality practitioners desire the ability to hold accurate and true beliefs about the world, and believe that doing so will enable them to achieve success in their lives and in pursuit of their goals.

… My current bet, however, is that this simply can’t be true. LessWrong’s decade of existence, and my experience with traditional Chinese businessmen, suggests to me that instrumental rationality is the thing that dominates when it comes to success in business and life. It suggests to me that if you’re instrumentally rational, you don’t need to optimise for correct and true beliefs to succeed. You merely need a small set of true beliefs, related to your field; these beliefs can be determined from trial and error itself.

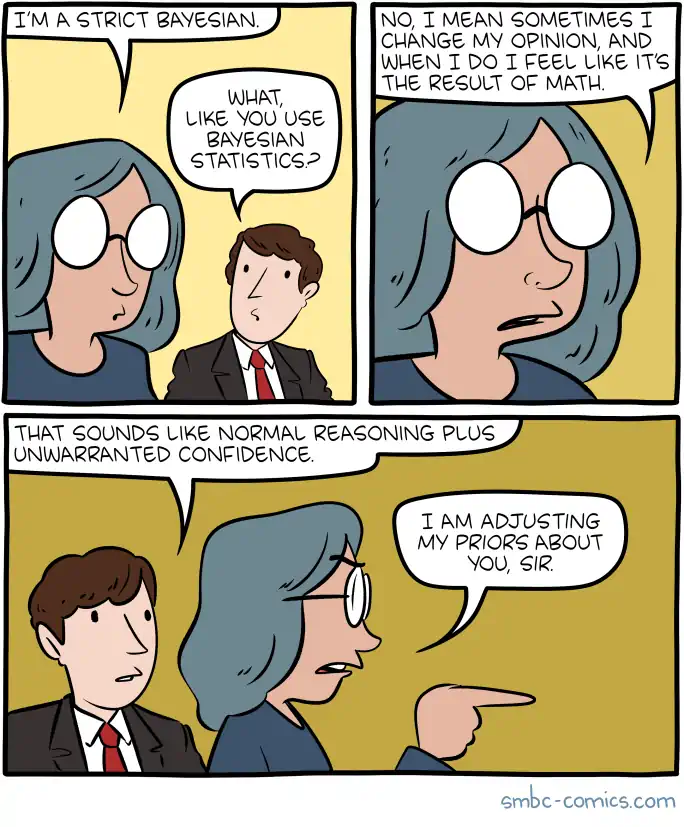

2.2 Casual use of Bayesian terminology

This used to annoy me. It has grown on me, if the goal is to make explicit the idea of considering multiple hypotheses and acting under uncertainty. If people mean to imply that they are actually performing Bayesian inference, however, I am sceptical of most uses of this terminology. Conducting Bayesian inference takes more work than invoking its vocabulary, and even doing Bayesian inference is not enough in an open world. I have my eye on you, aspiring rationalists, and am wearing a language-police badge.
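For contrast with the loose usage, here is a minimal sketch of the literal operation in its simplest setting: a small, discrete set of hypotheses, each with a prior probability, updated on one observation by multiplying each prior by the likelihood of the evidence and renormalising. The coin hypotheses and all the numbers are hypothetical, chosen only for illustration; real inference usually involves continuous parameters, model criticism and much else besides.

```python
from typing import Dict


def bayes_update(prior: Dict[str, float], likelihood: Dict[str, float]) -> Dict[str, float]:
    """Return the posterior over hypotheses: prior times likelihood, renormalised.

    prior: P(h) for each hypothesis h (assumed to sum to 1).
    likelihood: P(evidence | h) for each hypothesis h.
    """
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    # P(evidence), the normalising constant, is the sum over all hypotheses.
    evidence = sum(unnormalised.values())
    if evidence == 0:
        raise ValueError("the evidence has zero probability under every hypothesis")
    return {h: p / evidence for h, p in unnormalised.items()}


# Hypothetical example: is a coin fair, or biased towards heads?
prior = {"fair": 0.9, "biased": 0.1}
# Assumed probability of observing a single head under each hypothesis.
likelihood_heads = {"fair": 0.5, "biased": 0.9}

posterior = bayes_update(prior, likelihood_heads)
print(posterior)  # fair ≈ 0.833, biased ≈ 0.167
```

Feeding the posterior back in as the next prior and updating on each new head in turn gives the same answer as one update on all the heads at once, provided the observations are conditionally independent given the hypothesis.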

3 In the epistemic ecosystem

The natural predator of the rationalist is the conflict theorist, who downplays the capacity of human reason to find agreement through cultivated, reasoned disagreement, and emphasises instead the struggle for dominance.

Of these, the Anti-TESCREALists take rationalists as their preferred prey.

4 …as a cult

In form, the rationalist path to reason has definite parallels with the induction process into a cult. The content is quite different from that of your typical cult, but communities focused on changing themselves by unconventional means to think unconventional things do indeed use the same tools as cults.

Notionally the inner truths one unlocks in rationalism are distinguished from religious truths in certain ways:

- They typically seem more empirically informed than, e.g., Scientology

- Less obviously intended to turn us into foot soldiers in a charismatic cult leader’s army

OTOH, there is a sacred text and much commentary by the learned. This community is all about knowingly sticking levers in the weird crevices of the human mind, including the religious ones. Is that bad? Or is any sufficiently advanced community indistinguishable from a cult?

I think they are aware of the dangers of normalising radical questioning.

And the movement does seem to spawn actual cults:

- The Zizians.

- The Zizians and the Rationalist death cults

- Jessica Taylor, My experience at and around MIRI and CFAR (Follow-up)

- Zoe Curzi, My Experience with Leverage Research

I’d be curious to know whether it spawns cults above the base rate for its demographic, and/or for its salience as an open internet community.

Also, Lives Of The Rationalist Saints amused me greatly.

5 …in the internet of dunks

Exactly like every other community online, the rationalist community labours under the burden of being judged by the self-selected, most attention-grabbingly grating of the people who claim to be part of it. For all the vaunted claims that the rationalists have fostered healthy norms of robust intellectual debate and so on, their comments are a mess just like everyone else’s. This is empirically verifiable. The degree of mess might be different, but the shining perfection that the internet expects from outgroups it is not.

It is a recommended experience to try to contribute to the discussion in the comment threads dangling from, say, some Scott Alexander article. One with the typical halo of erudition, one that hits all the right notes of making you feel smart because you had that “a-HA” feeling and nodded along to it. “Oh!” you might think to yourself, “this intellectual ship would be propelled further out into the oceans of truth if I stuck my oar in, and other people who are, as I, elevated enough to read this blog, they will see my cleverness in turn, and we will together row ourselves onward, like brains-vikings in an intellectual longboat.”

You might think that, possibly with a better metaphor if you are a superior person. But I’ll lay you odds of ~~4:1~~ 5:2 against anything fun happening when you put this to experimental test. More likely, upon sticking your oar in, you will find yourself in the usual lightly moderated internet dogpile of people straw-manning and talking past each other in their haste to enact the appearance of healthy norms of thoughtful, robust debate, mostly without the more onerous labour of actually doing thoughtful, robust debate. “Mostly” by which metric? By word count, for sure: facile and vacuous verbiage predominates. By head-count, maybe the situation is less grim. The thing is, producing thoughtless twaddle is cheaper and easier per word than finely honed reasoning, and the typically hardline open-door comment policy requires that twaddle be given the benefit of the doubt.

Bitter corollary: Odds are not favourable that you are qualified to assess whether your own comment was twaddle.

Make no mistake, I think some useful and interesting debates have come from card-carrying rationalists. Even, occasionally, from the comment threads, if you have time to surface the good ones from among all the facile value-signalling and people claiming other people’s value systems are religions. I doubt that the bloggers who host these blogs would argue otherwise, or even find it surprising, but it still seems to startle neophytes and journalists. The modest odds of a good debate here are likely still better than the extremely tiny baseline odds elsewhere on the internet.

Occasionally I feel that rationalists set themselves a difficult task here. The preponderance of reader surveys and comment engagement suggests that rationalists are prone to regarding anyone who shows up and fills out a survey as a community member. This leads to a classic online social movement problem, which can be illustrated by lightly editing Ben Sixsmith’s discussion of online-community-as-religion:

Participation in online communities requires far less personal commitment than those of real life. And commitment has often cloaked hypocrisy. Men could play the role of ~~God-fearing family men~~ rationalist in public, for example, while ~~cheating on their wives and abusing their kids~~ failing to participate in prediction markets. Being a respectable member of their community depended, to a great extent, on being a ~~family~~ rational man, but being a respectable member of online ~~right-wing~~ rationalist communities depends only on endorsing the concept.

Long story short, the rationalist corner of the internet is still full of social climbing, facile virtue signalling, trolling and general foolishness. If we insist on judging communities en masse, though (and we do), the bar is presumably not whether they are all angels, or every community would be damned. We presumably care whether they do detectably better than the (toxic, atavistic) baseline. Perhaps this particular experiment attains the very best humans could. Perhaps this shambling nerdy quagmire has proportionally the highest admixture of quality thought possible from an open, self-policing community. Let’s find out, by some kind of RCT; it’s the best possible community to recruit for such a study.

5.1 Slate Star Codex kerfuffle

A concrete example with actual names and events is illustrative. Here are some warm takes on a high-profile episode that everyone has now forgotten, but that seemed like a big deal at the height of the culture war.

Cade Metz in the New York Times, Why Slate Star Codex is Silicon Valley’s safe space, is the original article that sparked the kerfuffle.

Elizabeth Spiers, Slate Star Clusterfuck attempts to put this in the NYT-versus-Silicon Valley perspective. This is the main thing to read IMO.

Tanner Greer produced a round-up, The Framers and the Framed: Notes On the Slate Star Codex Controversy, which I remember being quite good, although it has now fallen off the internet.

Gideon Lewis-Kraus, Slate Star Codex and Silicon Valley’s War Against the Media

Scott Aaronson, grand anticlimax: the New York Times on Scott Alexander

There is some invective on the theme of renewing journalism more broadly:

- Everything old is new again in the mainstream media

- Matt Yglesias, In defence of interesting writing on controversial topics

The actual story IMO, if we ignore the particulars of the effects upon these protagonists for a moment, is that the internet of dunks grates against the internet of debate, if the latter exists anywhere but in our imaginations.

That said, N-Gate’s burn was funny to me.

just because the comments section of some asshole’s blog happens to be a place where technolibertarians cross-pollinate with white supremacists, says Hackernews, doesn’t mean it’s fair to focus on that instead of on how smart that blog’s readership has convinced itself it is. So smart, in fact, that to criticise them at all is tantamount to an admission that you’re up to something. This sort of censorship, concludes Hackernews, should never have been allowed to be published.

The latest iteration of people hating on rationalists is implying that rationalists are the shock troops of “TESCREALism”.

6 Rationalist dialect

- effective altruism
    - Marginalist, data-informed charity
- update my priors
    - Ambiguous. Could mean
        - I believe with high certainty that I am a strong Bayesian, or
        - I am prepared to update my estimate of the plausibility of THIS argument in the light of new evidence, without holding to spurious certainty, or
        - I am solving a problem in conditional probability and I just multiplied the prior distribution by the likelihood of the evidence and renormalized (rarely this one).
- crux
    - A crux is a crucial precondition for a belief you hold. See Double-cruxing. Stating them is regarded as a good-faith rhetorical strategy.
- empathies
    - There is a complicated vocabulary for compassion, sympathy and empathy to imply that you are being careful about it.
- I could be wrong. If you think so, tell me. I would like to believe true things
    - I kinda like this disclaimer, variants of which appear on various blogs. It is a kind of compressed rationalist manifesto that some people are trialling as a way to start the discussion on a good footing. I wonder how effective it is. It is probably more effective than claiming to be prepared to ‘update my priors’.
- wamb
    - The opposite of a nerd
- cheems mindset
    - cheems mindset: The mindset that leaves you scrambling for reasons why something just can’t be done (as opposed to the Improving mentality.)

7 Demography of the rationalists

Extremely interesting, to me at least. 🚧TODO🚧 clarify

8 Literature

Especially fan fiction. Oh yes, that is a thing. Backstory here or here.

Harry Potter and the Methods of Rationality Chapter 1: A Day of Very Low Probability, a Harry Potter fanfic (mirror)

UNSONG, by Scott Alexander, is an online opus about kabbalah as computer science.

9 Rationalists in Australia

10 Post-rationalism

11 Incoming

12 References

Footnotes

¹ I only join communities with meta- or post- as name prefixes.